How Cato Uses Large Language Models to Improve Data Loss Prevention

Cato Networks has recently released a new data loss prevention (DLP) capability, enabling customers to detect and block documents being transferred over the network, based on sensitive categories, such as tax forms, financial transactions, patent filings, medical records, job applications, and more. Many modern DLP solutions rely heavily on pattern-based matching to detect sensitive information. However, they don’t enable full control over sensitive data loss. Take, for example, a legal document such as an NDA. It may contain certain patterns that a legacy DLP engine could detect, but what likely concerns the company’s DLP policy is the actual content of the document and any sensitive information contained in it.

Unfortunately, pattern-based methods fall short when trying to detect the document category. Many sensitive documents don’t have specific keywords or patterns that distinguish them from others, and therefore, require full-text analysis. In this case, the best approach is to apply data-driven methods and tools from the domain of natural language processing (NLP), specifically, large language models (LLM).

LLMs for Document Similarity

LLMs are artificial neural networks that were trained on massive amounts of text, commonly crawled from the web, to model natural language. In recent years, we’ve seen far-reaching advancements in their application to our modern-day lives and business use cases. These applications include language translation, chatbots (e.g., ChatGPT), text summarization, and more.

In the context of document classification, we can use a specialized LLM to analyze large amounts of text and create a compact numeric representation that captures semantic relationships and contextual information, formally known as text embeddings. An example of an LLM suited for text embeddings is Sentence-BERT. Sentence-BERT uses the well-known transformer-encoder architecture of BERT, and fine-tunes it to detect sentence similarity using a technique called contrastive learning.

In contrastive learning, the objective of the model is to learn an embedding for the text such that similar sentences are close together in the embedding space, while dissimilar sentences are far apart. This task can be achieved during the learning phase using triplet loss. In simpler terms, it involves sets of three samples:

An "anchor" (A) - a reference item

A "positive" (P) - a similar item to the anchor

A "negative" (N) - a dissimilar item.

The goal is to train a model to minimize the distance between the anchor and positive samples while maximizing the distance between the anchor and negative samples.

Contrastive Learning with triplet loss for sentence similarity.
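The triplet objective described above can be sketched in a few lines. This is a minimal illustration, not the Sentence-BERT training code: the Euclidean distance, the margin of 1.0, and the two-dimensional vectors are all assumptions chosen to keep the arithmetic easy to follow.

```python
import math


def euclidean_distance(u, v):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Zero once the negative is at least `margin` farther from the anchor
    than the positive; otherwise the size of the violation."""
    d_pos = euclidean_distance(anchor, positive)
    d_neg = euclidean_distance(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)


# Made-up 2-D "embeddings" (real embeddings have hundreds of dimensions).
anchor = [0.0, 0.0]
positive = [0.0, 1.0]   # distance 1 from the anchor
negative = [3.0, 4.0]   # distance 5 from the anchor

well_separated = triplet_loss(anchor, positive, negative)
# Swapping the roles simulates a model that places the wrong item close by.
poorly_separated = triplet_loss(anchor, negative, positive)
print(well_separated, poorly_separated)
```

A loss of zero means the triplet already satisfies the margin, so it contributes nothing to training; a positive loss pushes the model to pull the positive closer and the negative farther away.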

To illustrate the usage of Sentence-BERT for creating text embeddings, let’s take an example with 3 IRS tax forms: an empty W-9 form, a filled W-9 form, and an empty 1040 form. Feeding the LLM with the extracted and tokenized text of the documents produces 3 vectors with n numeric values, where n is the embedding size, which depends on the LLM architecture. While each document contains unique and distinguishable text, their embeddings remain similar. More formally, the cosine similarity measured between each pair of embeddings is close to the maximum value of 1.

Creating text embeddings from tax documents using Sentence-BERT.
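For readers who want to see the similarity metric itself, here is a small sketch of cosine similarity. The three vectors are made-up stand-ins, not real Sentence-BERT outputs, and the names `doc_a`, `doc_b`, and `doc_c` are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors, in [-1, 1]."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Illustrative 3-D vectors; real embeddings are much longer.
doc_a = [1.0, 2.0, 3.0]
doc_b = [2.0, 4.0, 6.0]    # same direction as doc_a, different magnitude
doc_c = [3.0, 0.0, -1.0]   # orthogonal to doc_a

similar = cosine_similarity(doc_a, doc_b)
unrelated = cosine_similarity(doc_a, doc_c)
print(similar, unrelated)
```

Because the metric depends only on direction, two documents of very different lengths can still score close to 1 when their embeddings point the same way.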

Now that we have a numeric representation of each document and a similarity metric to compare them, we can proceed to classify them. To do that, we first require a set of several labeled documents per category, which we refer to as the “support set”. Then, for each new document sample, our model infers the class label as the class with the highest similarity in the support set.

There are several methods to determine the class with the highest similarity from a support set. In our case, we will apply a variation of the k-nearest neighbors algorithm that implements the classification based on the neighbors within a fixed radius.

In the illustration below, we see a new sample document, in the vector space given by the LLM’s text embedding. There are a total of 4 documents from the support set that are located in its neighborhood, defined by a radius R.

Formally, a text embedding y from the support set will be located in the neighborhood of a new sample document’s text embedding x if

R ≥ 1 - similarity(x, y)

where similarity is the cosine similarity function. Once all the neighbors are found, we can classify the new document based on the majority class.

Classifying a new document as a tax form based on the support set documents in its neighborhood.
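The radius-based classification described above can be sketched as follows. This is a simplified illustration under stated assumptions: the support-set vectors, labels, and radius value are invented, and returning `None` when no neighbor falls inside the radius is a design choice of this sketch rather than something stated in the post.

```python
import math
from collections import Counter


def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def classify_by_radius(sample, support_set, radius):
    """Majority label among support embeddings y with 1 - similarity(x, y) <= radius.

    support_set is a list of (embedding, label) pairs. Returns None when the
    neighborhood is empty (hypothetical behavior for this sketch).
    """
    neighbor_labels = [
        label
        for embedding, label in support_set
        if 1.0 - cosine_similarity(sample, embedding) <= radius
    ]
    if not neighbor_labels:
        return None
    return Counter(neighbor_labels).most_common(1)[0][0]


# Made-up 2-D support set; labels are illustrative category names.
support_set = [
    ([1.0, 0.1], "tax form"),
    ([0.9, 0.2], "tax form"),
    ([1.0, 0.3], "tax form"),
    ([0.8, 0.6], "medical record"),
    ([0.0, 1.0], "resume"),
]

label = classify_by_radius([1.0, 0.2], support_set, radius=0.1)
no_match = classify_by_radius([-1.0, 0.0], support_set, radius=0.1)
print(label, no_match)
```

Unlike plain k-nearest neighbors, a fixed radius lets the classifier abstain when a document is not close to anything in the support set, which is useful when most traffic does not belong to any sensitive category.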

Creating Advanced DLP Policies

Sensitive data is more than just personal information. ML solutions, specifically NLP and LLMs, can go beyond pattern-based matching, by analyzing large amounts of text to extract context and meaning. To create advanced data protection systems that are adaptable to the challenges of keeping all kinds of information safe, it’s crucial to incorporate this technology as well.

Cato’s newly released DLP enhancements, which leverage our ML model, include detection capabilities for a dozen different sensitive file categories, including financial, legal, HR, immigration, and medical documents. The new datatypes can be used alongside the existing custom regex and keyword-based datatypes to create advanced and powerful DLP policies, as in the example below.

A DLP rule to prevent internal job applicant resumes with contact details from being uploaded to 3rd party AI assistants.

While we've explored LLMs for text analysis, the realm of document understanding remains a dynamic area of ongoing research. Recent advancements have seen the integration of large vision models (LVM), which not only aid in analyzing text but also help understand the spatial layout of documents, offering promising avenues for enhancing DLP engines even further.

For further reading on DLP and how Cato customers can use the new features:

https://www.catonetworks.com/platform/data-loss-prevention-dlp/

https://support.catonetworks.com/hc/en-us/articles/5352915107869-Creating-DLP-Content-Profiles

Machine Learning in Action – An In-Depth Look at Identifying Operating Systems Through a TCP/IP Based Model

In the previous post, we’ve discussed how passive OS identification can be done based on different network protocols. We’ve also used the OSI model to categorize the different indicators and prioritize them based on reliability and granularity. In this post, we will focus on the network and transport layers and introduce a machine learning OS identification model based on TCP/IP header values.

So, what are machine learning (ML) algorithms and how can they replace traditional network and security analysis paradigms? If you aren’t familiar yet, ML is a field devoted to performing certain tasks by learning from data samples. The process of learning is done by a suitable algorithm for the given task and is called the “training” phase, which results in a fitted model. The resulting model can then be used for inference on new and unseen data.

ML models have been used in the security and network industry for over two decades. Their main contribution to network and security analysis is that they make decisions based on data, as opposed to domain expertise (i.e., they are data-driven). At Cato we use ML models extensively across our service, and in this post specifically we’ll delve into the details of how we enhanced our OS identification engine using a TCP/IP based model.

For OS identification, a network analyst might create a passive network signature for detecting a Windows OS based on their knowledge of the characteristics of the Windows TCP/IP stack implementation. In this case, they will also need to be familiar with other OS implementations to avoid false positives. However, with ML, an accurate network signature can be produced by the algorithm after training on several labeled network flows from different devices and OSs. The differences between the two approaches are illustrated in Figure 1.

Figure 1: A traditional paradigm for writing identification rules vs. a machine learning approach.

In the following sections, we will demonstrate how an ML model that generates OS identification rules can be created using a Decision Tree. A decision tree is a good choice for our task for a couple of reasons. Firstly, it is suitable for multiclass classification problems, such as OS identification, where a flow can be produced from various OS types (Windows, Linux, iOS, Android, and more). But perhaps even more importantly, after being trained, the resulting model can be easily converted to a set of decision rules, with the following form:

if condition 1 and condition 2 … and condition n then label

This means that your model can be deployed on environments with minimal dependencies and strict performance limits, which are common requirements for network appliances such as packet filtering firewalls and deep packet inspection (DPI) intrusion prevention systems (IPS).

How do decision trees work for classification tasks?

In this section we will use the following example dataset to explain the theory behind decision trees. The dataset represents the task of classifying OSs based on TCP/IP features. It contains 8 samples in total, captured from 3 different OSs: Linux, Mac, and Windows. From each capture, 3 elements were extracted: IP initial time-to-live (ITTL), TCP maximum segment size (MSS), and TCP window size.

Figure 2: The training dataset with 8 samples, 3 features, and 3 classes.

Decision trees, as their name implies, use a tree-based structure to perform classification. Each node in the root and internal tree levels represents a condition used to split the data samples and move them down the tree. The nodes at the bottom level, also called leaves, represent the classification type. This way, the data samples are classified by traversing the tree paths until they reach a leaf node. In Figure 3, we can observe a decision tree created from our dataset. The first level of the tree splits our data samples based on the “IP ITTL” feature. Samples with a value higher than 96 are classified as a Windows OS, while the rest traverse down the tree to the second level decision split.

Figure 3: A simple decision tree for classifying an OS.

So, how did we create this tree from our data? Well, this is the process of learning that was mentioned earlier. Several variations exist for training a decision tree; in our example, we will apply the well-known Classification and Regression Tree (CART) algorithm.

The process of building the tree is done from top to bottom, starting from the root node. In each step, a split criterion is selected with the feature and threshold that provide the best “split quality” for the data in the current node. In general, split criteria that divide the data into groups with more homogeneous class representation (i.e., higher purity) are considered to have a better split quality. The CART algorithm measures the split quality using a metric called Gini Impurity. Formally, the metric is defined as:

$$G = 1 - \sum_{i=1}^{C} p_i^2$$

Where 𝐶 denotes the number of classes in the data (in our case, 3), and 𝑝ᵢ denotes the probability of the i-th class, given the data in the current node. The metric is bounded between 0 and 1 and represents the degree of node impurity, with 0 meaning that all samples in the node belong to a single class. The quality of the split criterion is then defined by the weighted sum of the Gini Impurity values of the nodes below, with each node weighted by its share of the samples. Finally, the split criterion that gives the lowest weighted sum of the Gini Impurities for the bottom nodes is selected.

In Figure 4, we can see an example for selecting the first split criterion of the tree. The root node of the tree, containing all data samples, has the Gini Impurity value of:

Then, given the split criterion of “IP ITTL <= 96”, the data is split into two nodes. The node that satisfies the condition (left side) has the Gini Impurity value of:

While the node that doesn’t has the Gini Impurity value of:

Overall, the weighted sum for this split is:

This value is the minimal Gini Impurity of all the candidates and is therefore selected for the first split of the tree. For numeric features, the CART algorithm selects the candidates as all the midpoints between sequential values from different classes, when sorted by value. For example, when looking at the sorted “IP ITTL” feature in the dataset, the split criterion is the midpoint between IP ITTL = 64, which belongs to a Mac sample, and IP ITTL = 128, which belongs to a Windows sample. For the second split, the best split quality is given by the “TCP MSS” feature, from the midpoint between TCP MSS = 1386, which belongs to a Mac sample, and TCP MSS = 1460, which belongs to a Linux sample.

Figure 4: Building a tree from the data – level 1 and level 2. The tree nodes display: 1. Split criterion, 2. Gini Impurity value, 3. Number of data samples from each class.
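To make the split-quality calculation concrete, here is a short sketch that computes the Gini Impurity of a node and the weighted sum for a candidate split. The eight samples below are hypothetical: they reuse the ITTL values and class names mentioned in the text, but the per-class counts are an assumption and are not taken from the dataset in Figure 2.

```python
from collections import Counter


def gini_impurity(labels):
    """1 minus the sum of squared class proportions in a node."""
    total = len(labels)
    return 1.0 - sum((count / total) ** 2 for count in Counter(labels).values())


def weighted_gini(left_labels, right_labels):
    """Gini Impurity of a split, weighting each child by its share of samples."""
    total = len(left_labels) + len(right_labels)
    return (
        len(left_labels) / total * gini_impurity(left_labels)
        + len(right_labels) / total * gini_impurity(right_labels)
    )


# Hypothetical (IP ITTL, OS label) samples; the class counts are illustrative.
samples = [
    (64, "Linux"), (64, "Linux"), (64, "Linux"),
    (64, "Mac"), (64, "Mac"),
    (128, "Windows"), (128, "Windows"), (128, "Windows"),
]

threshold = 96  # midpoint between 64 and 128
left = [label for ittl, label in samples if ittl <= threshold]
right = [label for ittl, label in samples if ittl > threshold]

root_gini = gini_impurity([label for _, label in samples])
split_gini = weighted_gini(left, right)
print(root_gini, gini_impurity(left), gini_impurity(right), split_gini)
```

With these assumed counts, the right-hand node contains only Windows samples and is therefore pure, which is why the split lowers the weighted impurity well below that of the root.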

In our example, we fully grow our tree until all the leaves have a homogeneous class representation, i.e., each leaf has data samples from a single class only. In practice, when fitting a decision tree to data, a stopping criterion is selected to make sure the model doesn’t overfit the data. These criteria include maximum tree height, minimum data samples for a node to be considered a leaf, maximum number of leaves, and more. If a stopping criterion is reached and the leaf doesn’t have a homogeneous class representation, the majority class can be used for classification.

From tree to decision rules

The process of converting a tree to rules is straightforward. Each route in the tree from the root to a leaf node is a decision rule composed of a conjunction of statements, i.e., if a new data sample satisfies all of the statements in the path, it is classified with the corresponding label.

Based on the full binary tree theorem, for a binary tree with 𝑛 nodes, the number of extracted decision rules is (𝑛+1)/2. In Figure 5, we can see how the trained decision tree with 5 nodes is converted to 3 rules.

Figure 5: Converting the tree to a set of decision rules.
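The conversion can be sketched as a recursive walk that collects the conditions along each root-to-leaf path. The nested-dictionary encoding of the tree is a hypothetical representation chosen for this example; the thresholds follow the text (96 for IP ITTL, and 1423 as the midpoint between TCP MSS values 1386 and 1460).

```python
def extract_rules(node, conditions=()):
    """Return a list of (conditions, label) pairs, one per leaf."""
    if "label" in node:  # leaf node: the path so far is a complete rule
        return [(list(conditions), node["label"])]
    feature, threshold = node["feature"], node["threshold"]
    rules = extract_rules(node["left"], conditions + (f"{feature} <= {threshold}",))
    rules += extract_rules(node["right"], conditions + (f"{feature} > {threshold}",))
    return rules


# Hypothetical encoding of the 5-node example tree described in the text.
tree = {
    "feature": "IP ITTL",
    "threshold": 96,
    "left": {
        "feature": "TCP MSS",
        "threshold": 1423,
        "left": {"label": "Mac"},
        "right": {"label": "Linux"},
    },
    "right": {"label": "Windows"},
}

rules = extract_rules(tree)
for conditions, label in rules:
    print("if " + " and ".join(conditions) + " then " + label)
```

The tree has 5 nodes, so the walk yields (5+1)/2 = 3 rules, each of which is a plain conjunction of comparisons that can be evaluated without any ML runtime.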

Cato’s OS detection model

Cato’s OS detection engine, running in real-time on our cloud, is enhanced by rules generated by a decision tree ML model, based on the concepts we described in this post. In practice, to gain a robust and accurate model we trained our model on over 10k unique labeled TCP SYN packets from various types of devices. Once the initial model is trained it also becomes straightforward to re-train it on samples from new operating systems or when an existing networking implementation changes.

We also added additional network features and extended our target classes to include embedded and mobile OSs such as iOS and Android. This resulted in a much more complex tree that generated 125 different OS detection rules. A rule set of this size would simply not have been feasible to produce manually. This underscores the strength of the ML approach: both the large scope of rules we were able to generate and the great deal of engineering time saved.

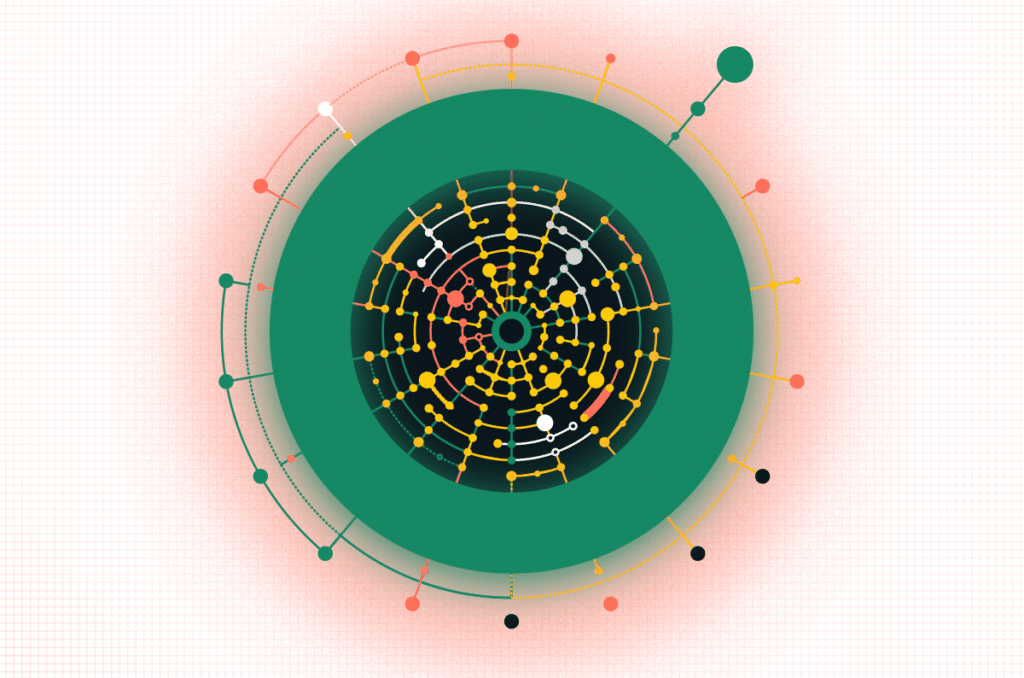

Figure 6: Cato’s OS detection tree model. 15 levels, 249 nodes, and 125 OS detection rules.

Having a data-driven OS detection engine enables us to keep up with the continuously evolving landscape of network-connected enterprise devices, including IoT and BYOD (bring your own device). This capability is leveraged across many of our security and networking capabilities, such as identifying and analyzing security incidents using OS information, enforcing OS-based connection policies, and improving visibility into the array of devices visible in the network.

An example of the latter use of our OS model is shown in Figure 7: the Device Inventory view, a new feature giving administrators a full view of all network-connected devices, from workstations to printers and smartwatches, with the ability to filter and search through the entire inventory. Devices can be aggregated by different categories, such as the OS, as shown below, or by device type, manufacturer, etc.

Figure 7: Device Inventory, filtering by OS using data classified using our models

However, when inspecting device traffic, there is other significant information besides the OS that we can extract using data-driven methods. When enforcing security policies, it is also critical to learn the device hardware, model, installed applications, and running services. But we'll leave that for another post.

Wrapping up

In this post, we’ve discussed how to generate OS identification rules using a data-driven ML approach. We’ve also introduced the Decision Tree algorithm, whose rule-based output suits environments with minimal dependencies and strict performance limits, which are common requirements for network appliances. Combined with the manual fingerprinting we’ve seen in the previous post, this series provides an overview of the current best practices for OS identification based on network protocols.

Enhancing Security and Asset Management with AI/ML in Cato Networks’ SASE Product

We just introduced what we believe is a unique application of real-time, deep learning (DL) algorithms to network prevention. The announcement is hardly our first foray into artificial intelligence (AI) and machine learning (ML). The technologies have long played a pivotal role in augmenting Cato's SASE security and networking capabilities, enabling advanced threat prevention and efficient asset management. Let's take a closer look.

What are Artificial Intelligence (AI), Machine Learning (ML), and Deep Learning (DL)?

Before diving into the details of Cato's approach to AI, ML, and DL, let's provide some context around the technologies. AI is the overarching concept of creating machines that can perform tasks typically requiring human intelligence, such as learning, reasoning, problem-solving, understanding natural language, and perception. One example of AI applications is in healthcare, where AI-powered systems can assist doctors in diagnosing diseases or recommending personalized treatment plans.

ML is a subset of AI that focuses on developing algorithms to learn from and make predictions based on data. These algorithms identify patterns and relationships within datasets, allowing a system to make data-driven decisions without explicit programming. An example of an ML application is in finance, where algorithms are used for credit scoring, fraud detection, and algorithmic trading to optimize investment strategy and risk management.

Deep Learning (DL) is a subset of ML, employing artificial neural networks to process data and mimic the human brain's decision-making capabilities. These networks consist of multiple interconnected layers capable of extracting higher-level features and patterns from vast amounts of data. A popular use of DL is seen in self-driving vehicles, where complex image recognition algorithms allow the vehicle to detect and respond appropriately to traffic signs, pedestrians, and other obstacles to ensure safe driving.

Overcoming Challenges in Implementing AI/ML for Real-time Network Security Monitoring

Implementing DL and ML for Cato customers presents several challenges. Cato handles and monitors terabytes of customer network traffic daily. Processing that much data requires a tremendous amount of compute capacity. Falsely flagging network activity as an attack could materially impact our customers' operations, so our algorithms must be incredibly accurate. Additionally, we can't interfere with our users' experience, leaving just milliseconds to perform real-time inference.

Cato tackles these challenges by running our DL and ML algorithms on Cato's cloud infrastructure. Being able to run in the cloud enables us to use the cloud's ubiquitous compute and storage capacity. In addition, we've taken advantage of cloud infrastructure advancements, such as AWS SageMaker. SageMaker is a cloud-based platform that provides a comprehensive set of tools and services for building, training, and deploying machine learning models at scale. Finally, Cato's data lake provides a rich data set, converging networking metadata with security information, to better train our algorithms.

With these technologies, we have successfully deployed and optimized our ML algorithms, meticulously reducing the risks associated with falsely flagging network activity and ensuring real-time inference. The Cato algorithms monitor network traffic in real time while maintaining low false positive rates and high detection rates.

How Cato Uses Deep Learning to Enhance Threat Detection and Prevention

Using DL techniques, Cato harnesses the power of artificial intelligence to amplify the effectiveness of threat detection and prevention, thereby fortifying network security and safeguarding users against diverse and evolving cyber risks. DL is used in many different ways in Cato SASE Cloud.

For example, we use DL for DNS protection by integrating deep learning models within Cato IPS to detect Command and Control (C2) communication originating from Domain Generation Algorithm (DGA) domains, the essence of our launch today, and DNS tunneling. By running these models inline on enormous amounts of network traffic, Cato Networks can effectively identify and mitigate threats associated with malicious communication channels, preventing unauthorized access and data breaches in real time, within milliseconds.

We stop phishing attempts through text and image analysis by detecting flows to known brands with low reputations and newly registered websites associated with phishing attempts. By training models on vast datasets of brand information and visual content, Cato Networks can swiftly identify potential phishing sites, protecting users from falling victim to fraudulent schemes that exploit their trust in reputable brands.

We also prioritize incidents for enhanced security with machine learning. Cato identifies attack patterns using aggregations on customer network activity and the classical ML Random Forest algorithm, enabling security analysts to focus on high-priority incidents based on the model score.

The prioritization model considers client group characteristics, time-related metrics, MITRE ATT&CK framework flags, server IP geolocation, and network features. By evaluating these varied factors, the model boosts incident response efficiency, streamlines the process, and helps ensure the security and resilience of clients' networks against emerging threats.

Finally, we leverage ML and clustering for enhanced threat prediction. Cato harnesses the power of collective intelligence to predict the risk and type of threat of new incidents. We employ advanced ML techniques, such as clustering and Naive Bayes-like algorithms, on previously handled security incidents. This data-driven approach using forensics-based distance metrics between events enables us to identify similarities among incidents. We can then identify new incidents with similar networking attributes to predict risk and threat accurately.

How Cato Uses AI and ML in Asset Visibility and Risk Assessment

In addition to using ML for threat detection and prevention, we also tap AI and ML for identifying and assessing the risk of assets connecting to Cato. Understanding the operating system and device types is critical to that risk assessment, as it allows organizations to gain insights into the asset landscape and enforce tailored security policies based on each asset's unique characteristics and vulnerabilities.

Cato assesses the risk of a device by inspecting traffic coming from client device applications and software. This approach operates on all devices connected to the network. By contrast, relying on client-side applications is only effective for known supported devices. By leveraging powerful AI/ML algorithms, Cato continuously monitors device behavior and identifies potential vulnerabilities associated with outdated software versions and risky applications.

For OS Type Detection, Cato's AI/ML capabilities accurately identify the operating system type of agentless devices connected to the network. This information provides valuable insights into the security posture of individual devices and enables organizations to enforce appropriate security policies tailored to different operating systems, strengthening overall network security.

Cato Will Continue to Expand its ML/AI Usage

Cato will continue looking at ways of tapping ML and AI to simplify security and improve its effectiveness. Keep an eye on this blog as we publish new findings.

IoT has an identity problem. Here’s how to solve it

Successfully identifying operating systems in organizations has become a crucial part of network security and asset management products. With this information, IT and security departments can gain greater visibility and control over their network. When a software agent is installed on a host, this task becomes trivial. However, several OS types, mainly for embedded and IoT devices, are unmanaged or aren’t suitable to run an agent.

Fortunately, identification can also be done with a passive method that doesn’t require installing software on endpoint devices and works for most OS types. This method, called passive OS fingerprinting, involves matching uniquely identifying patterns in the network traffic a host produces, and classifying it accordingly. In most cases, these patterns are evaluated on a single network packet, rather than a sequence of flows between a client host and a server.

Several protocols from different network layers can be used for OS fingerprinting. In this post we will cover those that are most commonly used today. Figure 1 displays these protocols, based on the Open Systems Interconnection (OSI) model. As a rule of thumb, protocols at the lower levels of the OSI stack provide better reliability with lower granularity compared to those on the upper levels of the stack, and vice versa.

Figure 1: Different network protocols for OS identification based on the OSI model

Starting from the bottom of the stack, at the data link layer, is the medium access control (MAC) protocol. Over this protocol, a unique physical identifier, called the MAC address, is allocated to the network interface card (NIC) of each network device. The address, which is hardcoded into the device at manufacturing, is composed of 12 hexadecimal digits, which are commonly represented as 6 pairs divided by hyphens or colons. From these hexadecimal digits, the leftmost six represent the manufacturer's unique identifier, while the rightmost six represent the serial number of the NIC. In the context of OS identification, using the manufacturer's unique identifier, we can infer the type of device running in the network, and in some cases, even the OS.

In Figure 2, we see a packet capture from a MacBook laptop communicating over Ethernet. The 6 leftmost digits of the source MAC address are 88:66:5a, which are affiliated with the manufacturer “Apple, Inc.”

Figure 2: an “Apple, Inc.” MAC address in the data link layer of a packet capture
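As a rough illustration of the manufacturer lookup, the sketch below extracts the leftmost six hexadecimal digits of a MAC address and looks them up in a table. The one-entry table is an assumption for demonstration only (its single entry comes from the capture described above); a real deployment would load the full registry of manufacturer identifiers. The trailing digits of the example addresses are made up.

```python
HEX_DIGITS = set("0123456789abcdef")

# Tiny illustrative table; the single entry is the prefix shown in the text.
OUI_VENDORS = {"88665a": "Apple, Inc."}


def extract_oui(mac_address):
    """Return the leftmost six hex digits of a MAC address, lowercased."""
    digits = mac_address.replace(":", "").replace("-", "").lower()
    if len(digits) != 12 or not set(digits) <= HEX_DIGITS:
        raise ValueError(f"not a valid MAC address: {mac_address!r}")
    return digits[:6]


def lookup_vendor(mac_address):
    """Map a MAC address to a manufacturer name, or 'unknown'."""
    return OUI_VENDORS.get(extract_oui(mac_address), "unknown")


print(lookup_vendor("88:66:5A:00:11:22"))
print(lookup_vendor("02-00-00-00-00-01"))
```

The manufacturer alone rarely pins down the OS, so in practice this result would be one signal combined with the higher-layer indicators covered later in the post.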

Moving up the stack, at the network and transport layers, is a much more granular source of information, the TCP/IP stack. Fingerprinting based on TCP/IP information stems from the fact that the TCP and IP protocols have certain parameters, in the header segment of the packet, that are left up to the implementation, and most OSes select unique values for these parameters.

Some of the parameters most commonly used today for identification are the initial time to live (TTL), Window Size, “Don't Fragment” flag, and TCP options (values and order). In Figure 3 and Figure 4, we can see a packet capture of a MacBook laptop initiating a TCP connection to a remote server. For each outgoing packet, the IP header includes a combination of flags and an initial TTL value that is common for macOS hosts. In addition, the first “SYN” packet of the TCP handshake carries the Window Size value and the set of TCP options. The combination of these values is sufficient to identify this host as a macOS host.

Figure 3: Different header values from the IP protocol in the network layer

Figure 4: Different header values from the TCP protocol in the transport layer
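One practical detail when using the initial TTL as a feature: a sensor that sits several hops away from the host sees a TTL that has already been decremented by each router on the path. A common workaround, sketched below as an assumption rather than something described in this post, is to round the observed value up to the nearest commonly used initial TTL. The list of candidate values is a widely used convention, not an exhaustive one.

```python
# Initial TTL values commonly chosen by TCP/IP stack implementations.
COMMON_INITIAL_TTLS = (32, 64, 128, 255)


def estimate_initial_ttl(observed_ttl):
    """Round an observed TTL up to the nearest common initial value."""
    if not 1 <= observed_ttl <= 255:
        raise ValueError(f"TTL out of range: {observed_ttl}")
    for initial in COMMON_INITIAL_TTLS:
        if observed_ttl <= initial:
            return initial


print(estimate_initial_ttl(57))   # a packet that crossed 7 hops from a TTL-64 host
print(estimate_initial_ttl(128))  # a packet captured next to the host
```

The estimate breaks down if a host is more hops away than the gap between two candidate values, which is one reason the TTL is combined with other header fields rather than used alone.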

At the uppermost level of the stack, in the application layer, several different protocols can be used for identification. While providing a high level of granularity that often indicates not only the OS type but also the exact version or distribution, some of the indicators in these protocols are open to user configuration and therefore provide lower reliability.

Perhaps the most common protocol in the application level used for OS identification is HTTP. Applications communicating over the web often add a User-Agent field in the HTTP headers, which allows network peers to identify the application, OS, and underlying device of the client.

In Figure 5, we can see a packet capture of an HTTP connection from a browser. After the TCP handshake, the first HTTP request to the server contains a User-Agent field which identifies the client as a Firefox browser, running on a Windows 7 OS.

Figure 5: Detecting a Windows 7 OS from the User-Agent field in the HTTP headers
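A simplified version of this check can be written with a regular expression. The sketch below only handles the `Windows NT` token and a small, partial version table; the example User-Agent string is a plausible illustration, not the one from the capture in Figure 5.

```python
import re

# Partial mapping of Windows NT kernel versions to product names.
WINDOWS_NT_VERSIONS = {
    "6.1": "Windows 7",
    "10.0": "Windows 10/11",
}


def os_from_user_agent(user_agent):
    """Return a Windows product name from a User-Agent string, or None."""
    match = re.search(r"Windows NT (\d+\.\d+)", user_agent)
    if match is None:
        return None
    return WINDOWS_NT_VERSIONS.get(match.group(1), "Windows (unknown version)")


# Illustrative Firefox-on-Windows-7 style header value.
example = "Mozilla/5.0 (Windows NT 6.1; rv:109.0) Gecko/20100101 Firefox/115.0"
print(os_from_user_agent(example))
print(os_from_user_agent("curl/8.0.1"))
```

Since the header is set by the client application and can be changed freely, a match here is best treated as a hint to be cross-checked against lower-layer indicators.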

However, the User-Agent field is not the only OS indicator that can be found over the HTTP protocol. While being completely invisible to the end user, most OSes have a unique implementation of connectivity tests that automatically run when the host connects to a public network. A good example of this scenario is Microsoft’s Network Connectivity Status Indicator (NCSI). The NCSI is an internet connection awareness protocol used in Microsoft's Windows OSes. It is composed of a sequence of specifically crafted DNS and HTTP requests and responses that help indicate if the host is located behind a captive portal or a proxy server. In Figure 6, we can see a packet capture of a Windows host performing a connectivity test based on the NCSI protocol. After a TCP handshake is conducted, an HTTP GET request is sent to http://www.msftncsi.com/ncsi.txt.

Figure 6: Windows host running a connectivity test based on the NCSI protocol

The last protocol we will cover in the application layer is DHCP, which is used for IP address assignment over the network. Overall, this process is composed of 4 steps: Discover, Offer, Request, and Acknowledge (DORA). In these exchanges, several granular OS indicators are provided in the DHCP options of the message. In Figure 7, we can see a packet capture of a client host (192.168.1.111) that is broadcasting DHCP messages over the LAN and receiving replies from a DHCP server (192.168.1.1). The first DHCP Inform message contains the DHCP option number 60 (vendor class identifier) with the value of “MSFT 5.0”, associated with a Microsoft Windows client. In addition, the DHCP option number 55 (parameter request list) contains a sequence of values that is common for Windows OSes. Combined with the order of the DHCP options themselves, these indicators are sufficient to identify this host as a Windows OS.

Figure 7: Using DHCP options for OS identification
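The DHCP indicators described above can be sketched as a small lookup. The example assumes the options have already been parsed into a dict of option number to raw bytes. The function name is hypothetical, and the parameter request lists are passed in by the caller because this sketch does not ship verified per-OS signatures; the values used in the usage lines are placeholders, not real fingerprints.

```python
def os_from_dhcp_options(options, known_request_lists=None):
    """Guess an OS from parsed DHCP options (option number -> raw bytes).

    known_request_lists maps a tuple of option 55 values to an OS name.
    It is supplied by the caller; no verified signatures are built in.
    """
    known_request_lists = known_request_lists or {}

    # Option 60: vendor class identifier, e.g. b"MSFT 5.0" for Windows clients.
    vendor_class = options.get(60, b"").decode("ascii", errors="replace")
    if vendor_class.startswith("MSFT 5.0"):
        return "Windows"

    # Option 55: parameter request list. The order of the requested
    # option numbers is part of the signature, so compare it as a tuple.
    request_list = tuple(options.get(55, b""))
    return known_request_lists.get(request_list)


# Placeholder option values for illustration only.
inform = {60: b"MSFT 5.0", 55: bytes([1, 3, 6, 15])}
print(os_from_dhcp_options(inform))  # Windows
```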

Wrapping up

In this post, we’ve introduced the task of OS identification from network traffic and covered some of the most commonly used protocols these days. While some protocols provide better accuracy than others, there is no 'silver bullet' for this task, and we’ve seen the tradeoff between granularity and reliability with the different options. Rather than fingerprinting based on a single protocol, you might consider a multi-protocol approach. For example, an HTTP User-Agent can be combined with a lower-level TCP options fingerprint.
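One simple way to combine several protocol-level guesses is a weighted vote, sketched below. The source names and weights are hypothetical values chosen for this example (giving harder-to-spoof signals more weight); they are not measured accuracies.

```python
from collections import defaultdict

# Hypothetical trust weights per evidence source (higher = harder to spoof).
SOURCE_WEIGHTS = {"tcp_options": 3.0, "dhcp": 2.0, "http_user_agent": 1.0}


def combine_os_guesses(guesses):
    """Combine per-protocol guesses (source name -> OS or None) by weighted vote."""
    scores = defaultdict(float)
    for source, os_name in guesses.items():
        if os_name is not None:
            scores[os_name] += SOURCE_WEIGHTS.get(source, 1.0)
    if not scores:
        return None
    return max(scores, key=scores.get)


# A spoofed User-Agent is outvoted by the lower-level indicators.
observed = {"http_user_agent": "Linux", "tcp_options": "Windows", "dhcp": "Windows"}
print(combine_os_guesses(observed))  # Windows
```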


Inside Cato: How a Data Driven Approach Improves Client Identification in Enterprise Networks

Identification of OS-level client types over IP networks has become crucial for network security vendors. With this information, security administrators can gain greater visibility into their networks, differentiate between legitimate human activity and suspicious bot activity, and identify potentially unwanted software. The process of identifying clients by their network traces is, however, very challenging. Most of the common methods being applied today require a great deal of manual work and advanced domain expertise, and are prone to misclassification. Using a data-driven approach based on machine learning, Cato recently developed a new technology that addresses these problems, enabling accurate and scalable identification of network clients.

Going “old school” with manual fingerprinting

One of the most common methods to passively identify network clients, without requiring access to either endpoint, is fingerprinting. Imagine you are a police investigator arriving at a crime scene for forensics. Lucky for you, the suspect left an intact fingerprint. Since he is a well-known criminal with previous offenses, his fingerprints are already in the police database, and you can use the one you found to trace back to him. Like humans, network clients also leave unique traces that can be used to identify them. In your network, combinations of attributes such as HTTP header order, user-agents, and TLS cipher suites are unique to certain clients.


In recent years, fingerprints relying solely on unsecured network traffic attributes (e.g., the HTTP user-agent value) have become obsolete, since they are easy to spoof and are not applicable when using secured connections. TLS fingerprints, which rely on attributes from the Client Hello message of the TLS handshake, do not suffer from these drawbacks and are slowly gaining wider adoption among security vendors.

Below is an example of a TLS fingerprint that identifies a OneDrive client.

Caption: TLS header fingerprint of a OneDrive client

However, manually mapping network clients to unique identifiers is not a simple task. It requires in-depth domain knowledge and expertise, and without them the method is prone to misclassification. In a shared community effort to address this issue, some open-source projects (e.g., sslhaf and JA3) were created, but they provide low coverage and are not updated frequently.

An even greater issue with manual fingerprinting is scalability. Accurately classifying client types requires manually analyzing traffic captures, a labor-intensive process that does not scale for enterprise networks.

Taking such an approach at Cato wasn’t feasible. Each day the Cato SASE Cloud must inspect millions of unique TLS handshake values. The large number of values is due not only to the number of network clients connected to Cato SASE Cloud but also to the number of versions and updates that alter the TLS behavior of each client. Clearly, we needed a better solution.

Clustering – An automated and robust approach

With great amounts of data, comes great amounts of capabilities. With the use of machine learning clustering algorithms, we’ve managed to reduce millions of unique TLS handshake values to a subset of a few hundred clusters, representing unique network clients that share similar values. After creating the clusters, a single fingerprint can be generated for each one, using the longest common substring (LCS) from the Client Hello message of all the samples in the cluster. Finding the common denominator of several samples makes the approach more robust to small variations in the message values.

Caption: Similar values from the TLS Client Hello message are clustered together and the LCS is used to generate a fingerprint. Each colored cluster represents a different client.
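The fingerprint-generation step can be sketched as a longest-common-substring search over the samples of one cluster. The sketch assumes the cluster has already been produced by some clustering algorithm (the post does not name one) and that each Client Hello is serialized as a string. The sample strings below are made up for illustration and are not real client signatures, and the brute-force search is meant for readability rather than speed.

```python
def longest_common_substring(samples):
    """Return the longest substring shared by every string in samples."""
    if not samples:
        return ""
    shortest = min(samples, key=len)
    # Try candidate substrings of the shortest sample, longest first.
    for length in range(len(shortest), 0, -1):
        for start in range(len(shortest) - length + 1):
            candidate = shortest[start:start + length]
            if all(candidate in sample for sample in samples):
                return candidate
    return ""


# Hypothetical serialized Client Hello values belonging to one cluster.
cluster = [
    "771,49195-49199-52393-52392-49196,0-23-65281-10-11",
    "771,49195-49199-52393-52392-49200,0-23-65281-10-11-35",
    "771,49195-49199-52393-52392-49171,0-23-65281-10-11",
]
fingerprint = longest_common_substring(cluster)
print(fingerprint)  # 771,49195-49199-52393-52392-49
```

Because the match is character-based, the fingerprint can end in the middle of a field, as it does here. A production pipeline would more likely compare whole fields or tokens.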

The next step of the process is to identify and label the client of the fingerprint. To do so, we search for the fingerprint in our data lake, containing terabytes of daily network flows generated from different clients, and look for common attributes such as domains or applications contacted, and HTTP user-agent (visible from TLS traffic interception and inspection).

For example, in the plot above, a group of TLS network flows containing similar Client Hello messages was clustered together by the algorithm (see the 3-point “Java/1.8.0_211” cluster colored in light blue). The resulting TLS fingerprint matched a group of inspected TLS flows in our data lake with visible HTTP headers, all of which had a common user-agent that belongs to the Java Standard Library, a library used to perform HTTP requests.

Wrapping up

Accurately identifying client types has become crucial for network security vendors. With this new data-driven approach, we’ve managed to develop a fully automated and continuous pipeline that generates TLS fingerprints. The new method scales to large enterprise networks and is more robust to variation in the client's network activity.


Cato Networks Adds Protection from the Perils of Cybersquatting

A technique long used for profiting from the brand strength of popular domain names is finding increased use in phishing attacks. Cybersquatting (also called domain squatting) is the use of a domain name with the intent to profit from the goodwill of a trademark belonging to someone else. Increasingly, attackers are tapping cybersquatting to harvest user credentials.

Last month, one such campaign targeted 1,000 users at a high-profile communications company with an email containing a supposed secure file link from an email security vendor. Once clicked, the link led to a spoofed Proofpoint page with login links for different email providers.

So prevalent are these threats that Cato Networks has added cybersquatting protection to our service. Over the past month, we’ve detected 5,000 unique squatted domains for more than 50 well-known trademarks. These domains follow certain patterns. By understanding these patterns, you’ll be more likely to protect your organization from this new threat.


Types of Cybersquatting

There are several techniques for creating domains that may trick unsuspecting users. Here are four of the most common:

Typosquatting

Typosquatting creates domain names that incorporate typical typos users input when attempting to access a legitimate site. A perfect example is catonetwrks.com, which leaves out the “o” in networks. The user mistypes Cato’s Web site and ends up interacting with another site used to spread misinformation, redirect the user, or download malware to the user’s system.

Combosquatting

Combosquatting creates a domain that combines the legitimate domain with additional words or letters. For example, cato-networks.com adds a hyphen to Cato’s URL catonetworks.com. Combosquatting is often used for links in phishing emails.

Here are two examples of counterfeit websites that use combosquatting to prompt the user to submit sensitive information. The domain names, amazon-verifications[.]com and amazonverification[.]tk, make the user think they are interacting with a legitimate website owned by Amazon.

Figure 1: Examples of combosquatting

Levelsquatting

Levelsquatting inserts the target domain into the subdomain of the cybersquatting URL. This attack usually targets mobile device users who may only see part of the URL displayed in the small mobile-device browser window. A perfect example of levelsquatting would be login.catonetworks.com.fake.com. The user may only see the prefix login.catonetworks.com on their iPhone or Android screen and think it’s a legitimate Cato Networks login site.
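A levelsquatting check can be sketched by comparing DNS labels: the trademark's domain appears as a run of labels inside the hostname, but the hostname does not actually sit under that domain. The function name is hypothetical and the sketch ignores public-suffix handling, which a real detector would need.

```python
def is_levelsquatting(hostname, trademark_domain):
    """True if trademark_domain appears inside hostname's subdomain labels
    while the hostname itself is registered under a different domain."""
    host = hostname.lower().rstrip(".")
    target = trademark_domain.lower().rstrip(".")
    if host == target or host.endswith("." + target):
        return False  # genuinely under the trademark's own domain

    host_labels = host.split(".")
    target_labels = target.split(".")
    n = len(target_labels)
    # Look for the trademark's labels as a contiguous run anywhere in the host.
    return any(
        host_labels[i:i + n] == target_labels
        for i in range(len(host_labels) - n + 1)
    )


print(is_levelsquatting("login.catonetworks.com.fake.com", "catonetworks.com"))  # True
print(is_levelsquatting("login.catonetworks.com", "catonetworks.com"))           # False
```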

Homographsquatting

Homographsquatting uses various character combinations that visually resemble the target domain. One example is catonetw0rks.com, which uses a zero digit that looks like the letter “o”. Another is ccitonetworks.com, where the combination of “c” and “i” after the initial “c” looks to users like the letter “a.”

Homographsquatting can also use Punycode to include non-ASCII characters in internationalized domain names (IDNs). An example would be cаtonetworks.com (xn--ctonetworks-yij.com in Punycode). In this case, the “а” is a non-ASCII character from the Cyrillic alphabet.
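The Punycode case can be explored with Python's built-in `idna` codec, which converts between the Unicode and ASCII ("xn--") forms of a hostname. The sketch below also flags labels that mix scripts, using the first word of each character's Unicode name as a rough script indicator. The helper names are hypothetical, and the mixed-script heuristic is an assumption of this example, not a description of Cato's detection.

```python
import unicodedata


def to_punycode(hostname):
    """Encode a Unicode hostname to its ASCII (xn--) form."""
    return hostname.encode("idna").decode("ascii")


def from_punycode(hostname):
    """Decode an ASCII (xn--) hostname back to Unicode."""
    return hostname.encode("ascii").decode("idna")


def scripts_in_label(label):
    """Rough script detection: first word of each letter's Unicode name."""
    return {unicodedata.name(ch).split()[0] for ch in label if ch.isalpha()}


# "c" + CYRILLIC SMALL LETTER A (U+0430) + "tonetworks.com"
spoofed = "c\u0430tonetworks.com"
encoded = to_punycode(spoofed)
scripts = scripts_in_label(spoofed.split(".")[0])

print(encoded.startswith("xn--"))         # True
print(from_punycode(encoded) == spoofed)  # True
print(sorted(scripts))                    # ['CYRILLIC', 'LATIN']
```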

Here is a non-malicious example of a Facebook homograph domain (xn--facebok-y0a[.]com in Punycode) offered for sale. The squatted domain is used for the owner’s personal profit.

Figure 2: Example of homographsquatting targeting Facebook users

And here is another use of homographsquatting, this time going after Microsoft users. The domain name, nnicrosoft[.]online, uses a double “n” to look like the “m” in “microsoft.”

Figure 3: Example of homographsquatting targeting Microsoft users

How to Detect Cybersquatting

To detect cybersquatted domains, Cato Networks uses a metric called the Damerau-Levenshtein distance. It counts the minimum number of operations (insertions, deletions, substitutions, or transpositions of two adjacent characters) needed to change one word into the other.

For example, netflex.com has an edit distance of 1 from the legitimate site, netflix.com, via the substitution of the “i” character with “e”.

Figure 4: Substitution of the “i” character with an “e” in the netflix domain.
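The distance calculation can be sketched with dynamic programming. The version below is the optimal string alignment variant (sometimes called restricted Damerau-Levenshtein), in which no substring is edited more than once. It is a common simplification and may differ from the exact variant used in Cato's service. The function name is an assumption of this example.

```python
def osa_distance(a, b):
    """Optimal string alignment distance: insertions, deletions,
    substitutions, and transpositions of two adjacent characters."""
    rows, cols = len(a) + 1, len(b) + 1
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i  # delete all of a[:i]
    for j in range(cols):
        d[0][j] = j  # insert all of b[:j]

    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # deletion
                d[i][j - 1] + 1,         # insertion
                d[i - 1][j - 1] + cost,  # substitution (or match)
            )
            # Transposition of two adjacent characters.
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[-1][-1]


print(osa_distance("netflex.com", "netflix.com"))           # 1 (substitution)
print(osa_distance("catonetwrks.com", "catonetworks.com"))  # 1 (insertion)
print(osa_distance("catonetowrks.com", "catonetworks.com")) # 1 (transposition)
```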

Cato Networks dynamically configures the edit distance used to classify squatted domains for each trademark, taking into consideration the trademark's length and its similarity to other words. Think of how many unrelated words lie within an edit distance of 2 from a short name such as DHL, compared with a longer name such as Instagram.
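To make the idea of a per-trademark threshold concrete, here is a purely hypothetical length-based rule. The cutoff values are invented for illustration and do not reflect the thresholds Cato uses, and the sketch ignores the word-similarity factor mentioned above.

```python
def max_edit_distance(trademark):
    """Hypothetical rule of thumb: short names tolerate fewer edits,
    because a larger share of their characters would change."""
    return 1 if len(trademark) <= 5 else 2


for name in ("dhl", "instagram"):
    limit = max_edit_distance(name)
    # Share of the name that may differ at the allowed distance.
    print(name, limit, round(limit / len(name), 2))
```

With these example cutoffs, two edits to "dhl" would change two thirds of the name, which is why the short name gets the tighter limit.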

We also look at who registered the domain. You might be surprised to learn that many domains of trademarks with common typos are registered by the trademark owner to redirect the user to the correct site. Detecting a domain registered by anyone other than the trademark owner arouses suspicion.

Checking the domain age and registrar also turns up clues. Newly registered domains and domains from low-reputation registrars are more likely to be associated with unwanted and malicious activity than others.

Separating Squatted from Non-squatted Domains

In October 2021 alone, Cato Networks used these methods to detect more than 5,000 unique squatted domains for more than 50 well-known trademarks. The graphic below shows that fewer than 20% were owned by the legitimate trademark owner.

Figure 5: Distribution of ownership in the detected domains.

Additionally, Cato’s data shows that legitimate companies tend to register domains that include their trademark with combinations of other characters and typical typos. Domains that are not registered by trademark owners tend to have a higher percentage of trademarks in the subdomain level, i.e. levelsquatting.

Figure 6: Distribution of squatting techniques in domains not registered by trademark owners.

Finally, this graphic of Cato Networks data shows that many of the squatted domains target search engines, social media, office suites, and e-commerce websites.

Figure 7: Top targeted trademarks.

Don’t Wait to Identify Cybersquatting

There is no doubt that cybersquatting can be used in a variety of ways to target unsuspecting users and companies for a data breach. Organizations need to educate themselves on the perils of cybersquatting and incorporate tools and techniques for detecting phishing and other attacks that use this method for nefarious purposes. The good news is that Cato customers can now take advantage of Cato’s cybersquatting detection to protect their users and precious data.