Cato SASE Cloud: Enjoy Simplified Configuration and Centralized, Global Policy Delivery

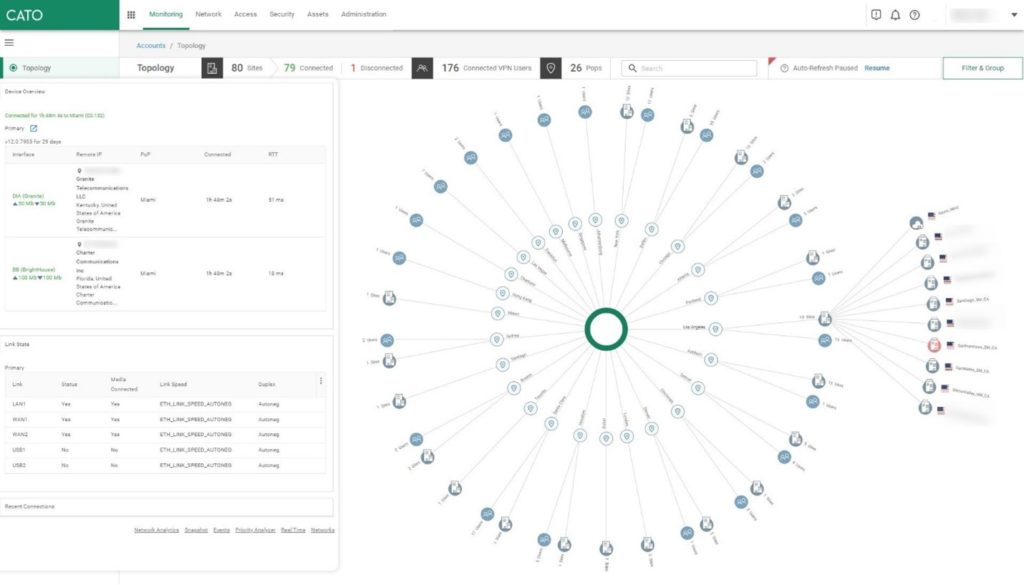

In this article, we discuss several of the policy objects in the Cato Management Application and how they are used. You may be familiar with the split between localized and centralized policies in legacy SD-WAN architectures. Cato’s cloud-native SASE architecture instead simplifies configuration and policy delivery across all capabilities from a true single management application.

Understanding Cato’s Management Application from Its Architecture

To understand policy design within the Cato Management Application, it’s useful to look at some of Cato’s architecture. Cato’s cloud was built from the ground up to provide converged networking and security globally. Because of this convergence, automated security engines and customized policies benefit from shared context and visibility, allowing true single-pass processing and more accurate security verdicts.

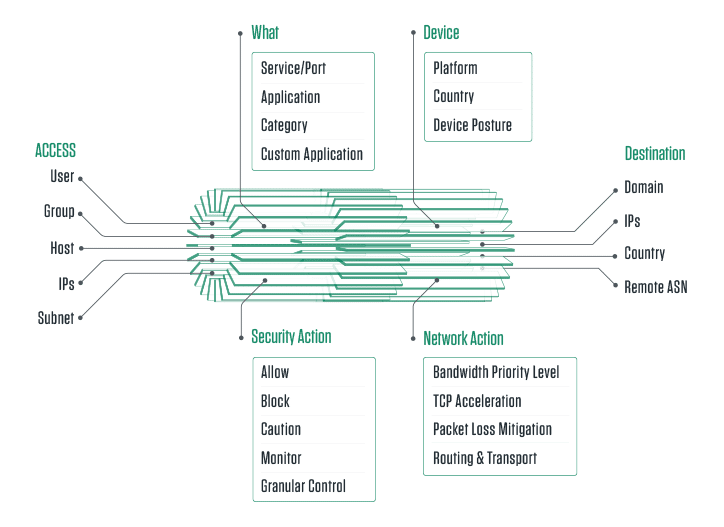

Each piece of context can typically be used for policy matching across both networking and security capabilities within Cato’s SASE Cloud. This includes elements like IP address, subnet, username, group membership, hostname, remote user, site, and more. Additionally, policy rules can be further refined based on application context, including application (custom applications too), application category, service, port range, domain name, and more. Rules are evaluated from the top of the rule list down, and the first rule that matches is the one applied.
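To make the first-match behavior concrete, here is a minimal Python sketch. It is not Cato's implementation or API: the `Rule` class, the `first_match` function, and the attribute names (`group`, `app_category`, and so on) are hypothetical, and matching is simplified to exact equality on each attribute.

```python
from dataclasses import dataclass, field


@dataclass
class Rule:
    """Hypothetical rule: a name, an action, and the context it must match."""
    name: str
    action: str
    # Attribute name -> required value. An empty dict matches every flow.
    match: dict = field(default_factory=dict)


def first_match(rules, flow):
    """Walk the ordered rule list and return the first rule whose
    match conditions are all satisfied by the flow, or None."""
    for rule in rules:
        if all(flow.get(key) == value for key, value in rule.match.items()):
            return rule
    return None


# Order matters: the more specific rule sits above the broader one.
rules = [
    Rule("block-finance-social", "block",
         {"group": "finance", "app_category": "social"}),
    Rule("allow-social", "allow", {"app_category": "social"}),
]

flow = {"user": "alice", "group": "finance",
        "site": "paris", "app_category": "social"}

matched = first_match(rules, flow)
print(matched.name)  # block-finance-social
```

If the two rules were swapped, the broader `allow-social` rule would match first and the finance-specific rule would never be reached, which is why rule order deserves attention in any first-match policy.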

A Close Look at Cato’s Networking Policy

Cato’s SASE Cloud comprises more than 75 (and growing) top-tier data center locations, each with multiple tier 1 ISP connections, which together form Cato’s global private backbone. Cato dynamically chooses the best route for your traffic, resulting in a more predictable and reliable connection to resources than the public Internet provides. Included features like QoS, TCP Acceleration, and Packet Loss Mitigation allow customers to fine-tune performance to their needs.

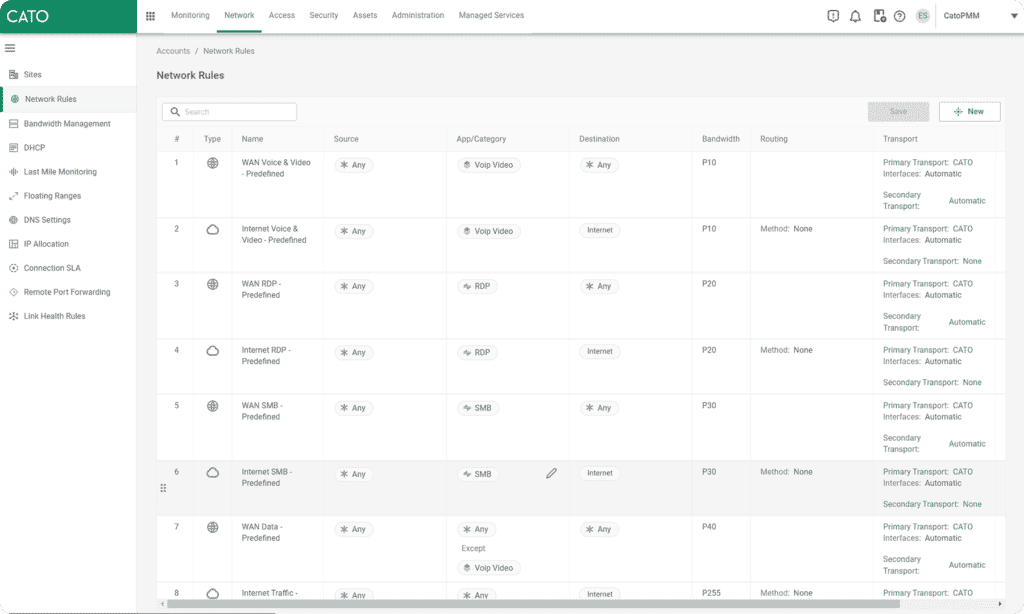

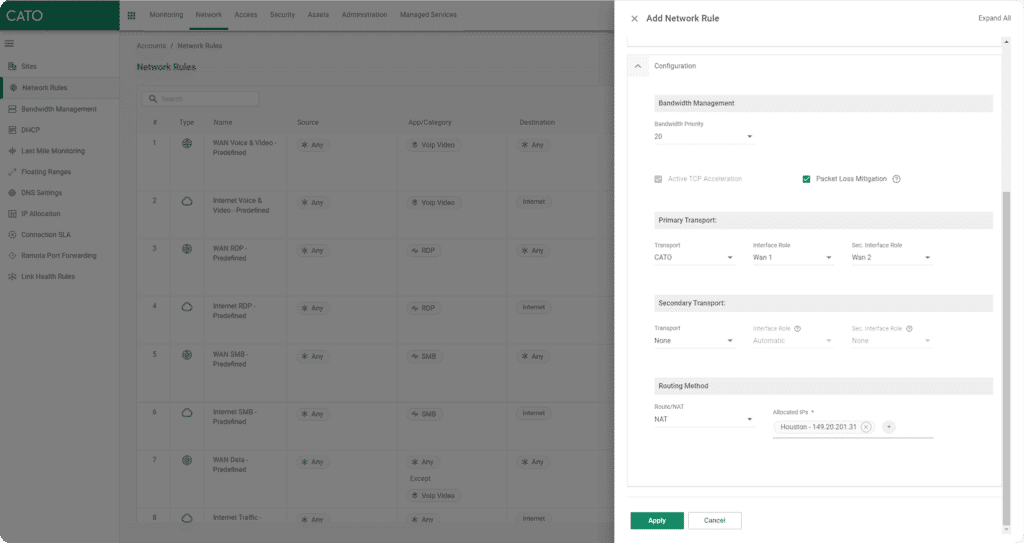

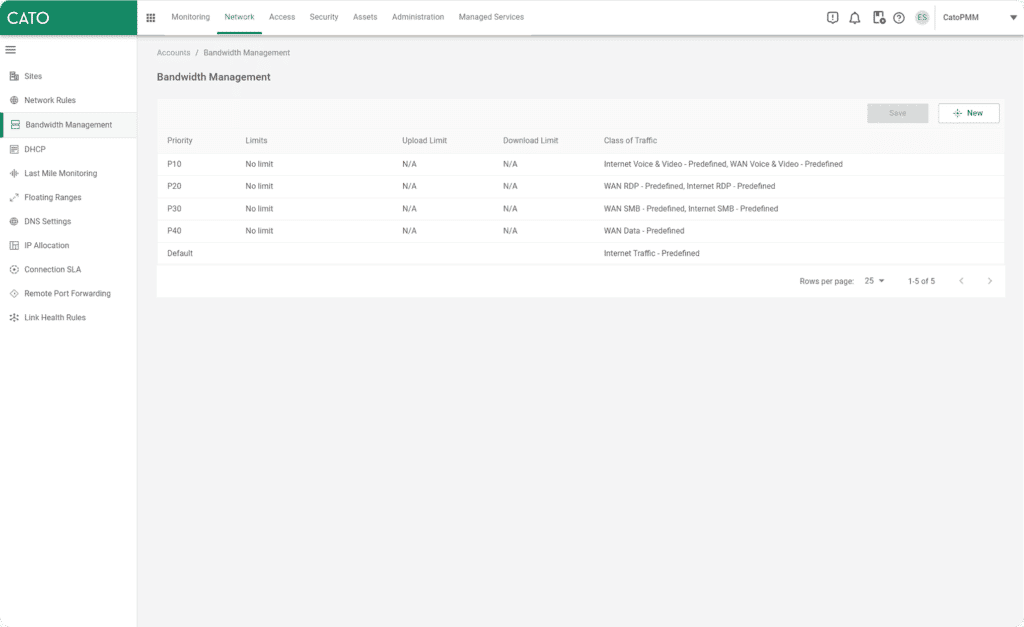

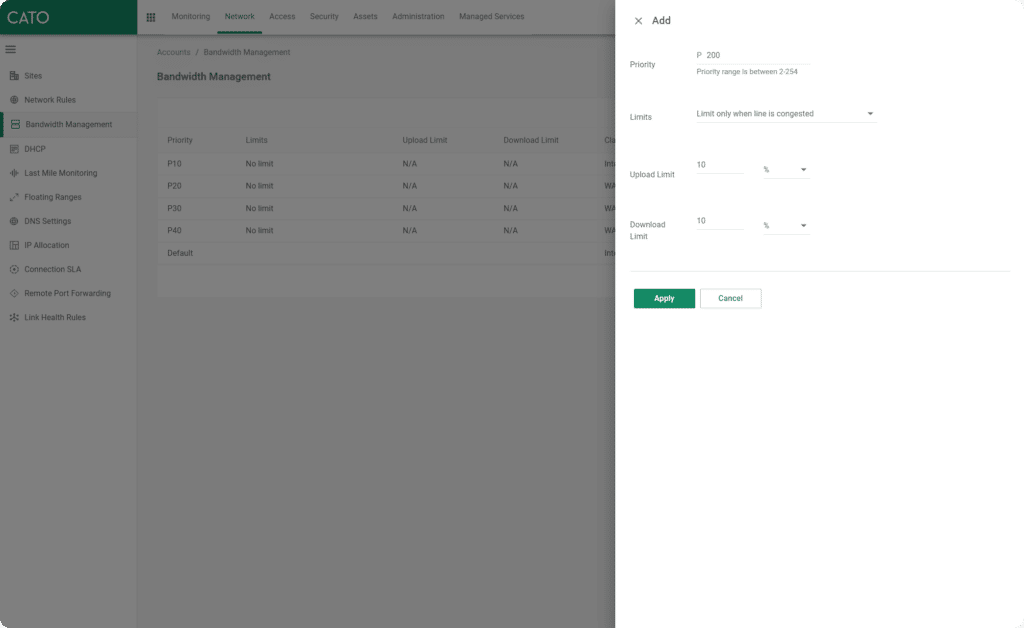

By default, the Cato Management Application has several pre-defined network rules and bandwidth priority levels to meet the most common use cases, but customers can quickly customize these policies or create their own rules based on the context types mentioned above. Customers can control the use of TCP Acceleration and Packet Loss Mitigation and assign a bandwidth priority level to the traffic. Additionally, traffic routing across Cato’s backbone is fully under the customer’s control, so traffic can egress from any of our PoPs to get as close to the destination as possible. You can even egress traffic from an IP address that is dedicated to your organization, all without opening a support ticket.

Cato’s Security Policies Share a Similar, Top-Down Logic

Cato’s security policies follow the same top-down logic and benefit from the same shared context as the network policy.

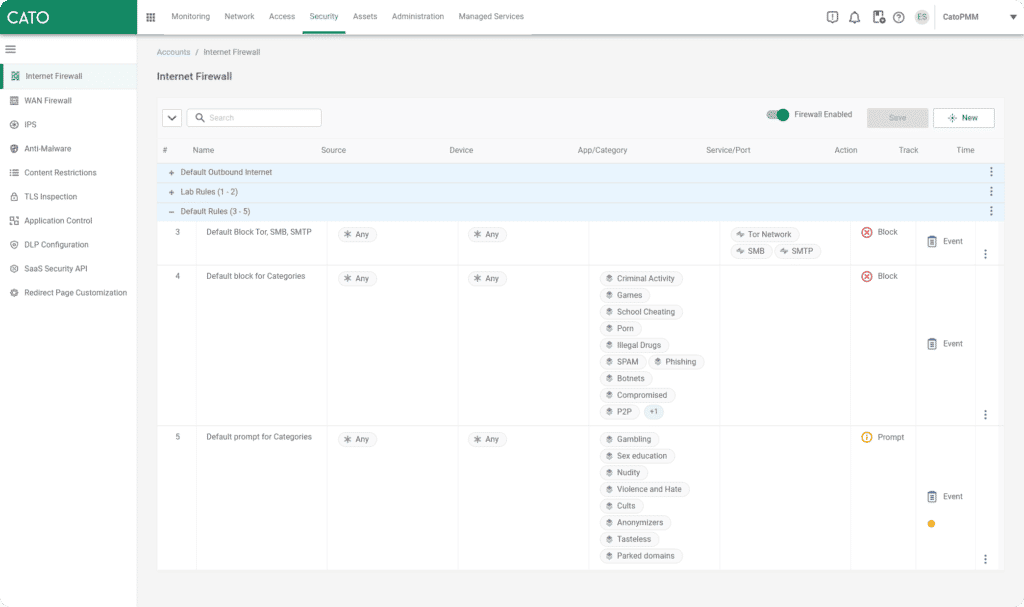

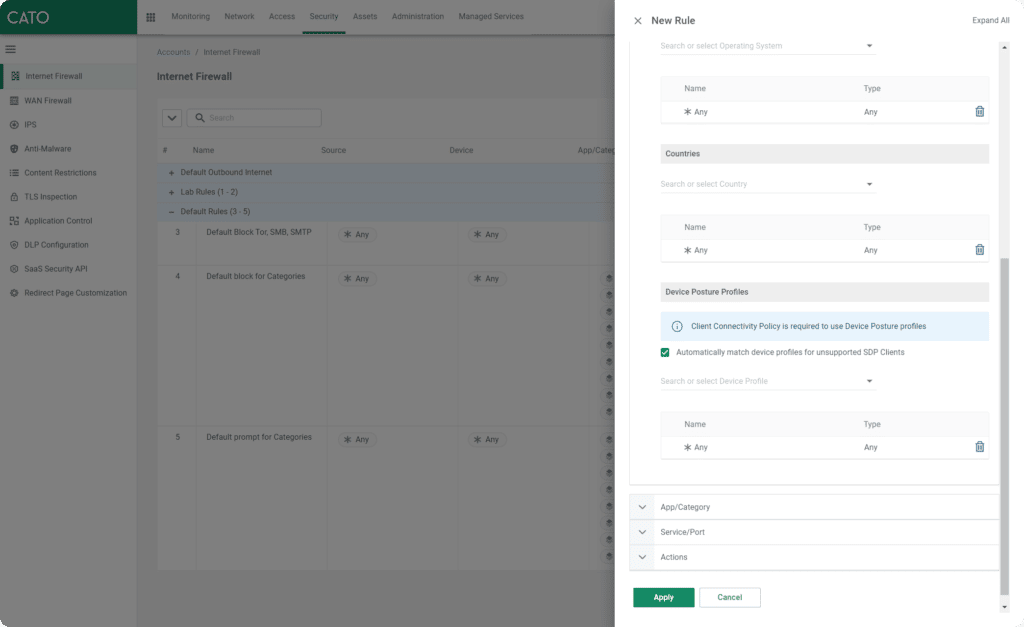

The Internet Firewall utilizes a block-list approach and is intended to enforce company-driven access policies to Internet websites and applications based on the application name, application category, port, protocol, and service. Unlike legacy security products, customers do not have to manage and attach multiple security profiles to their rules. All security engines (IPS, Anti-Malware, Next-Generation Anti-Malware) are enabled globally and scan all ports and protocols with exceptions created only when needed. This provides a consistent security posture for all users, locations, and devices without the pitfalls and misconfigurations of multiple security profiles.

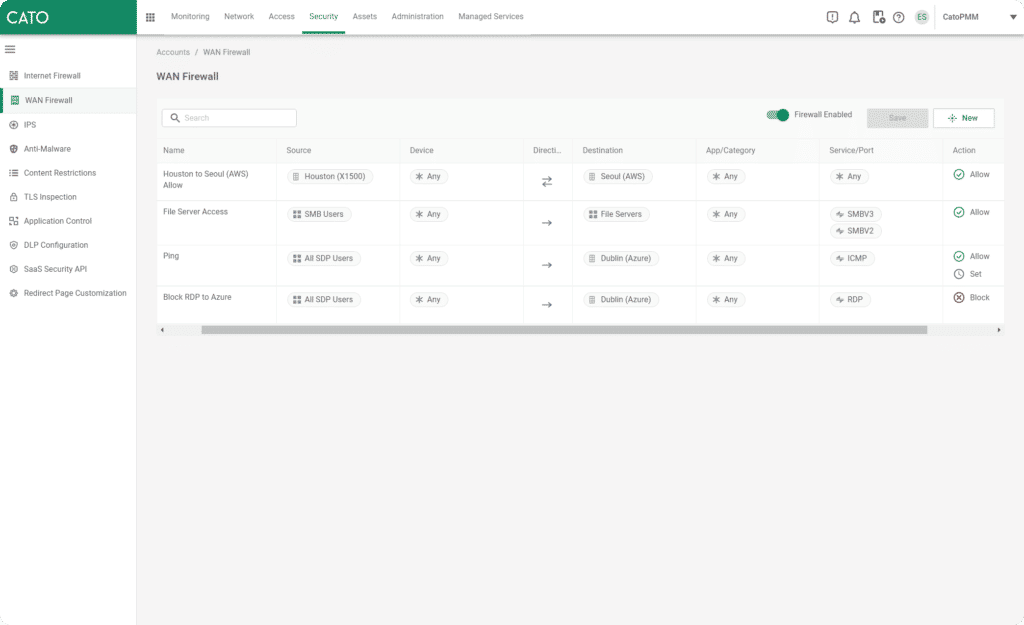

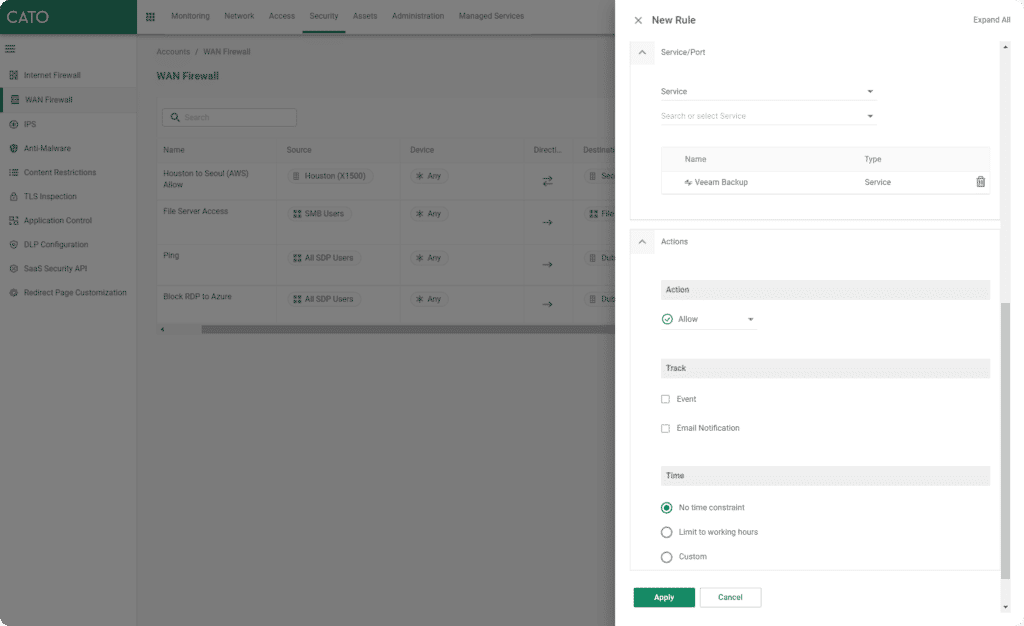

Cato’s WAN Firewall provides granular control of traffic between all connected edges (Site, Data Center, Cloud Data Center, and SDP User). Full mesh connectivity is possible, but the WAN Firewall takes an allow-list approach to encourage zero-trust access. The combination of source, destination, device, application, service, and other contexts is extremely flexible, allowing administrators to easily configure the necessary access between their users and locations. For example, typically only IT staff and management servers need to connect to mobile SDP users directly, and this can be allowed in just a few clicks. Likewise, if you want to allow all SMB traffic between a site where your users are and a site with your file servers, that can be done just as easily.
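The practical difference between the Internet Firewall's block-list approach and the WAN Firewall's allow-list approach is what happens to traffic that matches no rule. The Python sketch below illustrates that difference under simplifying assumptions. It is not Cato's implementation or API: the `Rule` class, the `evaluate` function, the site names, and the attribute names are all hypothetical, and matching is reduced to exact equality.

```python
from dataclasses import dataclass, field


@dataclass
class Rule:
    """Hypothetical rule: a name, an action, and the context it must match."""
    name: str
    action: str  # "allow" or "block"
    match: dict = field(default_factory=dict)


def evaluate(rules, flow, default_action):
    """Return the action of the first matching rule, top down.
    If no rule matches, fall back to default_action."""
    for rule in rules:
        if all(flow.get(key) == value for key, value in rule.match.items()):
            return rule.action
    return default_action


# Block-list model: rules name what to block; anything else is allowed.
internet_rules = [
    Rule("block-gambling", "block", {"app_category": "gambling"}),
]

# Allow-list model: rules name what to allow; anything else is blocked.
wan_rules = [
    Rule("smb-users-to-file-servers", "allow",
         {"source_site": "branch-office",
          "destination_site": "file-server-site",
          "service": "SMB"}),
]

news = {"app_category": "news"}
smb = {"source_site": "branch-office",
       "destination_site": "file-server-site", "service": "SMB"}
rdp = {"source_site": "branch-office",
       "destination_site": "file-server-site", "service": "RDP"}

print(evaluate(internet_rules, news, default_action="allow"))  # allow
print(evaluate(wan_rules, smb, default_action="block"))        # allow
print(evaluate(wan_rules, rdp, default_action="block"))        # block
```

The same evaluation loop serves both models. Only the fallback changes, and with a default of `block`, every permitted path between sites has to be stated explicitly, which is the property that makes an allow-list a natural fit for zero-trust access.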

More About Cato’s Additional Security Capabilities

Cato has additional security capabilities beyond what we’ve covered, including DLP and CASB, each with its own policy set. As we continue to develop and deploy new capabilities, you may see more added as well. But as with what you’ve seen so far, you can expect simple, easy-to-build policies with powerful granular controls based on the shared context of both networking and security engines. Of course, all policy and service controls will be delivered from a true single management point: the Cato Management Application.

Cato SSE 360 = SSE + Total Visibility and Control

For more information on Cato’s entire suite of converged network security capabilities, be sure to read our SSE 360 Whitepaper. Cato SSE 360 goes beyond Gartner’s defined scope for an SSE service, offering full visibility and control of all WAN, Internet, and cloud traffic. With configurable security policies that meet the needs of any enterprise IS team, see why Cato SSE 360 is different from traditional SSE vendors.