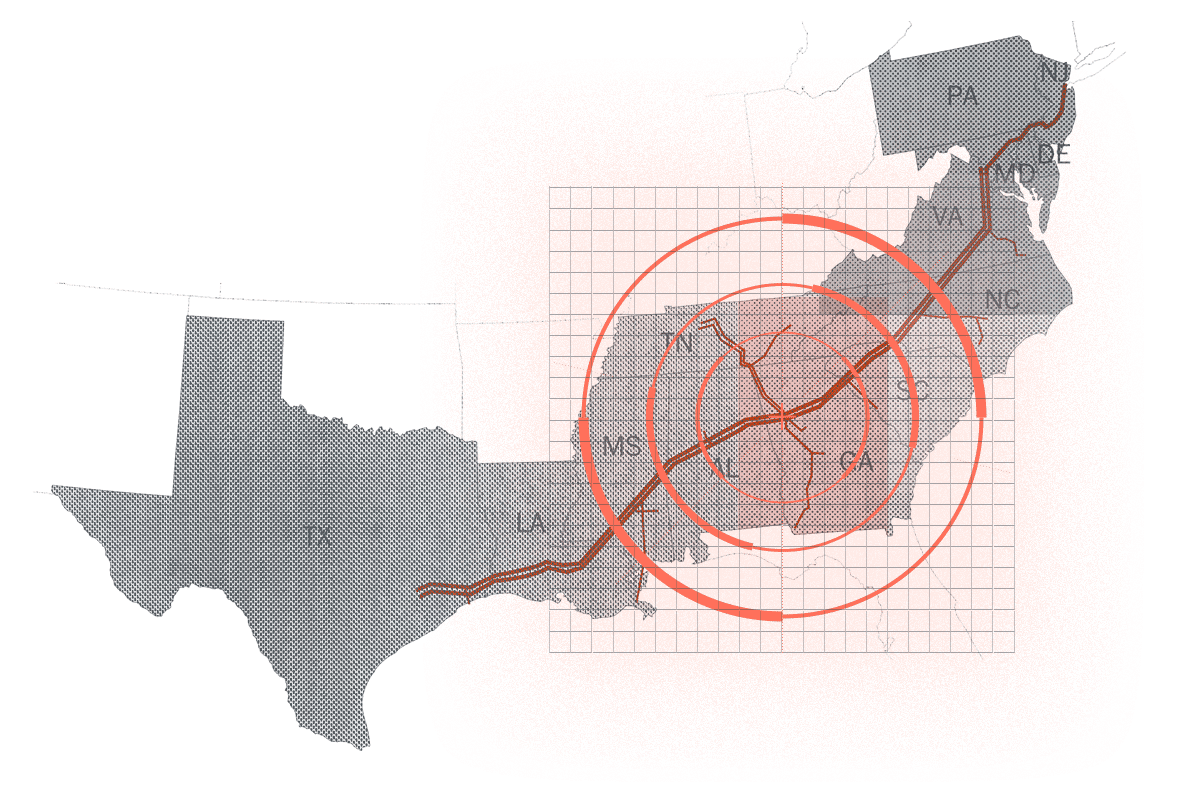

- Cato Patches Vulnerabilities in Java Spring Framework in Record Time
- Attackers are Zeroing in On Trust with New Device ID Twist
- The New Shadow IT: How Will You Defend Against Threats from Amazon Sidewalk and Other “Unknown Unknowns” on Your Network?
- New Cato Networks SASE Report Identifies Age-Old Threats Lurking on Enterprise Networks
- Targeting critical infrastructure has critical implications
- New Microsoft Exchange Vulnerability Disclosed
- The Biggest Misconception About Zero-Day Attacks
- How to Improve Elasticsearch Performance by 20x for Multitenant, Real-Time Architectures
- Threat actors are testing the waters with (not so) new attacks against ICS systems
- Emotet Botnet: What It Means for You