The DGA Algorithm Used by Dealply and Bujo Campaigns

During a recent malware hunt[1], the Cato research team identified some unique attributes of DGA algorithms that can help security teams automatically spot malware on their network.

The “Shimmy” DGA

DGAs (Domain Generation Algorithms) are used by attackers to generate a large number of – you guessed it – domains, often used for C&C servers. Spotting DGAs can be difficult without a clear, searchable pattern.

Cato researchers began by collecting traffic metadata from malicious Chrome extensions to their C&C services. Cato maintains a data warehouse built from the metadata of all traffic flows crossing its global private backbone. We analyze those flows for suspicious traffic to hunt threats on a daily basis.

The researchers were able to identify the same traffic patterns and network behavior in traffic originating from 80 different malicious Chrome extensions, which were identified as belonging to the Bujo, Dealply and ManageX families of malicious extensions. By examining the C&C domains, researchers observed an algorithm used to create the malicious domains. In many cases, DGAs appear as random characters. In some cases, the domains contain numbers, and in other cases the domains are very long, making them look suspicious.

Here are a few examples of the C&C domains (full domain list at the end of this post):

qalus.com

jurokotu.com

bunafo.com

naqodur.com

womohu.com

bosojojo.com

mucac.com

kuqotaj.com

bunupoj.com

pocakaqu.com

wuqah.com

dubocoso.com

sanaju.com

lufacam.com

cajato.com

qunadap.com

dagaju.com

fupoj.com

The most obvious trait the domains have in common is that they all use the “.com” TLD (Top-Level Domain). Also, all the prefixes are five to eight letters long.

There are other factors shared by the domains. For one, they all start with a consonant and then alternate between consonants and vowels, so that every domain follows the pattern consonant + vowel + consonant + vowel + consonant, etc. As an example, removing the TLD from the jurokotu.com domain leaves “jurokotu”, and marking each letter as a consonant (C) or a vowel (V) shows the pattern: j-u-r-o-k-o-t-u is C-V-C-V-C-V-C-V.

From the domains we collected, we could see that the adversaries used the vowels: o, u and a, and consonants: q, m, s, p, r, j, k, l, w, b, c, n, d, f, t, h, and g. Clearly, an algorithm has been used to create these domains and the intention was to make them look as close to real words as possible.
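The traits described above (a “.com” TLD, a prefix of five to eight letters, and strictly alternating consonants and vowels drawn from the observed letter sets) can be turned into a simple candidate filter. The following is a minimal sketch, not the attackers' actual generator: the function name `looks_like_shimmy` is hypothetical, and the letter sets are only the ones seen in the collected domains.

```python
import re

# Letter sets reported in the post for the observed domains (assumption:
# the generator uses no letters beyond these).
CONSONANTS = "bcdfghjklmnpqrstw"
VOWELS = "aou"

# One or more consonant+vowel pairs, optionally closed by a trailing consonant.
SHIMMY_LABEL = re.compile(rf"^(?:[{CONSONANTS}][{VOWELS}])+[{CONSONANTS}]?$")


def looks_like_shimmy(domain: str) -> bool:
    """Return True if a second-level domain matches the observed pattern."""
    # Accept defanged indicators such as "qalus[.]com".
    name = domain.strip().lower().replace("[.]", ".")
    label, _, tld = name.partition(".")
    if tld != "com":
        return False  # also rejects names with extra subdomain labels
    if not 5 <= len(label) <= 8:
        return False
    return SHIMMY_LABEL.match(label) is not None


if __name__ == "__main__":
    for candidate in ("qalus.com", "jurokotu[.]com", "google.com", "banana.com"):
        print(candidate, looks_like_shimmy(candidate))
```

Ordinary words such as “banana” also fit this pattern, so a match is best treated as a reason to look closer and combined with other signals rather than as a verdict.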

“Shimmy” DGA infrastructure

A few additional notable findings are related to the same common infrastructure used by all the C&C domains.

All domains are registered using the same registrar - Gal Communication (CommuniGal) Ltd. (GalComm), which was previously associated with registration of malicious domains [2].

The domains are also classified as ‘uncategorized’ by classification engines, another sign that these domains are being used by malware. Trying to access the domains via a browser will get you either a landing page or HTTP ERROR 403 (Forbidden). However, we believe that there are server controls that allow access to the malicious extensions based on specific HTTP headers.

All domains resolve to IP addresses belonging to Amazon AWS, part of AS16509. The domains do not share the same IP, and from time to time the IP for a particular domain appears to change dynamically, as can be seen in this example:

| Domain | IP address | Date (DD/MM/YYYY) |
| --- | --- | --- |
| tawuhoju.com | 13.224.161.119 | 14/04/2021 |
| tawuhoju.com | 13.224.161.119 | 15/04/2021 |
| tawuhoju.com | 13.224.161.22 | 23/04/2021 |
| tawuhoju.com | 13.224.161.22 | 24/04/2021 |
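Spotting this kind of IP rotation in resolution logs is easy to automate. Here is a minimal sketch that replays the four observations above and reports each point where the resolved address changes. The record layout and the `ip_changes` helper are assumptions made for illustration, not part of any Cato tooling.

```python
from datetime import date

# Resolution samples taken from the example above: (domain, ip, date observed).
observations = [
    ("tawuhoju.com", "13.224.161.119", date(2021, 4, 14)),
    ("tawuhoju.com", "13.224.161.119", date(2021, 4, 15)),
    ("tawuhoju.com", "13.224.161.22", date(2021, 4, 23)),
    ("tawuhoju.com", "13.224.161.22", date(2021, 4, 24)),
]


def ip_changes(records):
    """Return (domain, old_ip, new_ip, date_seen) for each change of resolved IP."""
    last_ip = {}
    changes = []
    # Process each domain's records in chronological order.
    for domain, ip, seen in sorted(records, key=lambda r: (r[0], r[2])):
        previous = last_ip.get(domain)
        if previous is not None and previous != ip:
            changes.append((domain, previous, ip, seen))
        last_ip[domain] = ip
    return changes


if __name__ == "__main__":
    for change in ip_changes(observations):
        print(change)
```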

Wrapping Up

Given all this evidence, it’s clear to us that the infrastructure used in these campaigns leverages AWS and that it is a very large campaign. We identified many connection points between 80 C&C domains, identifying their DGA and infrastructure. These findings can be used to identify the C&C communication and infected machines by analyzing network traffic. Security teams can now use these insights to identify the traffic from malicious Chrome extensions.

IOC

bacugo[.]com

bagoj[.]com

baguhoh[.]com

bosojojo[.]com

bowocofa[.]com

buduguh[.]com

bujot[.]com

bunafo[.]com

bunupoj[.]com

cagodobo[.]com

cajato[.]com

copamu[.]com

cusupuh[.]com

dafucah[.]com

dagaju[.]com

dapowar[.]com

dubahu[.]com

dubocoso[.]com

dudujutu[.]com

focuquc[.]com

fogow[.]com

fokosul[.]com

fupoj[.]com

fusog[.]com

fuwof[.]com

gapaqaw[.]com

garuq[.]com

gufado[.]com

hamohuhu[.]com

hodafoc[.]com

hoqunuja[.]com

huful[.]com

jagufu[.]com

jurokotu[.]com

juwakaha[.]com

kocunolu[.]com

kogarowa[.]com

kohaguk[.]com

kuqotaj[.]com

kuquc[.]com

lohoqoco[.]com

loruwo[.]com

lufacam[.]com

luhatufa[.]com

mocujo[.]com

moqolan[.]com

muqudu[.]com

naqodur[.]com

nokutu[.]com

nopobuq[.]com

nopuwa[.]com

norugu[.]com

nosahof[.]com

nuqudop[.]com

nusojog[.]com

pocakaqu[.]com

ponojuju[.]com

powuwuqa[.]com

pudacasa[.]com

pupahaqo[.]com

qaloqum[.]com

qotun[.]com

qufobuh[.]com

qunadap[.]com

qurajoca[.]com

qusonujo[.]com

rokuq[.]com

ruboja[.]com

sanaju[.]com

sarolosa[.]com

supamajo[.]com

tafasajo[.]com

tawuhoju[.]com

tocopada[.]com

tudoq[.]com

turasawa[.]com

womohu[.]com

wujop[.]com

wunab[.]com

wuqah[.]com

References:

[1] https://www.catonetworks.com/blog/threat-intelligence-feeds-and-endpoint-protection-systems-fail-to-detect-24-malicious-chrome-extensions/

[2] https://awakesecurity.com/blog/the-internets-new-arms-dealers-malicious-domain-registrars/

3 Principles for Effective Business Continuity Planning

Business continuity planning (BCP) is all about being ready for the unexpected. While BCP is a company-wide effort, IT plays an especially important role in maintaining business operations, with the task of ensuring redundancy measures and backup for data centers in case of an outage.

With enterprises migrating to the cloud and adopting a work-from-anywhere model, BCP today must also include continual access to cloud applications and support for remote users. Yet, the traditional network architecture (MPLS connectivity, VPN servers, etc.) wasn’t built with cloud services and remote users in mind. This inevitably introduces new challenges when planning for business continuity today, not to mention the global pandemic in the background.

Three Measures for BCP Readiness

In order to guarantee continued operations to all edges and locations, at all times – even during a data center or branch outage – IT needs to make sure the answer to all three questions below is YES.

Can you provide access to data and applications according to corporate security policies during an outage?

Are applications and data repositories as accessible and responsive during an outage as during normal operations?

Can you continue to support users and troubleshoot problems effectively during an outage?

If you can’t answer YES to all the above, then it looks like your current network infrastructure is inadequate to ensure business continuity when it comes to secure data access, optimized user experience, and effective visibility and management.

The Challenges of Legacy Networks

Secure Data Access

When a data center is down, branches connect to a secondary data center until the primary one is restored. But does that guarantee business operations continue as usual? Although data replication may have operated within requisite RTO/RPO, users may be blocked from the secondary data center, requiring IT to update security policies across the legacy infrastructure in order to enable secure access.

When a branch office is down, users work remotely, connecting back via the Internet to the VPN in the data center. Yet VPN wasn’t designed to support an entire remote workforce simultaneously, forcing IT to add VPN servers to address the surge of remote users, who also generate more Internet traffic, resulting in the need for a bandwidth upgrade. If a company runs branch firewalls with VPN access, challenges become even more significant, as IT must plan for duplicating these capabilities as well.

Optimized User Experience

When a data center is down, users can access applications from the secondary data center. But, if the performance of these applications relies on WAN optimization devices, IT will need to invest further in WAN optimization at the secondary data center, otherwise data transfer will slow down to a crawl. The same is true for cloud connections. If a premium cloud connection is used, these capabilities must also be replicated at the secondary data center.

When a branch office is down, remote access via VPN is often complicated and time-consuming for users. When accessing cloud applications, traffic must be backhauled to the data center for inspection, adding delay and further undermining user experience. The WAN optimization devices required for accelerating branch-datacenter connections are no longer available, further crippling file transfers and application performance. In addition, IT needs to configure new QoS policies for remote users.

Effective Visibility and Management

When a data center is down, users continue working from branch offices, and thus user management should remain the same. This requires IT to replicate management tools to the secondary data center in order to maintain user support, troubleshooting, and application management.

When a branch office is down, IT needs user management and traffic monitoring tools that can support remote users. Such tools must be integrated with existing office tools to avoid fragmenting visibility by maintaining separate views of remote and office users.

BCP Requires a New Architecture

Legacy enterprise networks are composed of point solutions with numerous components – different kinds of network services and cloud connections, optimization devices, VPN servers, firewalls, and other security tools – all of which can fail.

BCP needs to consider each of these components; capabilities need to be replicated to secondary data centers and upgraded to accommodate additional loads during an outage. With so much functionality concentrated in on-site appliances, effective BCP becomes a nearly impossible task, not to mention the additional time and money required to ensure business continuity in a legacy network environment.

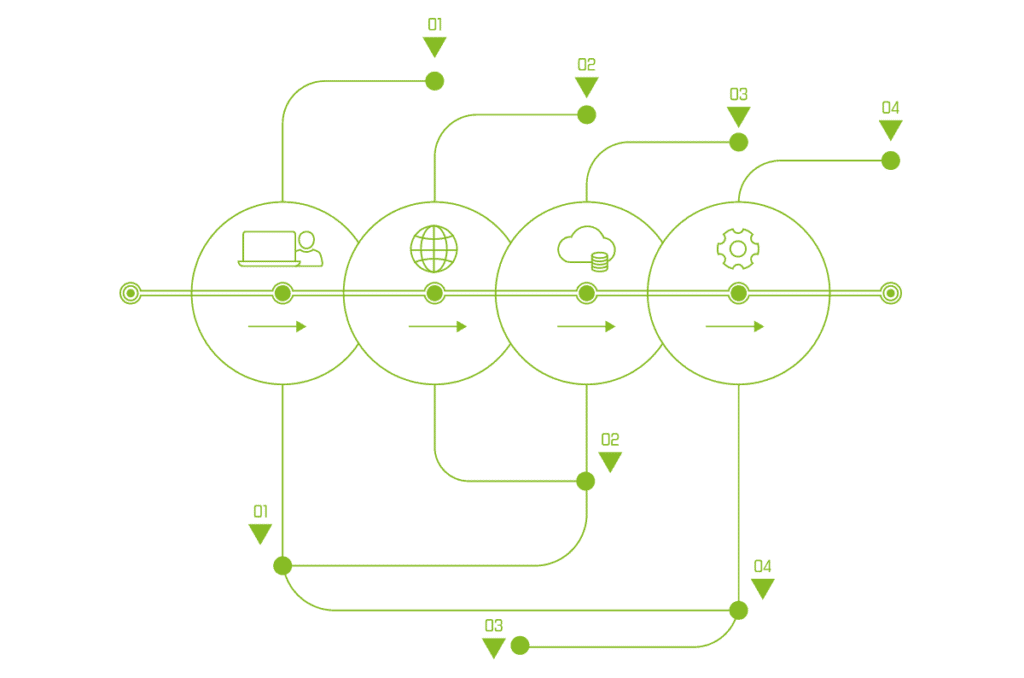

SASE: The Architecture for Effective BCP

SASE provides an adequate underlying infrastructure for BCP in today’s digital environment. With SASE, a single, global network connects and secures all sites, cloud resources, and remote users. There are no separate backbones for site connectivity, dedicated cloud connections for optimized cloud access, or additional VPN servers for remote access.

As such, there’s no need to replicate these capabilities for BCP. The SASE network is a full mesh, where the loss of a site can’t impact the connectivity of other locations. Access is restricted by a suite of security services running in cloud-native software built into the PoPs that comprise the SASE cloud. With optimization and self-healing built into the SASE service, enterprises receive a global infrastructure designed for effective BCP.

Happy Hunting: A New Approach to Finding Malware Cross-Correlates Threat Intelligence Feeds to Reduce Detection Time

With SOC teams inundated by thousands of security alerts every day, CISOs, SOC managers and researchers need more effective means of prioritizing security alerts. Best practices have urged us to start with alerts on the most critical resources. Such an approach, while valid, can leave security analysts chasing after millions of alerts, many of which turn out to be false positives.

We at Cato Networks Research Labs recently developed a different approach for our security team that we found to be remarkably effective. Our approach uses threat intelligence (TI) feeds to automatically identify top true-positive risks with high confidence. Here’s how we did it and some of the findings we learned along the way.

Correlating Threat Intelligence Feeds to Find Top Risk Malware

Our approach starts by identifying commonalities across TI feeds. Yes, alone, that’s nothing new. Normally, security analysts will try to eliminate false positives by looking for Indicators of Compromise (IoCs) that appear in multiple TI feeds. But how many TI feeds are enough to determine that one is valid? That’s the question and one we’ve now been able to answer.

We took 525 million real network traffic flows and matched them against 45 different feeds containing 1.3M malicious domain IoCs. As shown in the graph, 0.46% of the total network flows had IoCs that were hit by at least one feed.

With a simple query we cross-matched malicious domains to TI feeds. The number of matched flows falls off exponentially as the number of matching feeds grows, which leaves a manageable number of flows to evaluate (see graph). Moreover, our research found that 66.66% of the network flows correlated across five feeds were malware C&C communication. For network flows that matched six feeds, 100% of the flows were malware C&C communication. Bottom line, we found that while specific cases may vary (like the number of feeds, their quality, etc.), IoCs identified across five or more feeds are worth investigating and yield a very good rate of malware C&C communications.

[caption id="attachment_13156" align="aligncenter" width="1320"] Figure 1: Cross-Matching Security Alerts vs Threat Intelligence Feeds (# alerts & matched hits in feeds)[/caption]
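The cross-matching step can be sketched in a few lines. The example below is an illustration under stated assumptions rather than the query we ran: feeds are modeled as a dictionary mapping a feed name to a set of domains, the function names `feed_hits` and `prioritize` are hypothetical, and the default threshold of five feeds comes from the finding above.

```python
from collections import Counter


def feed_hits(feeds):
    """Count, for every domain, how many distinct feeds list it."""
    hits = Counter()
    for domains in feeds.values():
        # A set ensures a domain repeated within one feed is counted once.
        hits.update({d.lower() for d in domains})
    return hits


def prioritize(flow_domains, feeds, min_feeds=5):
    """Return (domain, feed_count) pairs seen in traffic and listed by enough feeds."""
    hits = feed_hits(feeds)
    matched = {
        d.lower(): hits[d.lower()]
        for d in flow_domains
        if hits[d.lower()] >= min_feeds
    }
    # Highest feed count first, then alphabetical for stable output.
    return sorted(matched.items(), key=lambda kv: (-kv[1], kv[0]))


if __name__ == "__main__":
    # Hypothetical feeds and flows using reserved example domains.
    feeds = {f"feed{i}": {"bad.example"} for i in range(6)}
    feeds["feed0"].add("noisy.example")
    flows = ["bad.example", "noisy.example", "clean.example"]
    print(prioritize(flows, feeds))
```

Lowering `min_feeds` widens the net at the cost of more false positives; the right value depends on how many feeds are used and on their quality.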

Examples of Catching Malware Faster with New Cross-Correlation Approach

Using this approach, we identified three examples of malware on our customer networks: a Worm Cryptominer, Conficker malware, and a malicious Chrome extension.

PCASTLE Worm Cryptominer

The first catch is communication with a malicious domain by PCASTLE, a Worm-Cryptominer. PCASTLE is based on PowerShell and Python, infects victims by moving laterally in the network using vulnerabilities like EternalBlue, and mines cryptocurrency on the infected machines.

PCASTLE attempts to communicate with the pp.abbny[.]com and info.abbny[.]com domains, using the URL as the infected machine identifier and additional information:

[caption id="attachment_13158" align="aligncenter" width="902"] Figure 2: A traffic attempt to the malicious domain. The URL includes information about the infected machine[/caption]

[caption id="attachment_13160" align="aligncenter" width="1381"] Figure 3: A traffic attempt to the malicious domain. The URL includes information about the infected machine[/caption]

As part of this infection investigation, we could see traffic directed to download additional packages to install on the infected machine from a different domain, bddp[.]net, using different URLs:

[caption id="attachment_13162" align="aligncenter" width="902"] Figure 4: Attempts to download additional malware[/caption]

Conficker Malware

Another fast malware discovery uncovered what seems like a newly registered domain of the famous Conficker malware. This malware exploits flaws in Windows to propagate across the network to form a botnet.

The domain uxfdsnkg[.]info, registered on October 1, 2020, was identified in network flows on October 4, 2020 (three days after registration), along with additional indicators (like HTTP headers and URL) that relate to Conficker malware:

[caption id="attachment_13164" align="aligncenter" width="902"] Figure 5: Domain(IoC), IP Address(IoC), url and http headers of Conficker C&C communication[/caption]
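The gap between registration and first sighting is itself a useful signal. As a minimal sketch, the age check can be written as below; the 30-day cutoff and the function names are assumptions chosen for illustration, and the registration date would normally come from WHOIS or a similar source.

```python
from datetime import date


def domain_age_days(registered: date, first_seen: date) -> int:
    """Number of days between domain registration and first sighting in traffic."""
    return (first_seen - registered).days


def is_newly_registered(registered: date, first_seen: date, max_age_days: int = 30) -> bool:
    """Flag domains first seen shortly after registration (cutoff is an assumption)."""
    age = domain_age_days(registered, first_seen)
    return 0 <= age <= max_age_days


if __name__ == "__main__":
    # Dates from the Conficker example above.
    print(domain_age_days(date(2020, 10, 1), date(2020, 10, 4)))
    print(is_newly_registered(date(2020, 10, 1), date(2020, 10, 4)))
```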

Malicious Chrome Extension

Finally, we identified communication back to a C&C server at pingclock[.]net, a malicious domain identified by several TI feeds. Searching the URI parameters and domain on the web, the suspicious traffic was identified to be related to Lnkr, per this research.

[caption id="attachment_13166" align="aligncenter" width="1381"] Figure 6: Domain(IoC), url, browser type, and user-agent of LNKR C&C communication[/caption]

The Lnkr malware uses an installed Chrome extension to track a user’s browsing activity and overlays ads on legitimate sites. It’s a common monetizing technique on the Internet.

Prioritizing Security Alerts using TI Feeds Lowers Your Malware Hunting Risks

With this new cross-correlation approach, we automated malware hunting for prioritizing security analysis and gaining higher SOC confidence. While not all malware can be hunted using threat intelligence feeds, and not all threat intelligence alerts contain evidence of C&C communication, matched data from overlapping TI feeds proved to be a good indicator for SOC managers to focus and direct further malware analysis.

With a simple cross-matching query, SOC teams gain an important tool for high-priority threat hunting of network traffic. They can evaluate and block traffic based on Threat Intelligence feeds from several different sources. To incorporate this approach in your SOC, it’s highly recommended to use more than one source of Threat Intelligence. We’d love to hear how it works for you.

SIDEBAR

IoCs To Watch Out For

Conficker C&C domain:

uxfdsnkg[.]info

LNKR Chrome Extension C&C domain:

pingclock[.]net

PCASTLE C&C and downloader domains:

pp.abbny[.]com

info.abbny[.]com/e.png

bddp[.]net

Sunburst: How Will You Protect Yourself from the Next Attack?

On December 8, FireEye reported that it had been compromised by a highly sophisticated state-sponsored adversary, which stole many tools used by FireEye’s red team, the team that plays the role of an attacker in penetration testing. Following an investigation, on December 13, FireEye and Microsoft published a technical report, pointing out that the adversary gained access to FireEye’s network via a trojan (named Sunburst) in SolarWinds Orion.

SolarWinds Orion is a management platform that allows organizations to monitor and manage the entire IT stack – VMs, network devices, databases and more. The Orion platform requires full administrative access to those resources, which makes compromising Orion very sensitive.

According to SolarWinds, the trojan was inserted into the Orion platform and its updates between March and June 2020, through its build process. Orion’s source code was not infected. SolarWinds Orion has 33,000 customers, and SolarWinds believes that 18,000 customers may have downloaded the trojanized Orion version. More than 425 of the US Fortune 500 companies use SolarWinds products.

Within a few minutes of identifying Sunburst’s IoCs, all Cato customers were protected against the trojan. Our detection and prevention engines were updated, and all users with Sunburst on their network were notified. (Read this blog to better understand the value of SASE and Cato’s response.) Non-Cato customers, or those already infected with Sunburst, should follow the Cybersecurity and Infrastructure Security Agency (CISA) guidelines and the SolarWinds Security Advisory.

But here’s the question: If endpoint detection and response (EDR) and antimalware were insufficient to protect the biggest companies in the world, how then can any enterprise expect to protect itself from such attacks in the future?

Sunburst: A Remarkably Sophisticated Attack

To answer that question, you need to understand Sunburst. The trojan managed to stay alive and hidden for roughly nine months, making it one of the most sophisticated attacks we’ve seen in the past decade. The trojan did this by using many evasive techniques and carefully choosing its targets.

Evasive techniques began at the outset. The trojanized updates were digitally signed and loaded as DLLs as part of the SolarWinds Business Layer component. This is particularly important as it rendered the trojan undetectable by most EDR systems. The trojan also only starts running 12 or more days after the infection date, which made it hard to identify its infection channel (the update on the specific date). Finally, the trojan only runs if the system is attached to a domain and certain registry keys are set to specific values.

Once executed, the adversaries obtain administrative access to the different assets that are managed by the SolarWinds platform by gaining access to Orion’s privileges and certificates. The adversaries use these credentials to move laterally across the network and access the infected organization’s assets.

Sunburst also tries to evade detection by using sophisticated, multi-stage C&C communication. The first and main network footprint of Sunburst is its C&C communication with the avsvmcloud[.]com domain, in the following format:

(DGA).appsync-api.{region}.avsvmcloud.com

Where {region} can be one of: eu-west-1, eu-west-2, us-east-1, us-east-2.
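The hostname format above lends itself to a simple match against DNS or proxy logs. The sketch below is one way to express it; the assumption that the DGA part is a single label of lowercase letters and digits is ours (the format above does not spell out its alphabet), and `is_sunburst_host` is a hypothetical helper name.

```python
import re

# Regions listed above.
REGIONS = ("eu-west-1", "eu-west-2", "us-east-1", "us-east-2")

# Assumption: the DGA-generated part is one label of letters and digits.
SUNBURST_HOST = re.compile(
    r"^[a-z0-9]+\.appsync-api\.(?:"
    + "|".join(re.escape(region) for region in REGIONS)
    + r")\.avsvmcloud\.com\.?$"
)


def is_sunburst_host(hostname: str) -> bool:
    """Return True if the hostname fits the Sunburst C&C format."""
    # Normalize case and accept defanged indicators such as "avsvmcloud[.]com".
    name = hostname.strip().lower().replace("[.]", ".")
    return SUNBURST_HOST.match(name) is not None


if __name__ == "__main__":
    # The subdomain below is made up for illustration.
    print(is_sunburst_host("abc123def.appsync-api.us-east-1.avsvmcloud.com"))
    print(is_sunburst_host("api.solarwinds.com"))
```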

Sunburst uses a DGA (Domain Generation Algorithm) to generate unique subdomains for C&C communication. With no single subdomain to detect and block, the adversary can better avoid detection.

What’s more, if the domain resolves to an IP on a blocked IP range (a block list), Sunburst will stop executing and add a key to the registry to avoid further runs and detection. Once the domain is resolved and initial communication is complete, the adversaries know that communication is possible and which organization they have reached. They can then move on to the next phase of exfiltrating data by communicating with a C&C server in one of nine other domains.

If that’s not enough to avoid detection, Sunburst sends data to the C&C server by creating a covert channel over TLS and using SolarWinds’ Orion Improvement Program (OIP) protocol that is normally used to send telemetry data. A telemetry channel is an approved communication channel that communicates on a regular basis with its destination, much as malware C&C communication does.

As we’ve seen in Cobalt Strike, Sunburst uses the attributes of a legitimate protocol to communicate and avoid detection. In this case, the HTTP patterns of the Orion Improvement Program protocol have been used but with a different domain (normally, api.solarwinds.com). As an example, the URIs ‘/swip/Events’ and ‘/swip/upd/SolarWinds.CortexPlugin.Components.xml’, which are used by SolarWinds, are also used in Sunburst.

Detection and Post-Infection Analysis

What should be clear is that stopping such attacks with EDR or antimalware alone is very challenging, if not impossible. However, these threats continue to require the network to exfiltrate data and propagate across the network. By looking at those properties, enterprises can at the very least detect such threats in the future and stop them before they cause harm.

Cato’s MDR team identifies trojans, like Sunburst, during threat hunting by leveraging several characteristics of the Cato platform. Sunburst C&C communication, for example, occurs over HTTPS, which makes line-rate TLS inspection vital. While inspecting the traffic, the specific attribute to note is the popularity of the avsvmcloud[.]com domain. Across Cato customers, the domain’s popularity was very low prior to December 8, 2020. An unfamiliar destination with questionable trust should raise alarms for anyone. Our MDR metrics would also spot DGA usage. Finally, periodic traffic to the C&C server at avsvmcloud[.]com, accessing a subdomain generated by a DGA, would flag Sunburst traffic as suspicious. You wouldn’t expect outbound Internet traffic from Orion to non-SolarWinds websites for updates, content, and sharing telemetry or your own assets.
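One of the signals mentioned above, periodic traffic to a destination, can be approximated by measuring how regular the gaps between connections are. The sketch below uses the coefficient of variation of those gaps. It is a generic illustration, not Cato's MDR logic, and the thresholds (`max_cv`, `min_events`) are assumptions that would need tuning, since real malware often adds jitter to its timing.

```python
from statistics import mean, pstdev


def beacon_score(timestamps):
    """Coefficient of variation of gaps between connections (lower = more regular)."""
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return None  # not enough data to judge regularity
    return pstdev(gaps) / mean(gaps)


def looks_periodic(timestamps, max_cv=0.1, min_events=5):
    """Flag a series of connection times (in seconds) as beacon-like."""
    if len(timestamps) < min_events:
        return False
    score = beacon_score(timestamps)
    return score is not None and score <= max_cv


if __name__ == "__main__":
    regular = [0, 60, 120, 180, 240, 300]   # one connection per minute
    bursty = [0, 5, 200, 210, 900, 905]     # irregular, human-like activity
    print(looks_periodic(regular), looks_periodic(bursty))
```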

Network-Based Threat Hunting is Crucial

As threat actors become more sophisticated, enterprises need to be more proactive about hunting threats. And it's not just governmental organizations or financial institutions that need to be concerned with threat hunting. Every enterprise should ‘assume breach’ and act every day to identify unknown threats within their networks. Only then will you be protected from the next Sunburst.

The Newest Cisco Vulnerabilities Demonstrate All That’s Wrong with Today’s Patching Processes

Last month’s security advisories published by Cisco Security reveal several significant vulnerabilities in Cisco IOS and IOS XE software. Overall, there were 28 high impact and 13 medium impact vulnerabilities in these advisories, with a total of 46 new CVEs. All Cisco products running IOS were impacted, including IOS XR Software, NX-OS Software, and RV160 VPN Router.

The sheer quantity of vulnerabilities should raise alarms but so should the severity. Based on my own analysis of two sets of advisories, covering Zone-based firewall feature vulnerabilities (CVE-2020-3421 and CVE-2020-3480) and DVMRP feature vulnerabilities (CVE-2020-3566 and CVE-2020-3569), their impact will be very significant. Both advisories leave enterprises seriously exposed, in ways that never needed to or should have happened.

[caption id="attachment_11409" align="alignnone" width="2088"] Figure 1 - Many vulnerabilities with High impact provided by the Cisco advisory center (partial list).[/caption]

Zone-based firewall vulnerabilities expose networks to TCP attacks

The multiple vulnerabilities Cisco reported in its Zone-Based Firewall feature of IOS (CVE-2020-3421, CVE-2020-3480) leave enterprise networks open to simple L4 attacks.

More specifically, Cisco’s advisory notes that these vulnerabilities could allow an unauthenticated, remote attacker to cause the device to reload or stop forwarding traffic through the firewall. Cisco reports that “The vulnerabilities are due to incomplete handling of Layer 4 packets through the device.” In such cases, the attacker could craft a sequence of traffic and cause a denial of service.

Organizations will need to patch affected devices as there are no workarounds. As Cisco explains in CVE-2020-342, “Cisco has released software updates that address these vulnerabilities. There are no workarounds that address these vulnerabilities.”

However, patches themselves introduce risks. They involve OS-level changes, which in the rush to publish often contain their own bugs. Network administrators need time to test and stage the new patch. In the meantime, the devices remain open for a simple L4 attack that could potentially take down their networks.

Handling of DVMRP vulnerabilities raises serious questions

Even worse was how Cisco handled vulnerabilities in Cisco IOS XR’s Distance Vector Multicast Routing Protocol (DVMRP) (CVE-2020-3566, CVE-2020-3569). Cisco originally published this Security Advisory on Aug 28, 2020, when Cisco’s response team became aware of exploits leveraging this vulnerability in the wild. But it took a month, yes, a month, before they provided some means for enterprises to address this threat.

According to the Cisco advisory, bugs in DVMRP “could allow an unauthenticated, remote attacker to either immediately crash the Internet Group Management Protocol (IGMP) process or make it consume available memory and eventually crash. The memory consumption may negatively impact other processes that are running on the device.”

In short, an attacker could craft IGMP traffic to degrade packet handling and other processes in the device. These vulnerabilities affect Cisco devices running any version of the IOS XR Software with multicast routing enabled on any of its interfaces. For a month, Cisco announced to the world that the door was wide open on any network running multicast.

To make matters worse, last month’s security advisory does little to lock that door. There are no patches to fix the vulnerability or even workarounds to temporarily address the problem. Instead, Cisco shared two possible mitigations, but both are limited.

One mitigation suggests rate limiting the IGMP protocol. Such an approach requires customers to first understand the normal rate of IGMP traffic, which would require network analysis of past data that if not done correctly could cause other issues, such as blocking legitimate traffic.

The second mitigation proposes adding an ACL that denies DVMRP traffic for a specific interface. This mitigation, though, only helps those interfaces that do not use DVMRP traffic, leaving other interfaces exposed.

[caption id="attachment_11410" align="alignnone" width="2474"] Figure 2 – Cisco first published an advisory on Aug 28, leaving an open, zero-day vulnerability without a patch.[/caption]

Enough with the pain of patching appliances

In both cases, enterprise networks were left seriously compromised by vulnerabilities in the very appliances meant to connect or protect them. And this is hardly the first time (check out this post for other examples).

Appliance vendors apologize and rush to provide assistance in the form of an update, but it’s enterprises that really bear the burden. Security and networking teams need to stop what they’re doing and work double-time to address vulnerabilities ultimately created by the vendors. It’s a pressurized, intense race to fix problems before attackers can exploit them.

At what point will appliance vendors stop penalizing IT and start solving the problems themselves? The sad answer — never.

The problem isn’t Cisco’s (or any other vendor’s) security group. It’s the nature of appliances. As long as vendors cling to aging appliance architectures, enterprises will suffer the pains of patching. Vendor security teams will invariably have to choose between alerting the public and providing corrective action.

The answer? Make the vendor responsible for your security infrastructure. If they’re not going to fix the problem and stand behind it, then why should you be the one who has to pay for it? Cloud providers maintain the infrastructure for you and so should appliance vendors. With cloud providers, there are no gaps between vulnerability notification and proactive action for attackers to exploit. If a vulnerability exists, cloud providers can patch infrastructure under the hood and add mitigations transparently for all users everywhere, instantly.

That’s the power of the cloud and it’s particularly relevant as we start to look at SASE platforms. The advocacy for appliance-based SASE platforms will only continue to lead enterprises down this path of never-ending patching pain. Moving processing to the cloud resolves that pain for good. Anything else leaves enterprises suffering unprotected in this new age of networking and security.

Sandboxing is Limited. Here’s Why and How to Best Stop Zero-Day Threats

Occasionally, prospective customers ask whether Cato offers sandboxing. It’s a good question, one that we’ve considered very carefully. As we looked at sandboxing, though, we felt that the technology wasn’t in line with the needs of today’s leaner, more agile enterprises. Instead, we took a different approach to prevent zero-day threats or unknown files containing threats.

What is Sandboxing?

Legacy anti-malware solutions rely mostly on signatures and known indicators of attack to detect threats, so they’re not always adept at catching zero-day or stealth attacks. Sandboxing was intended as a tool for detecting hidden threats in malicious code, file attachments and Web links after all those other mainstream methods had failed.

The idea is simple enough -- unknown files are collected and executed in the sandbox, a fully isolated simulation of the target host environment. The file actions are analyzed to detect malicious actions such as attempted communication with an external command and control (C&C) server, process injection, permission escalation, registry alteration or anything else that could harm the actual production hosts. As the file executes, the sandbox runs multiple code evaluation processes and then sends the admin a report describing and rating the likelihood of a threat.

Sandboxing Takes Time and Expertise

As with all security tools, however, sandboxing has its drawbacks. In this case, those drawbacks limit its efficiency and effectiveness particularly as a threat prevention solution. For one, the file analysis involved in sandboxing can take as much as five minutes--far too long for a business user to operate in real-time.

On the IT side, evaluating long, detailed sandboxing reports takes time, expertise, and resources. Security analysts need to have a good grasp of malware analysis and operating system details to understand the code’s behavior within the operating environment. They must also differentiate between legitimate and non-legitimate system calls to identify malicious behavior. Those are highly specialized skills that are missing in many enterprises.

As such, sandboxing is often more effective for detection and forensics than prevention. Sandboxes can be a great tool for analyzing malware after detection in order to devise a response and eradication strategy or prevent future attack. In fact, Cato’s security team uses sandboxes for that very purpose. But to prevent attacks, sandboxes take too long and impose too much complexity.

Sandboxes Don’t Always Work

The other problem with sandboxes is that they don’t always work. As the security industry develops new tools and strategies for detecting and preventing attacks, hackers come up with sophisticated ways to evade them and sandboxes are no exception. Sandbox evasion tactics include delaying malicious code execution; masking file type; and analyzing hardware, installed applications, patterns of mouse clicks and open and saved files to detect a sandbox environment. Malicious code will only execute once malware determines it is in a real user environment.

Sandboxes have also not been as effective against phishing as one might think. For example, a phishing e-mail may contain a simple PDF file that exhibits no malicious behavior when activated but contains a user link to a malicious sign-in form. Only when the user clicks the link will the attack be activated. Unfortunately, social engineering is one of the most popular strategies hackers use to gain network entry.

The result: Sandboxing solutions have had to devise more sophisticated environments and techniques for detecting and preventing evasion methods, requiring ever more power, hardware, resources and expense that yield a questionable cost/benefit ratio for many organizations.

The Cato Approach

The question then isn’t so much whether a solution offers sandboxing but whether a security platform can consistently prevent unknown attacks and zero-day threats in real-time. Cato developed an approach that meets those objectives without the complexity of sandboxing.

Known threats are detected by our anti-malware solution. It leverages full traffic visibility, even into encrypted traffic, to extract and analyze files at line rate. Cato determines the true file type based not on the file extension (.pdf, .jpeg, etc.) but on the file contents. We do this to combat evasion tactics such as executables masquerading as documents. The file is then validated against known malware signature databases maintained and updated by Cato.
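To illustrate the idea of typing a file by its contents rather than its extension, here is a minimal sketch that checks a few widely documented "magic byte" prefixes. It is not Cato's implementation; the function names, the signature table and the extension map are assumptions made for illustration, and a real engine inspects far more than the first few bytes.

```python
# Well-known leading byte signatures for a handful of common formats.
MAGIC_SIGNATURES = {
    b"%PDF-": "pdf",
    b"\xff\xd8\xff": "jpeg",
    b"\x89PNG\r\n\x1a\n": "png",
    b"MZ": "windows-executable",
    b"PK\x03\x04": "zip",
}

# Hypothetical mapping from file extension to the type it claims to be.
EXTENSION_TYPES = {
    "pdf": "pdf",
    "jpg": "jpeg",
    "jpeg": "jpeg",
    "png": "png",
    "exe": "windows-executable",
    "zip": "zip",
}


def sniff_file_type(data: bytes) -> str:
    """Return the type suggested by the leading bytes, or 'unknown'."""
    for magic, kind in MAGIC_SIGNATURES.items():
        if data.startswith(magic):
            return kind
    return "unknown"


def extension_mismatch(filename: str, data: bytes) -> bool:
    """True when the extension claims one type but the content shows another."""
    extension = filename.rsplit(".", 1)[-1].lower() if "." in filename else ""
    declared = EXTENSION_TYPES.get(extension)
    detected = sniff_file_type(data)
    return declared is not None and detected != "unknown" and declared != detected


# An executable renamed to look like a document is flagged.
print(extension_mismatch("invoice.pdf", b"MZ\x90\x00"))
```

In this sketch a file named invoice.pdf that begins with the Windows executable prefix is reported as a mismatch, which is the kind of masquerading the paragraph above describes.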

The next layer, our advanced anti-malware solution, defends against unknown threats and zero-day attacks by leveraging SentinelOne’s machine-learning engine, which detects malicious files based on their structural attributes. Cato’s advanced anti-malware is particularly useful against polymorphic malware that is designed to evade signature-based inspection engines.

And Cato’s advanced anti-malware solution is fast. Instead of 1-5 minutes to analyze files, the advanced machine learning and AI tools from SentinelOne allow Cato to analyze, detect and block the most sophisticated zero-day and stealth attacks in as little as 50 to 100ms. This enables Cato advanced anti-malware to operate in real-time in prevention mode.

At the same time, Cato does not neglect detection and response. Endpoints can still become infected by other means. Cato identifies these threats by detecting patterns in the traffic flows across Cato’s private backbone. Every day, Cato captures the attributes of billions of network flows traversing Cato’s global private backbone in our cloud-scale, big data environment. This massive data warehouse provides rich context for analysis for Cato’s AI and anomaly detection algorithms to spot potentially harmful behaviors symptomatic of malware. Suspicious flows are reviewed, investigated and validated by Cato researchers to determine the presence of live threats on customer networks. A clear report is provided (and alerts generated) with Cato researchers available to assist in remediation.

Check out articles in Dark Reading here and here to see how Cato’s network threat hunting capability was able to detect previously unidentified malicious bot activity. Check out this Cato blog for more information on MDR and Cato’s AI capabilities.

Protection Without Disruption

Organizations need to prevent and detect zero-day threats and attacks in unknown files, but we feel that sandboxing’s speed and complexity are incompatible with today’s leaner, nimbler digital enterprises. Instead, we’ve developed a real-time approach that doesn’t require sophisticated expertise and is always current. But don’t take our word for it. Ask for a demo of our security platform and see for yourself.

Protect Your Systems Now from the Critical Windows SMBv3 RCE Vulnerability

At the beginning of the month, Microsoft released an advisory and security patch for a serious Windows Server Message Block (SMB) vulnerability called the Windows SMBv3 Client/Server Remote Code Execution Vulnerability (AKA Windows SMBv3 RCE or CVE-2020-0796). The Server Message Block (SMB) protocol is essential for Windows network file and print sharing. Left unpatched, this new SMB vulnerability has the potential to create a path for dangerous malware infection, which is why Microsoft has labeled it Critical.

Windows SMBv3 RCE isn’t the first vulnerability in SMB. In May 2017, the infamous Wannacry ransomware attack disabled more than 200,000 Windows systems in 150 countries using a similar (but not the same) SMB vulnerability. One of the hardest hit victims, the British National Health Service (NHS), had to cancel more than 19,000 appointments and delay numerous surgeries. Microsoft had already issued a security patch but Wannacry was able to infect thousands of unpatched systems anyway.

Cato urges every organization to apply the Microsoft patch (CVE-2020-0796) now across all relevant Windows systems, which we’ll discuss here. Cato also updated its IPS to block any exploit using this new vulnerability. As long as customers have their Cato IPS set to Block mode, their systems will be protected. There’s no need to run IPS updates as you would with a security appliance or on-premises software. Thanks to Cato’s cloud-native architecture, the update is already deployed for all Cato customers.

How CVE-2020-0796 Works

Unlike Wannacry, which exploited vulnerabilities in older versions of Windows, this new vulnerability lies in the latest version of Windows 10. Specifically, the vulnerability is found in the decompression routines of SMB version 3.1.1 (SMBv3) found in Windows 10, version 1903 and onwards, for both 32- and 64-bit systems, and in the recent versions of Windows Server Core used in applications such as Microsoft Datacenter Server.

An attacker could exploit this vulnerability to execute malicious code on both the SMB server and client side. They could attack a Windows SMB server directly or induce an SMB client user to connect to an infected SMB server and infect the client.

An attack using this vulnerability could happen in a few ways. A hacker could attack systems from outside the enterprise network directly if a system’s SMB port has been left open to the Internet. By default, Windows Firewall blocks external connections to the SMB port, however. A more common scenario would involve a user inadvertently installing malware on their system by clicking on a malicious link in a spam email. The malware would then exploit the new SMBv3 vulnerability to spread across other Windows systems on the network.

How to Protect Yourself

The best way to protect your organization from malware exploiting this critical vulnerability is to make sure all Windows 10 systems and any remote, contractor or other systems accessing the enterprise network have applied the Microsoft security patch. If you need to delay patching for any reason or can’t be sure every system is patched, there are other measures IT can take.

The easiest is to simply disable SMBv3 compression on all systems via registry key changes, which wouldn’t have any negative impact as SMBv3 compression isn’t used yet. Microsoft describes how to do this in its advisory (see figure 1 below) and it could be accomplished over hundreds of systems via Group Policy. This would solve the problem for SMB servers but not SMB clients.

Figure 1: Microsoft Instructions for Disabling SMBv3 Compression

You could also block inbound TCP Port 445 traffic, but that port may be used by other Windows components, and blocking it would only protect you from attacks from the outside, not attacks spreading internally.

As for internal network flows, it’s always prudent to segment your network to restrict unnecessary traffic in order to prevent attacks like these from spreading laterally. There is no reason, for example, that a client system from your finance department should have network access to systems in human resources via the Windows SMB protocol.

How Cato Protects You

There are two ways Cato protects its customers. Thanks to its cloud-native architecture, Cato continually maintains and updates its extensive security stack across every Cato PoP, protecting all communications across the Cato network, whether a branch office or mobile user connects over the Cato backbone to the datacenter or with another branch office or mobile user. Cato’s cloud-native architecture applied all security updates, including the IPS signature for this newly announced vulnerability, shortly after Microsoft released its advisory. Enterprise IT doesn’t have to do anything, such as updating a security appliance. All exploits that take advantage of this vulnerability are already blocked as long as your IT department has set the Cato IPS for Block mode on all traffic scopes (WAN, Inbound and Outbound).

Figure 2: Apply Block Settings to All Traffic Scopes

Even without this IPS update, however, Cato’s security stack uses other means to detect and alert on any traffic anomalies that could indicate an attack, even a zero-day attack. For example, if a host normally communicates using SMB with one or two other hosts and then suddenly communicates with hundreds of hosts, Cato’s IPS will detect those anomalous flows. It can alert IT or even cut off the flows depending on configuration. This may not block an attack completely, but it will allow IT to limit the damage and apply necessary measures to prevent the attack in the future.
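The fan-out example above can be sketched as a simple comparison between a host's usual number of SMB peers and its current number. This is only an illustration of the idea, not Cato's detection logic; the function name, the flow format of (source, destination) pairs and the thresholds are all assumptions.

```python
from collections import defaultdict


def find_fanout_anomalies(baseline_flows, current_flows, min_peers=20, growth_factor=10):
    """Flag sources whose distinct SMB peer count jumps far above their baseline.

    Both arguments are iterables of (source_host, destination_host) pairs.
    Returns {source_host: (baseline_peer_count, current_peer_count)}.
    """

    def peers_by_source(flows):
        peers = defaultdict(set)
        for source, destination in flows:
            peers[source].add(destination)
        return peers

    baseline = peers_by_source(baseline_flows)
    current = peers_by_source(current_flows)

    anomalies = {}
    for source, destinations in current.items():
        usual = len(baseline.get(source, ()))
        now = len(destinations)
        # Treat a host with no history as having one usual peer to avoid a zero threshold.
        if now >= min_peers and now >= growth_factor * max(usual, 1):
            anomalies[source] = (usual, now)
    return anomalies


# Hypothetical data: one host normally talks to two peers, then suddenly to 200.
baseline = [("10.0.0.5", "10.0.0.10"), ("10.0.0.5", "10.0.0.11"), ("10.0.0.6", "10.0.0.10")]
current = [("10.0.0.5", f"10.0.1.{i}") for i in range(200)] + [("10.0.0.6", "10.0.0.10")]
print(find_fanout_anomalies(baseline, current))
```

The two thresholds are deliberately separate: the absolute floor keeps small networks quiet, while the growth factor captures the "one or two hosts, then hundreds" pattern.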

We’ll continue to keep you abreast of any critical Windows vulnerabilities in the future. Cato customers can rest assured that Cato will take all possible measures to protect their networks against new vulnerabilities, immediately.

Unstuck in the middle: WAN Latency, packet loss, and the wide, wide world of Internet WAN

One of the big selling points of SD-WAN tools is their ability to use the Internet to deliver private-WAN levels of performance and reliability. Give each site connections to two or three Internet providers and you can run even demanding, performance-sensitive applications with confidence. Hence the amount of MPLS going away in the wake of SD-WAN deployments. (See Figure 1.)

Figure 1: Plans for MPLS in the SD-WAN

The typical use case here, though, is the one where the Internet can also do best: networks contained within a specific country. In such a network, inter-carrier connectivity will be optimal, paths will be many, and overall reliability quite high. Packet loss will be low, latency low, and though still variable, the variability will tend to be across a relatively narrow range.

Global Distance = Latency, Loss, and Variability

Narrow relative to what? In this case, narrow when compared to the range of variation on latency across global networks. Base latency increases with distance, inevitably of course, but the speed of light does not tell the whole story. The longer the distances involved, the greater the number of optical/electronic conversions, bumping up latency even further as well as steadily increasing cumulative error rates. And, the more numerous the carrier interconnects crossed, the worse: even more packets lost, more errors, and another place where variability in latency creeps in.
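To put rough numbers on base latency: light in optical fiber travels at roughly two-thirds of its speed in a vacuum, or about 200,000 km/s. The sketch below uses that approximation to estimate a best-case round-trip time. The path lengths are illustrative assumptions, not measurements, and real paths add routing detours, optical/electronic conversions and queuing on top of this floor.

```python
# Approximate signal speed in optical fiber (about two-thirds of c in a vacuum).
FIBER_SPEED_KM_PER_S = 200_000


def min_round_trip_ms(path_km: float) -> float:
    """Best-case round-trip time in milliseconds over a fiber path of path_km."""
    one_way_seconds = path_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_seconds * 1000


# Hypothetical path lengths, for illustration only.
for label, km in [("in-country", 500), ("intercontinental", 10_000)]:
    print(f"{label}: {min_round_trip_ms(km):.0f} ms minimum round trip")
```

Under these assumptions the in-country path has a floor of about 5 ms and the intercontinental path about 100 ms, before any of the loss and variability described above is counted.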

A truly global Internet-based WAN will face innumerable challenges to delivering consistent high-speed performance thanks to all this complexity. In such a use case, the unpredictability of variation in latency as well as the greater range for the variation is likely to make the user experiences unpleasantly unreliable, especially for demanding and performance-sensitive applications.

Global Fix: Optimized Middle Miles

To fix the problem without simply reverting to a private WAN, one can seek to limit the use of public networks to the role they best fill: the ingress and egress, connecting a site to the world. But instead of having the Internet be the only path available to packets, you can also have a controlled, optimized, and consistent middle-mile network. Sites connect over the Internet to a point of presence (POP) that is “close” for Internet values of the term—just a few milliseconds away, basically, without too many hops. The POPs are interconnected with private links that bypass the complexity and unpredictability of the global Internet to deliver consistent and reliable performance across the bulk of the distance. Of course, they still also have the Internet available as backup connectivity! Given such a middle-mile optimization, even a globe-spanning SD-WAN can expect to deliver solid performance comparable to—but still at a lower cost than—a traditional private MPLS WAN.

Fight Edge Vendor Sprawl

One potential pain point in an SD-WAN deployment is vendor sprawl at the WAN edge: the continual addition of vendors to the portfolio IT has to manage to keep the edge functional and healthy.

This sprawl comes in two forms: appliance vendors, and last-mile connectivity vendors.

As noted in an earlier post, most folks deploying an SD-WAN want to flatten that unsightly pile of appliances in the branch network closet. In fact, according to Nemertes’ research:

78% want SD-WAN to replace some or all branch routers

77% want to replace some or all branch firewalls

82% want to replace some or all branch WAN optimizers

In so doing, they not only slough off the unsightly pounds of excess equipment but also free themselves from the technical and business relationships that come with those appliances, in favor of the one associated with their SD-WAN solution.

The same issue crops up in a less familiar place with SD-WAN: last mile connectivity.

But we thought having options for the last mile was good!

It can be, for sure. One of the cool things about SD-WAN, after all, is that it allows a company to use the least expensive connectivity available at each location. Given that this is generally the basis of the business case that gets SD-WAN in the door, an enterprise with a large WAN can easily wind up with dozens or scores of providers, representing a mix of regional, local, and hyper-local cost optimizations. In some places, having the same provider for everybody may be most cost-effective; in others, a different provider per metro area, or even per location in a metro, might save the most money. And money—especially hard dollars flowing out the door—talks.

But just as with the branch equipment stack, there is real cost, both financial and operational, to managing each last-mile provider relationship. The business-to-business relationship of contracts and bills requires time and effort to maintain; contracts need to be vetted, bills reviewed, disputes registered and settled. So too does the IT-to-service provider technical relationship take work: teams need to know how to reach each other, ticket systems need to be understood, disaster recovery plans tested, and so on.

The technical support part of the relationship can be especially trying in a hyper-aggressive cost reduction environment. A focus on extreme cost-cutting may lead IT to embrace consumer-grade connectivity in some locations, even when business-grade connectivity is readily available. IT will then have to deal with consumer-grade technical support hours and efforts as well, which in the long term can eat up in soft dollars much of the potential hard dollar savings.

Grappling with sprawl

SD-WAN users have to either sharply limit the size of their provider pool, or make it someone else’s problem to handle the sprawl. Our data show that more than half of SD-WAN users want someone else to handle the last mile vendor relationship.

How SD-WAN users deal with last-mile sprawl

When others manage the last mile, we see dramatic decreases in site downtime, both the duration of incidents and the sum total of downtime for the year. If the same vendor manages the SD-WAN itself, then IT will have gotten for itself the least potential for confusion and finger-pointing should any problems arise, without losing the benefits of cost reduction and last mile diversity.

Beyond the Thin Branch: Move Network Functions to Cloud, Says Leading Analyst

Retailers, financial services firms, and other kinds of companies want to become more agile in their branch strategies: be able to light up, move, and shut down branches quickly and easily. One sticking point has always been the branch network stack: deploying, configuring, managing, and retrieving the router, firewall, WAN optimizer, etc., as branches come and go. And everyone struggles with deploying new functionality at all their branches quickly: multi-year phased deployments are not unusual in larger networks.

Network as a Service (NaaS) has arisen as a solution: use a router in the branch to connect to a provider point of presence, usually over the Internet, with the rest of the WAN’s functionality delivered there.

In-net SD-WAN is an extension of the NaaS idea: SD-WAN—centralized, policy-based management of the WAN delivering the key functions of WAN virtualization and application-aware security, routing, and prioritization—delivered in the provider’s cloud across a curated middle mile.

In-net SD-WAN allows maximum service delivery with minimum customer premises equipment (CPE) because most functionality is delivered in the service provider cloud, anywhere from edge to core. We’ve discussed the benefits of this kind of simplification to the stack. It offers a host of other benefits as well, based on the ability to dedicate resources to SD-WAN work as needed, and the ability to perform that work wherever it is most effective and economical. Some jobs will best be handled in carrier points of presence (their network edge), such as packet replication or dropping, or traffic compression. Others may be best executed in public clouds or the provider’s core, such as traffic and security analytics and intelligent route management.

Cloud Stack Benefits the Enterprise: Freedom and Agility

People want a lot out of their SD-WAN solution: routing, firewalling, and WAN optimization, for example. (Please see figure 1.)

Figure 1: Many Roles for SD-WAN

Enterprises working with in-net SD-WAN are more free to use resource-intensive functions without feeling the limits of the hardware at each site. They are free to try new functions more frequently and to deploy them more broadly without needing to deploy additional or upgraded hardware. These facts can allow a much more exact fit between services needed and services used since there is no up-front investment needed to gain the ability to test an added function.

Enterprises are also able to deploy more rapidly. On trying new functions at select sites and deciding to proceed with broader deployment, IT can snap-deploy to the rest. On lighting up a new site, all standard services—as well as any needed uniquely at that site—can come online immediately, anywhere.

Cloud Stack Benefits: Security and Evolution

The provider, working with a software-defined service cloud, can spin up new service offerings in a fraction of the time required when functions depend on specialized hardware. The rapid evolution of services, as well as the addition of new ones, makes it easier for an enterprise to keep current and to get the benefits of great new ideas.

And, using elastic cloud resources for WAN security functions decreases the load on networks, and on data center security appliances. Packets that get dropped in the provider cloud for security reasons don’t consume any more branch or data center link capacity, or firewall capacity, or threaten enterprise resources. This reduces risk for the enterprise overall.

Getting to Zero

A true zero-footprint stack is not possible, of course. Functions like link load balancing and encryption have to happen on premises so there always has to be some CPE (however generic a white box it may be) on site. But the less that box has to do, and the more the heavy lifting can be handled elsewhere, the more the enterprise can take advantage of all these benefits in an in-net SD-WAN.

WAN on a Software Timeline

WANs are slow. Not in terms of data rates and such, but in terms of change. In most enterprises, the WAN changes more slowly than just about any other part of the infrastructure. People like to set up routers and such and then touch them as infrequently as possible, and that goes for both enterprises and their WAN service providers.

One great benefit of SDN, SD-WAN, and the virtualization of network functions (whether via virtual appliances or actual NFV) is that they can put innovation in the network on a software timeline rather than a hardware timeline. IT agility is a major concern for any organization caught up in a digital transformation effort. Nemertes’ most recent cloud research study found a growing number of organizations, currently 37%, define success in terms of agility. So, the speed-up is sorely needed. This applies whether the enterprise is running that network itself, or the network belongs to a service provider.

When the network requires specialized and dedicated hardware to provide core functionality, making significant changes in that function can take months or years. Months, if the new generation of hardware is ready and “all” you have to do is roll it out (and you roll it out aggressively, at that). Years, if you have to wait for the new functionality to be added to custom silicon or wedged into a firmware or OS update. If you are an enterprise waiting to implement some new customer-facing functionality, or a new IOT initiative, waiting that long can see an opportunity pass. If you are a service provider, having to wait that long means you cannot offer new or improved services to your customers frequently, quickly, or easily—you have to invest a lot, between planning for and rolling out infrastructure to support your new service, before you can see how well customers take up the service.

When the network is running on commodity hardware such as x86 servers and whitebox switches, you can fundamentally change your network’s capabilities by deploying new software. Fundamental change can happen in weeks, even days. Proof of concept deployments take less time, and it is easier to upshift them into full deployments or downshift them to deal with problems. Rolling a change back becomes a matter of reverting to the previous state.

Whether enterprise or service provider, this shift lowers the barrier to innovation in the network, dramatically. It becomes possible to try more innovations and offer more new services with a much lower investment of staff time and effort, a lot less money up front, and with a much shorter planning horizon. The organization can work more to current needs and spend less time trying to predict demand five years in advance in order to justify a major capital equipment rollout.

For enterprises, shifting to a software-based networking paradigm (incorporating some or all of SDN, SD-WAN, virtual appliances and/or NFV) allows in-house application developers unprecedented opportunities to affect or respond to network behavior. For service providers, it means being able to more quickly meet changing customer needs, address new opportunities, and use new technologies as they become available.

Of course, making a shift as fundamental and far-reaching as moving from legacy to SDN, from specialized hardware to white boxes, is not trivial. But it should be on the roadmap for every organization, for all the parts of the network they run on their own; it should be a selection criterion for their network service providers as well.

SD-WAN: Unstacking the Branch for WAN Simplicity

Managing a big pile of network gear at every branch location is a hassle. No surprise, then, that Nemertes’ 2018-19 WAN Economics and Technologies research study is showing huge interest in collapsing the branch stack among those deploying SD-WAN:

78% want to replace some or all branch routers with SD-WAN solutions

77% want to replace some or all branch firewalls

82% want to replace some or all branch WAN optimizers

Collapsing the WAN stack can have capital benefits, although they are not guaranteed unless one moves to an all-opex model, whether box-based Do-It-Yourself (DIY) or an in-net/managed solution (where a service provider manages the solution and delivers some or all SD-WAN functionality in their network instead of at the endpoints). After all, the more you want a single box to do, the beefier it has to be, and one expensive box can wind up costing more than three relatively cheap ones. More compelling in the long run are the operational benefits of collapsing the stack: smaller vendor and product pool, easier staff skills maintenance, and simpler management processes.

IT sees benefits from reducing the number of products and vendors it has to manage through each device layer’s lifecycle. Fewer vendors means fewer management contracts to juggle. It means fewer sales teams to try to turn into productive partners. And it means fewer technical support teams to learn how to work with, and to relearn again and again through vendor restructurings, acquisitions, divestitures of products, or simply support staff turnover. Having a single relationship, whether box vendor or service provider, brings these costs down as far as possible, and simplifies relationship management as much as possible.

Fewer solutions typically also means reducing the number of technical skill sets needed to keep the WAN humming. There is that special, though not uncommon, case where solutions converge but management interfaces don’t, resulting in little or no savings or improvement of this sort. But, when converged solutions come with converged management tools and a consistent, unified interface, life gets better for WAN engineers. When a team only has to know one or two management interfaces instead of five or six, it is easier for everyone to master them, and so to provide effective cross-coverage. Loss or absence of a team member no longer carries the risk of a vital skill set going missing.

Most importantly, though, IT should be able to look forward to simplifying operations. When the same solution can replace the router, firewall, and WAN optimizer, change management gets easier, and the need to do network functional regression testing decreases. IT no longer has to worry that making a configuration change on one platform will have unpredictable effects on other boxes in the stack. The need to make sure one change won’t trigger cascading failures in other systems is part of what drives so many organizations to avoid changing anything on the WAN, whenever possible.

A side effect of that lowered barrier to change on the WAN should be improved security. We have seen far too many networks in which branch router operating systems are left unpatched for very long stretches of time, IT being unwilling to risk breaking them in order to push out patches, even security patches.

Although it can be argued that the SD-WAN appliance becomes too much of a single point of failure when it takes over the whole stack, it is worth remembering that when three devices are stacked up in the traffic path, failure in any of them can kill the WAN. A lone single point of failure is better than three at the same place, and it is easier to engineer high availability for a single solution than for several.
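The arithmetic behind that argument can be sketched quickly. When devices sit in series in the traffic path, the path is up only when every device is up, so the availabilities multiply. The figures below are assumptions for illustration: each device is taken to be 99.9% available and failures are treated as independent, which real deployments may not satisfy.

```python
from math import prod

HOURS_PER_YEAR = 8760


def series_availability(availabilities):
    """Availability of a path that needs every device in the chain to be up."""
    return prod(availabilities)


def downtime_hours_per_year(availability):
    """Expected hours of downtime per year for a given availability."""
    return (1 - availability) * HOURS_PER_YEAR


# Hypothetical figure: every device is 99.9% available, failures independent.
single_box = series_availability([0.999])
three_box_stack = series_availability([0.999, 0.999, 0.999])

print(f"single box: {downtime_hours_per_year(single_box):.1f} h/year")
print(f"three-box stack: {downtime_hours_per_year(three_box_stack):.1f} h/year")
```

Under these assumptions the single box accounts for roughly 8.8 hours of expected downtime a year, while the three-box stack accounts for roughly 26 hours, about three times as much.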

And, of course, if the endpoint is mainly a means of connecting the branch to an in-net solution, redundancy at the endpoint is even easier (and redundancy in the provider cloud should be table stakes as a selection criterion). Whether IT is doing the engineering itself or relying on the engineering of a service provider, that’s a win no matter what.

Cisco ASA CVE-2018-0101 Vulnerability: Another Reason To Drop-the-Box

The severe vulnerability Cisco reported in its Cisco Adaptive Security Appliance (ASA) Software has generated widespread outcry and frustration from IT managers across the industry.

While Cato does not generally discuss security bugs in other vendors’ products, this vulnerability demonstrates why the appliance-centric way of delivering network security is all but obsolete. When a vulnerability ranks “critical,” admins everywhere must go into a fire drill to patch a huge number of devices or risk a breach. This is an enormous waste of resources and a perpetual risk for organizations, particularly those that can’t respond quickly.

The advisory, CVE-2018-0101, explains how an unauthenticated, remote attacker can cause a reload of an affected system or remotely execute code. The vulnerability occurs in the Secure Sockets Layer (SSL) VPN functionality of the Cisco Adaptive Security Appliance (ASA) Software. The vulnerability is considered critical and organizations should take immediate action. You can read the Cisco advisory here.

Cisco ASA is a unified threat management (UTM) platform designed to protect the network perimeter. According to Shodan, a search engine for finding specific types of internet-connected devices, approximately 120,000 ASAs have the WebVPN software enabled, the vulnerable component pertinent to the advisory.

Map of vulnerable ASAs

The release once again underscores the problems inherent in security appliances. As we’ve discussed before, UTMs, and appliances in general, suffer from numerous problems. For one, UTMs often lack the processing capacity to run all features simultaneously. They also require ongoing care and maintenance, including configuration, software updates and upgrades, patches and troubleshooting.

CVE-2018-0101 is just the latest example. The advisory has left IT pros scrambling — and frustrated: “hey @Cisco thanks for NOT providing the fix for CVE-2018-0101 to customers without a current SmartNet contract. I'm going to advise all my clients with an ASA to immediately switch to a product of another vendor witch does leave it's customers sit in the rain with open vulns,” tweets Jenny

Beattie, a self-described network engineer, writes: “CVE-2018-0101 is kicking my ass #patch #cisco #security Only 153 "critical" devices to go…”

To make matters worse, there can be significant time between issuing the patch and publishing the advisory. “Eighty days is the amount of time that passed between the earliest software version that fixed the vulnerability being released, and the advisory being published. Eighty Days!” writes Colin Edwards,

“….I’m not sure that customers should be willing to accept that an advisory like this can be withheld for eighty days after some fixes are already available. Eighty days is a long time, and it’s a particularly long time for a vulnerability with a CVSS Score of 10 that affects devices that are usually directly connected to the internet.”

Vulnerabilities have occurred in the past and will occur in the future. The fire drill imposed on security admins everywhere can be avoided. How? Cato’s answer is simple: drop the box. Cato provides Firewall as a Service by converging the full range of network security capabilities into a cloud-based service.

IT professionals no longer have to race to apply new security patches. Instead, Cato Research Labs keeps security current, updating the service, if necessary, once for all customers. And with security in the cloud, organizations can harness cloud elasticity to scale security features according to their needs without having to compromise due to appliance location or capacity constraints. A cloud-based network security stack also provides better visibility and inspection of traffic as well as unified policy management.

To learn more about firewall-as-a-service visit here.

The Crypto Mining Threat: The Security Risk Posed By Bitcoin and What You Can Do About It

With Bitcoin, and cryptocurrencies in general, growing in popularity, many customers have asked Cato Research Labs about Bitcoin security risks posed to their networks.

Cato Research Labs examined crypto mining and the threats posed to the enterprise. While immediate disruption of the network or loss of data is unlikely to be a direct outcome of crypto mining, increased facility costs may result. Indirectly, the presence of crypto mining software likely indicates a device infection.

Customers of Cato’s IPS as a service are protected against the threats posed by crypto mining. Non-Cato customers should block crypto mining on their networks. This can be done by disrupting the process of joining and communicating with the crypto mining pool, either by blocking the underlying communication protocol or by blocking crypto mining pool addresses and domains. For a list of addresses and domains you should block, click here.

The Risk of Crypto Mining and What You Can Do

Crypto mining is the validating of bitcoin (or other cryptocurrency) transactions and the adding of encrypted blocks to the blockchain. Miners establish a valid block by solving a hash, receiving a reward for their efforts. The possibility of compensation is what attracts miners, but it’s the need for compute capacity to solve the hash that leads miners to leverage enterprise resources.

Mining software poses direct and indirect risks to an organization:

Direct: Mining software is compute intensive, which will impact the performance of an employee’s device. Running processors at a “high-load” for a long time will increase electricity costs. The life of a processor or the battery within a laptop may be shortened.

Indirect: Some botnets are distributing native mining software, which accesses the underlying operating system in a way similar to how malware exploits a victim’s device. The presence of native mining software may well indicate a compromised device.

Cato Research Labs recommends blocking crypto mining. Preferably, this should be done using the deep packet inspection (DPI) engine in your firewalls. Configure a rule to detect and block the JSON-RPC messages used by Stratum, the protocol mining pools use to distribute tasks among member computers. DPI rules should be configured to block based on three fields that are required in Stratum subscription requests: id, method, and params.
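To make the matching logic concrete, here is a minimal Python sketch of the kind of check such a rule performs on a cleartext payload. It is an illustration only, not Cato's rule or any firewall vendor's syntax. The function name `looks_like_stratum` is hypothetical, and the extra check that the method name starts with `mining.` is our assumption (added to cut down on false positives from other JSON-RPC traffic); the text above only names the three required fields.

```python
import json

# The three fields the text says are required in Stratum subscription requests.
REQUIRED_FIELDS = {"id", "method", "params"}


def looks_like_stratum(payload: bytes) -> bool:
    """Return True if any line of the payload is a JSON object that carries
    id, method and params, with a method name starting with 'mining.'.

    The 'mining.' prefix check is an assumption made for this sketch.
    """
    for line in payload.splitlines():
        try:
            msg = json.loads(line)
        except ValueError:
            # Not JSON (or not valid UTF-8): ignore this line.
            continue
        if not isinstance(msg, dict):
            continue
        if not REQUIRED_FIELDS.issubset(msg):
            continue
        method = msg.get("method")
        if isinstance(method, str) and method.startswith("mining."):
            return True
    return False
```

A real DPI engine would express this as a signature in its own rule language and apply it per flow; the sketch only shows which fields are inspected.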

However, DPI engines may lack the capacity to inspect all encrypted traffic. Blocking browser-based mining software may be a problem, as Stratum often runs over HTTPS. In that case, organizations should block access to the IP addresses and domains used by public blockchain pools.

Despite our best efforts, no such list of pool addresses or domains could be found, which led Cato Research Labs to develop its own blacklist. Today, the list identifies hundreds of pool addresses. The list can be downloaded here for import into your firewall.
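For readers who want to apply a list like this outside a firewall, for example when filtering DNS or proxy logs, the following Python sketch shows one way to match hostnames against it. The file format (one domain per line, `#` for comments), the function names and the `pool.example` domains are all assumptions made for illustration; they do not describe the actual Cato list.

```python
def load_blocklist(lines):
    """Build a set of lowercase domains from an iterable of text lines.

    Blank lines and lines starting with '#' are skipped (assumed format).
    """
    return {
        ln.strip().lower().rstrip(".")
        for ln in lines
        if ln.strip() and not ln.lstrip().startswith("#")
    }


def is_blocked(hostname, blocklist):
    """Return True if the hostname or any parent domain is on the blocklist."""
    labels = hostname.lower().rstrip(".").split(".")
    # Check "a.b.c", then "b.c", then "c" so subdomains of a listed pool match.
    return any(".".join(labels[i:]) in blocklist for i in range(len(labels)))


# Hypothetical entries, for illustration only.
blocklist = load_blocklist(["# mining pools", "pool.example", "", "Miner.Example."])
```

Matching on parent domains means a listed pool is still caught when it is reached through a regional subdomain, while unrelated names that merely end in the same characters are not.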

Cryptocurrency mining may not be the gravest threat to enterprise security, but it should not be ignored. The risk of impaired devices, increased costs, and infections means removing mining software warrants immediate attention. The blacklist of addresses provided by Cato Research Labs will block access to existing public blockchain pools, but not new pools or addresses. That is why Cato Research strongly recommends configuring DPI rules on a DPI engine that has sufficient capacity to inspect all encrypted sessions.

Advisory: Why You Should (Still) Care About Inbound Network Scans

In light of recent ransomware attack campaigns against Microsoft RDP servers, Cato Research assessed the risk network scanning poses to organizations. Although the technique is well researched, many organizations continue to be exposed to it. Here’s what you can (and should) do to protect your organization.

What is Network Scanning?

Network scanning is a process for identifying active hosts on a network. Different techniques may be used. In some cases, network scanners will use port scans and in other cases ping sweeps. Regardless, the goal is to identify active hosts and their services.

Network scanning is commonly associated with attackers but not every network scan indicates a threat. Some scanners are benign and are part of various research initiatives. The University of Pennsylvania, for example, uses network scanning in the study of global trends in protocol security. However, while research projects will stop at scanning Internet IP-ranges for potentially open services, malicious actors will go further and attempt to hack or even gain root privilege on remote devices.

What Services Are Normally Targeted By Network Scanning?

While some scans target specific organizations, most scans over the Internet are searching for vulnerable services where hackers can execute code on the remote device.

Occasionally, after a new vulnerability in a service is publicly disclosed, a massive scan for that service follows. Attackers may try to gain control of IoT devices or routers and enlist them in a botnet that can later be used for DDoS attacks (such as the Mirai botnet) or for cryptocurrency mining, which is very popular these days.

In addition, hackers may exploit known vectors in websites that serve many users, such as WordPress vulnerabilities. These can be used as a source for drive-by attacks to compromise end-user machines on a large scale.

How Widespread Are Network Scanning Attacks?

We’ve seen that some organizations continue to expose services unnecessarily to the world. Those services are being scanned, which exposes them to attack.

During a two-week period, Cato Research observed scans from thousands of scanners. More than 80% of the scanners originated from China, Latvia, Netherlands, Ukraine or the US (see figure 1).

Figure 1 - Top countries originating scans

When we look at the types of the scanned services, most scans targeted SQL, Microsoft RDP (Remote Desktop Protocol) and HTTP for different reasons (see figure 2). The large number of RDP scans is due to a variety of disclosed vulnerabilities in RDP, exploited by recent ransomware attack campaigns using password-guessing, brute force attacks on Microsoft RDP servers.

As for SQL servers, it seems the hunt for databases continues. Servers running SQL tend to contain the most valuable information from the attacker’s perspective: personal details, phone numbers, and credit card information. This also applies to attacks on web servers, which may store valuable information about website users, such as their email addresses and passwords.

Figure 2 - HTTP, RDP, and SQL were the most scanned services

Recommendations

Organizations should protect themselves from scanning attacks with the following actions:

Whenever possible, the organization should not expose servers to the Internet. They should only make them accessible via the WAN firewall to sites and mobile users connected to Cato Cloud.

If a server needs to be accessed from the public Internet, we recommend limiting access to specific IP addresses or ranges. This can be easily done by configuring Remote Port Forwarding in the Cato management console. When IP access rules are not enough, consider applying IPS geo-restriction rules to deny any access from “riskier” regions, for example by refusing inbound connections from China, Latvia, the Netherlands or Ukraine.

If none of the above can be configured, we recommend using Cato IPS rules to help block attempts to attack the server.
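The second recommendation, limiting access to specific IP addresses or ranges, comes down to a simple membership test. The Python sketch below illustrates that test using the standard `ipaddress` module. It is not the Cato management console configuration; the `ALLOWED_SOURCES` name and the networks in it (reserved documentation ranges) are placeholders chosen for this example.

```python
import ipaddress

# Hypothetical allowlist: replace with the addresses or ranges that
# genuinely need to reach the exposed server.
ALLOWED_SOURCES = [
    ipaddress.ip_network("203.0.113.0/24"),    # e.g. a partner office range
    ipaddress.ip_network("198.51.100.17/32"),  # e.g. a single admin host
]


def is_allowed(source_ip: str) -> bool:
    """Return True only if the source address falls inside an allowed range."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in ALLOWED_SOURCES)
```

Anything not explicitly listed is refused, which is the default-deny posture the recommendations above aim for.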

Network scanning may be a well-known technique, but that doesn’t diminish its effectiveness. Be sure to apply these recommendations to prevent attackers from using this technique to penetrate your network.

The Meltdown-Spectre Exploits: Lock-down your Servers, Update Cloud Instances

The much-publicized critical CPU vulnerabilities published last week by Google’s Project Zero and its partners will have their greatest impact on virtual hosts or those servers where threat actors can gain physical access.

The vulnerabilities, named Meltdown and Spectre, are hardware bugs that can be abused to leak information from one process to another on the underlying processor or the operating system that depends on it. More specifically, the vulnerability stems from misspeculated execution that allows arbitrary virtual memory reads, bypassing the process isolation of the operating system or processor. Such unauthorized memory reads may reveal sensitive information, such as passwords and encryption keys. These vulnerabilities affect many modern CPUs, including those from Intel, AMD and ARM.

Cato Research Labs analyzed the security impact of the Spectre (CVE-2017-5753 and CVE-2017-5715) and Meltdown (CVE-2017-5754) vulnerabilities on Cato Cloud and our customers’ networks. Any measures needed to protect the software or hardware have been taken by Cato.

We highly recommend that Cato customers follow their cloud provider’s guidelines for patching the operating systems running in the virtual machines on their cloud hosts. Most cloud providers have already patched the underlying hypervisors. Specific patching instructions can be found here for Microsoft Azure, Amazon AWS, and Google Cloud Platform.