Cato CTRL™ Threat Research: New MongoDB Vulnerability Allows Instant Remote Server Takedown (CVE-2026-25611)


Executive Summary

Cato CTRL’s Vitaly Simonovich (senior security researcher) has discovered a new vulnerability (CVE-2026-25611 with a “High” severity rating of 7.5 out of 10) in all MongoDB versions with compression enabled (version 3.4+, enabled by default since version 3.6), including MongoDB Atlas. The vulnerability can enable a threat actor to crash any MongoDB server.

MongoDB Atlas clusters are not internet-reachable by default. Clients must be allowed via the project’s IP access list or private connectivity. Exposure primarily occurs when customers choose to allow access from anywhere (0.0.0.0/0).

This issue was responsibly disclosed to MongoDB through its bug bounty program and was patched in partnership with its security team.

According to Shodan, 207,000+ MongoDB instances are exposed to the internet at the time of writing.

Figure 1. Publicly accessible MongoDB servers based on Shodan

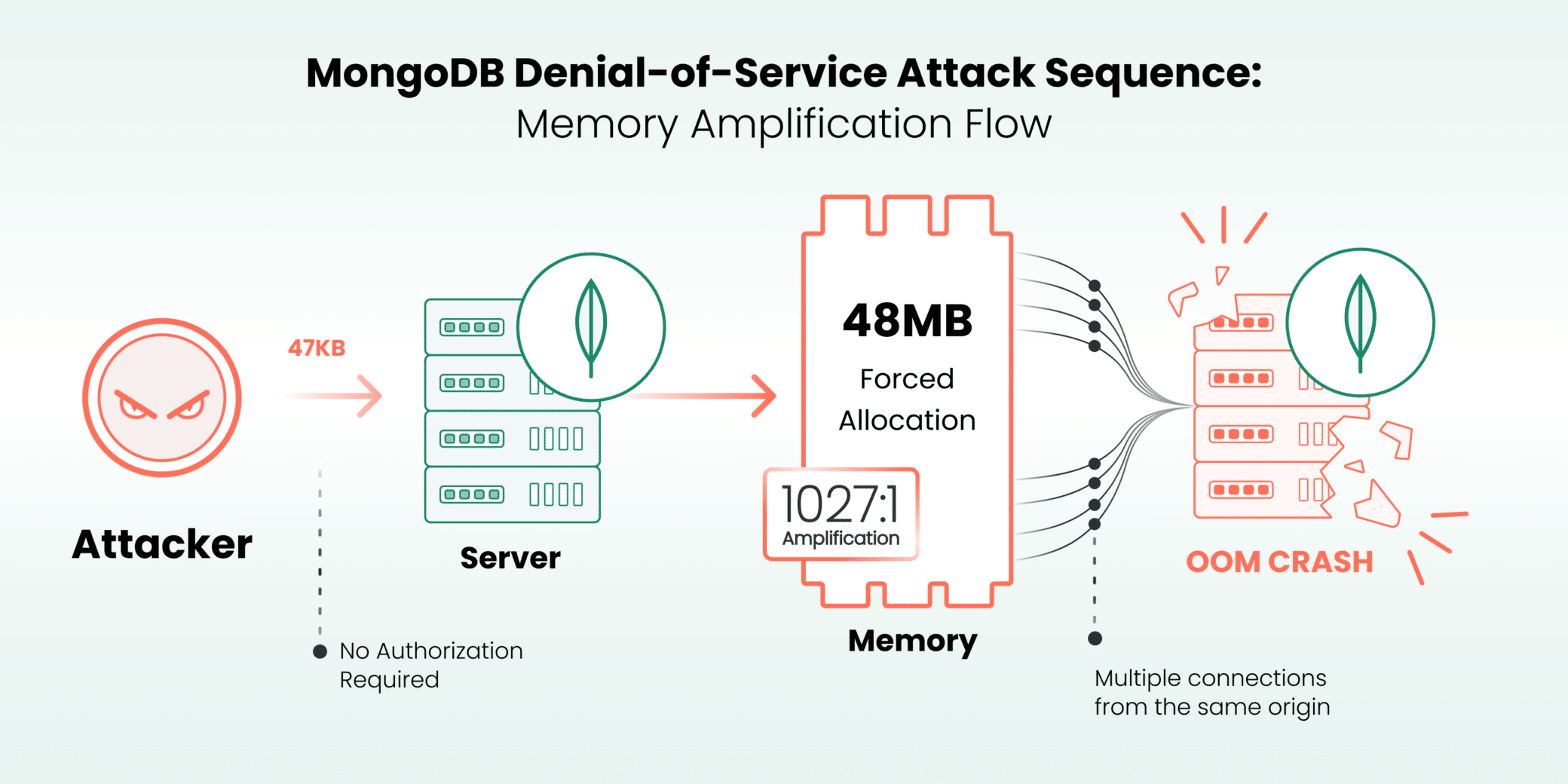

MongoDB Server allocates memory based on threat actor-controlled values before validating them, enabling unauthenticated threat actors to force 48MB allocations with 47KB packets. With as few as 3-10 connections, a threat actor can crash small MongoDB instances in seconds. Even 64GB enterprise servers fall with ~1,350 connections.

- What we found: MongoDB allocates memory based on the threat actor-controlled `uncompressedSize` field in OP_COMPRESSED wire protocol messages before validating that the compressed data actually decompresses to the claimed size. A threat actor can send crafted packets (~47KB each) that force the server to allocate 48MB per connection, resulting in a 1027:1 amplification ratio.

- How it works: The vulnerable code path in `message_compressor_manager.cpp` calls `SharedBuffer::allocate(uncompressedSize)` before attempting decompression. By claiming a massive decompressed size while sending minimal actual data, threat actors force enormous memory allocations that persist until the connection closes.

- Why it matters:

- Pre-authentication: No credentials required. Exploits wire protocol parsing before any auth check.

- High amplification: 47KB sent forces 48MB allocated (1027:1 ratio).

- Fast: The server runs out of memory (OOM) and is killed within seconds (a 512MB instance crashes with just 10 connections).

- Default vulnerable: Compression is enabled by default since MongoDB 3.6.

- Affected versions: MongoDB versions prior to 8.2.4 / 8.0.18 / 7.0.29 with compression enabled (3.4+, enabled by default since 3.6).

- Note: For more information, visit the MongoDB website.

Figure 2. MongoDB DoS attack sequence

Technical Overview

Before diving into the vulnerability, here’s a quick primer on the relevant MongoDB internals.

Background: Understanding the Concepts

MongoDB Wire Protocol

The MongoDB Wire Protocol is the binary protocol used for communication between clients (drivers, shells, applications) and MongoDB servers over transmission control protocol (TCP). By default, MongoDB listens on port 27017.

Every message starts with a standard 16-byte header containing:

- messageLength: Total size of the message.

- requestID: Unique identifier for the request.

- responseTo: ID of the request this message responds to (0 for requests).

- opCode: The operation type (e.g., 2013 for OP_MSG, 2012 for OP_COMPRESSED).
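
As a minimal sketch of the header described above, the four fields can be decoded as little-endian 32-bit integers. The names `MsgHeader` and `parse_header` are illustrative and not part of any MongoDB driver; the sample bytes are synthetic.

```python
import struct
from typing import NamedTuple

# Four little-endian signed 32-bit integers: 4 x 4 = 16 bytes.
HEADER = struct.Struct("<iiii")


class MsgHeader(NamedTuple):
    message_length: int  # total size of the message, header included
    request_id: int      # unique identifier for the request
    response_to: int     # request ID being answered (0 for requests)
    op_code: int         # e.g. 2013 for OP_MSG, 2012 for OP_COMPRESSED


def parse_header(data: bytes) -> MsgHeader:
    """Decode the first 16 bytes of a wire protocol message."""
    if len(data) < HEADER.size:
        raise ValueError("need at least 16 bytes for a message header")
    return MsgHeader(*HEADER.unpack_from(data))


# Synthetic example: a header-only message with opCode 2013.
sample = HEADER.pack(16, 1, 0, 2013)
print(parse_header(sample))
```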

OP_COMPRESSED: Wire Protocol Compression

Introduced in MongoDB version 3.4, OP_COMPRESSED (opcode 2012) wraps any standard message with compression. It supports three algorithms: snappy, zlib, and zstd.

During the initial handshake, client and server negotiate which compressors to use. Once agreed, either side can send compressed messages. The receiver uses the `compressorId` byte to know which algorithm to apply.

The Decompression Flow

When a server receives an OP_COMPRESSED message, the `MessageCompressorManager` handles decompression:

- Parse the header, extract `uncompressedSize` and `compressorId`.

- Allocate a buffer of `uncompressedSize` bytes.

- Decompress the payload into that buffer.

- Validate that the decompressed size matches the claimed size.

- Process the inner message as if it arrived uncompressed.

The vulnerability lies in step 2 happening before step 4. The server trusts the claimed size and allocates memory before verifying it.
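
To illustrate the ordering problem in general terms, here is a hedged Python sketch of a "validate first, then allocate" flow for zlib data. It is not MongoDB's patch and does not mirror the C++ code. The function name `safe_decompress` and the `MAX_ZLIB_RATIO` bound (an assumed upper limit on DEFLATE's compression ratio) are illustrative choices.

```python
import zlib

MAX_MESSAGE_SIZE = 48_000_000  # per-message cap cited in this article
MAX_ZLIB_RATIO = 1032          # assumed upper bound on DEFLATE's ratio


def safe_decompress(payload: bytes, claimed_size: int) -> bytes:
    """Check the claimed size before any large buffer exists."""
    if not 0 < claimed_size <= MAX_MESSAGE_SIZE:
        raise ValueError("claimed size out of range")
    # Plausibility check: the claim cannot exceed what the payload
    # could possibly expand to.
    if claimed_size > len(payload) * MAX_ZLIB_RATIO:
        raise ValueError("claimed size implausible for payload length")
    # Bounded decompression: output grows with the real data and is
    # capped at one byte past the claim, so oversize streams are caught.
    decompressor = zlib.decompressobj()
    output = decompressor.decompress(payload, claimed_size + 1)
    if len(output) != claimed_size or not decompressor.eof:
        raise ValueError("decompressed size does not match claimed size")
    return output


body = b"a" * 10_000
packed = zlib.compress(body)
print(len(safe_decompress(packed, len(body))))
```

In this sketch memory use follows the actual decompressed output rather than the value claimed in the header, which is the property the vulnerable ordering lacks.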

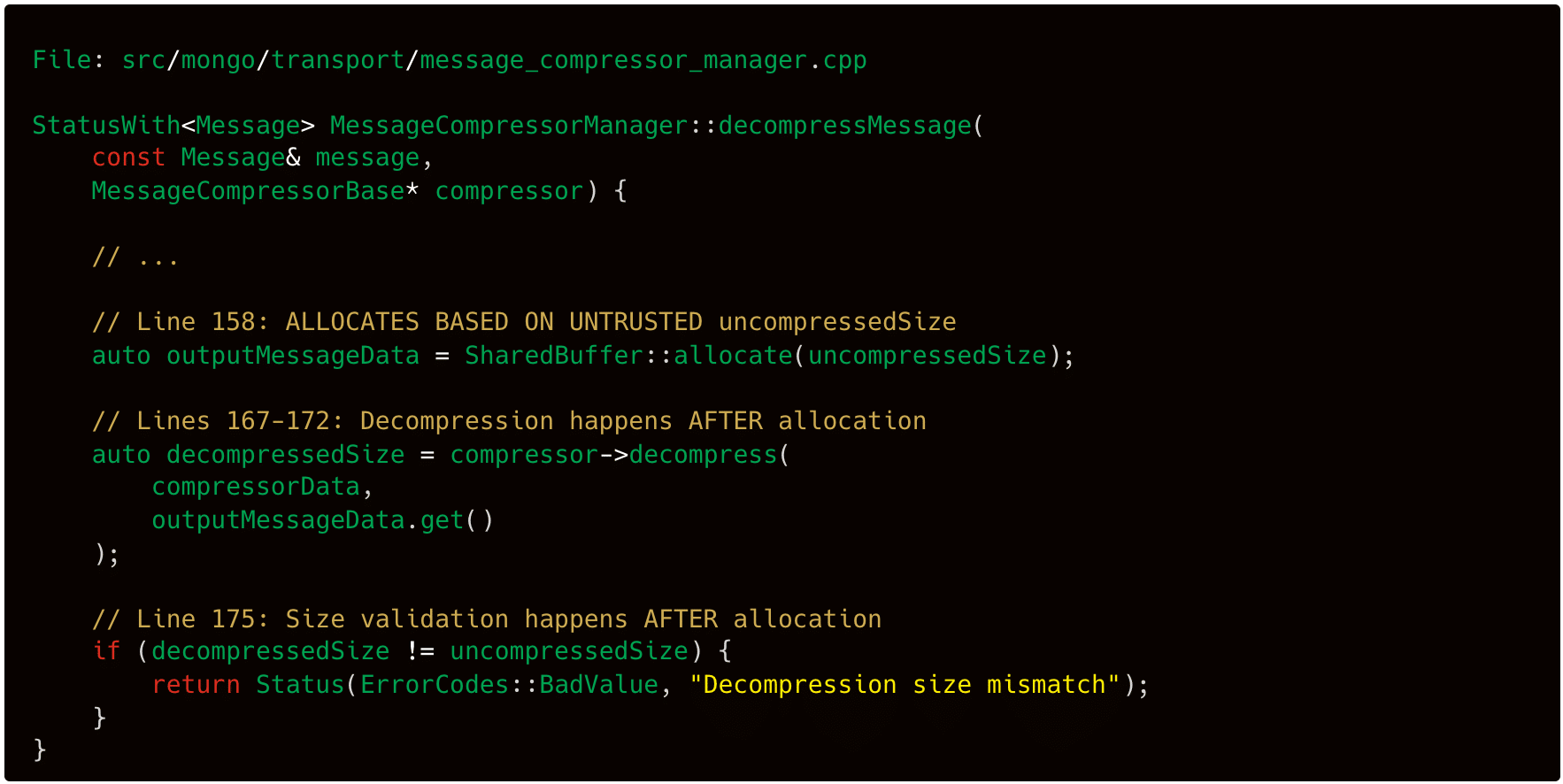

The Vulnerable Code Path

The vulnerability exists in MongoDB’s message decompression logic. When the server receives an OP_COMPRESSED message, it extracts the `uncompressedSize` field from the packet header and immediately allocates a buffer of that size before verifying the compressed data actually decompresses to match.

Figure 3. MongoDB vulnerable code

The problem: memory allocation happens at line 158 based on untrusted input. Validation happens at line 175 after the damage is done.

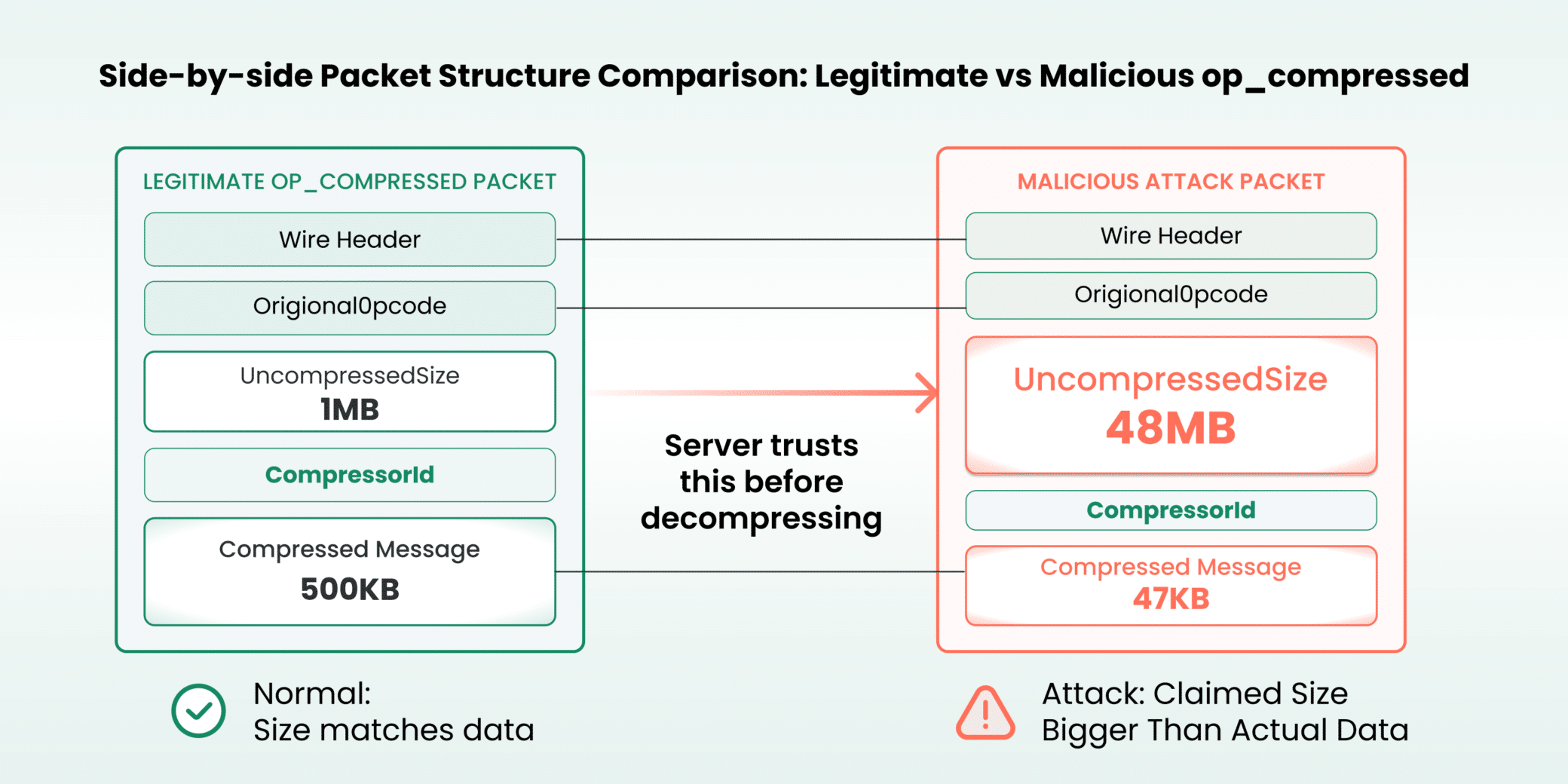

Wire Protocol Structure

OP_COMPRESSED messages follow this structure:

The `uncompressedSize` field is 4 bytes, allowing claims up to ~4GB. MongoDB caps this at 48MB per message, but that’s still enough to cause significant damage when multiplied across connections.
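
As a rough sketch of that layout, based on the public wire protocol documentation: the 16-byte header is followed by `originalOpcode` (int32), `uncompressedSize` (int32), `compressorId` (one byte), and then the compressed payload. The helper name `parse_op_compressed` is illustrative, and the sample message is a benign, synthetic one whose claimed size matches its real size.

```python
import struct
import zlib

HEADER = struct.Struct("<iiii")  # messageLength, requestID, responseTo, opCode
PREFIX = struct.Struct("<iiB")   # originalOpcode, uncompressedSize, compressorId
OP_COMPRESSED = 2012
COMPRESSORS = {0: "noop", 1: "snappy", 2: "zlib", 3: "zstd"}


def parse_op_compressed(message: bytes) -> dict:
    """Split an OP_COMPRESSED message into its fields."""
    message_length, _req, _resp, op_code = HEADER.unpack_from(message, 0)
    if op_code != OP_COMPRESSED:
        raise ValueError(f"not OP_COMPRESSED: opCode={op_code}")
    original_opcode, uncompressed_size, compressor_id = PREFIX.unpack_from(
        message, HEADER.size
    )
    payload = message[HEADER.size + PREFIX.size:message_length]
    return {
        "original_opcode": original_opcode,
        "uncompressed_size": uncompressed_size,  # sender-supplied claim
        "compressor": COMPRESSORS.get(compressor_id, "unknown"),
        "payload": payload,
    }


# Benign synthetic sample: the claim equals the true inner size.
inner = b"example inner message body " * 4
compressed = zlib.compress(inner)
total = HEADER.size + PREFIX.size + len(compressed)
sample = (
    HEADER.pack(total, 1, 0, OP_COMPRESSED)
    + PREFIX.pack(2013, len(inner), 2)
    + compressed
)
fields = parse_op_compressed(sample)
print(fields["compressor"], fields["uncompressed_size"], len(fields["payload"]))
```

The point to notice is that `uncompressed_size` is read straight from the message and is only a claim until the payload has actually been decompressed.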

Figure 4. Side-by-side packet structure comparison: legitimate vs. malicious

Attack Mechanics

- Threat actor creates a zlib-compressed payload (~47KB) containing valid repetitive data.

- Threat actor sets `uncompressedSize` to maximum allowed (48,000,000 bytes).

- Server receives packet and extracts `uncompressedSize` from header.

- Server allocates 48MB buffer before attempting decompression.

- Decompression completes successfully (data is valid). Memory remains allocated.

- Threat actor opens concurrent connections (10 for a 512MB server, 25 for a 1GB server, etc.).

- Server exhausts available memory → OOM killed by kernel (exit code 137).

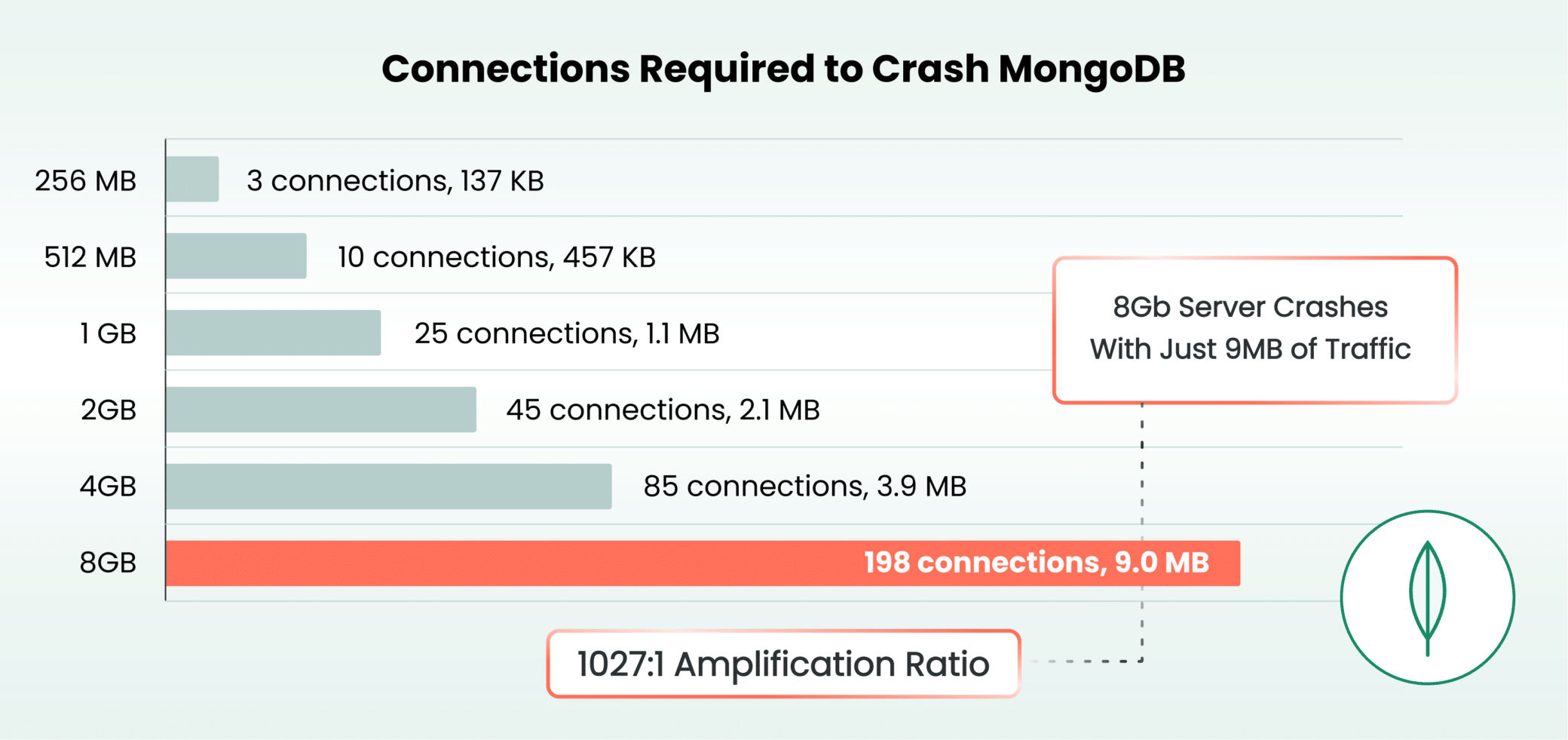

The compressed data is valid. It genuinely decompresses to the claimed size. The server keeps the connection open, waiting for the next message, and the allocated buffer persists. Our testing showed a 512MB MongoDB instance crashes with just 10 connections (~457KB of attack traffic), while an 8GB instance requires 198 connections (~9MB).

Connections Required for OOM

We tested MongoDB version 8.0 in Docker containers with various memory limits to determine the precise number of connections needed to trigger an OOM kill. Each connection sends a ~47KB payload that forces a 48MB allocation, which is a 1,027:1 amplification ratio. To put that into perspective, 47KB is a short email. 48MB is roughly the equivalent of an audio podcast. Each connection tricks the server into reserving a podcast episode’s worth of memory for an email’s worth of data.
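
The amplification figure can be sanity-checked locally without touching a server. The sketch below compresses 48,000,000 zero bytes with Python's standard `zlib` module and reports the resulting ratio. The exact byte count depends on the zlib build and compression level, so treat the output as indicative rather than as a reproduction of our test payload.

```python
import zlib

CLAIMED_SIZE = 48_000_000  # per-message cap cited in this article

# All-zero input is close to the best case for DEFLATE.
compressed = zlib.compress(bytes(CLAIMED_SIZE))
ratio = CLAIMED_SIZE / len(compressed)
print(f"{len(compressed):,} compressed bytes, about {ratio:.0f}:1")
```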

Key observations:

- Formula: `Min Connections ≈ (RAM_MB - 100) / 48` – MongoDB’s ~100MB base memory usage leaves the rest vulnerable.

- Linear scaling: Connections needed scale linearly with available random-access memory (RAM) (~20 per GB).

- Minimal bandwidth: Even an 8GB server falls with just 9MB of attack traffic.

- Single machine: All tests conducted from a single threat actor machine.
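
The formula above can be written as a small estimator. This is a back-of-the-envelope sketch: the 100MB baseline, 48MB per connection, and ~47KB per payload come from this article, while the function name `estimate_attack` is illustrative. The formula is an approximation, so its output will not match the measured connection counts exactly.

```python
import math

BASE_USAGE_MB = 100     # approximate MongoDB baseline memory usage
PER_CONNECTION_MB = 48  # allocation forced per connection
PAYLOAD_KB = 47         # approximate payload sent per connection


def estimate_attack(ram_mb: int) -> tuple[int, int]:
    """Return (estimated connections, approximate traffic in KB)."""
    connections = math.ceil((ram_mb - BASE_USAGE_MB) / PER_CONNECTION_MB)
    return connections, connections * PAYLOAD_KB


for ram_mb in (512, 1024, 8192, 65536):
    connections, traffic_kb = estimate_attack(ram_mb)
    print(f"{ram_mb} MB RAM: ~{connections} connections, ~{traffic_kb} KB sent")
```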

Extrapolating to production deployments:

Even well-provisioned enterprise deployments can be overwhelmed. A threat actor on a typical home connection could crash a 64GB MongoDB server in under a minute.

Figure 5. Number of connections required to take down MongoDB servers

Conclusion

The MongoDB vulnerability can enable a threat actor to take down any MongoDB server. According to Shodan, 207,000+ MongoDB instances are exposed to the internet at the time of writing. This vulnerability turns MongoDB’s compression feature, which is designed to improve performance, into an attack surface.

The fix is straightforward: validate the claimed `uncompressedSize` against the actual compressed data length before allocation. Compression algorithms have known maximum ratios; zlib can’t achieve 1027:1 compression on random data. Because each connection holds its own allocation, the cumulative allocated size across connections can crash a MongoDB server. Organizations should review whether their MongoDB deployments require direct internet exposure.

Protections

For MongoDB customers:

- Restrict network access: Limit MongoDB exposure to trusted networks using firewalls and virtual private networks (VPNs).

- Implement connection limits: Configure `maxIncomingConnections` and use OS-level connection rate limiting.

- Monitor memory usage: Alert on unusual memory consumption patterns.

- Consider disabling compression: If your workload doesn’t benefit from compression, disable it with `--networkMessageCompressors=disabled`.

- Update to a patched version: MongoDB versions prior to 8.2.4 / 8.0.18 / 7.0.29 with compression enabled (3.4+, enabled by default since 3.6) are affected.

For Cato customers:

- IPS Protections: Cato IPS detects and blocks malformed OP_COMPRESSED packets and abnormal connection patterns targeting MongoDB.

- Network Segmentation: Use Cato’s network segmentation capabilities in the Cato SASE Platform to isolate database traffic from untrusted sources.

- MDR Monitoring: Cato MDR can triage suspicious MongoDB traffic patterns, including connection floods and unusual packet sizes.

Indicators of Compromise (IoCs)

Network Indicators:

- High volume of TCP connections to port 27017 (or any other configured port) from a single source.

- OP_COMPRESSED packets (opCode 2012) with large `uncompressedSize` values (>10MB) but small total packet size (<100KB).

- Rapid connection establishment followed by idle connections.
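
The packet-level indicator above can be expressed as a simple check on the first 25 bytes of a message. This is a hedged sketch for offline analysis, such as inspecting captured traffic. The function name `is_suspicious` is illustrative, and the thresholds are the ones listed above rather than tuned detection values.

```python
import struct

# Header (4 x int32), then originalOpcode, uncompressedSize, compressorId.
PREFIX = struct.Struct("<iiiiiiB")
OP_COMPRESSED = 2012
MIN_CLAIMED_BYTES = 10_000_000  # ">10MB" threshold from the list above
MAX_PACKET_BYTES = 100_000      # "<100KB" threshold from the list above


def is_suspicious(first_bytes: bytes) -> bool:
    """Flag a large claimed size paired with a small packet."""
    if len(first_bytes) < PREFIX.size:
        return False
    (message_length, _req, _resp, op_code,
     _orig, uncompressed_size, _comp) = PREFIX.unpack_from(first_bytes)
    return (
        op_code == OP_COMPRESSED
        and uncompressed_size > MIN_CLAIMED_BYTES
        and message_length < MAX_PACKET_BYTES
    )


# Synthetic header fields only; no payload is attached.
flagged = PREFIX.pack(47_000, 1, 0, OP_COMPRESSED, 2013, 48_000_000, 2)
ordinary = PREFIX.pack(900, 2, 0, OP_COMPRESSED, 2013, 4_000, 2)
print(is_suspicious(flagged), is_suspicious(ordinary))
```

A check like this will also flag legitimate, highly compressible messages, so it is better suited to triage alongside the connection-volume indicators than to blocking on its own.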

System Indicators:

- MongoDB process memory usage spiking rapidly.

- OOM killer events in system logs targeting mongod.

- MongoDB exit code 137 (SIGKILL from OOM).
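
As a small illustration of the system indicators, exit code 137 is 128 plus signal number 9 (SIGKILL on Linux), and OOM kills are recorded in the kernel log. The sketch below assumes a kernel log line of the form `Killed process <pid> (mongod)`. Exact wording varies across kernel versions, and the sample lines are made up for the example.

```python
import re

SIGKILL = 9  # signal number on Linux
OOM_KILL = re.compile(r"Killed process (\d+) \(mongod\)")


def killed_by_sigkill(exit_code: int) -> bool:
    """Exit codes above 128 conventionally encode 128 + signal number."""
    return exit_code == 128 + SIGKILL


def mongod_oom_kills(log_lines: list[str]) -> list[int]:
    """Return PIDs of mongod processes the OOM killer terminated."""
    pids = []
    for line in log_lines:
        match = OOM_KILL.search(line)
        if match:
            pids.append(int(match.group(1)))
    return pids


# Illustrative log lines, not captured from a real system.
sample_log = [
    "kernel: Out of memory: Killed process 4321 (mongod) total-vm:1048576kB",
    "kernel: Out of memory: Killed process 777 (nginx) total-vm:20480kB",
]
print(killed_by_sigkill(137), mongod_oom_kills(sample_log))
```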