Securing Agentic AI: Why Visibility, Behavior, and Guardrails Matter

Agentic AI is quickly transitioning from experimentation to production. Enterprises are deploying AI agents to interpret goals, decide what actions to take, interact with business tools and APIs, and execute those actions autonomously, with limited or no human oversight. The promise is speed and efficiency, but the proverbial “blast radius” is bigger and fundamentally different from anything security teams have managed before.

Traditional AI security focuses on models and data. Agentic AI introduces something new: autonomous behavior. Agents invoke tools via APIs, inherit permissions, process prompts dynamically, and adapt based on context. Without the right controls, organizations quickly lose track of what agents exist, what they can do, and whether they are behaving as intended.

To secure agentic AI effectively, organizations must address three critical challenges:

- Visibility and Posture – understanding which agents exist and how they are configured

- Runtime Behavior – understanding agentic activity at runtime

- Guardrails and Controls – detecting and/or blocking risky agentic behavior

The First Challenge: Unclear Agent Inventory and Posture

As AI adoption accelerates, agents are being deployed across local environments, managed platforms, and custom-built frameworks. Many organizations lack clear answers to basic questions:

- What agents exist?

- What tools do they invoke?

- What permissions do they have?

- Where is access broader than necessary?

Without this visibility, security teams struggle to identify over-privileged agents, risky tool connections, and configuration gaps, especially before agents run in production. This mirrors early cloud security challenges, where misconfigurations and excessive permissions became widespread due to a lack of centralized visibility.

Agentic AI security must start with visibility and posture management, which involves identifying all agents, understanding their capabilities, mapping tool access, and defining expected behavior to proactively reduce risk.
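As a rough illustration of the posture check described above, the sketch below compares the tools each agent has been granted against the tools its task actually needs. The `AgentRecord` structure, the agent names, and the tool names are all hypothetical and are not taken from any specific product or platform.

```python
from dataclasses import dataclass


@dataclass
class AgentRecord:
    """One entry in a hypothetical agent inventory."""
    name: str
    granted_tools: set[str]   # tools the agent is permitted to call
    required_tools: set[str]  # tools its documented task actually needs


def excess_tools(agent: AgentRecord) -> set[str]:
    """Return tools granted to the agent that its task does not require."""
    return agent.granted_tools - agent.required_tools


def posture_report(inventory: list[AgentRecord]) -> dict[str, list[str]]:
    """Map each over-privileged agent name to its sorted excess tools."""
    report = {}
    for agent in inventory:
        extra = excess_tools(agent)
        if extra:
            report[agent.name] = sorted(extra)
    return report


# Illustrative inventory; every name here is an assumption.
inventory = [
    AgentRecord(
        name="invoice-bot",
        granted_tools={"read_invoices", "send_email", "delete_records"},
        required_tools={"read_invoices", "send_email"},
    ),
    AgentRecord(
        name="faq-bot",
        granted_tools={"search_docs"},
        required_tools={"search_docs"},
    ),
]

print(posture_report(inventory))  # {'invoice-bot': ['delete_records']}
```

In practice the inventory would be discovered from the platforms where agents run rather than written by hand, but the core comparison of granted versus required access stays the same.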

The Second Challenge: Limited Visibility Into Runtime Agent Behavior

Configuration alone does not reflect reality. An agent may be approved and properly configured yet behave in unexpected ways once it begins operating.

At runtime, agents:

- Process dynamic prompts

- Decide which tools to call

- Execute multi-step workflows

- Adapt behavior based on context

Without runtime visibility, teams cannot validate behavior, troubleshoot issues, or effectively investigate incidents. An agent might chain tool calls in unintended ways or interpret prompts differently than expected, leading to risky outcomes.

Effective agentic AI security requires continuous monitoring of actual behavior. Capturing prompts, tool calls, workflow steps, and decisions provides clear visibility into how agents behave at runtime. Comparing this activity against approved scopes and policies enables teams to detect anomalies early and understand not only what happened, but also why.
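The comparison of runtime activity against an approved scope can be sketched as follows. This is a minimal example under stated assumptions: the `ToolCallEvent` record, the `APPROVED_SCOPE` mapping, and the agent and tool names are invented for illustration. Keeping the prompt excerpt next to each tool call is what lets a reviewer see why an action happened, not only that it happened.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCallEvent:
    """A hypothetical runtime record of one tool call made by an agent."""
    agent: str
    step: int
    tool: str
    prompt_excerpt: str  # the prompt fragment that led to this call


# Assumed policy: the tools each agent is approved to use.
APPROVED_SCOPE = {"support-agent": {"search_kb", "create_ticket"}}


def find_out_of_scope(events, approved_scope):
    """Return events whose tool is not in the calling agent's approved scope."""
    return [
        e for e in events
        if e.tool not in approved_scope.get(e.agent, set())
    ]


# Illustrative trace of a single multi-step workflow.
trace = [
    ToolCallEvent("support-agent", 1, "search_kb", "customer asks about refund policy"),
    ToolCallEvent("support-agent", 2, "create_ticket", "open a ticket for the refund"),
    ToolCallEvent("support-agent", 3, "export_customers", "also send me the full customer list"),
]

violations = find_out_of_scope(trace, APPROVED_SCOPE)
for v in violations:
    print(f"step {v.step}: {v.agent} called {v.tool!r} (prompt: {v.prompt_excerpt!r})")
```

Here only step 3 is reported, because `export_customers` is not in the scope approved for `support-agent`.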

The Third Challenge: Unsafe Tool Requests and Toxic Agent Flows

The most serious risks in agentic AI arise when agents make unsafe tool requests and create toxic execution flows. Agents may invoke tools outside their approved scope, chain actions in risky sequences, or rely on data from untrusted or unverified sources. These behaviors can distort decision-making, produce unsafe outputs, and cause agents to act on inputs that should never influence outcomes.

To mitigate this risk, organizations need real-time policy protection that evaluates agent actions as they occur. This includes identifying out-of-scope or high-risk tool requests, detecting toxic execution paths, and preventing agents from using untrusted data sources.
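One way to picture this kind of inline evaluation is a function that receives the tools an agent has already called in the current workflow plus the tool it is requesting next, and returns a verdict. The sketch below is an assumption-laden illustration, not a description of any vendor's enforcement engine: the tool names, the three sets, and the rule that untrusted input followed by an outbound action counts as a toxic flow are all hypothetical.

```python
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    ALERT = "alert"
    BLOCK = "block"


# Hypothetical policy sets; real policies would be defined per agent.
APPROVED_TOOLS = {"fetch_url", "read_crm", "send_email", "delete_records"}
UNTRUSTED_SOURCES = {"fetch_url"}      # tools returning unverified external content
SENSITIVE_SINKS = {"send_email"}       # tools that can move data out
HIGH_RISK_TOOLS = {"delete_records"}   # approved, but worth flagging on every use


def evaluate(history: list[str], requested_tool: str) -> Verdict:
    """Evaluate one tool request against the workflow's prior tool calls."""
    # Out-of-scope request: the tool is not approved at all.
    if requested_tool not in APPROVED_TOOLS:
        return Verdict.BLOCK
    # Toxic flow: untrusted content was read earlier, and the agent now
    # wants to perform an action that can send data outward.
    tainted = any(tool in UNTRUSTED_SOURCES for tool in history)
    if tainted and requested_tool in SENSITIVE_SINKS:
        return Verdict.BLOCK
    # High-risk but approved: allow the call and raise an alert.
    if requested_tool in HIGH_RISK_TOOLS:
        return Verdict.ALERT
    return Verdict.ALLOW


print(evaluate([], "run_shell"))                          # Verdict.BLOCK
print(evaluate(["fetch_url", "read_crm"], "send_email"))  # Verdict.BLOCK
print(evaluate(["read_crm"], "send_email"))               # Verdict.ALLOW
print(evaluate(["read_crm"], "delete_records"))           # Verdict.ALERT
```

The same `send_email` request is allowed in one workflow and blocked in another, which is the point: the decision depends on the execution path, not on the tool alone.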

Without consistent real-time policy enforcement across agent workflows, enterprises lose control over how agents reason, act, and generate outputs, increasing the likelihood that agents drift beyond their intended scope.

Why Agentic AI Security Requires a New Approach

Agentic AI is not just another application or model. It is an autonomous actor operating across systems, tools, and data. Securing it requires moving beyond static configuration checks to continuous, behavior-driven security, with clear identity, runtime insight, and consistent enforcement. Organizations that get this right can maintain trust, control, and accountability over their agentic AI.

How Cato AI Security Delivers Value

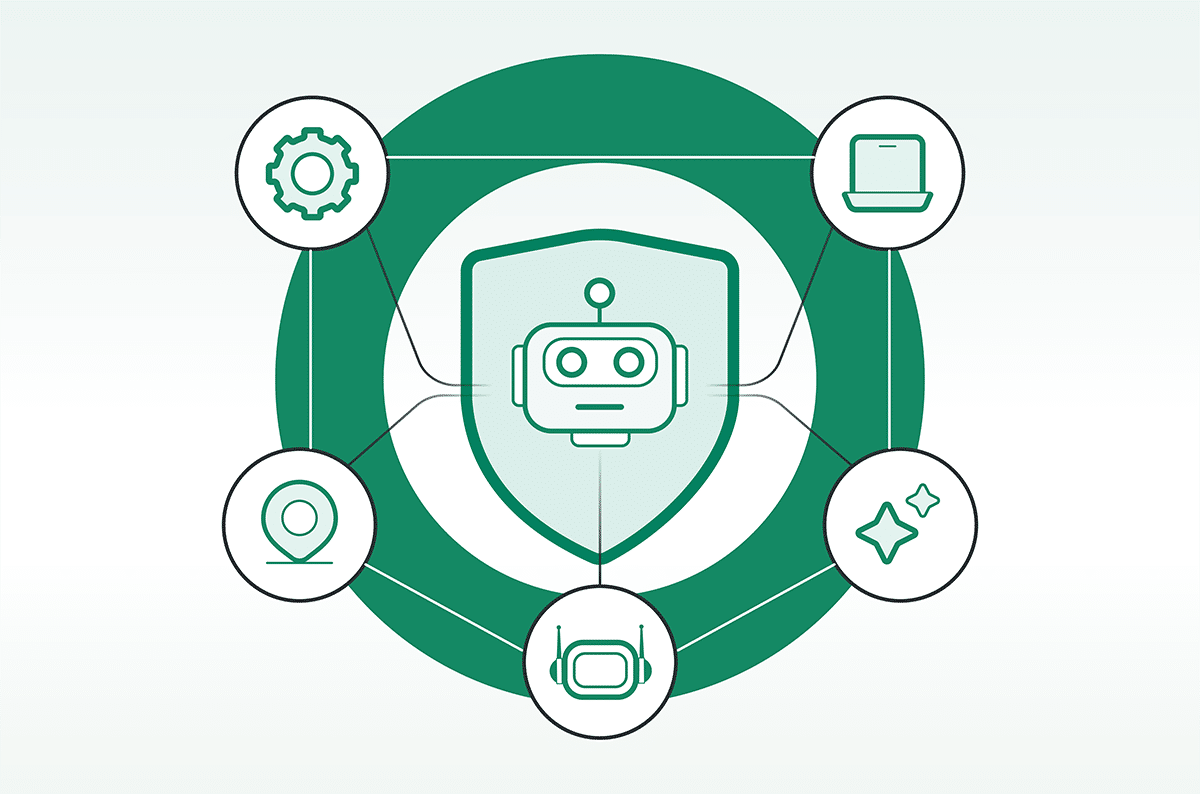

Cato Agentic AI Security directly addresses these challenges by bringing visibility, control, and consistency to autonomous agents:

- Cato establishes clear agent posture by identifying agents, mapping tool access and permissions, and defining expected behavior before execution, helping teams uncover over-privileged agents and risky connections early.

- Once agents are running, Cato delivers real-time visibility into prompts, decisions, and tool activity, creating traceable records that show how actions were initiated and whether agents stayed within approved scopes.

- Cato also enforces consistent runtime guardrails. By evaluating prompts, workflow signals, and tool requests, Cato identifies manipulation attempts and unsafe actions and either blocks them inline or raises alerts based on policy and agent capability, keeping behavior safe and predictable even as conditions change.

The True Value of Cato Extends Beyond Agentic AI Alone

Agentic AI is one part of a broader enterprise AI landscape that includes how employees use AI, the AI applications companies build, and the overall AI security posture. Cato AI Security brings these together through a single unified solution that provides a holistic view of AI risk, behavior, and control across the organization.

With Cato, enterprises do not just secure agents. They secure AI as a system, enabling safe, scalable AI innovation without blind spots.

Securing Agentic AI is a native part of the Cato AI Security solution.