OpenClaw: Cato Governance Controls and Sector Exposure Insights from the Cato SASE Platform

Table of Contents

- 1. Why AI Service Exposure Needs Continuous Enforcement

- 2. The problem pattern we are addressing

- 3. Where we see the most exposure, and why

- 4. The new autonomous posture in the Cato SASE Platform

- 5. Why we rate this as HIGH impact

- 6. Internet Firewall enforcement with the OpenClaw app signature

- 7. What you will see in the Cato SASE Platform

- 8. Final Thoughts


Agentic AI does not just answer; it acts. The moment an agent has a reachable control plane, you have effectively created a “remote hands” interface into your environment. In our recent blog post, “When AI Can Act: Governing OpenClaw,” we explained why this shift breaks old security assumptions and why governance must be continuous, enforced, and context-aware rather than a one-time checklist. We also shared insights into third-party applications already being used in workflows integrated with OpenClaw, along with early adoption trends. At the same time, the industry has seen how quickly things can go sideways when OpenClaw-style gateways are exposed to the internet, often unintentionally and at scale. Public reporting has highlighted tens of thousands of internet-exposed instances, largely driven by default bindings and permissive publishing patterns, which is why this needs to be governed as an ongoing security posture.

In this post, we share insights from the Cato SASE Platform on the overpermissive exposure trends we are seeing across sectors, explain how we make it easy for customers to quickly track and govern risky exposure of OpenClaw and other AI services through Remote Port Forwarding (RPF), and show how they can block OpenClaw in the Internet Firewall using the newly signed OpenClaw app.

Why AI Service Exposure Needs Continuous Enforcement

Remote access and remote control surfaces are not new. What is new is that AI services, and especially agentic frameworks like OpenClaw, can introduce a control plane that is both powerful and easy to expose by accident. In many environments, exposure happens through a combination of convenience and speed: a broad traffic source, an allow rule that “just works,” and a port range that accidentally includes a sensitive service. Over time, these patterns become invisible and normalized, until they are discovered externally.

We also see a second trend that makes governance even more urgent: OpenClaw’s ecosystem is expanding quickly, and the broader tooling and plugin landscape creates opportunities for abuse, including malicious “skills” designed to trick users or extend attacker reach.

This is exactly where posture management needs to move from periodic review to continuous control. In other words, do not wait for a quarterly audit to tell you that a sensitive AI service port was left reachable from everywhere.

The problem pattern we are addressing

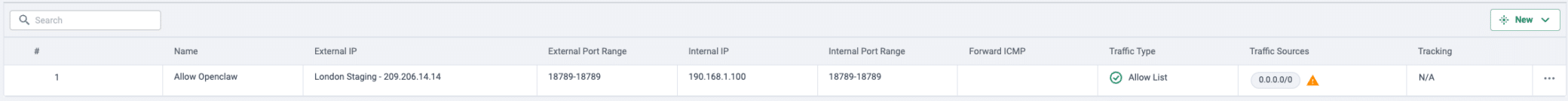

In customer environments, we repeatedly encounter RPF rules that were never intended to be globally reachable but effectively are. The highest-risk pattern is an enabled rule that allows a very broad, uncontrolled traffic source and includes ports associated with AI service gateways. For OpenClaw, one especially relevant example is TCP/18789, which has been observed in public exposure research and often falls inside port ranges that were created for “temporary” access and then forgotten.
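To make the pattern concrete, here is a minimal Python sketch of the combination described above: an enabled rule, a broad source, and a port range that contains TCP/18789. The `RpfRule` data model, the choice to treat `any` or an IPv4 prefix of /8 or wider as a “broad” source, and the `RISKY_AI_PORTS` table are hypothetical assumptions for illustration only; this is not how the Cato SASE Platform represents rules or implements its posture logic.

```python
"""Minimal sketch: flag enabled RPF rules that combine a broad source with a risky AI-service port.

Hypothetical data model and thresholds; not the Cato SASE Platform's implementation.
"""
import ipaddress
from dataclasses import dataclass

# Assumption: high-risk AI-service ports to look for. TCP/18789 is the
# OpenClaw gateway port discussed in this post; extend the table as needed.
RISKY_AI_PORTS = {18789: "OpenClaw gateway"}

# Assumption: a source is "broad" if it is "any" or an IPv4 prefix of /8 or wider.
BROAD_MAX_PREFIXLEN = 8


@dataclass
class RpfRule:
    name: str
    enabled: bool
    source: str      # "any" or an IPv4 CIDR such as "0.0.0.0/0"
    port_start: int  # first forwarded port (inclusive)
    port_end: int    # last forwarded port (inclusive)


def is_broad_source(source: str) -> bool:
    """Return True if the rule's traffic source is effectively unrestricted."""
    if source.strip().lower() == "any":
        return True
    network = ipaddress.IPv4Network(source, strict=False)
    return network.prefixlen <= BROAD_MAX_PREFIXLEN


def exposed_risky_ports(rule: RpfRule) -> list[int]:
    """Risky AI-service ports that fall inside the rule's port range."""
    return sorted(p for p in RISKY_AI_PORTS if rule.port_start <= p <= rule.port_end)


def is_risky(rule: RpfRule) -> bool:
    """Enabled rule + broad source + at least one risky port in range."""
    return rule.enabled and is_broad_source(rule.source) and bool(exposed_risky_ports(rule))


if __name__ == "__main__":
    rules = [
        RpfRule("temp-vendor-access", True, "any", 18000, 19000),
        RpfRule("ssh-jump", True, "203.0.113.10/32", 22, 22),
        RpfRule("old-lab", False, "0.0.0.0/0", 18789, 18789),
    ]
    for rule in rules:
        status = "Failed" if is_risky(rule) else "Passed"
        print(f"{rule.name}: {status} {exposed_risky_ports(rule)}")
```

In this sketch the disabled `old-lab` rule still covers TCP/18789 but is not flagged, in line with the focus on enabled rules; it would start failing the moment someone re-enables it.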

Once this kind of rule exists, it can become a quiet pathway into systems that sit behind what teams believe is a hardened perimeter. If the service behind that port is an agent gateway or something that can trigger actions, the operational risk and the blast radius change dramatically.

Where we see the most exposure, and why

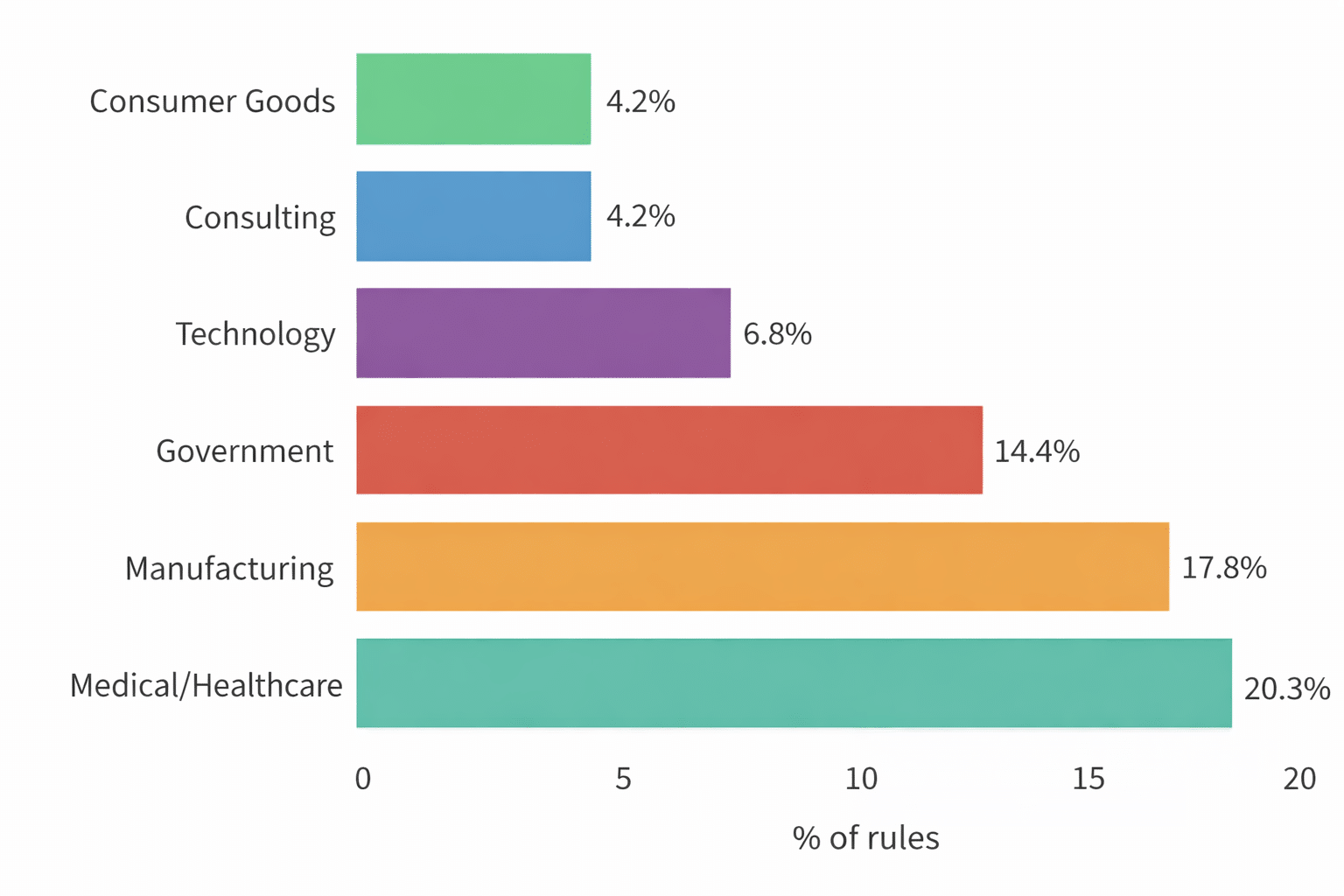

Our Cato SASE Platform telemetry shows that overly permissive AI-service exposure in Remote Port Forwarding is not evenly distributed across sectors. In Figure 1, we show the top six sectors by share of exposed rules: Medical and Healthcare (20.3%), Manufacturing (17.8%), Government (14.4%), Technology (6.8%), Consulting (4.2%), and Consumer Goods (4.2%). The concentration at the top is meaningful. These are environments where operational urgency and always-on connectivity routinely collide with security guardrails. They also tend to have a higher proportion of third-party access, distributed sites, and systems that must remain reachable for support, integration, or automation. That combination increases the likelihood that a “temporary” RPF rule is created broadly, works, and then stays in place longer than intended.
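The concentration is easy to quantify from the Figure 1 numbers. The short Python calculation below assumes, as the caption implies, that each percentage is a share of all flagged rules; because the inputs are rounded to one decimal place, the totals are approximate.

```python
# Share of flagged RPF rules for the top six sectors, as reported in Figure 1.
sector_share = {
    "Medical and Healthcare": 20.3,
    "Manufacturing": 17.8,
    "Government": 14.4,
    "Technology": 6.8,
    "Consulting": 4.2,
    "Consumer Goods": 4.2,
}

# Round to one decimal to match the precision of the inputs.
top_three_total = round(sum(sorted(sector_share.values(), reverse=True)[:3]), 1)
top_six_total = round(sum(sector_share.values()), 1)
remainder = round(100 - top_six_total, 1)  # share spread across all other sectors

print(f"Top three: {top_three_total}%")    # Top three: 52.5%
print(f"Top six: {top_six_total}%")        # Top six: 67.7%
print(f"All other sectors: {remainder}%")  # All other sectors: 32.3%
```

Put differently, three sectors account for just over half of the flagged rules, and the top six for roughly two thirds.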

The sector drill-down adds an even sharper signal. Within Government, a large portion maps to Transportation, which commonly relies on geographically distributed infrastructure and vendor-supported systems that cannot tolerate downtime. In Healthcare, the exposure clusters around Retail-related healthcare, a space where patient-facing operations and partner integrations can drive rapid enablement and permissive access patterns. In Manufacturing, the concentration in the “Factories and Manufacturing” and “Industrial Machinery and Equipment” subsectors tracks with OT and hybrid environments, where remote troubleshooting, maintenance windows, and vendor access are frequent, and port exposure can be treated as operational plumbing rather than a policy risk. In Technology, the dominance of the “Internet and Software and Services” and “Information Technology and Services” subsectors points to an additional factor: earlier experimentation with agentic tooling and AI services, including OpenClaw-style gateways, alongside fast-moving build and support workflows. In short, the most exposed sectors share one theme: they are both highly operational and highly connected, which makes them more likely to open access quickly and more likely to forget to tighten it later.

Figure 1. Top sectors by percentage of overpermissive RPF rules flagged for risky AI-service exposure.

The new autonomous posture in the Cato SASE Platform

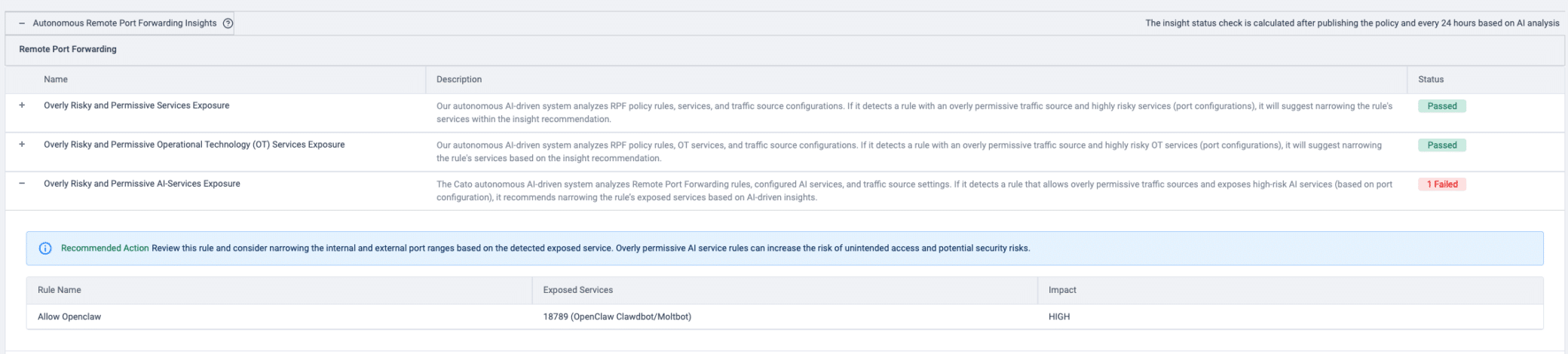

To help customers govern this risk immediately, we activated a new autonomous posture in the Cato SASE Platform under Remote Port Forwarding, titled “Overly Risky and Permissive AI-Services Exposure.” This posture continuously evaluates Remote Port Forwarding rules and highlights cases where an overly broad traffic source is combined with exposure of high-risk AI service ports in a way that can unintentionally expand the attack surface. When the platform identifies a risky combination on an enabled rule, it surfaces a clear Passed or Failed status along with the operational context an admin needs to act: the rule name, the exposed service indicator (for example, an exposed port such as TCP/18789, associated with OpenClaw, formerly known as Clawdbot and Moltbot), and an Impact: HIGH assessment.

It also provides a focused recommendation: carefully review the rule, consider narrowing the internal and external port ranges as indicated by the exposed-service detection, and reduce overly permissive exposure so the rule grants the minimum required access instead of the broadest possible access. Overly permissive AI-service rules can lead to unexpected outcomes and security risks.
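The recommendation to narrow port ranges can be illustrated with a small Python sketch. It assumes, hypothetically, that the admin knows which ports the forwarded service actually needs; it keeps only those ports from the original range, collapses them into the fewest contiguous ranges, and then confirms that TCP/18789 is no longer covered. The same idea would apply separately to a rule's internal and external ranges. All function names and port values here are illustrative, not part of any Cato API.

```python
"""Sketch: narrow an RPF port range to the ports a service actually needs.

Hypothetical helpers; the required-port list is an assumption supplied by the admin.
"""
# Assumption: TCP/18789 (OpenClaw gateway) is the risky port to keep out of scope.
RISKY_AI_PORTS = frozenset({18789})


def collapse_to_ranges(ports):
    """Collapse ports into sorted, non-overlapping, inclusive (start, end) ranges."""
    ranges = []
    for port in sorted(set(ports)):
        if ranges and port == ranges[-1][1] + 1:
            ranges[-1] = (ranges[-1][0], port)  # extend the current contiguous run
        else:
            ranges.append((port, port))         # start a new run
    return ranges


def narrow_range(start, end, required_ports):
    """Keep only required ports inside [start, end], so narrowing never widens a rule."""
    return collapse_to_ranges(p for p in required_ports if start <= p <= end)


def exposed_risky_ports(ranges):
    """Risky AI-service ports still covered by any of the given ranges."""
    return sorted(p for p in RISKY_AI_PORTS if any(lo <= p <= hi for lo, hi in ranges))


original = (18000, 19000)         # hypothetical broad "temporary" range
required = {18080, 18081, 18443}  # hypothetical ports the service really needs

narrowed = narrow_range(*original, required)
print("before:", exposed_risky_ports([original]))  # before: [18789]
print("after:", narrowed, exposed_risky_ports(narrowed))
# after: [(18080, 18081), (18443, 18443)] []
```

Required ports outside the original range are deliberately dropped, so the result can only shrink the exposure, never grow it.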

Why we rate this as HIGH impact

OpenClaw is not “just another service.” It is often deployed as an agentic interface that can bridge humans, automation, and operational workflows. That makes its exposure materially different from a standard web endpoint because it can become an action surface, not only a data surface.

Our earlier OpenClaw governance post explains the core security shift: once AI can act, governance must include both visibility and enforceable controls, and those controls must be able to respond immediately as configurations drift. This posture is one concrete example of that principle applied in the RPF domain.

Internet Firewall enforcement with the OpenClaw app signature

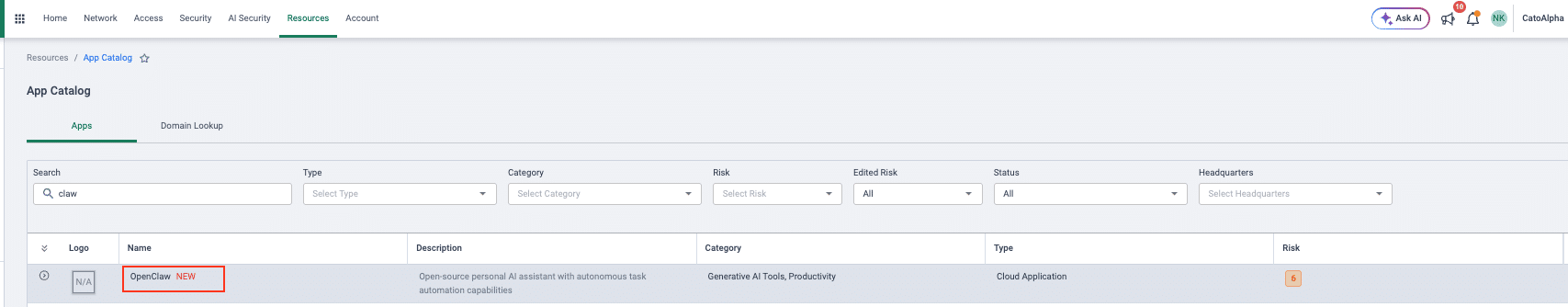

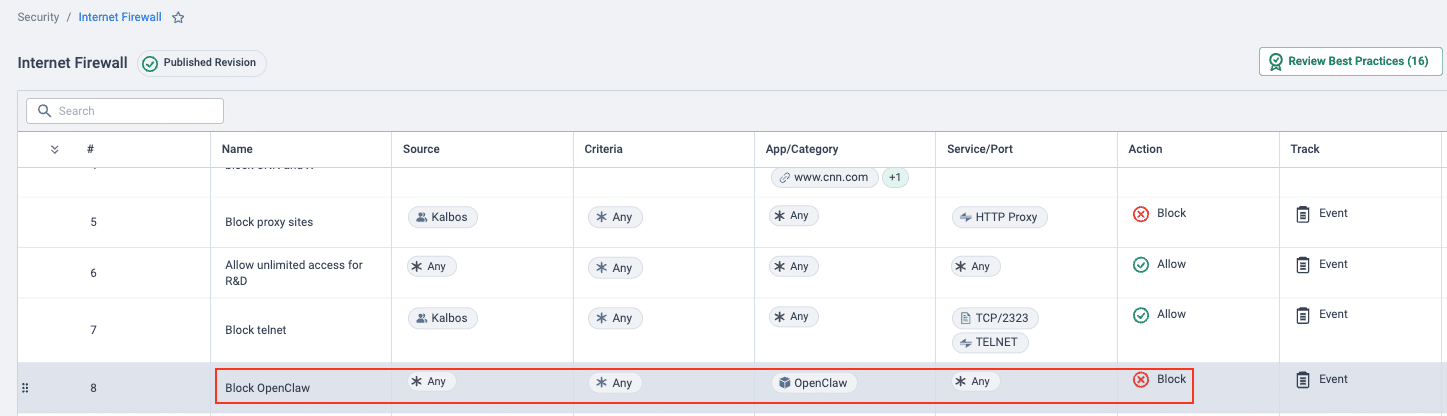

Posture findings help you spot and fix overexposure, but many teams also want an immediate enforcement lever to stop risky access paths altogether. To support that, we added OpenClaw to the Cato App Catalog as a newly signed application, so customers can block OpenClaw directly in the Internet Firewall policy. This gives security teams a fast, centralized way to govern OpenClaw traffic at the edge, whether to prevent unsanctioned usage, enforce a phased rollout, or reduce exposure while tightening Remote Port Forwarding rules.
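In an ordered, first-match policy, rule placement decides whether an application block ever takes effect. The Python sketch below models such a policy generically to show why an OpenClaw block rule needs to sit above broader allow rules, and how a narrow exception for a pilot group can support a phased rollout. The rule fields, group names, and default action are hypothetical assumptions; this is not the Cato Internet Firewall engine or its configuration schema, and the real rule is created in the platform itself.

```python
"""Sketch: ordered, first-match firewall evaluation with an app-based block rule.

Generic model with hypothetical fields; not the Cato Internet Firewall engine.
"""
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class FirewallRule:
    name: str
    action: str                       # "allow" or "block"
    app: Optional[str] = None         # None matches any application
    user_group: Optional[str] = None  # None matches any user group


@dataclass(frozen=True)
class Flow:
    user_group: str
    app: str  # application identified by its signature


def evaluate(rules, flow, default_action="block"):
    """Return (action, rule name) for the first rule that matches the flow."""
    for rule in rules:
        app_matches = rule.app is None or rule.app == flow.app
        group_matches = rule.user_group is None or rule.user_group == flow.user_group
        if app_matches and group_matches:
            return rule.action, rule.name
    # Assumption: traffic matching no rule falls through to a default action.
    return default_action, "default"


policy = [
    FirewallRule("Allow OpenClaw pilot", "allow", app="OpenClaw", user_group="ai-pilot"),
    FirewallRule("Block OpenClaw", "block", app="OpenClaw"),
    FirewallRule("Allow general web", "allow"),
]

print(evaluate(policy, Flow("finance", "OpenClaw")))   # ('block', 'Block OpenClaw')
print(evaluate(policy, Flow("ai-pilot", "OpenClaw")))  # ('allow', 'Allow OpenClaw pilot')
print(evaluate(policy, Flow("finance", "GitHub")))     # ('allow', 'Allow general web')
```

If “Allow general web” were moved above “Block OpenClaw,” the block would never fire in this model; deleting the pilot rule turns the policy into a full OpenClaw block.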

What you will see in the Cato SASE Platform

In Figure 2, we show the Remote Port Forwarding posture category, “Overly Risky and Permissive AI-Services Exposure,” including an example finding where the posture automatically flags OpenClaw exposure with a Failed status.

Figure 2. RPF posture finding for risky AI-service exposure.

In Figure 3, we show an example Remote Port Forwarding rule that permits a broad source and exposes a port range that includes TCP/18789, which makes the OpenClaw gateway reachable.

Figure 3. Example RPF rule exposing TCP/18789.

In Figure 4, we show the newly signed OpenClaw app added to the App Catalog, including its associated risk level and relevant category. In Figure 5, we show how to create an Internet Firewall rule to block the OpenClaw app.

Figure 4. OpenClaw app entry in the App Catalog.

Figure 5. Internet Firewall rule blocking OpenClaw.

Final Thoughts

OpenClaw is the lesson, not the exception. As agentic AI services proliferate, exposure can appear faster than traditional governance cycles can detect and correct it. That is why Cato combines two practical levers that work together. First, the autonomous posture helps you see and fix real exposure by identifying overly permissive Remote Port Forwarding rules that unintentionally publish high-risk AI service ports, and then guiding you to narrow each rule to the minimum required access. Second, the newly signed OpenClaw app in the App Catalog lets you block proactively with an Internet Firewall rule that prevents OpenClaw traffic from the outset, whether to stop unsanctioned usage or to control a rollout. Together, visibility and enforcement give customers a straightforward way to govern OpenClaw and similar AI services with speed, consistency, and much less guesswork.