VoIP, DiffServ, and QoS: Don’t Be Held Captive by Old School Networking

We frequently talk to organizations who are enthusiastically searching for alternatives to their old and tired MPLS and IPsec networks. They’re ready to realize the benefits of a new SASE infrastructure but remain constrained by their old beliefs about network engineering.

Last year, for example, we spoke to an organization that wanted to replace its legacy IPsec network with something that would provide a better level of service for voice traffic. It’s not unusual for people to approach us with this sort of request; after all, Cato provides extensive Unified Communications (UC) and UCaaS optimization. But this time there was a twist: the customer insisted the solution preserve Differentiated Services Code Point (DSCP) bits across the middle mile.

Back to Networking School

For those of us who earned our engineering degrees when hairs were a bit darker and Corona only meant something around the sun, Differentiated Services (or DiffServ for short) emerged in the late ’90s as an early form of network-based quality of service (QoS). It redefined the old ToS byte in the header of IPv4 packets, using its upper six bits to carry a DSCP value proclaiming the packet’s relative importance and providing suggestions for how to handle it.
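As a rough illustration of that bit layout, the sketch below splits the 8-bit DS field (the former ToS byte) into its six-bit DSCP value and the two low-order bits, which were later assigned to Explicit Congestion Notification. The function name is ours, purely for illustration.

```python
def split_ds_field(ds_byte: int) -> tuple[int, int]:
    """Split the 8-bit DS field (the old IPv4 ToS byte) into (dscp, ecn)."""
    if not 0 <= ds_byte <= 0xFF:
        raise ValueError("DS field must fit in one byte")
    dscp = ds_byte >> 2   # upper six bits carry the codepoint
    ecn = ds_byte & 0b11  # lower two bits are the ECN field
    return dscp, ecn


# Expedited Forwarding (DSCP 46), commonly used for voice, is 0xB8 on the wire.
dscp, ecn = split_ds_field(0xB8)
print(dscp, ecn)  # 46 0
```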

End-to-end QoS with DSCP requires customers to configure their senders and access switches to recognise different types of traffic and mark packets with the correct DSCP values. They then need to configure all intermediate network equipment with the correct queuing and congestion control commands to achieve the desired effect.
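On the sending side, the marking itself can be as small as one socket option. This is a minimal sketch, assuming a Linux-like host where Python exposes `socket.IP_TOS`. The helper names are hypothetical, and the queuing configuration on every intermediate device remains a separate job.

```python
import socket

EF = 46  # Expedited Forwarding, the codepoint commonly used for voice media


def dscp_to_tos(dscp: int) -> int:
    """Shift a six-bit DSCP value into the upper bits of the ToS/DS byte."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0-63")
    return dscp << 2


def open_marked_udp_socket(dscp: int = EF) -> socket.socket:
    """Return a UDP socket whose outgoing IPv4 packets request the given DSCP."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp_to_tos(dscp))
    return sock
```

Setting the option only expresses a request. Whether the value survives depends on every device between sender and destination.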

It’s a lot of time spent driving multiple CLIs to produce an outcome that is highly resistant to contemporary concepts of application classification, identity awareness, flexibility and visibility. It’s not hard to see why DSCP struggled to gain real-world acceptance outside the IP telephony space.

The organization reasoned that passing DSCP bits across the middle mile would preserve QoS and so ensure voice quality. By the time the company came to Cato, they had already rejected several solutions that claimed, in theory, to pass DSCP without interference. Those solutions zeroed out the DSCP field somewhere between sender and destination, leading to a noticeable (and negative) impact on voice call quality.

Cato Brings a More Effective, Simpler Approach to QoS

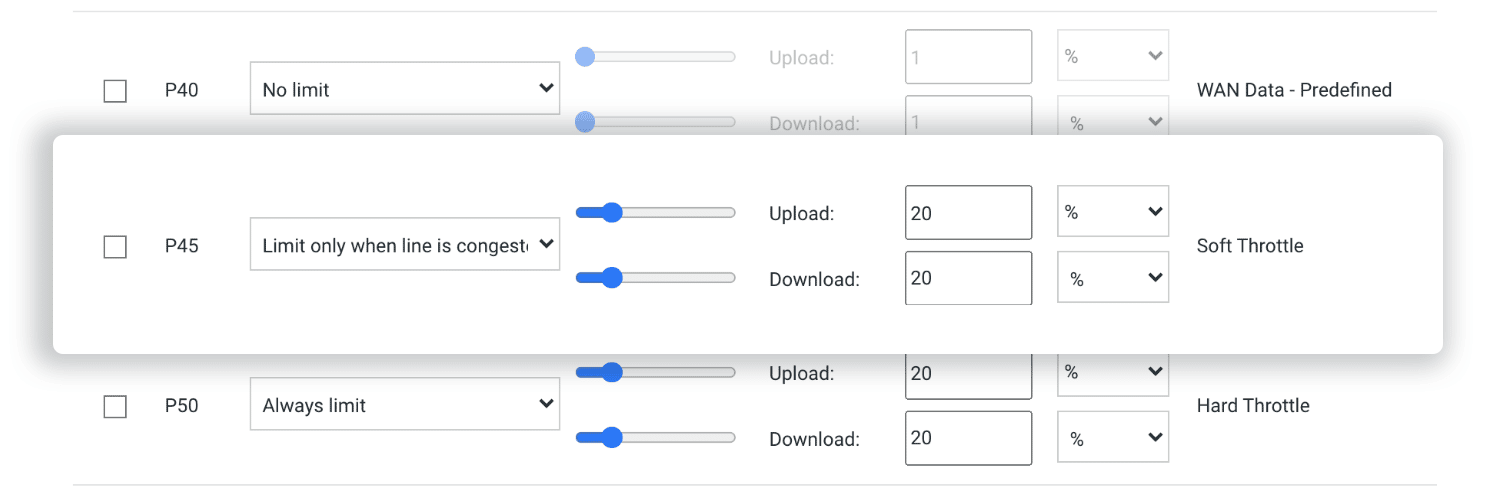

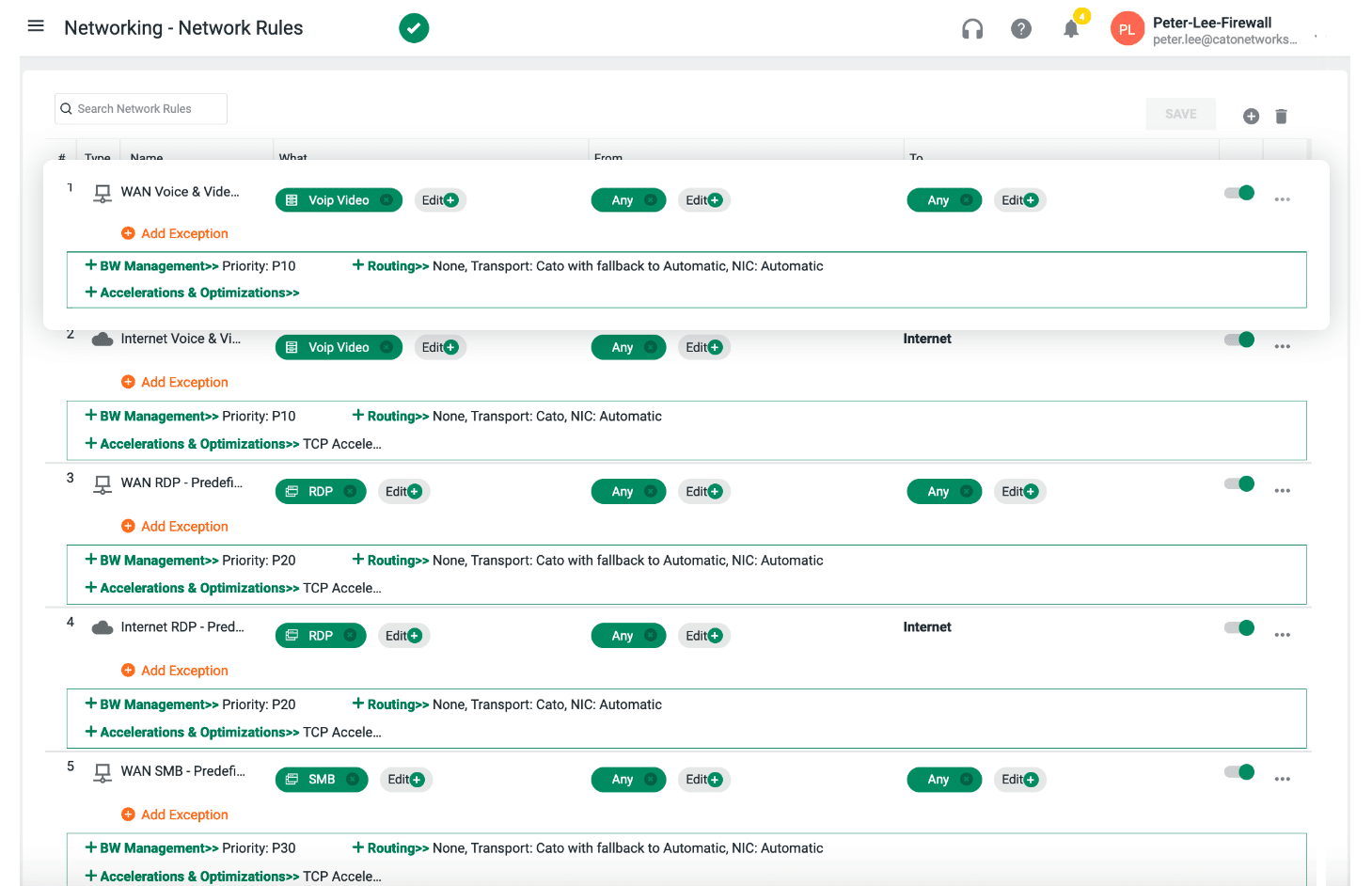

Cato can support end-to-end DiffServ, but we prefer a more modern and much simpler way. Instead of playing with DiffServ bits, our customers define bandwidth classes, each with a numerical priority and limits. Each bandwidth rule details the priority level and congestion behaviour – no limit, limit only when the line is congested, or always limit – together with relative or absolute values for rate limiting of upstream and downstream traffic.

Customers then allocate traffic to those bandwidth classes in their network rules. That’s it. There are no bits to set or devices to configure. Once mapped, voice is prioritized end to end. We have many, many companies taking this approach. Even UCaaS leaders, like RingCentral, have adopted Cato’s SASE platform.
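To make the idea concrete, here is a minimal sketch of how bandwidth classes and network rules could be modelled. It is not Cato’s configuration schema or API: every name, the "lower number is served first" convention, and the single cap (rather than separate upstream and downstream values) are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class LimitMode(Enum):
    NO_LIMIT = "no limit"
    WHEN_CONGESTED = "limit only when line is congested"
    ALWAYS = "always limit"


@dataclass(frozen=True)
class BandwidthClass:
    name: str
    priority: int                          # assumption: lower number is served first
    mode: LimitMode = LimitMode.NO_LIMIT
    limit_percent: Optional[float] = None  # relative cap, percent of link rate
    limit_mbps: Optional[float] = None     # absolute cap

    def cap_mbps(self, link_mbps: float, congested: bool) -> Optional[float]:
        """Return the rate cap in Mbps, or None when traffic is not capped."""
        if self.mode is LimitMode.NO_LIMIT:
            return None
        if self.mode is LimitMode.WHEN_CONGESTED and not congested:
            return None
        if self.limit_mbps is not None:
            return min(self.limit_mbps, link_mbps)
        if self.limit_percent is not None:
            return link_mbps * self.limit_percent / 100.0
        return None


VOICE = BandwidthClass("voice", priority=10)
BULK = BandwidthClass("bulk", priority=90,
                      mode=LimitMode.WHEN_CONGESTED, limit_percent=20)
DEFAULT = BandwidthClass("default", priority=50)

# Hypothetical network rules: application name -> bandwidth class.
NETWORK_RULES = {"voip": VOICE, "file-transfer": BULK}


def class_for(app: str) -> BandwidthClass:
    """Look up the bandwidth class for an application, falling back to DEFAULT."""
    return NETWORK_RULES.get(app, DEFAULT)
```

In this model, `class_for("file-transfer").cap_mbps(100.0, congested=True)` caps bulk traffic at 20 Mbps only while the line is congested, and voice is never capped.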

And to make configuration even easier, Cato provisions each account with a starting set of bandwidth classes and network rules based on the most common customer usage, such as prioritizing voice and video over file transfers and web browsing, or prioritizing WAN over Internet traffic.

Although Cato’s approach to QoS supersedes DSCP, we still support its use. We can always use DSCP as a selector for allocating traffic to a particular bandwidth class. Customers who mark their VoIP traffic with DiffServ will see those traffic classes mapped to the proper bandwidth class. We can also maintain DSCP codepoints across the middle mile by disabling some of our more advanced network optimisation features. After we proved this to the organization with network captures and a short trial, they went ahead with the Cato purchase.
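Using DSCP as a selector amounts to a lookup from codepoint to bandwidth class. The sketch below shows the idea with two standard codepoints, EF (46) and AF41 (34). The class names and the mapping itself are hypothetical and are not taken from a Cato configuration.

```python
# Standard codepoints: EF = 46 (RFC 3246), AF41 = 34 (RFC 2597).
DSCP_TO_CLASS = {
    46: "voice",  # Expedited Forwarding
    34: "video",  # Assured Forwarding 41
}


def class_for_dscp(dscp: int, default: str = "best-effort") -> str:
    """Pick a bandwidth class name from a packet's six-bit DSCP value."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0-63")
    return DSCP_TO_CLASS.get(dscp, default)
```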

Great Voice Quality Without DiffServ

Now here’s the rub. Recently I revisited this customer’s configuration when another organization also asked us about DSCP. The irony? For all their insistence on preserving DSCP codepoints, the customer was not taking advantage of this capability. I could see plenty of DSCP markers entering their Cato tunnels at the source, but very little DSCP leaving the Cato tunnels at the destination.

At the same time, the customer raised no support tickets, and a quick check of their analytics screen showed huge volumes of file transfers and software updates, happily sharing the links with their voice calls.

In short, despite not preserving DSCP across the middle mile, voice quality was fine. Why? Because it was being cared for by Cato QoS, not DSCP. They’d moved from the old way to the new way of thinking without even realizing it.

Don’t Be Constrained by Old-School Thinking

Cato Cloud does far more than just prioritize packets and send them to a static next-hop IP. Our software steers network flows via the best-performing links at that moment, accounting for factors such as packet loss, latency, and jitter. Cato’s approach is what true QoS should be – a tight coupling of application performance requirements with network performance SLAs.
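One simple way to picture this kind of steering is a cost function over live link measurements. The following is a sketch only: the weights, field names, and the linear scoring are our assumptions for illustration, not a description of Cato’s actual path-selection logic.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class LinkStats:
    name: str
    loss_pct: float    # packet loss, percent
    latency_ms: float  # round-trip latency
    jitter_ms: float   # latency variation


def link_cost(stats: LinkStats,
              loss_weight: float = 50.0,
              latency_weight: float = 1.0,
              jitter_weight: float = 2.0) -> float:
    """Combine loss, latency and jitter into one cost; lower is better."""
    return (loss_weight * stats.loss_pct
            + latency_weight * stats.latency_ms
            + jitter_weight * stats.jitter_ms)


def pick_link(links: Iterable[LinkStats]) -> LinkStats:
    """Return the link with the lowest cost according to current measurements."""
    candidates = list(links)
    if not candidates:
        raise ValueError("no links available")
    return min(candidates, key=link_cost)


fiber = LinkStats("fiber", loss_pct=0.0, latency_ms=30.0, jitter_ms=2.0)
lte = LinkStats("lte", loss_pct=1.0, latency_ms=20.0, jitter_ms=10.0)
print(pick_link([fiber, lte]).name)  # fiber
```

Because the choice is re-evaluated against fresh measurements, a link that starts dropping packets loses its place without anyone touching a router.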

The cloud changed our notions around servers and storage. SASE clouds do the same for networking. Organizations seeking a better alternative to their legacy MPLS/IPsec networks need to let go of “old-school” approaches that were self-evident when all we had to do was connect offices over a private network.

Today, with enterprises needing to connect users and resources everywhere, we need to expand how we think about networking and security. We need to understand the problem – preserving VoIP quality – but remain open to new solutions. Only then will we truly benefit from this new shift embodied by the world of SASE.

To learn more about how Cato improves VoIP, UC, and UCaaS, check out this case study with RingCentral.