
The Platform Matters, Not the Platformization

Cyber security investors, vendors and the press are abuzz with a new concept introduced by Palo Alto Networks (PANW) in their recent earnings announcement and guidance cut: Platformization. PANW rightly wants to address the “point solutions fatigue” experienced by enterprises due to the “point solution for point problem” mentality that has been prevalent in cyber security over the years. Platformization, claims PANW, is achieved by consolidating current point solutions into PANW platforms, thus reducing the complexity of dealing with multiple solutions from multiple vendors. To ease the migration, PANW offers customers free use of its products for up to six months while the contracts for the displaced products expire.

We couldn’t agree more with the need for point solution convergence to address the challenges customers face in sustaining their disjointed networking and security infrastructure. Cato was founded nine years ago with the mission to build a platform to converge multiple networking and security categories. Today, over 2,200 enterprise customers enjoy the transformational benefits of the Cato SASE Cloud platform that created the SASE category.

Does PANW have a SASE platform? Many legacy vendors, including PANW and most notably Cisco, have grown through M&A establishing a portfolio of capabilities and a business one-stop-shop. Integrating these acquisitions and OEMs into a cohesive and converged platform is, however, extremely difficult to do across code bases, form factors, policy engines, data lakes, and release cycles. What PANW has today is a collection of point solutions with varying degrees of integration that still require a lot of complex care and feeding from the customer. In my opinion, PANW’s approach is more “portfolio-zation” than “platformization,” but I digress.

[boxlink link="https://www.catonetworks.com/resources/the-complete-checklist-for-true-sase-platforms/"] The Complete Checklist for True SASE Platforms | Download the eBook [/boxlink]

The solution to the customer-point-solution-malaise lies with a true platform architected from the ground up to abstract complexity. When customers look at the Cato platform, they see a way to transform how their IT teams secure and optimize the business. Cato provides a broad set of security capabilities, governed by one global policy engine, autonomously maintained for maximum availability and scalability, peak performance, and optimal security posture, and available anywhere in the world. Delivering this IT “superpower” requires a platform, not “platformization.”

For several years, we have been offering customers ways to ease the migration from their point solutions towards a better outcome. We have displaced many point solutions in most of our customers including MPLS services, firewalls, SWG, CASB/DLP, SD-WAN, and remote access solutions across all vendors – including PANW. Customers make this strategic SASE transformation decision not primarily because we incentivize them, but because they understand the qualitative difference between the Cato SASE Platform and their current state.

PANW can engage customers with their size and brand, not with a promise to truly change their reality. If you want to see how a true SASE platform transforms IT functionally, operationally, commercially, and even personally – take Cato for a test drive.


The Cato Journey – Bringing SASE Transformation to the Largest Enterprises

One of the observations I sometimes get from analysts, investors, and prospects is that Cato is a mid-market company. They imply that we are creating solutions that are simple and affordable, but don’t necessarily meet stringent requirements in scalability, availability, and functionality.

Here is the bottom line: Cato is an enterprise software company. Our mission is to deliver the Cato SASE experience to organizations of all sizes, support mission critical operations at any scale, and deliver best-in-class networking and security capabilities.

The reason Cato is perceived as a mid-market company is a combination of our mission statement which targets all organizations, our converged cloud platform that challenges legacy blueprints full of point solutions, our go-to-market strategy that started in the mid-market and went upmarket, and the IT dynamics in large enterprises. I will look at these in turn.

The Cato Mission: World-class Networking and Security for Everyone

Providing world class networking and security capabilities to customers of all sizes is Cato’s reason-for-being. Cato enables any organization to maintain top notch infrastructure by making the Cato SASE Cloud its enterprise networking and security platform. Our customers often struggled to optimize and secure their legacy infrastructure where gaps, single points of failure, and vulnerabilities create significant risks of breach and business disruption.

Cato SASE Cloud is a globally distributed cloud service that is self-healing, self-maintaining, and self-optimizing, and such benefits aren’t limited to resource-constrained mid-market organizations. In fact, most businesses will benefit from a platform that is autonomous and always optimized. It isn’t just the platform, though. Cato’s people, processes, and capabilities, which are focused on cloud service availability, optimal performance, and maximal security posture, significantly exceed those of most enterprises.

Simply put, we partner with our customers in the deepest sense of the word. Cato shifts the burden of keeping the lights on for a fragmented and complex infrastructure from the customer to us, the SASE provider. This grunt work does not add business value; it is just a “cost of doing business.” Therefore, it was an easy choice for mid-market customers that could not afford to waste resources on maintaining the infrastructure. Ultimately, most organizations will realize there is simply no reason to pay that price where a proven alternative exists.

The most obvious example of this dynamic of customer capabilities vs. cloud services capabilities is Amazon Web Services (AWS). AWS eliminates the need for customers to run their own datacenters and worry about hosting, scaling, designing, deploying, and building high availability compute and storage. In the early days of AWS, customers used it for non-mission-critical departmental workloads. Today, virtually all enterprises use AWS or similar hyperscalers as their cloud datacenter platforms because they can bring to bear resources and competencies at a global scale that most enterprises can’t maintain.

AWS was never a “departmental” solution, given its underlying architecture. The Cato architecture was built with the same scale, capacity, and resiliency in mind. Cato can serve any organization.

The Cato SASE Cloud Platform: Global, Efficient, Scalable, Available

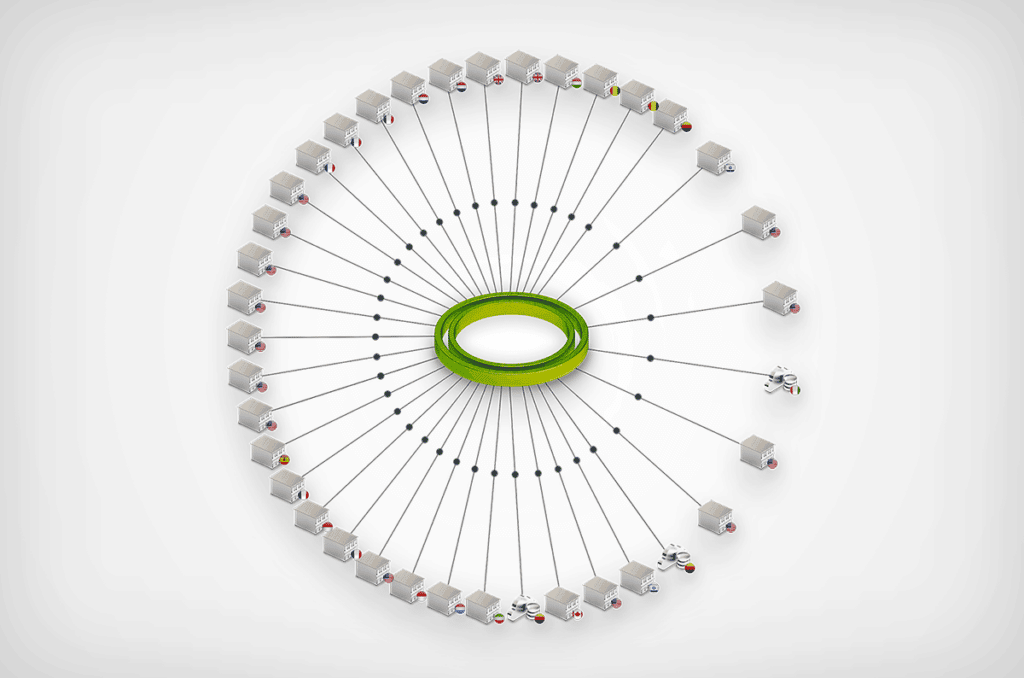

Cato created an all-new cloud-native architecture to deliver networking and security capabilities from the cloud to the largest datacenters and down to a single user. The Cato SASE Cloud is a globally distributed cloud service comprised of dozens of Points of Presence (PoPs). The PoPs run thousands of copies of a purpose-built and cloud-native networking and security stack called the Single Pass Cloud Engine (SPACE). Each SPACE can process traffic flows from any source to any destination in the context of a specific enterprise security policy. The SPACE enforces application access control (ZTNA), threat prevention (NGFW/IPS/NGAM/SWG), and data protection (CASB/DLP) and is being extended to address additional domains and requirements within the same software stack.

Large enterprises expect the highest levels of scalability, availability, and performance. The Cato architecture was built from the ground up with these essential attributes in mind. The Cato SASE Cloud has over 80 compute PoP locations worldwide, creating the largest SASE Cloud in the world. PoPs are interconnected by multiple Tier 1 carriers to ensure minimal packet loss and optimal path selection globally. Each PoP is built with multiple levels of redundancy and excess capacity to handle load surges. Smart software dynamically diverts traffic between PoPs and SPACEs in case of failure to ensure service continuity. Finally, Cato is so efficient that it has recently set an industry record for security processing -- 5 Gbps of encrypted traffic from a single location.

Cato further streamlines SOC and NOC operations with a single management console and a single data lake, providing a unified and normalized platform for analytics, configuration, and investigations. Simple and streamlined is not a mid-market attribute. It is an attribute of an elegant and seamless solution.

Go to Market: The Mid-Market is the Starting Point, Not the Endgame

Cato is not a typical cybersecurity startup that addresses new and incremental requirements. Rather, it is a complete rethinking and rearchitecting of how networking and security should be delivered. Simply put, Cato is disrupting legacy vendors by delivering a new platform and a completely new customer experience that automates and streamlines how businesses connect and secure their devices, users, locations, and applications.

Prospects are presented with a tradeoff: continue using legacy technologies that consume valuable IT time and resources spent on integration, deployment, scaling, upgrades, and maintenance, or adopt the new Cato paradigm of simplicity, agility, always on, and self-maintaining. This is not an easy decision. It means rethinking their networking and security architecture. Yet it is precisely the availability of the network and the ability to protect against threats that impact the enterprise’s ability to do business.

[boxlink link="https://www.catonetworks.com/resources/cato-sase-vs-the-sase-alternatives/"] Cato SASE vs. The SASE Alternatives | Download the eBook [/boxlink]

With that backdrop, Cato found its early customers at the “bottom” of the mid-market segment. These customers had to balance the risk of complexity and resource scarcity against the risk of a new platform. They often lacked the internal budgets and resources to meet their needs with existing approaches; they were open to considering another way.

Since then, seven years of technical development in conjunction with widespread market validation of single-vendor SASE as the future of enterprise networking and security have led Cato to move up market and acquire Fortune 500 and Global 1000 enterprises at 100x the investment of early customers – on the same architecture. In the process, Cato replaced hundreds of point products, both appliances and cloud services, from all the leading vendors to transform customers’ IT infrastructure.

Cato didn’t invent this strategy of starting with smaller enterprises and progressively addressing the needs of larger enterprises. Twenty years ago, a small security startup, Fortinet, adopted this same go-to-market approach with low-cost firewall appliances, powered by custom chips, targeting the SMB and mid-market segments. The company then proceeded to move up market and is now serving the largest enterprises in the world. While we disagree with Fortinet on the future of networking and security and the role the cloud should play in it, we agree with the go-to-market approach and expect to end in the same place.

Features and Roadmap: Addressing Enterprise Requirements at Cloud Speed

When analysts assess Cato’s platform, they do it against a list of capabilities that exist in other vendors’ offerings. But this misses the benefit of hindsight. All too often, so-called “high-end features” had been built for legacy conditions, specific edge cases, or particular customer requirements that are now obsolete or have low value. In networking, for example, compression, de-duplication, and caching aren’t aligned with today’s requirements where traffic is compressed, dynamic, and sent over connections with far more capacity than was ever imagined when WAN optimization was first developed.

On the other hand, our unique architecture allows us to add new capabilities very quickly. Over 3,000 enhancements and features were added to our cloud service last year alone. Those features are driven by customers and cross-referenced with what analysts use in their benchmarks. For that very reason, we run a customer advisory board and conduct detailed roadmap and functional design reviews with dozens of customers and prospects. A case in point is the introduction of record-setting security processing -- 5 Gbps of encrypted traffic from a single location. No other vendor has come close to that limit.

The IT Dynamics in Large Enterprises: Bridging the Networking and Security Silos

Many analysts are pointing towards enterprise IT structure and buying patterns as a blocker to SASE adoption. IT organizations must collaborate across networking and security teams to achieve the benefits of a single converged platform. While smaller IT organizations can do it more easily, larger IT organizations can achieve the same outcome with the guidance of visionary IT leadership. It is up to them to realize the need to embark on a journey to overcome the resource overload and skills scarcity that slows down their teams and negatively impacts the business. Across multiple IT domains, from datacenters to applications, enterprises partner with the right providers that through a combination of technology and people help IT to support the business in a world of constant change.

Cato’s journey upmarket proves that point. As we engage and deploy SASE in larger enterprises, we prove that embracing networking and security convergence is more a matter of imagining what is possible. With our large enterprise customers' success stories and the hard benefits they realized, the perceived risk of change is diminished and a new opportunity to transform IT emerges.

The Way Forward: Cato is Well Positioned to Serve the Largest Enterprises

Cato has reimagined what enterprise networking and security should be. We created the only true SASE platform that delivers the seamless and fluid experience customers got excited about when SASE was first introduced. We have matured the Cato SASE architecture and platform for the past eight years by working with customers of all sizes to make Cato faster, better, and stronger. We have the scale, the competencies, and the processes to enhance our service, and a detailed roadmap to address evolving needs and requirements. You may be a Request for Enhancement (RFE) away from seeing how SASE, Cato’s SASE, can truly change the way your enterprise IT enables and drives the business.


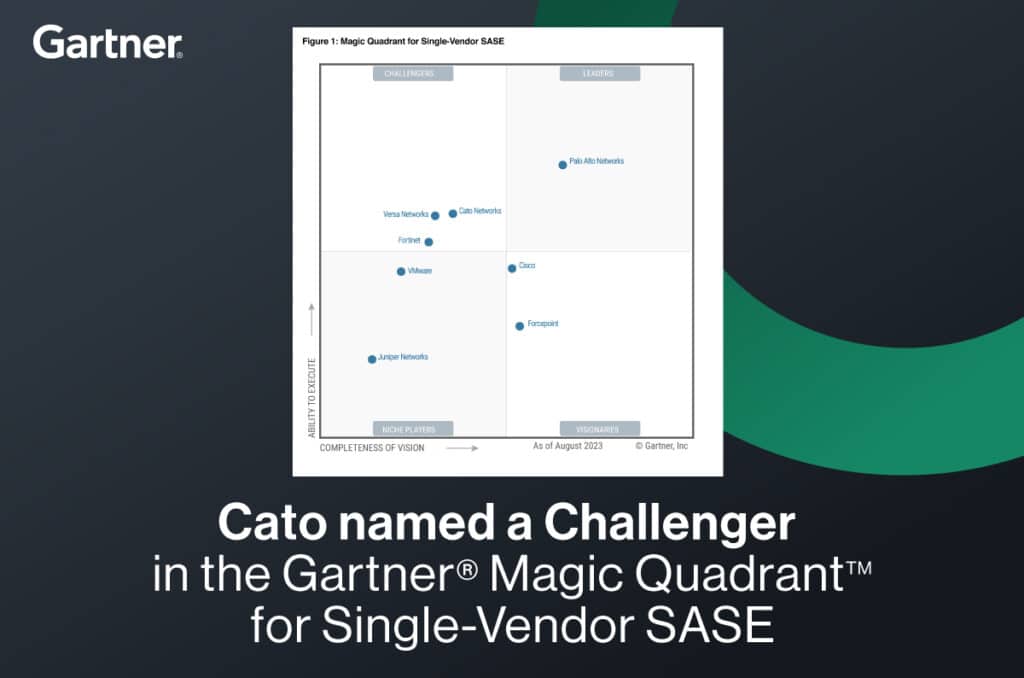

The Magic Quadrant for Single Vendor SASE and the Cato SASE Experience

Customer experience isn’t just an important aspect of the SASE market, it is its essence. SASE isn’t about groundbreaking features. It is about a new way to deliver and consume established networking and security features and to solve, once and for all, the complexity and risks that have been plaguing IT for so long.

This is uncharted territory for customers, channels, and analyst firms. The “features” benchmark is clear: whoever has the most features created over the past two decades in CASB, SWG, NGFW, SD-WAN, and ZTNA – is the “best.” But with SASE, more features aren’t necessarily better if they can’t be deployed, managed, scaled, optimized, or used globally in a seamless way. Rather, it is the “architecture” that creates the customer experience that is the essence of SASE: having the “features” delivered anywhere, at scale, with full resiliency and optimal security posture, to any location, user, or application. This calls for a global cloud-native service architecture that is converged, secure, self-maintaining, self-healing, and self-optimizing.

The SASE architecture, built from the ground up and not through duct taping products from different generations and acquisitions, is the basis for the superior SASE experience. It is seamlessly managed by a single console (really, just one) to make management and configuration consistent, easy, and intuitive. Users create a rich unified policy using the full access context to drive prevention and detection decisions. A single data lake is fed with all events, decisions, and contexts across all domains for streamlined end-to-end visibility and analysis.

It is important to understand this “features” vs. “architecture” dichotomy. Imagine ranking any Android phone against an iPhone on any reasonable list of attributes. The Android phones had, for years, better hardware, more features, more flexibility, lower cost, and bigger market share. And yet they have failed to stop Apple since the launch of the iPhone, because of that elusive quality called the “Apple experience.”

[boxlink link="https://www.catonetworks.com/resources/cato-named-a-challenger-in-the-gartner-magic-quadrant-for-single-vendor-sase/"] Cato named a Challenger in Gartner’s Magic Quadrant for Single Vendor-SASE | Get the Report [/boxlink]

Carlsberg called Cato “The Apple of Networking.” Customers understand and value the “Cato SASE Experience” even when our SD-WAN device or converged CASB engine is missing a feature. They know they can get it, if needed, through our high-velocity roadmap that is made possible by our architecture.

What is very hard to do is to build and mature a SASE architecture that is foundational to any SASE feature. To achieve that, Cato has built the largest SASE cloud in the world with over 80 PoPs. We optimized the service to set a record for SASE throughput from a single location at 5 Gbps with full encryption/decryption and security inspection. We have deployed for massive global enterprises with the most demanding real-time and mission-critical workloads with sustained optimal performance and security posture.

And the “features”? We roll them out at a pace of 3,000 enhancements per year, on a bi-weekly schedule, without compromising availability, security, or the customer experience. Cato is expanding its SASE platform outside the core network security market boundaries and into adjacent categories such as endpoint protection, extended detection and response, and IoT that can benefit from the same streamlined architecture.

Cato delivers the true SASE experience. That powerful simplicity customers have been longing for.

Try us.

Cato. We are SASE.

*Gartner, Magic Quadrant for Single-Vendor SASE, Andrew Lerner, Jonathan Forest, 16 August 2023

GARTNER is a registered trademark and service mark of Gartner and Magic Quadrant is a registered trademark of Gartner, Inc. and/or its affiliates in the U.S. and internationally and are used herein with permission. All rights reserved. Gartner does not endorse any vendor, product or service depicted in its research publications and does not advise technology users to select only those vendors with the highest ratings or other designation. Gartner research publications consist of the opinions of Gartner’s research organization and should not be construed as statements of fact. Gartner disclaims all warranties, expressed or implied, with respect to this research, including any warranties of merchantability or fitness for a particular purpose.


Cato named a Leader in Forrester’s 2023 Wave for Zero Trust Edge

Today, Forrester released The Forrester Wave™: Zero Trust Edge Solutions, Q3 2023 Report. Zero Trust Edge (ZTE) is Forrester’s name for SASE. We were delighted to be described as the “poster child” of ZTE and SASE and be named a “Leader” in the report.

To date, thousands of enterprises with tens of thousands of locations, and millions of users, run on Cato. The maturity, scale, and global footprint of Cato’s SASE platform enables us to serve the most demanding and mission-critical workloads in industries such as manufacturing, engineering, retail, and financial services. Cato’s record-setting multi-gig SASE processing capacity extends the full set of our capabilities to cloud and physical datacenters, campuses, branch locations, and down to a single user or IoT device.

Cato isn’t just the creator of the SASE category. It is the only pure-play SASE provider that built, from the ground up, the full set of networking and security capabilities delivered as a single, global, cloud service. We created the Cato SASE Cloud eight years ago with the aim to level the complex IT infrastructure playing field. Cato focuses on simplifying, streamlining, and hardening networking and security infrastructure to enable organizations of all sizes to secure and optimize their business regardless of the resources and skills at their disposal. This is, at its core, the promise of SASE.

Cato has SASE DNA. As Forrester notes, we deliver networking and security as a unified service. The SASE features, however, and the order in which we deliver them are driven by customer demand and the identification of new opportunities to bring the SASE value to new areas of the IT infrastructure.

[boxlink link="https://www.catonetworks.com/resources/the-forrester-wave-zero-trust-edge-solutions/"] Forrester Reveals 2023 ZTE (SASE) Providers | Get the Report [/boxlink]

This “architecture vs. features” trade-off makes assessing SASE providers very tricky. The SASE architecture is a radical departure from the legacy architecture of appliances and point solutions toward converged, cloud-delivered services. SASE incorporates mostly commoditized and well-defined features into this new architecture. In this new market, it is the architecture that sets SASE providers apart as long as they deliver the features a customer actually needs. Simply put, it is the SASE architecture that drastically improves the IT operating model and enables the promised business outcomes.

When we work with customers to evaluate SASE, our focus is always on the IT team and the end-user experience. What they observe is the speed at which we deploy our service through zero touch and self-service light edges, the seamless and intuitive nature of our user interface that exposes an elegant underlying design, the global reach of our cloud service, and total lack of need for difficult integrations.

Cato is the simplicity customers always hoped for because we aren’t a legacy provider that had to play catch up to SASE. All the other co-leaders are appliance companies that were forced to build a cloud service to participate in SASE. They market SASE but deliver physical or virtual appliances placed in someone else’s cloud.

We are committed to helping customers use SASE to achieve security and networking prowess previously available only to the largest organizations. Cato’s SASE will change the way your IT team supports the business, drives the business, and is perceived by the business.

Start your journey today, with the true SASE leader.

Cato. We are SASE.


SASE is not SD-WAN + SSE

SASE = SD-WAN + SSE. This simple equation has become a staple of SASE marketing and thought leadership. It identifies two elements that underpin SASE, namely the network access technology (SD-WAN) and secure internet access (Security Service Edge (SSE)).

The problem with this equation is that it is simply wrong. Here is why.

The “East-West” WAN traffic visibility gap: SASE converges two separate disciplines: the Wide Area Network and Network Security. It requires that all WAN traffic be inspected. However, SSE implementations typically secure “northbound” traffic to the Internet and have no visibility into WAN traffic that goes “east-west” (for example, between a branch and a datacenter). Therefore, legacy technologies like network firewalls are still needed to close the visibility and enforcement gap for that traffic.

The non-human traffic visibility gap: Most SSE implementations are built to secure user-to-cloud traffic. While an important use case, it doesn’t cover traffic between applications, services, devices, and other entities where installing agents or determining identities is impossible. Extending visibility and control to all traffic regardless of source and destination requires a separate network security solution.

The private application access (ZTNA) vs secure internet access (SIA) gap: SSE solutions are built to deliver SIA where there is no need to control the traffic end-to-end. A proxy would suffice to inspect traffic on its way to cloud applications and the Web. ZTNA introduces access to internal applications, which are not visible to the Internet and are not necessarily accessed via Web protocols. This requires a different architecture (the “application connector”) where traffic goes through a cloud broker and is not inspected for threats. Extending inspection to all application traffic across all ports and protocols requires a separate network security solution.
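The three gaps above can be made concrete with a small sketch. Everything in it is an illustrative assumption rather than a description of any vendor's product: the `Flow` fields, the sample flows, and the coverage rule, which models an SSE-only deployment as inspecting only agent-identified user traffic headed to the Internet over Web protocols.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    source: str         # e.g. "user", "branch", "iot-device" (hypothetical labels)
    destination: str    # "internet", or "wan" for an internal site or private app
    has_agent: bool     # True if an identity-bearing agent runs on the source
    web_protocol: bool  # True if the flow uses HTTP/HTTPS


def inspected_by_sse_only(flow: Flow) -> bool:
    """Assumed model: a proxy-based SSE sees agent-identified Web traffic to the Internet."""
    return flow.destination == "internet" and flow.has_agent and flow.web_protocol


def inspected_by_cloud_network(flow: Flow) -> bool:
    """Assumed model: every flow that traverses the cloud network is inspected."""
    return True


# Hypothetical sample flows, one per case discussed in the text.
FLOWS = [
    Flow("user", "internet", has_agent=True, web_protocol=True),         # secure internet access
    Flow("branch", "wan", has_agent=False, web_protocol=False),          # east-west WAN traffic
    Flow("iot-device", "internet", has_agent=False, web_protocol=True),  # non-human traffic
    Flow("user", "wan", has_agent=True, web_protocol=False),             # private application access
]


def coverage_gaps(flows):
    """Return the flows that the SSE-only model leaves uninspected."""
    return [flow for flow in flows if not inspected_by_sse_only(flow)]


if __name__ == "__main__":
    for flow in coverage_gaps(FLOWS):
        print(f"uninspected under the SSE-only model: {flow.source} -> {flow.destination}")
```

Under these assumptions, only the first sample flow is covered by the SSE-only model, while the cloud network model covers all four.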

What is missing from the equation? The answer is: a cloud network.

By embedding the security stack into a cloud network that connects all sources and destinations, all traffic that traverses the WAN is subject to inspection. The cloud network is what enables SASE to achieve 360 degrees visibility into traffic across all sources, destinations, ports, and protocols, anywhere in the world. This traffic is then inspected, without compromise, by all SSE engines across threat prevention and data security. This is what we call SSE 360.

[boxlink link="https://www.catonetworks.com/resources/sase-vs-sd-wan-whats-beyond-security/"] SASE vs SD-WAN: What’s Beyond Security | Download the eBook [/boxlink]

There are other major benefits to the cloud network. The SASE PoPs aren’t merely securing traffic to the Internet but are interconnected to create a global backbone. The cloud network can apply traffic optimization in real time, including calculating the best global routes across PoPs, egressing traffic close to the target application instead of using the public Internet, and applying acceleration algorithms to maximize end-to-end throughput. All while securing all traffic against threats and data loss. SASE not only secures all traffic but also optimizes all traffic.

With SSE 360 embedded into a cloud network, the role of SD-WAN is to be the on-ramp to the cloud network for both physical and virtual locations. Likewise, ZTNA agents provide the on-ramp for individual users’ traffic. In both cases, the security and optimization capabilities are delivered through the cloud. This cloud-first/thin-edge holistic design is the SASE architecture enterprises have been waiting for.

Cloud networks are an essential pillar of SASE. They exist in certain SASE solutions that use the Internet or a third-party cloud network, such as those available through AWS, Azure, or Google. While these cloud networks provide global connectivity to the SASE solution, they are decoupled from the SSE layer and act as a “black box” where the optimizations of routing, traffic, and application access, and the ability to reach any geographical region, are outside the control of the SASE solution provider. Even so, for the reasons mentioned, having a cloud network is preferable to having no cloud network at all.
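As a rough illustration of what calculating the best route across PoPs can mean, the sketch below runs Dijkstra's shortest-path algorithm over a latency-weighted graph. The PoP names, the latency figures, and the choice of latency as the only metric are assumptions made for the example; the text does not say how any real backbone selects routes.

```python
import heapq

# Hypothetical link latencies in milliseconds between PoPs (illustrative values only).
BACKBONE = {
    "frankfurt": {"london": 12, "new-york": 85, "singapore": 160},
    "london": {"frankfurt": 12, "new-york": 70},
    "new-york": {"london": 70, "frankfurt": 85, "tokyo": 150},
    "singapore": {"frankfurt": 160, "tokyo": 70},
    "tokyo": {"singapore": 70, "new-york": 150},
}


def best_route(graph, ingress, egress):
    """Return (total_latency_ms, path) for the lowest-latency route, or None if unreachable."""
    queue = [(0, ingress, [ingress])]  # (latency so far, current PoP, path taken)
    settled = set()
    while queue:
        latency, pop, path = heapq.heappop(queue)
        if pop == egress:
            return latency, path
        if pop in settled:
            continue
        settled.add(pop)
        for neighbor, link_ms in graph.get(pop, {}).items():
            if neighbor not in settled:
                heapq.heappush(queue, (latency + link_ms, neighbor, path + [neighbor]))
    return None


if __name__ == "__main__":
    print(best_route(BACKBONE, "london", "tokyo"))
```

With these sample numbers, traffic entering at London and egressing at Tokyo goes via New York at 220 ms, ahead of the 242 ms path through Frankfurt and Singapore. A production backbone would presumably weigh live measurements such as packet loss and jitter as well, which this sketch leaves out.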

SASE needs an updated equation. SASE = SD-WAN + Cloud Network + SSE. Make sure you choose the right architecture on your way to full digital transformation.


ChatGPT and Cato: Get Fish, Not Tackles

ChatGPT is all the rage these days. Its ability to magically produce coherent and typically well-written, essay-length answers to (almost) any question is simply mind-blowing. Like any marketing department on the planet, we wanted to “latch onto the news.” How can we connect Cato and ChatGPT?

Our head of demand generation, Merav Keren, made an interesting comparison between ChatGPT and Google Search. In a nutshell, Google gives you the tools to craft your own answer, ChatGPT gives you the outcome you seek, which is the answer itself. ChatGPT provides the fish, Google Search provides the tackles.

How does this new paradigm translate into SASE, networking, and security? We have discussed at length the topic of outcomes vs tools. The emergence of ChatGPT is an opportunity to revisit this topic.

Historically, networking and network security solutions provided tools for engineers to design and build their own “solutions” to achieve a business outcome. In the pre-cloud era, the two alternatives on the table were Do-it-Yourself or pay someone else to Do-it-for-You. The tools approach was heavily dependent on budget, people, and skills to design, deploy, manage, and adjust the tools comprising the solution to make sure they continuously deliver the business outcome.

Early attempts to build a “self-driving” infrastructure to sustain desired outcomes didn’t take off. For example, intent-based networking was created to enable engineers to state a desired business outcome and let the “network” implement low-level policies to achieve it. Other attempts like SD-WAN fared better because the scope of desired outcomes was more limited and the infrastructure more uniform and coherent.

[boxlink link="https://www.catonetworks.com/resources/outcomes-vs-tools-why-sase-is-the-right-strategic-choice-vs-legacy-appliances/?utm_medium=blog_top_cta&utm_campaign=features_vs_outcomes"] The Pitfalls of SASE Vendor Selection: Features vs. Strategic Outcomes | Download the White Paper [/boxlink]

Thinking about IT infrastructure as enabling business outcomes became even more elusive as complexity grew with the emergence of digital transformation. Cloud migration and hybrid cloud, SaaS usage proliferation, growing use of remote access, and the expansion of attack surface to IoT have strained the traditional approach of IT solution engineering of applying new tools to address new requirements.

In this age of skills and resource scarcity, IT needs to acquire “outcomes” not mere “tools.”

There is an important distinction here between legacy and modern outcome delivery. Legacy outcome delivery is typically associated with service providers. They use tools to engineer a solution for customers, and then use manpower to maintain and adapt the solution to deliver an agreed upon outcome. To ensure they meet the committed outcomes, customers demand and get SLAs backed by penalties. This business structure silently acknowledges the fact that a service provider is fundamentally using the “same” headcount to achieve an outcome without any fundamental advantage over the customer’s IT. Penalties serve to motivate the service provider to deploy sufficient resources to deliver what the customer is paying for.

Modern outcome delivery is built on cloud native service platforms. It is built with a software platform that can adapt to changes and emerging requirements with minimal human touch. Most engineering goes into enhancing platform capabilities not managing it to specific customer needs.

This is where Cato Networks shines. Once a customer onboards into Cato, our platform is designed to continuously deliver “a secure and optimal access for everyone and everywhere” outcome without the customer having to do anything to sustain that outcome. The Cato SASE Cloud combines extreme automation, artificial intelligence, and machine learning to adapt to infrastructure disruptions, geographical expansion, capacity changes, user-base mobility, and emerging threats. While highly skilled engineers enhance the platform capabilities to seamlessly detect and respond to these changes, they do not get involved in the platform decision making process that is largely self-driving. Simply put, much of the customer experience lifecycle with Cato is fully and truly automated and embodies massive investment in outcome-driven infrastructure that is fully owned by Cato.

What this means is that any customer that onboards into Cato immediately experiences the networking and security outcomes typical of a Fortune 100 enterprise, in the same way an average content writer could deliver better and faster outcomes when assisted by the outcome driven ChatGPT.

If you want a fresh supply of fish coming your way as “Cato Outcomes”, take us for a test drive. Tackles are included, yet optional.

Gartner Names Top I&O Trends for 2023

Gartner has just issued a press release announcing its Top Trends Impacting Infrastructure and Operations for 2023. Among the six trends that will have significant impact over the next 12 to 18 months, Gartner named the Secure Access Service Edge (SASE), sustainable technology, and heated skills competition.

Below is a discussion of these trends and how they are interrelated.

Secure Access Service Edge (SASE) was created by Gartner in 2019 and has repeatedly been highlighted as a transformative category. According to Gartner’s press release, “SASE is a single-vendor product that is sold as an integrative service which enables digital transformation.” Practically, SASE enables secure, optimal, and resilient access by any user, in any location, to any application. This basic requirement had been fulfilled for years by a collection of point solutions for network security, remote access, and network optimization, and more recently with cloud security and zero trust network access. However, the complexity involved in delivering optimal and secure global access at the scale, speed, and consistency demanded by the business requires a new approach.

Gartner’s SASE proposes a new global, cloud-delivered service that enables secure and optimal access everywhere in the world. Says Gartner analyst Jeffrey Hewitt: “I&O teams implementing SASE should prioritize single-vendor solutions and an integrated approach.” SASE’s innovation is the re-architecture of IT networking and network security to enable IT to support the demands of the digital business.

[boxlink link="https://www.catonetworks.com/resources/inside-look-life-before-and-after-deploying-sase/?utm_medium=blog_top_cta&utm_campaign=before_and_after_sase"] An Inside Look: Life Before and After Deploying a SASE Service | Whitepaper [/boxlink]

This is the tricky part about SASE: while the capabilities, also offered by legacy point solutions, are not new, the platform architecture is brand new. To deliver scalable, resilient, and global secure access that is also agile and fast, SASE must live in the cloud as a single holistic platform.

SASE architecture also has a direct impact on the competition for skills. When built from the ground up as a coherent and converged solution delivered as a service, SASE is both self-maintaining and self-healing. A cloud-native SASE platform delivered “as a service” offloads the infrastructure maintenance tasks from the IT staff. Simply put, a smaller IT team can run a complex networking and network security infrastructure when supported by a cloud-native SASE provider, such as Cato Networks. The SASE provider maintains optimal security posture against emerging threats, seamlessly upgrades the platform with new capabilities, and reduces the time to detect, troubleshoot, and fix problems. Using the right SASE platform, customers will also alleviate the pressure to acquire the right skills to support and maintain individual point solutions, and the resources needed to “keep the lights on” by maintaining a fragmented infrastructure in perfect alignment and optimal posture.

Beyond skills, SASE also has a positive impact on technology sustainability. A cloud-native SASE service eliminates a wide array of edge appliances including routers, firewalls, WAN optimizers, and more. By moving the heavy lifting of security inspection and traffic optimization to the cloud, network edge footprint and processing requirements will decline, reducing the power consumption, cooling requirements, and environmental impact of edge appliance disposal.

The road to simpler, faster, and secure access starts with a cloud-native, converged, single-vendor SASE. Customers can expect better user experience, improved security posture, agile support of strategic business initiatives, and a lower environmental impact.

SASE, SSE, ZTNA, SD-WAN: Your Journey, Your Way

Organizations are in the midst of an exciting period of transformational change. Legacy IT architectures and operational models that served enterprises over the past three decades are being re-evaluated. IT organizations are now driven by the need for speed, agility, and supporting the business in a fiercely competitive environment.

What kind of transformation is needed to support the modern business? The short answer is “cloudification.” Migration of applications to the cloud has been going on for a decade, offloading complex datacenter operations away from IT, and in that way increasing business resiliency and agility. However, the migration of other pillars of IT infrastructure, such as networking and security, to the cloud is a newer trend.

Transforming Networking and Security for the Modern Enterprise

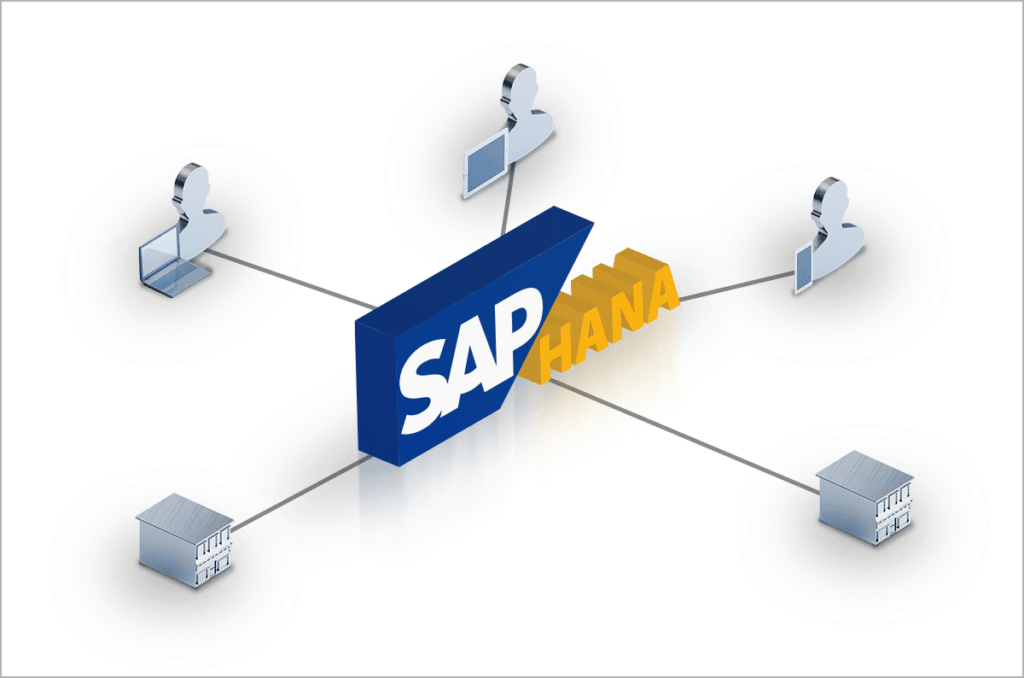

In 2019, Gartner outlined a new architecture, the Secure Access Service Edge (SASE), as the blueprint for converging a wide range of networking and security functions into a global cloud service. Key components include SD-WAN, Firewall as a Service (FWaaS), Secure Web Gateway (SWG), Cloud Access Security Broker (CASB), Data Loss Prevention (DLP), and Zero Trust Network Access (ZTNA). Two years later, Gartner created a related framework focused exclusively on the security pillar of SASE, the Security Service Edge (SSE).

By moving to a converged cloud design, SASE and its major components of SD-WAN and SSE aim to eliminate the pile of point solutions, management consoles, and loose integrations that led to a rigid, costly, and complex infrastructure. This transformation addresses the root causes of IT's inability to move at the speed of business – budgetary constraints, resource limitations, and insufficient technical skills.

The Journey to a Secure Network for the Modern Enterprise

As customers started to look at the transformational power of SASE, many saw a long journey to move from their current set of appliances, services, and point solutions to a converged SASE platform. IT knows too well the challenges of migrating from proprietary applications in private datacenters to public cloud applications and cloud datacenters, a journey that is still ongoing in many enterprises today.

How should enterprise IT leaders proceed in their journey to transform networking and security? There are two dimensions to consider: the use cases and the IT constraints.

Driving Transformation through Key Use Cases

There are several key use cases to consider as the entry point to the networking and security transformation journey. Taking a platform approach to solving these immediate challenges will make addressing future challenges much easier and more cost-effective as the enterprise proceeds towards a full infrastructure transformation.

Work from Anywhere (ZTNA)

During COVID, the need for secure remote access (ZTNA) became a critical IT capability. Enterprises must be ready to provide the entire workforce, not just the road warriors, with optimized and secure access to applications, on-premises and in the cloud. Deploying a ZTNA solution that is part of the SSE pillar of a single-vendor SASE platform overcomes the scalability and security limitations of appliance-based VPN solutions. ZTNA represents a “quick win,” eliminating a legacy point solution and establishing a broad platform for continued transformation.

Cloud access control and sensitive data protection (CASB/DLP)

The adoption of public cloud applications enables users to get work done faster. However, while the cloud may only be a click away, unsanctioned applications increase business risk through security breaches, compliance violations, and data loss. Deploying the CASB and DLP capabilities in the SSE portion of a SASE platform addresses the need to control access to the cloud and protect sensitive data.

Firewall elimination (FWaaS)

One of the biggest challenges in managing an enterprise security footprint is the need to patch, upgrade, size, and retire discrete appliances. With Firewall as a Service (FWaaS), enterprises relieve themselves of this burden, migrating the WAN security and routing of firewall appliances to the cloud. FWaaS is not included in Gartner’s SSE definition but is a part of some SSE platforms, such as Cato SSE 360.

Migration of MPLS to Secure SD-WAN

The legacy MPLS services connecting locations are unsuitable for supporting cloud adoption and the remote workforce. By migrating locations from MPLS to SD-WAN and Internet connectivity, enterprises install a modern, agile network well suited toward business transformation. Customers may choose to preserve their existing security infrastructure, initially deploying only edge SD-WAN and global connectivity capabilities of a SASE platform, like Cato SASE Cloud. When ready, companies can migrate locations and users to the SSE capabilities of the SASE platform.

Whether the enterprise comes from networking or security, the right platform should enable a gradual journey to full transformation. Deploying SD-WAN that is a part of a single-vendor SASE platform enables future migration of the security infrastructure into the SSE pillar. Conversely, deploying one of the security use cases of ZTNA, CASB/DLP, or FWaaS that are part of a converged SSE platform enables seamless accommodation of other security use cases. And if SSE is a part of a single-vendor SASE platform, migration can be further extended into the network to address migration from MPLS or third-party SD-WAN into a full SASE deployment.

Accelerating Your Journey by Overcoming Enterprise IT Constraints

The duration and structure of your journey is impacted by enterprise constraints. Below are some examples and best practices we learned from our customers on dealing with them.

Retiring existing solutions

The IT technology stack includes existing investments in SD-WAN, security appliances, and security services that have different contractual terms and subscription durations. Some customers want to let current contracts run their course before evaluating a move to converge existing point products into a SASE or SSE platform. Other customers work with vendors to shorten the migration period with buyout programs.

Working across organizational silos

A SASE project is cross-functional, involving the networking and security teams. Depending on organizational structure, the teams may be empowered to make standalone decisions, complicating a collaborative decision. We have seen strong IT leadership guide teams to evaluate a full transformation as an opportunity to maximize value for the business, while preserving role-based responsibility for their respective domains.

If bringing the teams together isn’t possible in the short term, a phased approach to SASE is appropriate. When SD-WAN or SSE decisions are taken independently, the teams should assess providers that can deliver a single-platform SASE even if the requirements are limited to either the networking or the security domain.

The Way Forward: Your Transformation Journey, Done Your Way

As the provider of the world’s first and most mature single-vendor SASE platform, powered by Cato SSE 360 and Cato’s Edge SD-WAN, we empower you to choose how to approach your transformation journey. You can start with either network transformation (SD-WAN) or security transformation (SSE 360) and then proceed to complete the transformation by seamlessly expanding the deployment to a full SASE on the very same platform. Obviously, the deeper the convergence, the larger the business value and impact it will create.

To learn more, visit the following links: Cato SASE, Cato SSE 360, Cato Edge SD-WAN, and Cato ZTNA.

The Sound of the Trombone

I love trombones… in marching bands. Some trombones, however, generate a totally different sound: sighs of angst across networking teams around the world.

Why “The Trombone Effect” Is So Detrimental to IT Teams and End Users

The “Trombone Effect” occurs in a network architecture that forces a distributed organization to use a single secure exit point to the Internet. Simply put, network traffic from remote locations and mobile users is backhauled to the corporate datacenter, where it exits to the Internet through the corporate security appliance stack. Network responses then flow back through the same stack and travel from the datacenter to the remote user.

This twisted path, resembling the bent pipes of a trombone, has a negative impact on latency and therefore on the user experience. Why does this compromise exist? If you are in a remote office, your organization may not be able to afford a stack of security appliances (firewall, secure web gateway or SWG, etc.) in your office. Affordability is not just a matter of money. Distributed appliances have policies that need to be managed and if the appliance fails or requires maintenance – someone has to take care of it at that remote location. Mobile users are left unprotected because they are not “behind” the corporate network security stack.
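To make the latency cost concrete, here is a minimal sketch that compares a backhauled path with a direct local exit. All latency figures are hypothetical, chosen only for illustration, and the `Path` class is our own helper rather than part of any product.

```python
from dataclasses import dataclass


@dataclass
class Path:
    """One-way latency legs (in milliseconds) that a request traverses."""
    name: str
    legs_ms: list[float]

    def round_trip_ms(self) -> float:
        # The response retraces the same legs, so the round trip is
        # twice the one-way sum.
        return 2 * sum(self.legs_ms)


# Hypothetical numbers: the branch is 80 ms from the datacenter, and the
# Internet destination is 30 ms from the datacenter but only 20 ms from
# the branch itself.
backhauled = Path("backhaul via datacenter", legs_ms=[80.0, 30.0])
direct = Path("direct local exit", legs_ms=[20.0])

penalty_ms = backhauled.round_trip_ms() - direct.round_trip_ms()
print(f"{backhauled.name}: {backhauled.round_trip_ms():.0f} ms")
print(f"{direct.name}: {direct.round_trip_ms():.0f} ms")
print(f"trombone penalty: {penalty_ms:.0f} ms per round trip")
```

The sketch ignores processing time inside the security stack, which would only widen the gap. The point is that every request pays for the detour twice: once on the way out and once on the way back.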

[boxlink link="https://www.catonetworks.com/resources/cato-sse-360-finally-sse-with-total-visibility-and-control/?utm_source=blog&utm_medium=top_cta&utm_campaign=cato_sse_360"] Cato SSE 360: Finally, SSE with Total Visibility and Control | Whitepaper [/boxlink]

Do Regional Hubs Mitigate the Impact of the “Trombone Effect”?

The most recent answer to the Trombone Effect is the use of “regional hubs”. These “mini” data centers host the security stack and shorten the distance between remote locations and security exit points to the internet. This approach reduces the end user performance impact, by backhauling to the nearest hub. However, the fundamental issue of managing multiple instances of the security stack remains as well as the need to set up distributed datacenters and address performance and availability requirements.

Solving the “Trombone Effect” with Cato SSE 360

Cato Networks solves the “Trombone Effect” with Cato’s Security Service Edge 360 (SSE 360), which ensures that security is available everywhere that users, applications, and data reside. Rather than making security available in just a few places, threat prevention and data protection are uniformly enforced via our private backbone spanning 75+ PoPs supporting customers in 150+ countries. Because the PoPs reside within 25 ms of all users and locations, companies don’t need to set up regional hubs to secure the traffic, alleviating the cost, complexity, and responsibility for capacity planning and management, while ensuring optimal security posture without compromising the user experience.

Next Steps: Get Clear on Cato SSE 360

If you are a victim of the “Trombone Effect,” Cato Networks can solve it with SSE 360. Visit our Cato SSE 360 product page to learn about our architecture, capabilities, benefits, and use cases, and receive a thorough overview of our service offering.

Lipstick on a Pig: When a Single-Pane-of-Glass Hides a Bad SASE Architecture

The Secure Access Service Edge (SASE) is a unique innovation. It doesn’t focus on new cutting-edge features such as addressing emerging threats or improving application performance. Rather, it focuses on making networking and security infrastructure easier to deploy, maintain, manage, and adapt to changing business and technical requirements. This new paradigm is threatening legacy point solution incumbents. It portrays the world they created for their customers as costly and complex, pressuring customer budgets, skills, and people.

Gartner tackled this market trend in their research note, “Predicts 2022: Consolidated Security Platforms are the Future.” Writes Gartner, “The requirement to address changing needs and new attacks prompts SRM (security and risk management) leaders to introduce new tools, leaving enterprises with a complex, fragmented environment with many stand-alone products and high operational costs.” In fact, customers want to break the trend of increasing operational complexity. Writes Gartner, “SRM leaders tell Gartner that they want to increase their efficiency by consolidating point products into broader, integrated security platforms that operate as a service.”

This is the fundamental promise of SASE. However, SASE is extremely difficult for vendors that start from a pile of point solutions built for on-premises deployment. What such vendors need to do is re-architect these point solutions into a single, converged platform delivered as a cloud service. What they can afford to do is to hide the pile behind a single pane of glass. Writes Gartner: “Simply consolidating existing approaches cannot address the challenges at hand. Convergence of security systems must produce efficiencies that are greater than the sum of their individual components.”

[boxlink link="https://www.catonetworks.com/resources/5-questions-to-ask-your-sase-provider/?utm_source=blog&utm_medium=top_cta&utm_campaign=5_questions_for_sase_provider"] 5 Questions to Ask Your SASE Provider | eBook [/boxlink]

How can you achieve efficiency that is greater than the sum of the SASE parts? The answer is: core capabilities should be built once and be leveraged to address multiple functional requirements.

For example, traffic processing. Traffic processing engines are at the core of many networking and security products including routers, SD-WAN devices, next generation firewalls, secure web gateways, IPS, CASB/DLP, and ZTNA products. Each such engine uses a separate rule engine, policies, and context attributes to achieve its desired outcomes. Their deployment varies based on the subset of traffic they need to inspect and the source of that traffic including endpoints, locations, networks, and applications.

A true SASE architecture is “single pass.” It means that the same traffic processing engine can address multiple technical and business requirements (threat prevention, data protection, network acceleration). To do that, it must be able to extract the relevant context needed to enforce the applicable policies across all these domains. It needs a rule engine that is expandable to create rich rulesets that use context attributes to express elaborate business policies. And it needs to feed a common repository of analytics and events that is accessible via a single management console.
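The single-pass idea can be sketched in a few lines. This is a toy illustration under our own assumptions, not a description of any vendor’s engine: the `Rule` and `SinglePassEngine` names, the context attributes, and the sample rules are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

Context = dict[str, str]


@dataclass
class Rule:
    domain: str                         # e.g. "threat prevention", "data protection"
    name: str
    matches: Callable[[Context], bool]  # predicate over the shared context
    action: str                         # "block" or "allow" in this sketch


@dataclass
class SinglePassEngine:
    rules: list[Rule]
    # Stand-in for a common repository of analytics and events.
    events: list[dict] = field(default_factory=list)

    def extract_context(self, flow: dict) -> Context:
        # Context is extracted once per flow and shared by every domain's rules.
        return {
            "user": flow.get("user", "unknown"),
            "app": flow.get("app", "unknown"),
            "category": flow.get("category", "unknown"),
        }

    def process(self, flow: dict) -> str:
        ctx = self.extract_context(flow)
        verdict = "allow"
        for rule in self.rules:
            if rule.matches(ctx):
                # Every matching rule, from any domain, logs to the same store.
                self.events.append({"domain": rule.domain, "rule": rule.name, **ctx})
                if rule.action == "block":
                    verdict = "block"
        return verdict


engine = SinglePassEngine(rules=[
    Rule("threat prevention", "block-malware-sites",
         lambda c: c["category"] == "malware", "block"),
    Rule("data protection", "log-file-sharing",
         lambda c: c["app"] == "file-sharing", "allow"),
])
verdict = engine.process({"user": "alice", "app": "file-sharing", "category": "malware"})
```

One flow is parsed once, evaluated against rules from two domains, and both matches land in one event list. In a stack of separate products, each step would repeat its own parsing, policy lookup, and logging.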

Simply put, the underlying architecture drives the benefits of SASE bottom-up -- not a pretty UI managing a convoluted architecture top-down. If you have an aggregation of separate point products everything becomes more complex -- deployment, maintenance, integration, resiliency, and scaling -- because each product brings its unique set of requirements, processes, and skills to an already very busy IT organization.

This is why Cato is the world’s first and most mature SASE platform. It isn’t just because we cover the key functional requirements of SD-WAN, Secure Web Gateway (SWG), Firewall-as-a-Service (FWaaS), Cloud Access Security Broker (CASB), and Zero Trust Network Access (ZTNA). Rather, it is because we built the only true SASE architecture to deliver these capabilities as a single global cloud service with simplicity, automation, scalability, and resiliency that truly enables IT to support the business with whatever comes next.

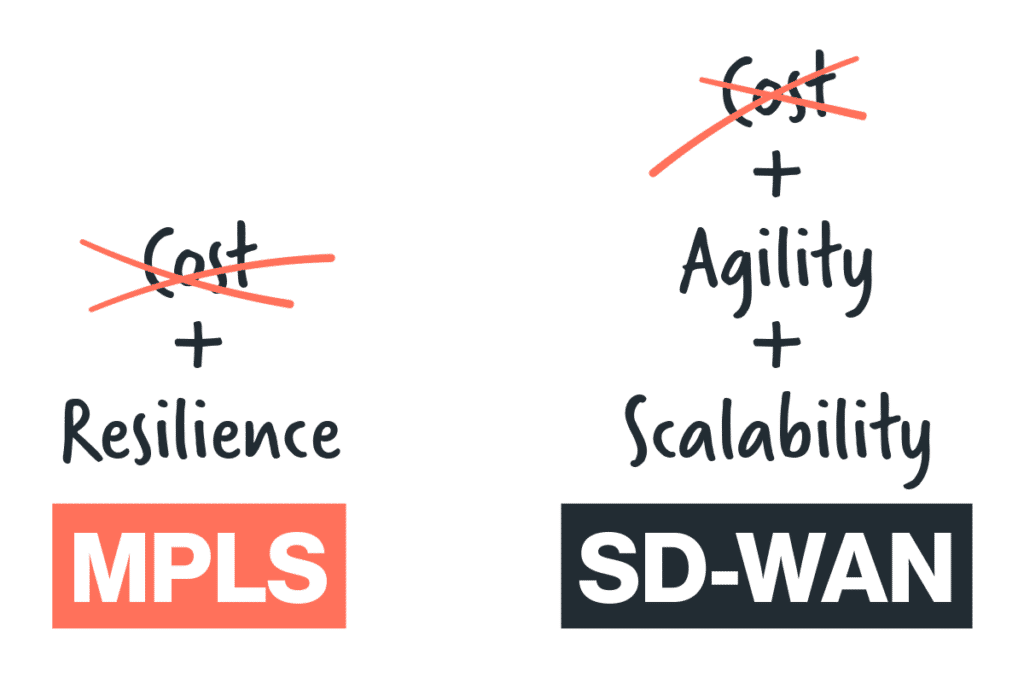

Does WAN transformation make sense when MPLS is cheap?

WAN transformation with SD-WAN and SASE is a strategic project for many organizations. One of the common drivers for this project is cost savings, specifically the reduction of MPLS connectivity costs. But what happens when the cost of MPLS is low? This happens in many developing nations, where the cost of high-quality internet is similar to the cost of MPLS, so migration from MPLS to an Internet-based last mile doesn’t create significant cost savings.

Should these customers stay with MPLS? While every organization is different, MPLS generally imposes architectural limitations on enterprise WANs which could impact other strategic initiatives. These include cloud migration of legacy applications, the increased use of SaaS applications, remote access and work from home at scale, and aligning new capacity, availability, and quality requirements with available budgets. In short, moving away from MPLS prepares the business for the radical changes in the way applications are deployed and how users access them.

Legacy MPLS WAN Architecture: Plain Old Hub and Spokes

MPLS WAN was designed decades ago to connect branch locations to a physical datacenter as a telco-managed network. This is a hard-wired architecture, that assumes most (or all) traffic needs to reach the physical datacenter where business applications reside. Internet traffic, which was a negligible part of the overall enterprise traffic, would backhaul into the datacenter and securely exit through a centralized firewall to the internet.

[boxlink link="https://www.catonetworks.com/resources/what-telcos-wont-tell-you-about-mpls?utm_source=blog&utm_medium=top_cta&utm_campaign=windstream_partnership_news"] What Others Won’t Tell You About MPLS | EBOOK [/boxlink]

Cloud migration shifts the Hub

Legacy MPLS design is becoming irrelevant for most enterprises. The hub and spokes are changing. Take Office 365, for example. This SaaS application has dramatically shifted the traffic from on-premises Exchange and SharePoint in the physical datacenter, and offline copies of Office documents on users’ machines, to a cloud application. The physical datacenter is eliminated as a primary provider of messaging and content, diverting all traffic to the Office 365 cloud platform, and continuously creating real-time interaction between users’ endpoints and content stores in the cloud.

If you look at the underlying infrastructure, the datacenter firewalls and datacenter internet links carry the entire organization's Office 365 traffic, becoming a critical bottleneck and a regional single point of failure for the business.

Work from home shifts the Spokes

Imagine now that we suddenly moved to a hybrid work-from-home model. The MPLS links are now idle in the deserted branches, and all datacenter inbound and outbound traffic is coming through the same firewalls and Internet links, which is likely to create scalability, performance, and capacity challenges. In this example, centralizing all remote access to a single location, or a few locations globally, isn’t aligned with the need to provide secure and scalable access to the entire organization in the office and at home.

Internet links offer better alignment with business requirements than MPLS

While MPLS and high-quality direct internet access prices are getting closer, MPLS offers limited choice in aligning customer capacity, quality, and availability requirements with underlay budget.

Let’s look at availability first. While MPLS comes with a contractually committed time to fix, even the most dedicated telco can’t always fix infrastructure damage in a couple of hours over the weekend. It may make sense to use a wired line and a wireless connection managed by an edge SD-WAN device to create physical infrastructure redundancy.
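A quick back-of-the-envelope calculation shows why redundancy matters. The sketch below assumes the two links fail independently, which is optimistic if they share a power source or conduit, and the availability figures are hypothetical rather than measured.

```python
HOURS_PER_YEAR = 24 * 365


def combined_availability(*availabilities: float) -> float:
    """Availability of parallel links, assuming their failures are independent."""
    all_down = 1.0
    for a in availabilities:
        all_down *= (1.0 - a)  # probability that this link is down as well
    return 1.0 - all_down


def downtime_hours(availability: float) -> float:
    """Expected hours of downtime per year at a given availability."""
    return HOURS_PER_YEAR * (1.0 - availability)


# Hypothetical figures: a 99.5% wired line and a 99.0% wireless link.
wired, wireless = 0.995, 0.990
both = combined_availability(wired, wireless)

print(f"wired only: {downtime_hours(wired):.1f} h/year of expected downtime")
print(f"wired + wireless: {downtime_hours(both):.2f} h/year of expected downtime")
```

Under these assumptions, the site is down only when both links are down at the same time, so two modest links can outperform a single premium one.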

Capacity and quality pose a challenge as well. Traffic mix is evolving in many locations. For example, a retail store may want to run video traffic for display boards, which will require much larger pipes. Service levels for those video streams, however, differ from those of Point-of-Sale machines. It could make sense to run the mission-critical traffic on MPLS or high-quality internet links and the best-effort video traffic on low-cost broadband links, all managed by edge SD-WAN.
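The retail example boils down to a steering policy: match each traffic class to the cheapest link that meets its service level, and fail over when a link goes down. Here is a minimal sketch of that logic. The `Link` type, the `REQUIRES_PREMIUM` table, and the traffic class names are hypothetical and do not reflect any SD-WAN product’s configuration model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    name: str
    premium: bool  # True for MPLS or high-quality internet
    up: bool = True


# Hypothetical policy: which traffic classes require a premium link.
REQUIRES_PREMIUM = {"point-of-sale": True, "video-signage": False}


def select_link(traffic_class: str, links: list[Link]) -> Link:
    """Pick a link for a traffic class, falling back to any live link."""
    live = [link for link in links if link.up]
    if not live:
        raise RuntimeError("no live links available")
    wants_premium = REQUIRES_PREMIUM.get(traffic_class, False)
    preferred = [link for link in live if link.premium == wants_premium]
    # Fall back to whatever is still up if the preferred tier is unavailable.
    return (preferred or live)[0]


links = [Link("mpls", premium=True), Link("broadband", premium=False)]
pos_link = select_link("point-of-sale", links)
video_link = select_link("video-signage", links)

# If the MPLS circuit fails, Point-of-Sale traffic fails over to broadband.
degraded = [Link("mpls", premium=True, up=False), Link("broadband", premium=False)]
failover_link = select_link("point-of-sale", degraded)
```

A real edge device would also weigh measured loss, latency, and jitter per link, but the principle is the same: policy, not wiring, decides which traffic takes which path.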

Furthermore, if the video streams reside in the cloud, running them over MPLS will concentrate massive traffic at the datacenter firewall and Internet link chokepoint. It would make more sense to go with direct internet access connectivity at the branch, connect directly to the cloud application and stream the traffic to the branch. This requires adding a cloud-based security layer that is built to support distributed enterprises.

The Way Forward: MPLS is Destined to be replaced by SASE

Even if you don’t see immediate cost savings, shifting your thinking from MPLS-based network design to an internet- and cloud-first mindset is a necessity. Beyond the underlying network access, a SASE platform that combines SD-WAN, cloud-based security, and zero-trust network access will prepare your organization for the inevitable shift in how users’ access to applications is delivered by IT in a way that is optimized, secure, scalable, and agile.

At Cato, we refer to it as making your organization Ready for Whatever’s Next.

Windstream Enterprise partners with Cato Networks to Deliver Cloud-native SASE to organizations in North America

We are proud and excited to announce our partnership with Windstream Enterprise (WE), a leading Managed Service Provider (MSP) delivering voice and communication services across North America. WE will offer Cato’s proven and mature SASE platform to enterprises of all sizes.

Cato offers WE a unique business and technical competitive advantage. By leveraging Cato’s SASE platform, WE can rapidly deploy a wide range of networking and security capabilities across locations, users, and applications to enable customers’ digital transformation journeys. Unlike SASE solutions composed from point products, Cato’s converged platform enables WE to get to market faster with a feature-rich SASE solution and meet unprecedented customer demand.

Agility and velocity are critical for both partners and customers today. Businesses expand geographically, grow through M&A, instantly adapt to new ways of work, and must protect themselves against the evolving threat landscape. These ever-changing requirements call for dynamic, scalable, resilient, and ubiquitous network and security infrastructure that can be ready for whatever comes next.

[boxlink link="https://www.catonetworks.com/news/windstream-enterprise-delivers-sase-solution-with-cato-networks/?utm_source=blog&utm_medium=top_cta&utm_campaign=windstream_partnership_news"] Windstream Enterprise Delivers North America’s First Comprehensive Managed SASE Solution with Cato Networks | News [/boxlink]

This is the promise of SASE that Cato Networks has been perfecting for the past seven years. There is no other SASE offering in the market that can deliver on that promise with the same simplicity, velocity, and agility as Cato. Here are some of the benefits that WE and our mutual customers will experience with Cato SASE:

Rapidly evolving networking and security capabilities: Cato’s cloud-native software stack includes SD-WAN, Firewall as a Service (FWaaS), Secure Web Gateway (SWG), Advanced Threat Prevention with IPS and Next-Gen Anti-Malware, Cloud Access Security Broker (CASB) and Zero Trust Network Access (ZTNA). Cato experts ensure these capabilities rapidly evolve and adapt to new business requirements and security threats without any involvement from our partners and customers.

Instant-on for locations and users: WE can connect enterprise customers’ locations and users to Cato quickly through zero-touch provisioning and let the Cato SASE Cloud handle the rest (route optimization, quality of service, traffic acceleration, and security inspection).

Elastic capacity, available anywhere: Cato SASE Cloud can handle huge traffic flows of up to 3 Gbps per location in North America and globally through a dense footprint of Points-of-Presence (PoPs). No capacity planning is needed.

Fully automated self-healing: Cato’s cloud-native SASE is architected with automated intelligent resiliency from the customer edge to the cloud service PoPs. High availability by design ensures service continuity without any human intervention. No need for complex HA planning and orchestration.

True single pane of glass: Since Cato is a fully converged platform, it was built with a single management application to manage all configuration, reporting, analytics, and troubleshooting across all functions. Additionally, customers gain access to WE Connect Portal to enable easy configuration, analysis, and automation of their fully cloud-native SASE framework.

Through our partnership, powerful SASE managed services become easier and more efficient to deliver. Cato and WE are ready to usher customers into a new era where advanced managed services meet a cloud-native software platform to create a customer experience and deliver customer value like never before.

New Gartner Report Explores The Portfolio or Platform Question for SASE Solutions

Understanding SASE is tricky because it has no “new cool feature.” Rather, SASE is an architectural shift that fundamentally changes how common networking and security capabilities are delivered to users, locations, and applications globally. It is, primarily, a promise for a simple, agile, and holistic way of delivering secure and optimized access to everyone, everywhere, and on any device.

When Gartner introduced SASE in the 2019 report, The Future of Network Security is in the Cloud, the analyst firm highlighted convergence of network and network security services as the main architectural attribute of SASE. According to Gartner, “This market converges network (for example, software-defined WAN [SD-WAN]) and network security services (such as SWG, CASB and firewall as a service [FWaaS]). We refer to it as the secure access service edge and it is primarily delivered as a cloud-based service.”

Cobbling together multiple products wasn’t a converged approach from either a technology or a management perspective. Many vendors got the message and started to create their single-vendor solutions. Some developed missing components, such as adding SD-WAN capability to a firewall appliance. Others acquired pieces such as SD-WAN, CASB, or Remote Browser Isolation (RBI) to build on to existing solutions. According to the Gartner® Market Opportunity Map: Secure Access Service Edge, Worldwide¹ report, by 2023, no less than 10 vendors will offer a one-stop-shop SASE solution.

Cato is a big proponent of “convergence” as a key requirement for fulfilling the SASE promise. The direction of many SASE vendors is towards a “one-stop shop.” Does “convergence” equal “one-stop shop,” and should you care?

[boxlink link="https://www.catonetworks.com/resources/what-to-expect-when-youre-expectingsase/?utm_source=blog&utm_medium=top_cta&utm_campaign=expecting_sase"] The Total Economic Impact™ of Cato's SASE Cloud | Read Report [/boxlink]

SASE: Platform (“convergence”) does not mean Portfolio (owned by a “one stop shop”)

That question was addressed in a recent Gartner research paper titled "Predicts 2022: Consolidated Security Platforms Are the Future."2 There, Gartner makes a key distinction between Portfolio and Platform security companies. According to Gartner:

“Vendors are taking two clear approaches to consolidation:

Platform Approach

Leverage interdependencies and commonalities among adjacent systems

Integrating consoles for common functions

Support for organizational business objectives at least as effectively as best-of-breed

Integration and operational simplicity mean security objectives are also met.

Portfolio Approach

Leveraged set of unintegrated or lightly integrated products in a buying package

Multiple consoles with little to no integration and synergy

Legacy approach in a vendor wrapper

Will not fulfill any of the promised advantages of consolidation.

Differentiating between these approaches is key to the efficiency of the suite, and vendor marketing will always say they are a platform. As you evaluate products, you must look at how integrated the consoles are for the management and monitoring of the consolidated platform. Also, assess how security elements (such as data definitions, malware engines) and more can be reused without being redefined, or can apply across multiple areas seamlessly. Multiple consoles and multiple definitions are warnings that this is a portfolio approach that should be carefully evaluated.”

SASE Platforms Require Cloud-based Delivery

Convergence of networking and security is, however, just one step towards fulfilling the SASE promise. Cloud-based delivery is the key ingredient for achieving the operational and security benefits of SASE. According to Gartner:

“As the platforms shift to the cloud for management, analysis and even delivery, the ability to leverage the shared responsibility model for security brings enormous benefits to the consumer. However, this extends the risk surface to the vendor and requires further due diligence in third-party vendor management. The benefits include:

Lack of physical technical debt; there is no hardware to amortize before shifting vendors or technology.

The end-customer’s data center footprint is reduced or eliminated for key technologies.

Operational tasks (e.g., patching, upgrades, performance scaling and maintenance) are performed by the cloud provider. The system is maintained and monitored around the clock, and the staffing of the provider supplements that of the end customer.

Controls are placed close to the hybrid modern workforce and to the distributed modern data; the path is not forced through an arbitrary, customer-owned location for filtering.

Despite being large targets, cloud-native security vendors have the scale and focus to secure, manage, and monitor their infrastructure better than most individual organizations.”

In the report, Gartner analysts Neil MacDonald and Charlie Winckless predict that “[B]y 2025, 80% of enterprises will have adopted a strategy to unify web, cloud services and private application access from a single vendor’s SSE [security service edge] platform.” One of the key findings that led to this strategic planning assumption is:

“Single-vendor solutions provide significant operational efficiency and security efficacy, compared with best-of-breed, including reduced agent bloat, tighter integration, fewer consoles to use, and fewer locations where data must be decrypted, inspected, and recrypted.”

The report further states:

“The shift to remote work and the adoption of public cloud services was well underway already, but it has been further accelerated by COVID-19. SSE allows the organization to support anywhere, anytime workers using a cloud-centric approach for the enforcement of security policy. SSE offers immediate opportunities to reduce complexity, costs and the number of vendors.”

Cato: The SASE Platform powered by a Global Backbone

How does Cato measure up to this vision of the future? Cato was built from the ground up as a cloud-native service, built on one global backbone, to deliver one security stack, managed from a single console, and enforcing one comprehensive networking and security policy on all users, locations, and applications—and it’s all available today from this single vendor.

We welcome you to test drive the simple, agile, and holistic Cato SASE Cloud. We promise an eye-opening experience.

Learn more:

Security Service Edge (SSE): It’s SASE without the “A” (blog post)

How to Secure Remote Access (blog post)

The Future of Security: Do All Roads Lead to SASE? (webinar)

8 Ways SASE Answers Your Future IT & Security Needs (eBook)

1 Gartner, “Market Opportunity Map: Secure Access Service Edge, Worldwide ” Joe Skorupa, Nat Smith, and Even Zeng. July 16, 2021

2 Gartner, “Predicts 2022: Consolidated Security Platforms Are the Future” Charlie Winckless, Joerg Fritsch, Peter Firstbrook, Neil MacDonald, and Brian Lowans. December 1, 2021

GARTNER is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally and is used herein with permission. All rights reserved.

How to Secure Remote Access

Hundreds of millions of people worldwide were directed to work remotely in 2020 in response to pandemic lockdowns. Even as such restrictions are beginning to ease in some countries and employees are slowly returning to their offices, remote work continues to be a popular workstyle for many people.

Last June, Gartner surveyed more than 100 company leaders and learned that 82% of respondents intend to permit remote working at least some of the time as employees return to the workplace. In a similar pattern, out of 669 CEOs surveyed by PwC, 78% say that remote work is a long-term prospect. For the foreseeable future, organizations must plan how to manage a more complex, hybrid workforce as well as the technologies that enable their productivity while working remotely.

The Importance of Secure Remote Access

Allowing employees to work remotely introduces new risks and vulnerabilities to the organization. For example, people working at home or other places outside the office may use unmanaged personal devices with a suspect security posture. They may use unsecured public Internet connections that are vulnerable to eavesdropping and man-in-the-middle attacks. Even managed devices over secured connections are no guarantee of a secure session, as an attacker could use stolen credentials to impersonate a legitimate user. Therefore, secure remote access should be a crucial element of any cybersecurity strategy.

[boxlink link="https://www.catonetworks.com/resources/the-hybrid-workforce-planning-for-the-new-working-reality/?utm_source=blog&utm_medium=top_cta&utm_campaign=hybrid_workforce"] The Hybrid Workforce: Planning for the New Working Reality | Download eBook [/boxlink]

How to Secure Remote Access: Best Practices

Secure remote access requires more than simply deploying a good technology solution. It also demands a well-designed and observed company security policy and processes to prevent unauthorized access to your network and its assets. Here are the fundamental best practices for increasing the security of your remote access capabilities.

1. Formalize Company Security Policies

Every organization needs to have information security directives that are formalized in a written policy document and are visibly supported by senior management. Such a policy must be aligned with business requirements and the relevant laws and regulations the company must observe. The tenets of the policy will be codified into the operation of security technologies used by the organization.

2. Choose Secure Software

Businesses must choose enterprise-grade software that is engineered to be secure from the outset. Even small businesses should not rely on software that has been developed for a consumer market that is less sensitive to the risk of cyber-attacks.

3. Encrypt Data, Not Just the Tunnel

Most remote access solutions create an encrypted point-to-point tunnel to carry the communications payload. This is good, but not good enough. The data payload itself must also be encrypted for strong security.

4. Use Strong Passwords and Multi-Factor Authentication

Strong passwords are needed for both the security device and the user endpoints. Cyber-attacks often happen because an organization never changed the default password of a security device, or the new password was so weak as to be ineffective. Likewise, end-users often use weak passwords that are easy to crack. It’s imperative to use strong passwords and MFA from end to end in the remote access solution.
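To make the MFA half of this practice concrete, here is a minimal sketch of one widely used second factor: a time-based one-time password (TOTP, RFC 6238). It is an illustration under assumptions rather than a recommendation of a specific product: the function names `totp` and `verify`, the 30-second step, and the one-step drift window are all choices made for this example.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Return the RFC 6238 one-time password (HMAC-SHA1) for a moment in time."""
    now = time.time() if at is None else at
    counter = int(now // step)  # number of elapsed time steps since the Unix epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, as defined in RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, at: Optional[float] = None, step: int = 30) -> bool:
    """Accept the code for the current step or one step either side (clock drift)."""
    now = time.time() if at is None else at
    return any(
        hmac.compare_digest(
            totp(secret, now + drift * step, step).encode(), submitted.encode()
        )
        for drift in (-1, 0, 1)
    )
```

In a real deployment the shared secret would be provisioned per user and stored securely, and the check would sit behind rate limiting. The sketch only shows why a stolen password alone is not enough to pass authentication.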

5. Restrict Access Only to Necessary Resources

The principle of least privilege must be applied to remote access. If a person doesn’t have a legitimate business need to access a resource or asset, they should not be able to reach it.

6. Continuously Inspect Traffic for Threats

The communication tunnel of remote access can be compromised, even after someone has logged into the network. There should be a mechanism to continuously look for anomalous behavior and actual threats. Should it be determined that a threat exists, auto-remediation should kick in to isolate or terminate the connection.
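As a rough illustration of continuous inspection with auto-remediation, the sketch below watches a single session's traffic volume and terminates the session when a sample deviates sharply from that session's own recent baseline. Everything here is assumed for the example: the `SessionMonitor` class, the five-sample window, and the threshold of three standard deviations. Real products inspect far more than byte counts.

```python
from collections import deque
from statistics import mean, pstdev


class SessionMonitor:
    """Terminates a session whose traffic volume spikes far above its recent baseline."""

    def __init__(self, window: int = 5, threshold: float = 3.0):
        self.window = window
        self.threshold = threshold
        self.samples = deque(maxlen=window)  # most recent "normal" observations
        self.terminated = False

    def observe(self, bytes_sent: int) -> str:
        if self.terminated:
            return "terminated"
        if len(self.samples) == self.window:
            baseline = mean(self.samples)
            spread = pstdev(self.samples) or 1.0  # avoid dividing by zero on flat traffic
            if (bytes_sent - baseline) / spread > self.threshold:
                self.terminated = True  # auto-remediation: cut the connection
                return "terminated"
        self.samples.append(bytes_sent)
        return "allowed"
```

The monitor needs a full window of samples before it judges anything, so the first few observations are always allowed. Once a session is terminated it stays terminated, which mirrors the idea of isolating the connection rather than letting it resume.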

Additional Considerations for Secure Remote Access

Though these needs aren’t specific to security, any remote access solution should be cost-effective, easy to deploy and manage, and easy for people to use, and it should offer good performance. Users will find a workaround to any solution that is slow or hard to use.

Enterprise Solutions for Secure Remote Access

There are three primary enterprise-grade solutions that businesses use today for secure remote access: Virtual Private Network (VPN); Zero Trust Network Access (ZTNA); and Secure Access Service Edge (SASE). Let’s have a look at the pros and cons of each type of solution.

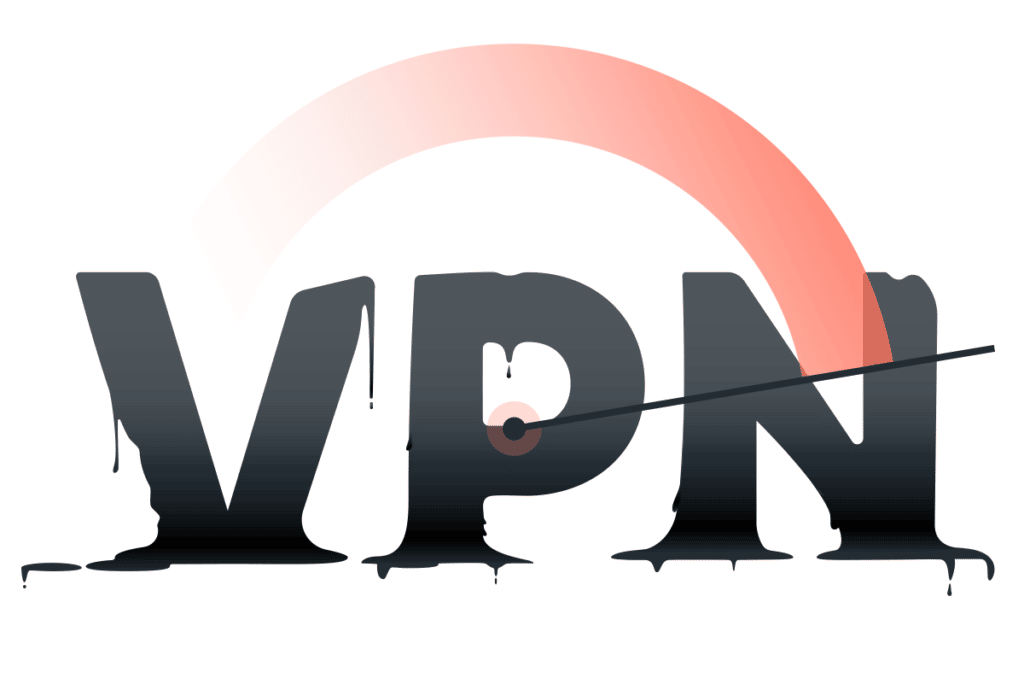

1. Virtual Private Network (VPN)

Since the mid-1990s, VPNs have been the most common and well-known form of secure remote access. However, enterprise VPNs are primarily designed to provide access for a small percentage of the workforce for short durations and not for large swaths of employees needing all-day connectivity to the network.

VPNs provide point-to-point connectivity. Each secure connection between two points requires its own VPN link for routing traffic over an existing path. For people working from home, this path is going to be the public Internet. The VPN software creates a virtual private tunnel over which the user’s traffic goes from Point A (e.g., the home office or a remote work location) to Point B (usually a terminating appliance in a corporate datacenter or in the cloud). Each terminating appliance has a finite capacity for simultaneous users; thus, companies with many remote workers may need multiple appliances. VPN visibility is limited when companies deploy multiple disparate appliances.
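The capacity point is simple arithmetic, sketched below. The figures are hypothetical and do not describe any particular appliance: the function assumes each appliance has a rated limit of simultaneous users and that operators keep it below a chosen utilization ceiling.

```python
import math


def appliances_needed(remote_users: int, rated_capacity: int, max_utilization_pct: int = 100) -> int:
    """Number of VPN terminating appliances needed for a given count of simultaneous users."""
    usable = rated_capacity * max_utilization_pct // 100  # users per appliance after headroom
    if usable <= 0:
        raise ValueError("usable capacity per appliance must be positive")
    return math.ceil(remote_users / usable)
```

For example, 5,000 simultaneous users on appliances rated for 1,500 users each would need four appliances at full load, or five if each appliance is held to 80% utilization. Each of those appliances is one more device to monitor and maintain.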

Security is still a considerable concern when VPNs are used. While the tunnel itself is encrypted, the traffic traveling within that tunnel typically is not. Nor is it inspected for malware or other threats. To maintain security, the traffic must be routed through a security stack at its terminus on the network. In addition to inefficient routing and increased network latency, this can result in having to purchase, deploy, monitor, and maintain security stacks at multiple sites to decentralize the security load. Simply put, providing security for VPN traffic is expensive and complex to manage.

Another issue with VPNs is that they provide overly broad access to the entire network without the option of controlling granular user access to specific resources. There is no scrutiny of the security posture of the connecting device, which could allow malware to enter the network. What’s more, stolen VPN credentials have been implicated in several high-profile data breaches. By using legitimate credentials and connecting through a VPN, attackers were able to infiltrate and move freely through targeted company networks.

2. Zero Trust Network Access (ZTNA)

An up-and-coming VPN alternative is Zero Trust Network Access, which is sometimes called a software-defined perimeter (SDP). ZTNA uses granular application-level access policies set to default-deny for all users and devices. A user connects to and authenticates against a Zero Trust controller, which implements the appropriate security policy and checks device attributes. Once the user and device meet the specified requirements, access is granted to specific applications and network resources based upon the user’s identity. The user’s and device’s status are continuously verified to maintain access.
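The flow described above can be sketched as a small default-deny policy check. This is a simplified model, not any vendor's implementation: the `Device` and `Rule` types, the group and application names, and the posture attributes are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Set


@dataclass(frozen=True)
class Device:
    managed: bool
    disk_encrypted: bool
    os_patched: bool


@dataclass(frozen=True)
class Rule:
    group: str                      # identity group the rule applies to
    application: str                # the single application it grants
    require_managed_device: bool = True


# Hypothetical policy: anything not listed here is denied.
POLICY = (
    Rule("finance", "erp"),
    Rule("engineering", "git"),
    Rule("contractors", "wiki", require_managed_device=False),
)


def posture_ok(device: Device, rule: Rule) -> bool:
    """Check device attributes against what the rule demands."""
    if rule.require_managed_device and not device.managed:
        return False
    return device.disk_encrypted and device.os_patched


def is_allowed(groups: Set[str], device: Device, application: str,
               policy: Iterable[Rule] = POLICY) -> bool:
    """Grant access only when an explicit rule matches identity, application, and posture."""
    for rule in policy:
        if rule.application == application and rule.group in groups and posture_ok(device, rule):
            return True
    return False  # default-deny
```

Continuous verification amounts to calling `is_allowed` again whenever the user's or device's status changes. A laptop that falls behind on patches loses access on the next check, even though the user's credentials have not changed.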

This approach enables tighter overall network security as well as micro-segmentation that can limit lateral movement in the event a breach occurs.

ZTNA is designed for today’s business. People work everywhere — not only in offices — and applications and data are increasingly moving to the cloud. Access solutions need to be able to reflect those changes. With ZTNA, application access can dynamically adjust based on user identity, location, device type, and more. What’s more, ZTNA solutions provide seamless and secure connectivity to private applications without placing users on the network or exposing apps to the internet.