Featured

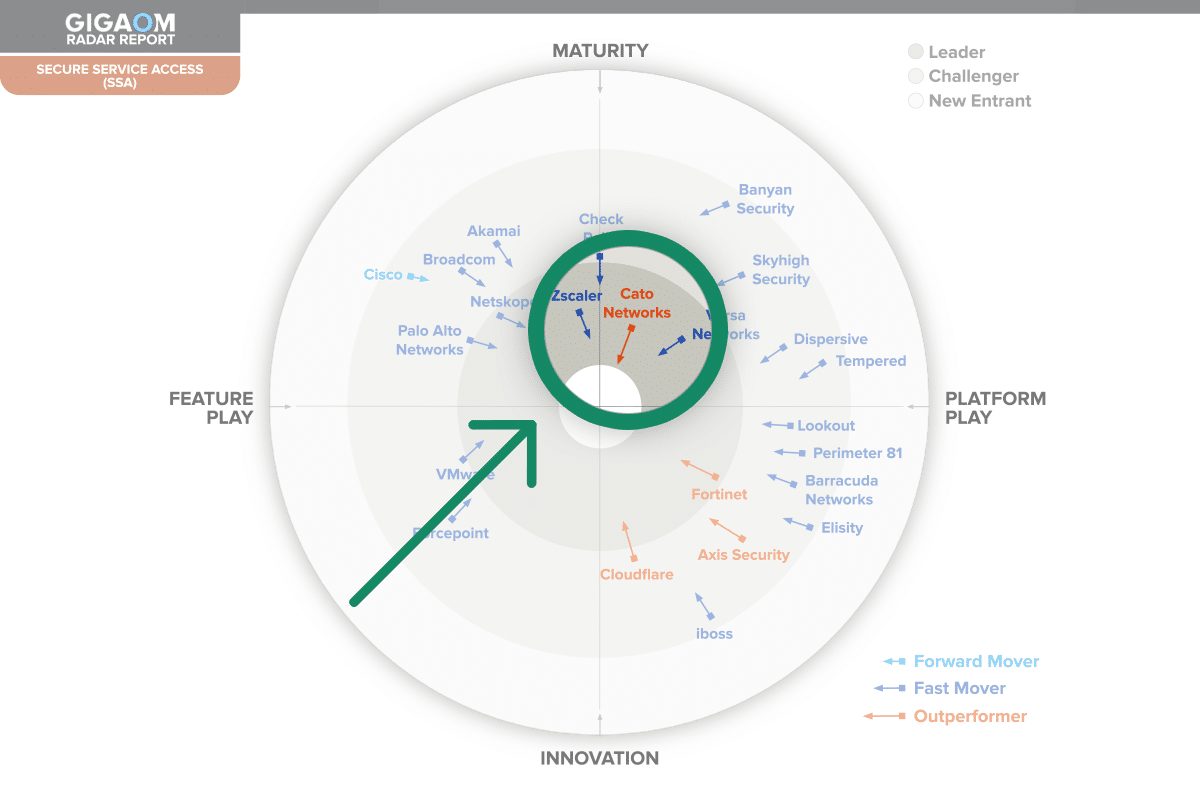

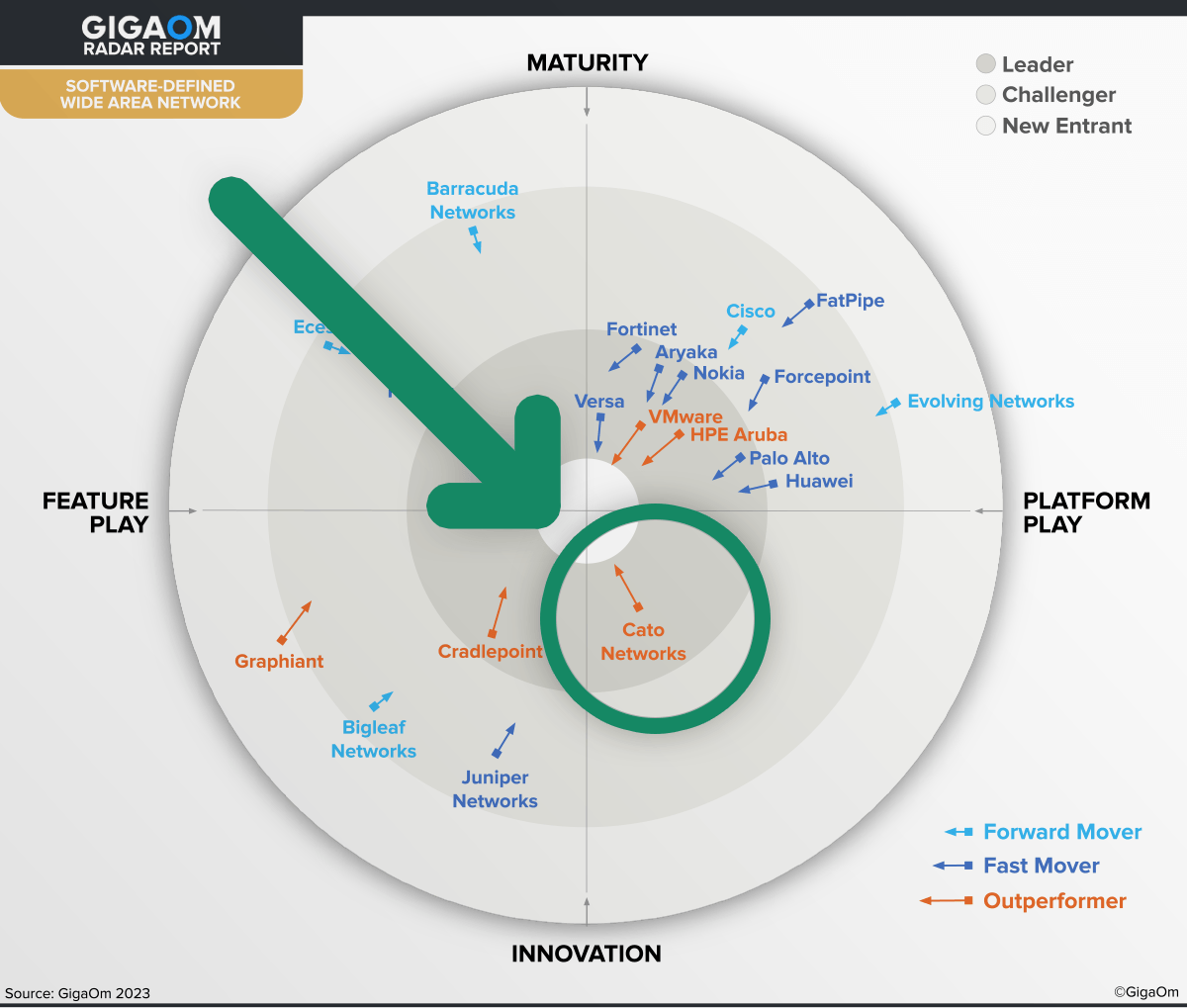

Cato Networks recognized as a Leader in the 2024 Gartner®...

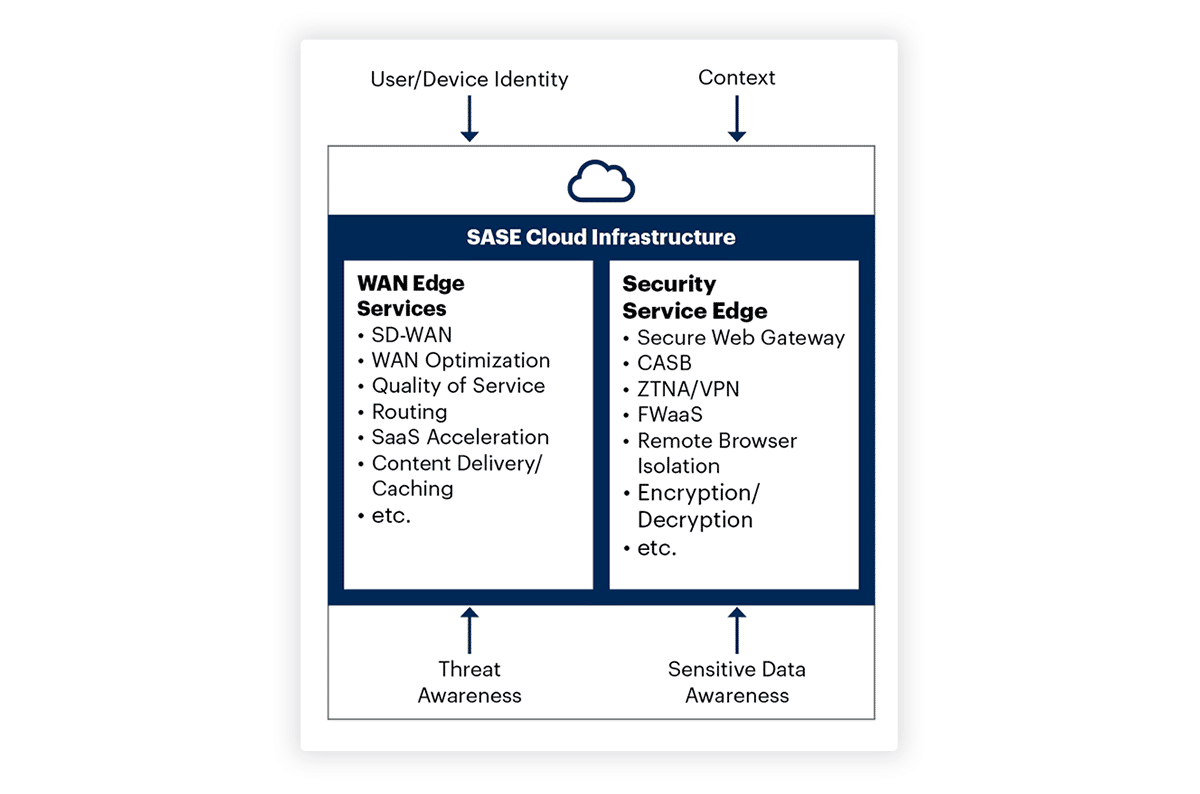

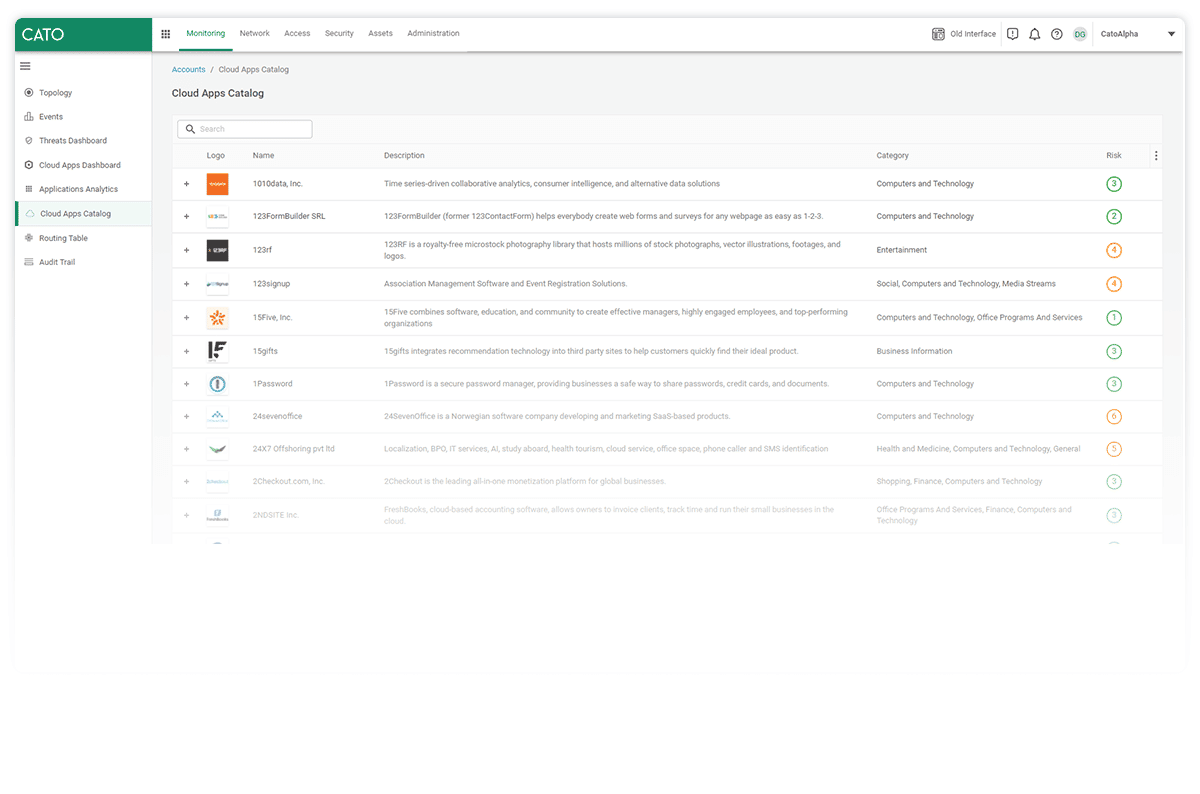

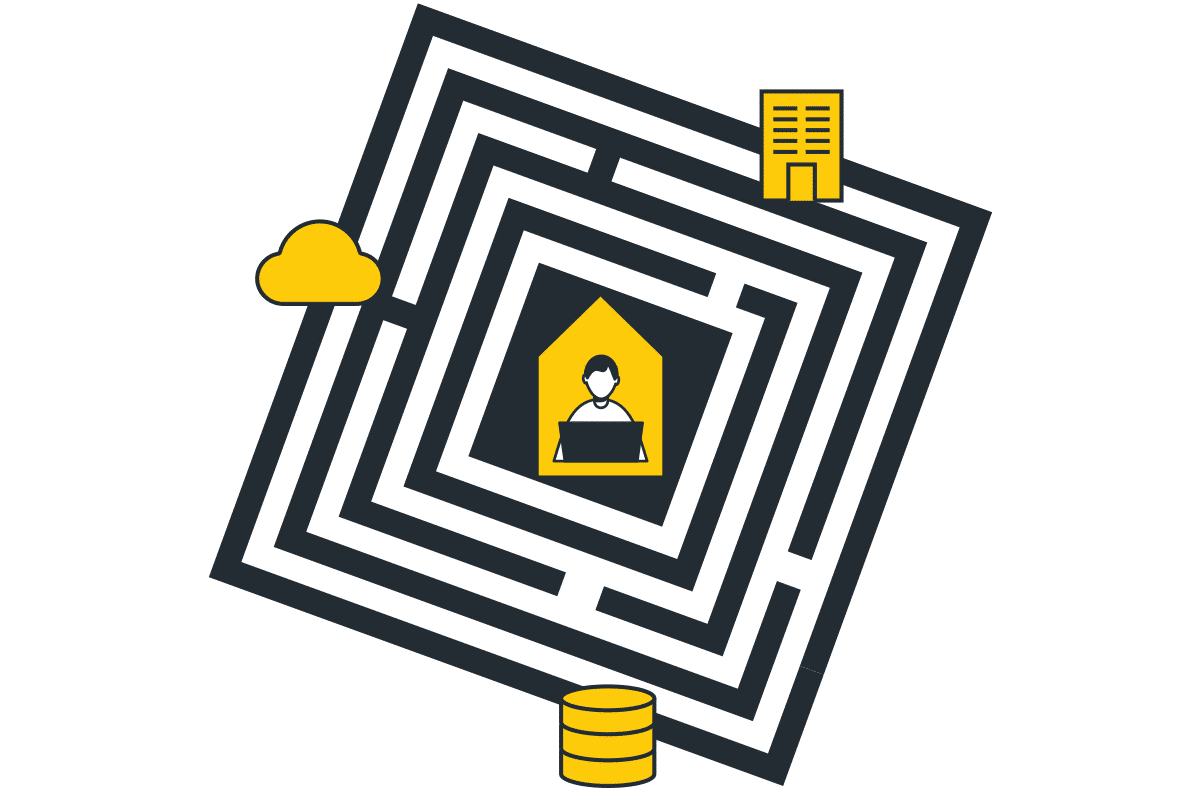

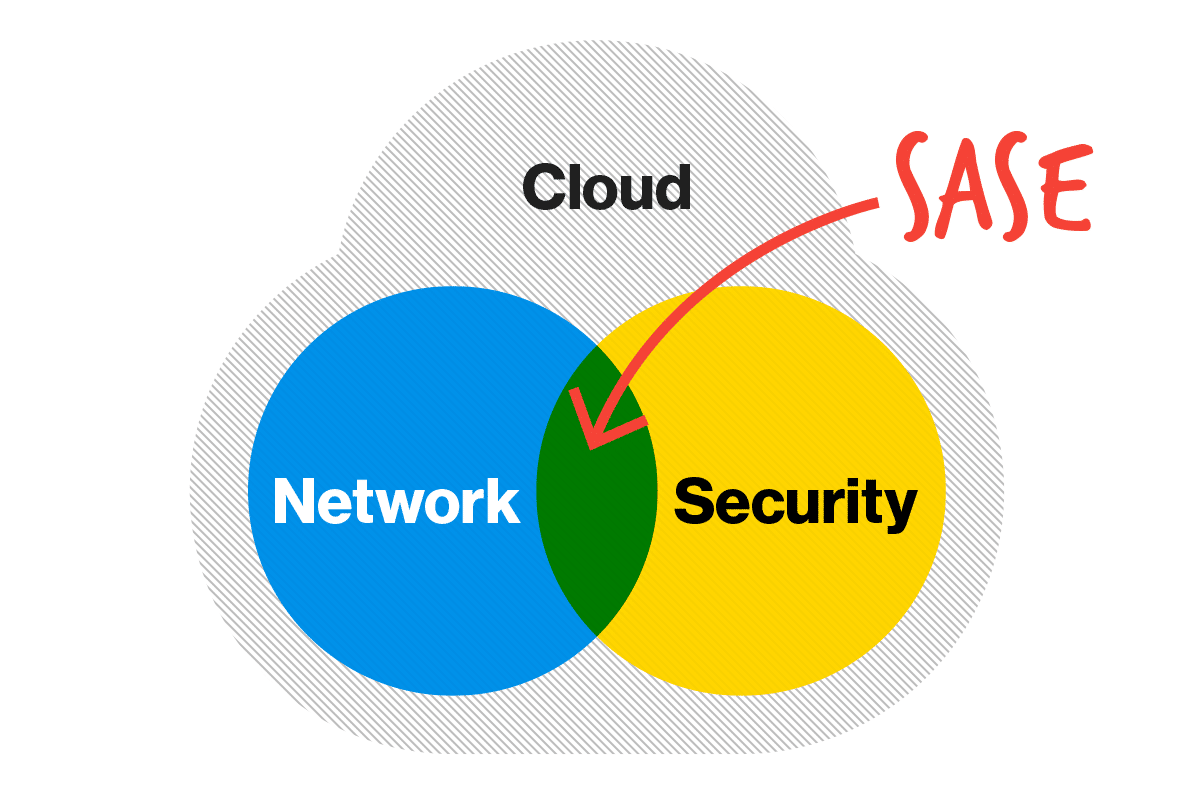

SASE is all about strategically solving business problems: the systematic removal of technology barriers standing in the way of business outcomes. It is a brand...
